The long-held assumption that useful quantum computation requires a million qubits is being challenged by research indicating that fewer may be sufficient for specific problems. A collaboration between QunaSys, 1QBit, Qolab, and HPE Quantum, detailed in the March publication “Partially Fault-Tolerant Quantum Computation for Megaquop Applications,” is revising resource estimates and opening the door to near-term advantages. This research focuses on problem classes such as materials design, molecular simulation, and catalysis, where even incremental quantum gains could have an impact. The findings suggest that waiting for full fault-tolerant quantum computing (FTQC) is no longer a neutral default, but a deliberate choice with potential consequences as the line between noisy intermediate-scale quantum (NISQ) and fully fault-tolerant computing blurs. However, the approach is not universally effective: it works best for circuits with approximately 10⁵ to 10⁶ small-angle rotation gates, and its advantages over full FTQC diminish outside that range.

Partial Fault Tolerance Lowers Qubit Thresholds for Materials Science

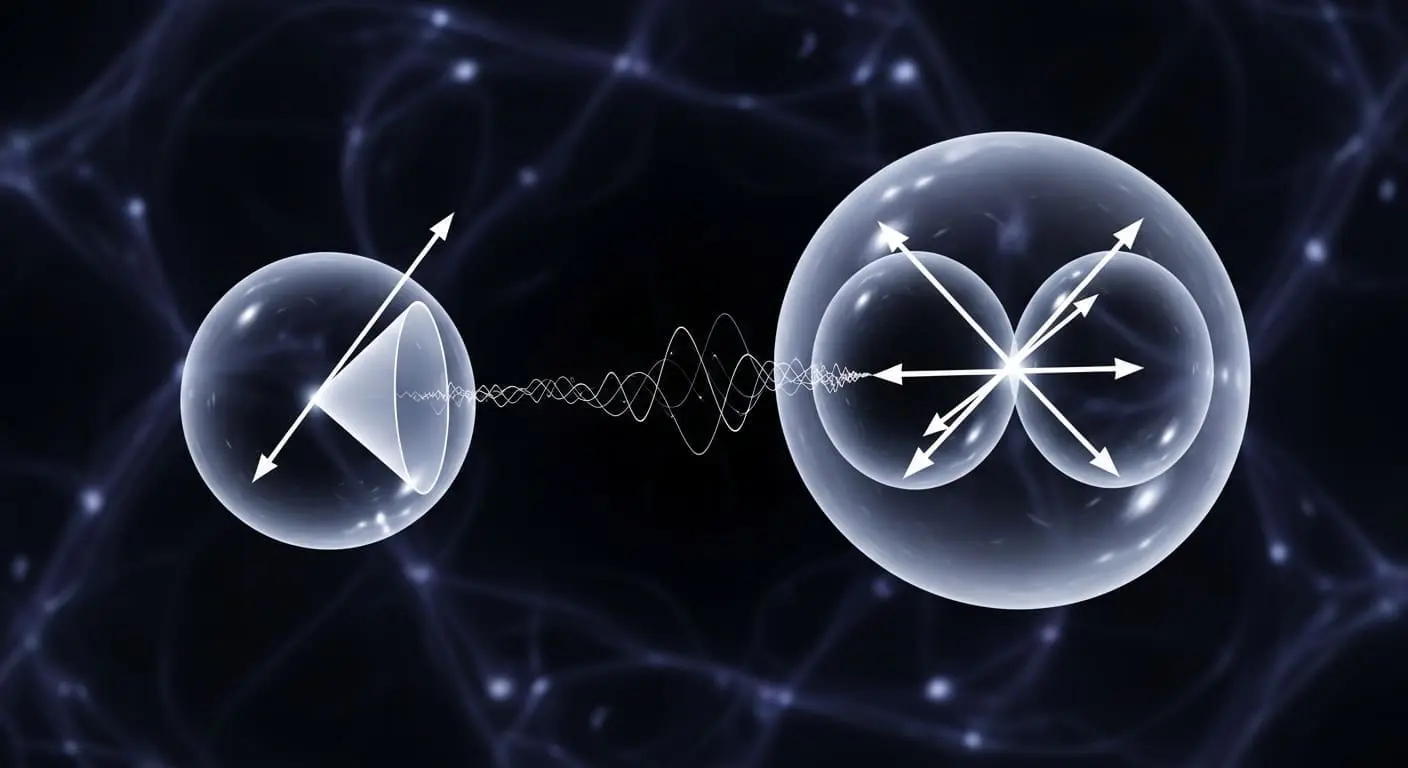

Hundreds of thousands of qubits, not millions, may soon be sufficient for achieving useful quantum computation on specific problems, challenging the long-held belief that a million qubits are necessary for impactful results. This shift in resource estimation is prompting a re-evaluation of quantum computing timelines, particularly within industries poised to benefit from even incremental advances in materials science, molecular simulation, and catalysis. The traditional view of quantum computing has positioned full fault-tolerant quantum computing (FTQC) as the ultimate goal, requiring a substantial leap in hardware to reach upwards of a million qubits. However, this new research suggests a viable pathway to achieving practical quantum advantages before that milestone is reached. The key lies in an approach that accepts some residual errors, leverages post-selection at the architecture level to mitigate them, and employs error mitigation techniques to refine the results.

This differs from full FTQC, which aims to eliminate errors entirely through comprehensive quantum error correction, a process demanding significantly more qubits. “The aim is not perfect fidelity everywhere, but sufficient accuracy for specific classes of problems,” explains the research, highlighting a pragmatic shift in focus. The Chung et al. paper specifically examines the Space-Time efficient Analog Rotation (STAR) architecture, proposed by Fujitsu Laboratories and Prof. Keisuke Fujii’s group at The University of Osaka, and evaluates its performance under realistic hardware constraints for superconducting processors. Their findings indicate that simulating the 2D Fermi-Hubbard model, a crucial problem in materials science, could be feasible with hundreds of thousands of physical qubits, a substantial reduction compared to the millions required for full FTQC. Computations on modest system sizes, under these assumptions, are projected to complete in minutes, a dramatic improvement over the thousands of years previously estimated.

This is not an isolated finding; multiple independent research groups are converging on similar estimates for specific problem domains. Toshio, Akahoshi, Fujii et al. (Physical Review X) estimate that practical quantum advantage for the 8×8 Hubbard model may be achievable with approximately 68,000 physical qubits, while Google Quantum AI (Nature) has demonstrated that quantum error correction improves exponentially with system size, supporting the assumptions underpinning partial FTQC resource estimates. This converging evidence suggests that the boundary between “not useful” and “useful” quantum computation may be closer than previously thought, at least for workloads aligned with the strengths of partial fault tolerance. The approach is most effective for circuits with roughly 10⁵ to 10⁶ small-angle rotation gates, a “Goldilocks zone” outside of which its advantages shrink.
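One way to see why the rotation-gate count matters is a toy model of accumulated residual error. The sketch below is an illustration only, not the model used by Chung et al.: it assumes a hypothetical residual error per rotation gate (`P_ROT`) and a hypothetical constant (`c`) in the commonly cited exponential scaling of error-mitigation sampling cost with the expected number of errors.

```python
import math

# Hypothetical residual error per small-angle rotation gate.
# This value is an assumption for illustration, not a figure from the paper.
P_ROT = 1e-6


def expected_errors(num_rotations: int, error_per_rotation: float) -> float:
    """Expected number of residual errors accumulated over the circuit."""
    return num_rotations * error_per_rotation


def mitigation_overhead(num_rotations: int, error_per_rotation: float, c: float = 2.0) -> float:
    """Toy sampling-overhead factor exp(c * N * p).

    The exponential dependence on N * p is the general trend for error
    mitigation; the constant c is a hypothetical placeholder.
    """
    return math.exp(c * expected_errors(num_rotations, error_per_rotation))


if __name__ == "__main__":
    for exponent in range(4, 8):
        n = 10 ** exponent
        errors = expected_errors(n, P_ROT)
        overhead = mitigation_overhead(n, P_ROT)
        print(f"N = 1e{exponent}: expected errors = {errors:.3g}, overhead factor = {overhead:.3g}")
```

Under these assumed numbers the overhead stays modest up to about 10⁶ rotations and grows rapidly beyond that, which is the qualitative shape of the upper edge of the window. The toy model says nothing about the lower edge.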

Chung et al. STAR Architecture Evaluates Realistic Superconducting Resources

Recent advancements are reshaping expectations surrounding the arrival of practical quantum computation, moving beyond the previously dominant narrative of needing a fully fault-tolerant machine with a million qubits. While the pursuit of full fault-tolerance continues, a growing body of research indicates that useful quantum computation may be achievable sooner than anticipated, potentially within current strategic planning horizons, for specific problem classes. This shift challenges the long-held assumption that quantum computing remains in an early stage, and instead positions it as a developing field with near-term potential. The March publication is a collaborative effort involving QunaSys, 1QBit, Qolab, and HPE Quantum. The research doesn’t propose abandoning the pursuit of full fault-tolerance, but rather meticulously examines how far useful quantum computation can be pushed without it.

The team’s work represents the first hardware-grounded resource estimate that moves beyond simplified noise models, establishing a quantitative baseline for a discussion that previously relied on optimistic approximations. They also provide a hardware-grounded full-FTQC baseline, estimating one to several million physical qubits depending on system size and algorithm. The effectiveness of the partial approach, however, is not universal. The research indicates it is most effective for circuits with approximately 10⁵ to 10⁶ small-angle rotation gates, a “Goldilocks zone” outside of which its advantages shrink. The authors emphasize that these favorable numbers are contingent on specific problem structures, notably the 2D Fermi-Hubbard model, and that substantial resources are still required. “The contribution is not an argument that partial FTQC is easy or close at hand,” they clarify. “It is the first hardware-grounded resource estimate that replaces the simplified, single-parameter noise models used in earlier studies.”

The STAR architecture takes a different strategy from full FTQC, accepting residual errors while using post-selection and error mitigation techniques to achieve sufficient accuracy for targeted applications. Converging evidence from independent groups, including Toshio, Akahoshi, Fujii et al., supports this direction.

Simulation of the 2D Fermi-Hubbard model, a workhorse problem in materials science, is estimated to be feasible with hundreds of thousands of physical qubits, rather than the millions required under full FTQC for the same problem (Mohseni et al.).


NISQ vs. FTQC: Shifting Quantum Computation Expectations

QunaSys, 1QBit, Qolab, and HPE Quantum are challenging conventional wisdom surrounding the development of useful quantum computers, collaborating on research published in March that suggests a shift in expectations regarding fault tolerance and qubit counts. For years, the prevailing assumption has been that truly impactful quantum computation necessitates a fully fault-tolerant machine boasting a million qubits, a milestone widely considered to be at least a decade away. This perspective has fostered a cautious approach, viewing quantum computing as a promising but currently unviable technology. The traditional narrative divides the quantum computing journey into two distinct phases: the noisy intermediate-scale quantum (NISQ) era, representing the current state of affairs with limited qubit counts and significant error rates, and full fault-tolerant quantum computing (FTQC), envisioning a future where quantum error correction effectively eliminates errors, unlocking transformative applications.

The substantial gap between these stages has led many to believe that significant progress in hardware is required before quantum computers can deliver tangible benefits. Partial fault tolerance proposes an alternative that alters the trade-offs inherent in this progression. Rather than striving for complete error elimination through full quantum error correction, partial fault tolerance accepts residual errors, employing post-selection techniques and error mitigation strategies to achieve acceptable accuracy levels. This reduces the overall physical qubit count required, potentially bringing useful quantum computation within reach of systems containing hundreds of thousands of qubits, rather than the previously assumed millions. “These favourable numbers depend on specific problem structures,” the researchers caution, highlighting the limitations of the approach. With multiple independent groups arriving at similar qubit estimates for specific problem domains, the evidence suggests a more nuanced timeline for quantum computing development, shifting the focus from a single milestone to a more granular assessment of problem-specific accessibility and underlying assumptions.
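The cost of post-selection can be sketched with elementary probability. The example below is a simplified illustration, not the STAR protocol itself: it assumes each run passes a number of independent checks, that a run is discarded if any check flags an error, and that discarded runs are simply repeated. The function names and the example values are hypothetical.

```python
def acceptance_probability(p_detect: float, num_checks: int) -> float:
    """Probability that a run passes every check.

    Assumes each check flags an error independently with probability p_detect.
    """
    return (1.0 - p_detect) ** num_checks


def expected_attempts(p_accept: float) -> float:
    """Mean number of attempts until one run is accepted (geometric distribution)."""
    if not 0.0 < p_accept <= 1.0:
        raise ValueError("p_accept must be in (0, 1]")
    return 1.0 / p_accept


if __name__ == "__main__":
    # Hypothetical values chosen only to show the trend.
    for checks in (10, 100, 1000):
        p_acc = acceptance_probability(p_detect=1e-3, num_checks=checks)
        print(f"{checks} checks: accept with p = {p_acc:.3f}, "
              f"about {expected_attempts(p_acc):.2f} attempts per accepted run")
```

The trade-off this illustrates is that post-selection exchanges qubits for time: fewer physical qubits are spent on correcting errors, but more repetitions are needed as the number of checks, or the detection probability per check, grows.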

Compilation of Trotter-Based Time Evolution for Partially Fault-Tolerant Quantum Computing Architecture.

Akahoshi, Y.

Converging Estimates Suggest Earlier Quantum Advantage for Specific Problems

The shifting landscape of quantum computing resource estimates is prompting a re-evaluation of timelines for achieving practical advantage, particularly for specialized applications. A key driver of this revised outlook is the convergence of multiple independent studies refining estimates for specific problem classes. The authors frame their work as demonstrating both the strengths and the limitations of partial FTQC, offering a hardware-grounded resource estimate that replaces earlier, simplified noise models. The resulting reduction in qubit requirements is meaningful, though the requirements remain beyond current hardware capabilities. Toshio, Akahoshi, Fujii et al. report that such approaches can target industrially relevant molecular systems, including catalysts and iron-sulfur clusters, with qubit requirements reduced by up to 80× compared to conventional FTQC estimates. Taken together, the evidence suggests a more granular approach is needed: instead of focusing solely on the arrival of a million-qubit machine, the pertinent question becomes which problem classes will become accessible sooner, and under what conditions. For business and R&D leaders, this makes modest preparation now a comparatively inexpensive investment, while being unprepared in an accelerated scenario could prove costly.

Partially Fault-tolerant Quantum Computing Architecture with Error-corrected Clifford Gates and Space-time Efficient Analog Rotations.

Akahoshi, Y.