Riverlane forecasts that a staged engineering path focused on error correction could bring utility-scale quantum computing forward by three to five years, according to its newly unveiled QEC Technology Roadmap. The company hosted a webinar on April 23 with panelists from IQM, HPE, NVIDIA, Zurich Instruments, and Infleqtion to discuss the critical path toward reliable quantum machines. The event signals a coordinated industry push on quantum error correction (QEC), a challenge Riverlane regards as paramount to achieving practical quantum computation. To get there, the roadmap outlines standardized interfaces such as QECi and a system, Deltaflow, and points to a 75 percent reduction in physical qubit overhead relative to a non-leakage-aware decoder. The industry is converging on 2026 as the pivotal year for the “MegaQuOp”, the point at which a quantum computer can execute one million error-free operations.

How can a roadmap for Quantum Error Correction speed up utility-scale quantum computing?

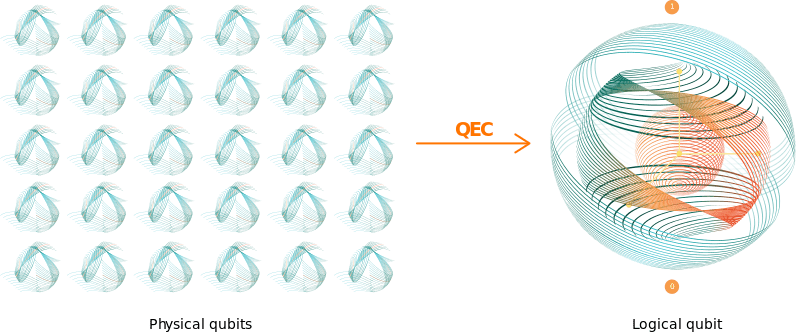

Unlike many industry roadmaps that prioritize qubit counts, Riverlane’s approach centers on quantum error correction as the key to unlocking practical quantum computation. Physical qubits are inherently noisy, and without QEC, errors quickly overwhelm calculations. Neil Gillespie, Riverlane’s VP of Applied Research, emphasized during the webinar that the company has a concrete plan to accelerate the arrival of utility-scale quantum computing. This acceleration stems from Riverlane’s development of the Local Clustering Decoder (LCD), a hardware-based QEC solution detailed in a December publication in Nature Communications. In a leakage-dominated noise model, the LCD reached one million error-free quantum operations while using 75 percent fewer physical qubits than a non-leakage-aware decoder. This reduction in hardware overhead bypasses years of traditional qubit scaling, offering a more efficient path toward functional quantum computers.

How and when will quantum computers do something useful?

Industry leaders predict a significant acceleration in the timeline for practical quantum computing. Riverlane’s approach is defined by distinct “eras,” beginning with the mastery of maintaining a long-lived logical qubit and progressing to genuine quantum computation using Clifford logic. The ultimate goal, described as the “holy grail” of the roadmap, is delivering non-Clifford logic, which is essential for algorithms capable of outperforming classical computers on complex problems. Riverlane notes that this progression marks a shift from preserving quantum states to active quantum processing. The key benchmark along the way is the “MegaQuOp”: the point at which a quantum computer can execute one million (Mega) error-free Quantum (Qu) Operations (Op). The industry is converging on 2026 as the year this milestone arrives, and with it the prospect of solving a real-world problem that is intractable on a classical machine.

Riverlane is already executing a plan that moves quantum computing out of the “lab science” phase and into reliable, scalable engineering. Each “era” in its roadmap represents a specific capability step.

What’s a quantum gate?

Industry leaders predict a significant shift in how quantum gates are approached, moving beyond simply creating qubits to actively processing information with them. Riverlane’s webinar, led by Neil Gillespie, its VP of Applied Research, highlighted the distinction between Clifford and non-Clifford gates as central to this progression. Clifford gates are fundamental to early fault-tolerant systems and can be simulated classically, while non-Clifford gates unlock true quantum advantage. Riverlane explained that Clifford gate errors can be addressed in post-processing. The transition to non-Clifford gates, termed a “Logic Leap,” presents substantial implementation challenges because it demands real-time decoding during circuit execution rather than afterwards. Riverlane illustrates the difference by comparing Clifford gates to navigating between six points on a globe, whereas non-Clifford gates give the freedom to explore anywhere.
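The globe analogy can be checked numerically for a single qubit. The sketch below is an illustration, not material from the webinar: it assumes the standard Hadamard (H), phase (S), and T gate matrices, and the helper names `bloch_vector` and `reachable_points` are hypothetical. Starting from the |0⟩ state, it collects every point on the Bloch sphere that can be reached with a given gate set.

```python
import numpy as np

# Standard single-qubit gates: H and S are Clifford gates, T is non-Clifford.
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
S = np.array([[1, 0], [0, 1j]], dtype=complex)
T = np.array([[1, 0], [0, np.exp(1j * np.pi / 4)]], dtype=complex)

# Pauli matrices, used to read off Bloch-sphere coordinates.
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def bloch_vector(state):
    """Return the (x, y, z) point on the Bloch sphere for a pure state."""
    return tuple(
        round(float(np.real(np.vdot(state, P @ state))), 6) + 0.0
        for P in (X, Y, Z)
    )


def reachable_points(gates, depth):
    """Collect Bloch points reachable from |0> using at most `depth` gates."""
    start = np.array([1, 0], dtype=complex)
    points = {bloch_vector(start)}
    frontier = [start]
    for _ in range(depth):
        next_frontier = []
        for state in frontier:
            for gate in gates:
                new_state = gate @ state
                point = bloch_vector(new_state)
                if point not in points:
                    points.add(point)
                    next_frontier.append(new_state)
        frontier = next_frontier
    return points


clifford_points = reachable_points([H, S], depth=6)
universal_points = reachable_points([H, S, T], depth=6)
print(len(clifford_points))        # 6: the poles of the x, y and z axes
print(len(universal_points) > 6)   # True: T reaches points off those axes
```

With only H and S, the state never leaves the six axis poles, however many gates are applied. Adding T immediately produces points between them, which is the extra freedom the analogy describes.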

This year will see increased focus on techniques like logical branching to enable fault-tolerant implementation of these more powerful gates. Experts regard mastery of this distinction as crucial for achieving universal quantum computation and realizing the exponential speedups offered by algorithms like Shor’s, ultimately accelerating the path to utility-scale quantum computers.

They are indispensable for manipulating qubits and performing error correction.

What is a QuOp?

Industry leaders predict a shift in how quantum computing performance is measured, moving beyond simple qubit counts to a metric called the QuOp, or Quantum Operation. This unit of computation focuses on the useful work a quantum machine actually performs, rather than its theoretical capacity. The cost of generating a QuOp varies by architecture: surface code systems require approximately D rounds of quantum error correction per QuOp, where D is the code distance, while architectures using transversal logic may need only a single QEC cycle, a potential speed advantage. Riverlane notes that the QuOp forces an honest comparison across different hardware platforms and architectures, and describes the metric as nuanced but valuable for assessing real computational power. The company believes tracking QuOp numbers provides the most accurate assessment of a machine’s capabilities, signaling a move toward active quantum processing.
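The difference between roughly D QEC rounds per QuOp and a single round is simple arithmetic, sketched below. The function name `quop_time_us` and the example values (a code distance of 15 and a 1 microsecond QEC cycle) are illustrative assumptions, not figures from Riverlane.

```python
def quop_time_us(code_distance, cycle_time_us, transversal=False):
    """Rough time for one QuOp, in microseconds.

    Assumes about `code_distance` QEC rounds per QuOp for a surface-code
    style architecture, and a single round when transversal logic is used.
    """
    rounds = 1 if transversal else code_distance
    return rounds * cycle_time_us


# Hypothetical numbers chosen only to show the scaling.
surface = quop_time_us(code_distance=15, cycle_time_us=1.0)
transversal = quop_time_us(code_distance=15, cycle_time_us=1.0, transversal=True)
print(surface, transversal)  # 15.0 1.0
```

Under these assumptions the transversal architecture produces a QuOp 15 times faster per logical operation, although real cycle times differ widely between qubit types, so the comparison only holds for hardware with similar QEC cycle durations.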

Why do we need “real time” quantum error correction?

The case for real-time error correction rests on a fundamental shift in approach: the prevailing assumption that error correction is a post-processing step is inaccurate, because useful quantum computations demand continuous error detection and correction. Riverlane describes the belief that errors can be corrected after a calculation has finished as a common misconception and stresses that they must be addressed during computation. This need for immediate action is the core principle behind Riverlane’s Deltaflow system, which is designed to act as an interface that abstracts away the intricacies of error correction and lets scientists focus on logical operations. The system identifies and resolves errors mid-operation, preventing them from accumulating. Real-time quantum error correction is not simply about preserving quantum states; it is about enabling active quantum processing, moving beyond theoretical demonstrations toward scalable and reliable machines capable of tackling complex problems.
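One way to see why decoding has to keep pace with the hardware is to count undecoded syndrome rounds. The sketch below is a deliberately simplified model and not a description of Deltaflow: it assumes one round of syndrome data arrives every QEC cycle and that a single decoder handles one round at a time. The function name and timing values are hypothetical.

```python
def backlog_after(rounds, cycle_us, decode_us):
    """Undecoded syndrome rounds left after `rounds` QEC cycles.

    Simplified model: one round arrives every `cycle_us` microseconds and
    the decoder needs `decode_us` microseconds per round.
    """
    elapsed_us = rounds * cycle_us
    decoded = min(rounds, int(elapsed_us // decode_us))
    return rounds - decoded


# Decoder faster than the QEC cycle: it keeps up.
print(backlog_after(rounds=1000, cycle_us=1.0, decode_us=0.5))  # 0
# Decoder slower than the QEC cycle: unprocessed data piles up.
print(backlog_after(rounds=1000, cycle_us=1.0, decode_us=2.0))  # 500
```

In this model a decoder that is slower than the QEC cycle falls further behind with every round, so any operation that depends on a decoding result waits longer and longer.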

the more reliably you can correct errors in real time, the more QuOps your system can run.

What is a quantum decoder?

Industry leaders predict this year will see a significant refinement in quantum decoder technology, which is essential for building reliable quantum computers. Decoders are classical algorithms that interpret data from “syndrome measurements”, indirect checks on qubits, to identify and correct errors affecting quantum computations. Riverlane emphasizes the immense engineering challenge of building decoders that can keep up with extremely fast cycle times, often measured in microseconds, because decoder accuracy translates directly into computational power. The company’s roadmap acknowledges the diverse architectures in quantum computing: superconducting qubits and atomic, molecular, and optical (AMO) qubits operate at vastly different speeds and present different connectivity challenges. By building tools that adapt across the hardware landscape, Riverlane aims to support any leading qubit modality in achieving fault tolerance, so that error correction solutions evolve alongside the hardware.
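To make the idea of decoding from syndrome measurements concrete, here is a minimal sketch using a classical bit-flip repetition code as a stand-in. It is far simpler than the surface-code decoders discussed in the webinar and is not Riverlane’s Local Clustering Decoder; the function names are hypothetical. The decoder sees only parity checks between neighboring bits, never the encoded value itself, and picks the smallest set of flips consistent with those checks.

```python
def syndrome(bits):
    """Parity checks between neighboring bits; 1 marks a disagreement."""
    return [bits[i] ^ bits[i + 1] for i in range(len(bits) - 1)]


def decode(synd):
    """Return the lowest-weight bit-flip pattern consistent with `synd`."""
    # Build the error pattern that assumes the first bit was not flipped.
    candidate = [0]
    for check in synd:
        candidate.append(candidate[-1] ^ check)
    # The only other pattern with the same syndrome flips every bit.
    complement = [bit ^ 1 for bit in candidate]
    return candidate if sum(candidate) <= sum(complement) else complement


def correct(bits):
    """Apply the decoder's suggested flips to the received bits."""
    flips = decode(syndrome(bits))
    return [bit ^ flip for bit, flip in zip(bits, flips)]


received = [0, 1, 0, 0, 0]   # encoded 0 with a flip on the second bit
print(syndrome(received))    # [1, 1, 0, 0]
print(correct(received))     # [0, 0, 0, 0, 0]
```

A quantum decoder has to solve a harder version of the same inference problem, over many rounds of noisy measurements, within the cycle times described above.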

How does quantum software work?

Quantum software is increasingly vital for bridging the gap between theoretical algorithms and the physical realities of quantum hardware, enabling researchers to explore future applications. Industry leaders predict a staged engineering path, prioritizing error correction, will dramatically shorten the timeline to practical quantum computing. Riverlane estimates this focused strategy can deliver utility-scale quantum computers three to five years sooner. This acceleration is driven by the ability of software to manage the substantial overhead associated with error correction, bypassing years of traditional qubit scaling requirements. Looking ahead, the focus on software-defined error correction will be paramount, enabling more efficient use of existing and near-term quantum hardware. Experts anticipate that this layered approach will unlock the potential of quantum computers for increasingly complex problems, moving beyond purely academic demonstrations toward practical applications in fields like materials science and drug discovery. This software-centric strategy is not merely about optimization; it’s about fundamentally changing how quantum computers are programmed and utilized.

How important are qLDPC codes?

Riverlane is developing quantum Low-Density Parity-Check codes as a central component of their error correction roadmap, anticipating a significant impact on the timeline for practical quantum computers. These qLDPC codes, capable of encoding multiple logical qubits, offer the potential to reduce the hardware demands of error correction, a critical barrier to scaling quantum systems. However, implementing qLDPC codes presents challenges, particularly for superconducting qubits limited to nearest-neighbor connectivity, necessitating substantial investment in hardware engineering to establish the complex connections these codes require. While atomic-based quantum computing offers natural long-range connectivity, experts anticipate that optimizing the physical movement of atoms within these arrays will be crucial to minimizing added noise and overhead in the error correction cycle. The degree of motion required to establish these connections must be carefully balanced against the time it takes to accelerate and decelerate atoms, a trade-off that will define the efficiency of qLDPC implementation in various hardware platforms.
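The potential saving can be illustrated with a rough qubit count. The sketch below is not drawn from Riverlane’s roadmap. It assumes a rotated surface code that uses 2d² − 1 physical qubits per logical qubit at distance d, and compares it with a published [[144,12,12]] qLDPC code that encodes 12 logical qubits in 144 data qubits plus 144 check qubits. The function names are hypothetical.

```python
def surface_code_qubits(distance, logical_qubits):
    """Physical qubits for `logical_qubits` rotated surface-code patches.

    Each patch uses distance**2 data qubits and distance**2 - 1 measurement
    qubits.
    """
    return logical_qubits * (2 * distance**2 - 1)


def block_code_qubits(data_qubits, check_qubits):
    """Physical qubits for one block of a code with separate check qubits."""
    return data_qubits + check_qubits


surface = surface_code_qubits(distance=12, logical_qubits=12)
qldpc = block_code_qubits(data_qubits=144, check_qubits=144)
print(surface, qldpc)  # 3444 288
```

On these assumptions the qLDPC block needs roughly a twelfth of the physical qubits for the same number of logical qubits at the same distance. The count leaves out the long-range connections the code requires, which is where the hardware cost described above comes in.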

Why do quantum computers need control systems?

Quantum computers, despite their potential, rely heavily on sophisticated control systems to translate abstract calculations into physical qubit manipulations. These systems use classical signals, such as microwave pulses, to calibrate gates, manipulate qubits, and implement quantum error correction, making them the essential interface between algorithms and hardware. As qubit counts grow from dozens to the projected millions, synchronizing thousands of signal lines becomes a significant hurdle, because every pulse demands precise timing. Industry leaders see specialized ASICs emerging as a solution, designed to distribute a global clock and maintain timing accuracy across entire systems.

How will AI and HPC work with quantum?

Industry leaders predict a future where quantum processing units function as specialized nodes within expansive supercomputing environments, rather than as standalone devices. Experts anticipate artificial intelligence will play a crucial role in accelerating quantum error correction, specifically by interpreting error syndromes and supporting real-time decoding; AI is already integrated into hybrid quantum algorithms and developer tools. The view that the future of quantum computing isn’t a solo act but a hybrid performance reflects the growing consensus that seamless integration with high-performance computing infrastructure is essential for managing complex classical-quantum workflows. The computationally intensive classical control and compilation required for real-time error correction will rely heavily on HPC resources. Organizations like the Quantum Scaling Alliance are uniting stakeholders across industry, academia, and national laboratories to drive progress on this integrated approach, which they see as critical to realizing the promise of utility-scale quantum computers.

How do we integrate HPC, AI and quantum systems?

Industry leaders predict a convergence of high-performance computing, artificial intelligence, and quantum systems will be essential for realizing practical quantum computation, with Riverlane outlining a roadmap focused on accelerating this integration. A key element of this approach involves minimizing latency between the quantum processing unit and a local host system, such as a GPU or CPU, to enable real-time error decoding and qubit stabilization during computation. This necessitates moving beyond reliance on distant cloud connections, as immediate feedback is crucial for maintaining qubit coherence. Riverlane is prioritizing architectures where the real-time host is directly integrated with the quantum controller, facilitating the low-latency response times needed for complex quantum operations. The company believes this localized processing is fundamental to overcoming current limitations in quantum error correction and scaling qubit numbers effectively.

Such a shift signifies a move from simply preserving quantum states to actively processing information within the quantum system, demanding a new level of coordination between classical and quantum resources. This close coupling of HPC and quantum hardware will not only improve error correction but also unlock opportunities for AI-driven optimization of quantum algorithms and control parameters, further enhancing performance and scalability.

What is an AI pre-decoder?

Industry leaders predict a growing reliance on specialized AI components to streamline quantum error correction, with the emergence of AI pre-decoders designed to tackle initial data processing. These circuits or algorithms function as an intermediary step, simplifying complex information before it reaches the primary decoder and reducing overall system latency. NVIDIA’s recent introduction of AI models exemplifies this trend; these models operate as a pre-decoder positioned ahead of the global quantum error correction decoder. This approach promises faster and more accurate decoding capabilities than traditional methods alone, suggesting a shift toward distributed processing within quantum computers. The integration of AI pre-decoders signifies a move beyond simply preserving quantum information to actively processing it, paving the way for more robust and scalable quantum systems.

Industry leaders predict artificial intelligence will increasingly optimize quantum computations beyond simply decoding information, driving optimization across the entire technological stack. AI is tackling the complex challenge of workload management in hybrid supercomputers, intelligently allocating tasks between classical CPUs, GPUs, and quantum processing units. By monitoring in real time, AI ensures quantum processors are reserved for applications where they offer a genuine advantage, maximizing efficiency.

conceivable and reasonable regime through smart engineering and shared infrastructure.

How much will it cost to build a utility-scale quantum computer?

Industry leaders predict the economic realities of scaling quantum computing will necessitate a shift toward cost-effective designs, preventing potential costs from escalating into the tens of billions of dollars for a million-qubit system. Without careful planning, the price of such a system would severely limit practical deployment. Experts anticipate horizontal integration will play a crucial role in reducing costs, allowing for the development of quantum computers that are both scientifically feasible and economically viable. By prioritizing pragmatic engineering, the industry hopes to avoid a future where quantum computing remains an inaccessible technology.

What’s the difference between horizontal and vertical integration in quantum computing?

In the vertically integrated model, a single entity controls all layers of the stack, from qubits to software. That model is increasingly viewed as unsustainable as complexity grows; as Masood Mohseni of HPE highlighted, it is becoming “too risky” to maintain at scale. The industry is instead embracing horizontal integration, a collaborative approach in which specialized vendors each contribute components. This year will see more companies focusing on their core competencies and partnering to assemble complete quantum systems, accelerating research and development across the field. This division of labor reduces the risk carried by any single company and allows faster innovation by drawing on diverse expertise. The webinar, with panelists from IQM, NVIDIA, and Zurich Instruments among others, underscored this collaborative spirit and signaled a move toward a more interconnected and efficient quantum ecosystem.

Does the quantum computing industry need better standards?

Industry leaders predict that a growing emphasis on standardization will be crucial for realizing the full potential of quantum computing. Riverlane stated that for horizontal integration to work, the industry needs a common language across disparate systems, and it emphasized the importance of modular and interoperable components. Standardized interfaces such as NVIDIA’s NVQLink and Riverlane’s QECi are essential steps toward establishing these standards, particularly as artificial intelligence increasingly intersects with quantum technologies. Well-defined interfaces will allow AI agents to integrate more effectively across the entire quantum computing stack, accelerating innovation and potentially shortening development timelines.

The push for standards stems from a growing recognition that building functional quantum computers requires collaboration across a specialized ecosystem rather than isolated company initiatives. The consensus among webinar participants points to a fundamental change in how the quantum industry operates, one that prioritizes horizontal integration and modularity to overcome the remaining challenges. Neil Gillespie said that Riverlane is genuinely excited about what comes next: a move toward active quantum processing and commercially sustainable systems.