Quantum error correction is essential for building practical quantum computers, but decoding the errors presents a significant computational challenge, often requiring complex algorithms and substantial processing time. Xinyi Guo of Kyoto University, Geguang Miao of Waseda University, and Shinichi Nishizawa of Hiroshima University, along with their colleagues, introduce a new decoder called the Symmetric One-Hot Matching Elector, or SOME, which dramatically simplifies this process. The team reformulates quantum error correction decoding as a streamlined optimisation problem, cutting decoding times from milliseconds to microseconds on standard computer hardware. This result, achieved through a novel approach to constructing permutation matrices, reduces computational demands while maintaining high performance at physical error rates exceeding those handled by current state-of-the-art methods, a crucial step towards scalable and fault-tolerant quantum computation.

Quantum error correction (QEC) decoders, such as Minimum-Weight Perfect Matching and Union-Find, offer distinct advantages in speed and error thresholds, but can be computationally complex. SOME instead casts decoding as a quadratic unconstrained binary optimization (QUBO) problem. Each variable in the QUBO represents a potential match between flipped syndromes, with error probabilities encoded as interaction coefficients.

State-of-the-Art Quantum Error Decoding Methods

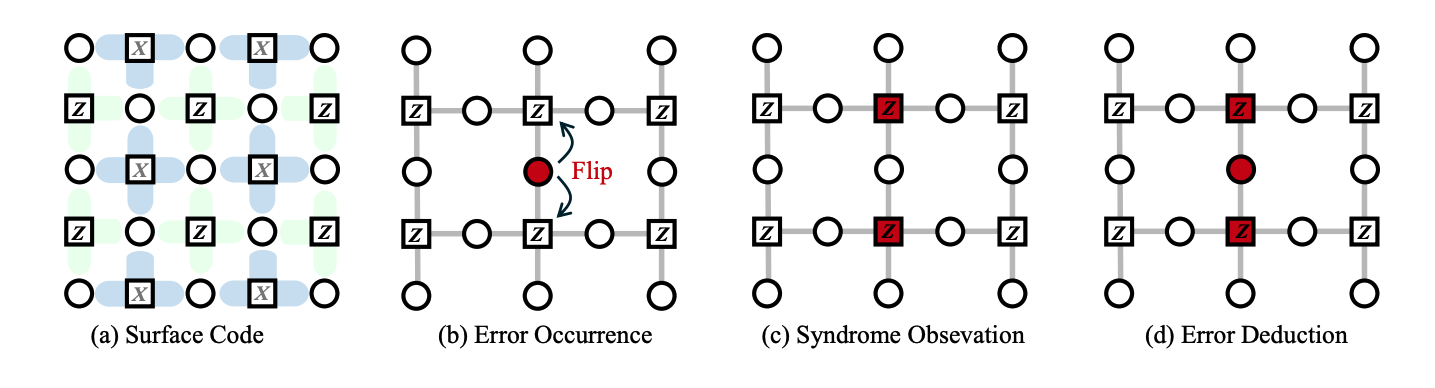

This research explores innovative approaches to quantum error correction, a crucial step towards building practical quantum computers. Researchers investigate established algorithms, like Minimum Weight Perfect Matching, and newer methods leveraging classical hardware such as Ising machines and neural networks, optimizing them for speed and scalability. The team implements and tests these decoders on various platforms, including CPUs, GPUs, and specialized hardware like digital annealers. Quantum error correction is vital because quantum information is fragile and prone to errors. Surface codes are promising due to their relatively high tolerance for errors and suitability for two-dimensional architectures.

Decoding algorithms infer the most likely error based on the observed syndrome, a computationally intensive task. The syndrome is the set of outcomes from the code's parity (stabilizer) measurements, which flag where errors have occurred without revealing the encoded data. The threshold is the physical error rate below which increasing the code size lowers the logical error rate. The research systematically compares the performance of various decoding algorithms in terms of speed, accuracy, and scalability. The authors explore techniques to optimize Minimum Weight Perfect Matching, such as using sparse matrix representations and parallelization. The team also investigates the potential of neural networks as decoders, including training strategies and network architectures, and utilizes Field-Programmable Gate Arrays to accelerate decoding algorithms.
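To make the notion of a syndrome concrete, here is a minimal sketch using a one-dimensional repetition code as a stand-in for the surface code. The code choice and the function names `parity_checks` and `syndrome` are illustrative assumptions, not taken from the paper.

```python
def parity_checks(n):
    """Checks of an n-bit repetition code: check i compares bits i and i + 1."""
    return [(i, i + 1) for i in range(n - 1)]


def syndrome(error):
    """Return one bit per check; a 1 means the two compared bits disagree."""
    return [error[a] ^ error[b] for a, b in parity_checks(len(error))]


# A chain of two bit flips on bits 1 and 2 of a 5-bit code.
error = [0, 1, 1, 0, 0]
print(syndrome(error))  # [1, 0, 1, 0]
```

Only the checks at the two ends of the error chain fire. A decoder sees these flipped checks, not the error itself, and has to infer which chain most plausibly connects them.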

Achieving practical QEC requires significant hardware acceleration. Different decoding algorithms have different strengths and weaknesses; Minimum Weight Perfect Matching is accurate but can be slow, while Ising machines and neural networks offer potential speedups but require careful tuning and training. Field-Programmable Gate Arrays appear to be a promising platform for accelerating QEC decoding, offering a balance between performance and flexibility. This work targets researchers and engineers interested in quantum error correction, decoding algorithms, and hardware acceleration. It contributes to the ongoing effort to build practical, fault-tolerant quantum computers, helping identify the most promising approaches for achieving scalable and reliable QEC.

Mapping Syndromes to Efficient Binary Optimization Frameworks

Boosting Speed and Efficiency in Quantum Error Correction

Researchers have developed a new method for decoding quantum information, significantly improving the speed and efficiency of error correction. Quantum error correction is crucial for building stable quantum computers, as qubits are highly susceptible to noise. Existing decoding techniques, while effective, often struggle with computational complexity, particularly as the quantum computation grows in size. This work's contribution lies in representing potential matches between error signals, called syndromes, using binary variables, creating a streamlined system for identifying and correcting errors.

Unlike previous methods that require complex calculations to handle the boundaries of the system, the new formulation handles boundary cases efficiently, reducing computational overhead.

🗞 SOME: Symmetric One-Hot Matching Elector — A Lightweight Microsecond Decoder for Quantum Error Correction

🧠 ArXiv: https://arxiv.org/abs/2507.23618

The efficacy of the SOME decoder relies critically on converting the pairwise syndrome interactions into a structured quadratic form. This involves mapping the syndrome $\vec{s} \in \{0, 1\}^k$ and the underlying Pauli error operators $\{X_i, Z_j\}$ onto binary variables $x_{ij}$, constrained such that the objective function $\min \sum_{ij,kl} J_{ij,kl}\, x_{ij} x_{kl}$ accurately reflects the minimum-weight error configuration. This streamlined QUBO formulation inherently respects the underlying graph structure of the chosen surface code, ensuring that the optimization space remains tractable for classical solvers.
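The following is a minimal sketch of how a matching problem of this kind can be written as a QUBO and solved by brute force on a toy instance. It is not the paper's formulation or solver. It assumes that $x_{ij} = 1$ with $i < j$ pairs syndromes $i$ and $j$, that a diagonal variable $x_{ii} = 1$ matches syndrome $i$ to the boundary, and that a quadratic penalty enforces exactly one match per syndrome. The function name `decode_by_qubo` and the cost inputs are hypothetical.

```python
from itertools import product


def decode_by_qubo(pair_cost, boundary_cost, penalty=None):
    """Brute-force the minimum of a small matching QUBO (illustration only)."""
    k = len(boundary_cost)
    # One binary variable per unordered pair (i, j) with i <= j.
    variables = [(i, j) for i in range(k) for j in range(i, k)]
    cost = {(i, j): boundary_cost[i] if i == j else pair_cost[i][j]
            for i, j in variables}
    if penalty is None:
        # Larger than any feasible cost, so breaking a constraint never pays.
        penalty = 1 + sum(cost.values())

    best_energy, best_x = None, None
    for bits in product((0, 1), repeat=len(variables)):
        x = dict(zip(variables, bits))
        energy = sum(cost[v] * x[v] for v in variables)
        for i in range(k):
            # Row sum of the symmetric matrix: all matches involving syndrome i.
            row_sum = sum(x[(min(i, j), max(i, j))] for j in range(k))
            energy += penalty * (1 - row_sum) ** 2  # one-hot constraint
        if best_energy is None or energy < best_energy:
            best_energy, best_x = energy, x
    return sorted(v for v, bit in best_x.items() if bit)


# Toy instance: four flipped syndromes on a line with boundaries at 0 and 10.
positions = [1, 4, 5, 9]
pair_cost = [[abs(a - b) for b in positions] for a in positions]
boundary_cost = [min(p, 10 - p) for p in positions]
print(decode_by_qubo(pair_cost, boundary_cost))  # [(0, 0), (1, 2), (3, 3)]
```

In this toy case the two central syndromes are paired with each other and the outer two are matched to their nearest boundary. Exhaustive search is used only to keep the example short; it scales exponentially and is unrelated to the microsecond decoding times reported for SOME.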

Furthermore, the implementation of the Elector mechanism effectively manages the resource constraints often encountered in hardware-accelerated quantum computation. By treating syndrome matching as a competitive assignment problem, the decoder systematically allocates error probabilities, $p_i$, as interaction coefficients within the QUBO matrix. This approach bypasses the need for exhaustive search over the entire error space, concentrating the computation on the most probable error paths compatible with the observed syndrome measurements.
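One common way to turn an error probability into a matching coefficient is the log-likelihood weight used by matching decoders in general. Whether SOME uses exactly this weighting is an assumption here, and `chain_weight` is a hypothetical helper. For independent errors with probability $p$, a chain of $d$ errors has likelihood proportional to $(p / (1 - p))^d$, so its negative log-likelihood is $d \log((1 - p) / p)$.

```python
import math


def chain_weight(distance, p):
    """Negative log-likelihood weight of an error chain of the given length."""
    if not 0 < p < 0.5:
        raise ValueError("p must lie strictly between 0 and 0.5")
    return distance * math.log((1 - p) / p)


# Longer chains and lower error rates both make a match more costly.
for p in (0.1, 0.01):
    print(p, [round(chain_weight(d, p), 2) for d in (1, 2, 3)])
```

With weights of this form, minimizing the total coefficient of the selected matches corresponds to choosing the most probable set of error chains under the independent-error model.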

While the computational speed of SOME is impressive, a major remaining challenge lies in scaling the decoder to very large physical implementations, where the number of measured syndromes increases rapidly. Scaling requires maintaining low coupling overhead within the QUBO formulation, which demands sophisticated matrix partitioning and sparse connectivity management. Future work must focus on generalizing the Elector principle to higher-dimensional or non-planar topological codes, moving beyond the foundational surface code architecture.