A new approach to optimising quantum circuits tackles fundamental limitations in deep reinforcement learning. Akash Kundu and Sebastian Feld at Delft University of Technology focus on engineering the replay buffer, a key component of reinforcement learning algorithms. The method addresses inefficiencies caused by unreliable data and costly evaluations, achieving gains in sample efficiency of up to 32× and reducing optimisation time by as much as 67.5 percent on a 12-qubit problem. Notably, the work also demonstrates a substantial reduction, up to 90 percent, in the steps required to reach chemical accuracy in molecular tasks by effectively reusing noiseless data, establishing experience management as a key factor in scalable, noise-robust quantum optimisation.

Dynamic prioritisation of experiences via temporal difference errors and reliability weighting

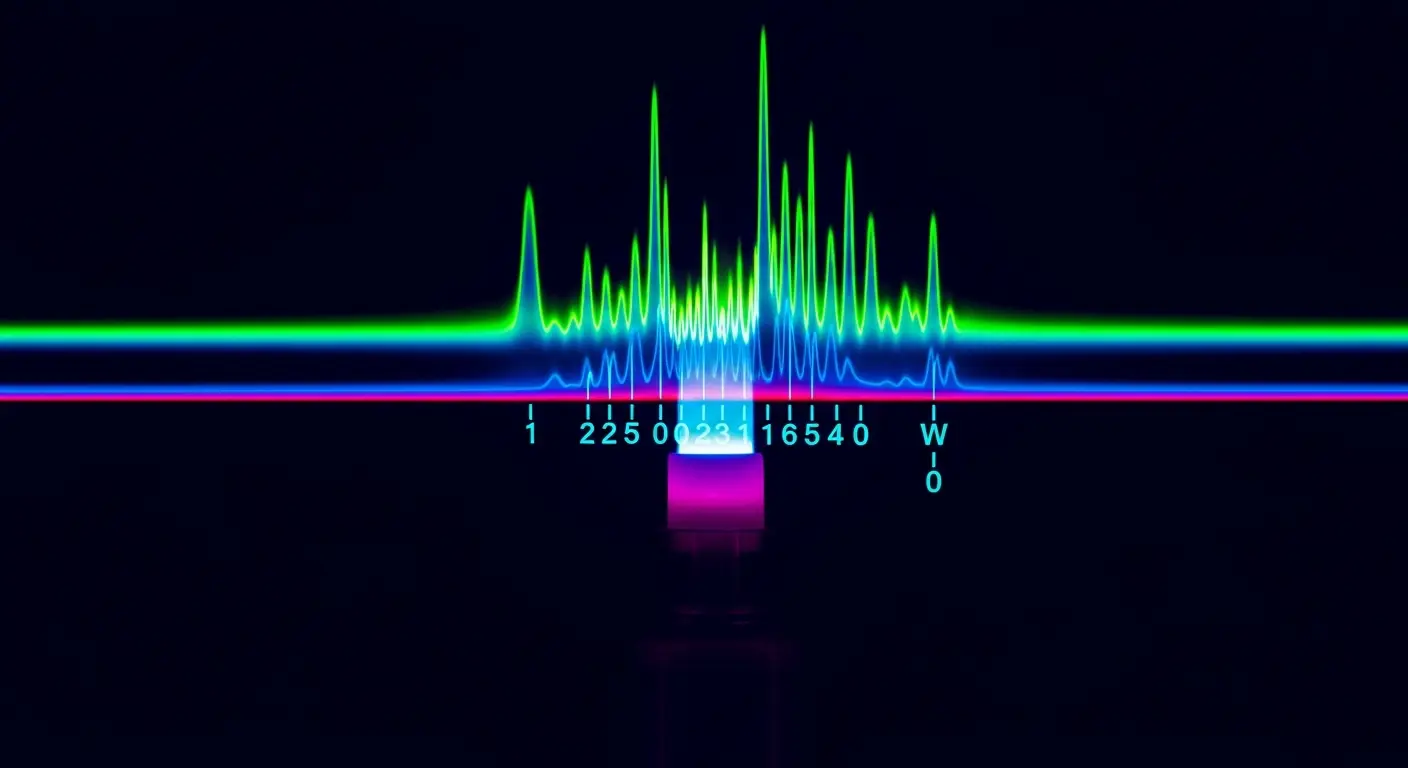

The replay buffer, a crucial component of deep reinforcement learning algorithms, functions as a memory bank of experiences: the agent’s interactions with the environment and the resulting rewards or penalties. Sampling from it breaks the temporal correlation between consecutive experiences and lets the agent learn from past trials multiple times. However, standard replay buffers often treat all experiences equally, regardless of their quality or reliability. ReaPER+, an annealed prioritisation scheme developed by Kundu and Feld, dynamically adjusts how experiences are prioritised within this buffer during training. Initially, it favours transitions with large temporal-difference (TD) errors. The TD error is the gap between the agent’s current value estimate for a state-action pair and a target built from the observed reward plus the discounted value estimate of the next state. A large magnitude marks a surprising or informative event and gives a strong signal for updating the agent’s knowledge, which drives rapid early learning; this is analogous to focusing on the most obvious mistakes when first learning a skill. As the agent’s understanding matures, ReaPER+ shifts towards prioritising the reliability of these experiences, discounting those with unstable value estimates. Unstable value estimates arise from noisy or infrequent transitions, and learning from such data can hinder convergence. By ensuring the agent learns from consistent and trustworthy data, the algorithm improves stability and accelerates learning. Compared to fixed prioritisation methods like proportional prioritised experience replay (PER) and the original ReaPER, this dynamic approach offers gains in sample efficiency across both quantum compilation and quantum architecture search benchmarks. 
Quantum compilation involves translating a high-level quantum algorithm into a sequence of gate operations executable on specific quantum hardware, while quantum architecture search aims to discover optimal circuit designs for particular tasks. The improvement in sample efficiency means the algorithm requires fewer interactions with the quantum environment to achieve a desired level of performance.
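
The shift from TD-error-driven to reliability-weighted sampling can be illustrated with a minimal sketch. It is not the authors' implementation: the article does not give the ReaPER+ formula, so the linear schedule variable `progress`, the exponent `alpha`, and the per-transition `reliabilities` scores in [0, 1] are all assumptions made for illustration.

```python
import numpy as np


def td_error(reward, gamma, q_next_max, q_current, done=False):
    """One-step TD error: bootstrapped target minus the current estimate."""
    target = reward + (0.0 if done else gamma * q_next_max)
    return target - q_current


def annealed_priorities(td_errors, reliabilities, progress, alpha=0.6, eps=1e-6):
    """Return sampling probabilities that blend TD error and reliability.

    progress = 0.0 -> priorities depend on |TD error| only (early training).
    progress = 1.0 -> |TD error| is fully scaled by reliability (late training).
    The exponent schedule is a hypothetical choice, not taken from the paper.
    """
    td_term = (np.abs(np.asarray(td_errors, dtype=float)) + eps) ** alpha
    rel_term = np.clip(np.asarray(reliabilities, dtype=float), eps, 1.0) ** progress
    scores = td_term * rel_term
    return scores / scores.sum()


def sample_indices(probs, batch_size, rng):
    """Draw buffer indices according to the given probabilities."""
    return rng.choice(len(probs), size=batch_size, p=probs)


if __name__ == "__main__":
    tds = [2.0, 0.5, 2.0]           # transitions 0 and 2 are equally surprising
    rels = [1.0, 1.0, 0.1]          # but transition 2 has an unstable estimate
    early = annealed_priorities(tds, rels, progress=0.0)
    late = annealed_priorities(tds, rels, progress=1.0)
    print("early:", early.round(3))
    print("late: ", late.round(3))
    print(sample_indices(late, batch_size=4, rng=np.random.default_rng(0)))
```

Early in training the two high-error transitions are sampled equally often; late in training the unreliable one is drawn less often, which is the qualitative behaviour the article attributes to ReaPER+.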

ReaPER+ prioritises reliable data to accelerate quantum molecular simulations

Molecular simulations, vital for drug discovery and materials science, now require 85–90% fewer steps to reach chemical accuracy compared to previous methods. Chemical accuracy, typically defined as an energy error of less than 1 kcal/mol, is a stringent requirement for reliable simulations. Reaching this threshold has been difficult because computational cost grows exponentially with qubit number, a fundamental challenge in quantum computing. Simulating a molecule accurately requires representing its electronic structure, and the size of that representation grows exponentially with the number of electrons and, consequently, with the number of qubits needed to encode the quantum state. The improvement stems from a new approach to deep reinforcement learning that optimises how experience is stored and reused within the replay buffer. By dynamically prioritising valuable data and transferring knowledge between simulations, the system also reduces final energy error by up to 90 percent, a substantial gain in precision for quantum calculations. The algorithm learns to identify and favour experiences that contribute most to reducing the energy error, down-weighting those that are noisy or irrelevant. This selective reuse of information accelerates convergence towards the desired level of accuracy. Reaching chemical accuracy in fewer computational steps opens up the possibility of simulating larger and more complex molecular systems, which could benefit fields such as drug discovery and materials science.
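
To make the "steps to chemical accuracy" metric concrete, here is a small sketch of how it could be computed from a trace of energies. The energy values, the reference energy, and the step counts in the usage section are made-up toy numbers, and the helper names are hypothetical; only the 1 kcal/mol threshold comes from the text, converted to Hartree with the standard factor of about 627.5 kcal/mol per Hartree.

```python
KCAL_PER_MOL_PER_HARTREE = 627.509  # standard unit conversion
CHEMICAL_ACCURACY_HARTREE = 1.0 / KCAL_PER_MOL_PER_HARTREE  # roughly 1.6e-3 Ha


def steps_to_chemical_accuracy(energies, reference_energy):
    """Return the first 1-based step with error below 1 kcal/mol, else None."""
    for step, energy in enumerate(energies, start=1):
        if abs(energy - reference_energy) < CHEMICAL_ACCURACY_HARTREE:
            return step
    return None


def step_reduction(baseline_steps, new_steps):
    """Fractional reduction in steps relative to a baseline run."""
    return (baseline_steps - new_steps) / baseline_steps


if __name__ == "__main__":
    # Toy trace in Hartree; the reference energy is a placeholder, not real data.
    trace = [-1.100, -1.130, -1.1360, -1.1369]
    print(steps_to_chemical_accuracy(trace, reference_energy=-1.137))
    # Hypothetical step counts showing what an 85% reduction means.
    print(step_reduction(baseline_steps=1000, new_steps=150))
```

With these toy numbers, a run that needs 150 steps instead of 1000 corresponds to the 85% end of the range quoted above.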

On the circuit optimisation benchmarks, the approach achieves sample-efficiency gains of between four and thirty-two times over existing methods. OptCRLQAS, a technique employed in the research, reduces the time required for quantum-classical evaluations, a computationally intensive step in circuit design, by up to 67.5 percent on a 12-qubit problem without compromising circuit quality. Quantum-classical evaluations involve repeatedly running a quantum circuit on a simulator or actual quantum hardware and comparing the results with classical calculations to assess the circuit’s performance. This process is computationally expensive, especially for larger circuits. By reducing the number of evaluations needed, OptCRLQAS speeds up the optimisation process. Furthermore, a transfer scheme reuses data generated in noiseless simulations to accelerate learning in noisy environments, a common challenge in real-world quantum computing. Current quantum hardware is susceptible to noise, which introduces errors into calculations, so training reinforcement learning agents directly on noisy hardware can be inefficient and unstable. The transfer scheme uses data from ideal, noiseless simulations to pre-train the agent, providing a solid foundation before it is fine-tuned on noisy hardware. This improves the robustness and performance of the agent in realistic quantum computing scenarios.
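
The idea of reusing noiseless data can be sketched as warm-starting a replay buffer before noisy training begins. This is an illustration under stated assumptions, not the paper's transfer scheme: the `ReplayBuffer` class, its `warm_start` method, and the `Transition` fields are hypothetical names, and the article does not say how the authors mix or evict noiseless and noisy transitions.

```python
import random
from collections import deque, namedtuple

# Hypothetical transition record; field names are illustrative only.
Transition = namedtuple("Transition", "state action reward next_state done")


class ReplayBuffer:
    """Fixed-capacity FIFO buffer; the oldest transitions are evicted first."""

    def __init__(self, capacity):
        self.memory = deque(maxlen=capacity)

    def push(self, transition):
        self.memory.append(transition)

    def warm_start(self, transitions):
        """Pre-fill the buffer with transitions gathered elsewhere,
        for example in a noiseless simulation (assumed usage)."""
        for transition in transitions:
            self.push(transition)

    def sample(self, batch_size, rng=random):
        return rng.sample(list(self.memory), batch_size)

    def __len__(self):
        return len(self.memory)


if __name__ == "__main__":
    # Five toy transitions standing in for noiseless-simulation data.
    noiseless = [Transition(s, 0, 1.0, s + 1, False) for s in range(5)]

    noisy_buffer = ReplayBuffer(capacity=8)
    noisy_buffer.warm_start(noiseless)

    # New noisy transitions gradually displace the oldest noiseless ones.
    for s in range(100, 105):
        noisy_buffer.push(Transition(s, 0, 0.8, s + 1, False))

    print(len(noisy_buffer), noisy_buffer.memory[0].state)
    print(noisy_buffer.sample(3, rng=random.Random(0)))
```

In this sketch the agent can start learning from a populated buffer on its first noisy update, and the noiseless data fades out as noisy experience accumulates.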

Optimised experience replay accelerates molecular simulations with limited qubits

The gains have been demonstrated convincingly on small quantum systems of 6, 8, and 12 qubits, but a key question remains regarding scalability. Extending these improvements from relatively small molecular tasks to the hundreds or thousands of qubits needed for truly impactful quantum computation presents a significant hurdle. The number of possible quantum states grows exponentially with the number of qubits, making it increasingly difficult to explore the state space and find optimal solutions. As circuit complexity and the volume of experience data increase, the refined replay buffer techniques may encounter limitations: the memory needed to store the replay buffer could become prohibitive, and the computational cost of prioritising and sampling experiences could rise significantly. Even so, the results show that managing experience within reinforcement learning, specifically how data is stored, sampled, and reused, improves both the efficiency and accuracy of quantum circuit optimisation. This prompts investigation into how these techniques can be combined with emerging error mitigation strategies to further enhance the robustness of quantum algorithms. Error mitigation techniques aim to reduce the impact of noise on quantum computations, allowing for more reliable results even on imperfect hardware. Combining optimised experience replay with error mitigation could prove complementary, supporting the development of robust and scalable quantum algorithms for a wide range of applications.

The research demonstrated improvements in optimising quantum circuits through refined experience replay techniques. These methods addressed bottlenecks in deep reinforcement learning, achieving gains in sample efficiency of between four and thirty-two times compared to existing approaches, and reducing wall-clock time by up to 67.5% on a 12-qubit problem. By treating the replay buffer as central to the optimisation process, the researchers improved performance across quantum compilation and quantum architecture search benchmarks. The authors suggest future work will focus on combining these techniques with error mitigation strategies to further enhance algorithm robustness.

👉 More information

🗞 Replay-buffer engineering for noise-robust quantum circuit optimization

🧠 ArXiv: https://arxiv.org/abs/2604.21863