Time estimation is a fundamental task underpinning precision measurement, global navigation systems, financial markets, and the organisation of everyday life. Many biological processes also depend on time estimation by nanoscale clocks, whose performance can be significantly impaired by environmental noise and fluctuations. Existing time estimation techniques often struggle to maintain accuracy and stability in noisy conditions, particularly when dealing with the inherent limitations of physical systems. Therefore, a need exists for robust and efficient time estimation methods that can overcome these challenges and deliver reliable performance across a range of applications. This research investigates novel approaches to time estimation, focusing on enhancing resilience to noise and improving the precision with which time intervals can be determined, ultimately aiming to advance the capabilities of timekeeping technologies and biological modelling.

Clock Uncertainty Relates to Event Timing



Establishing a fundamental clock uncertainty relation

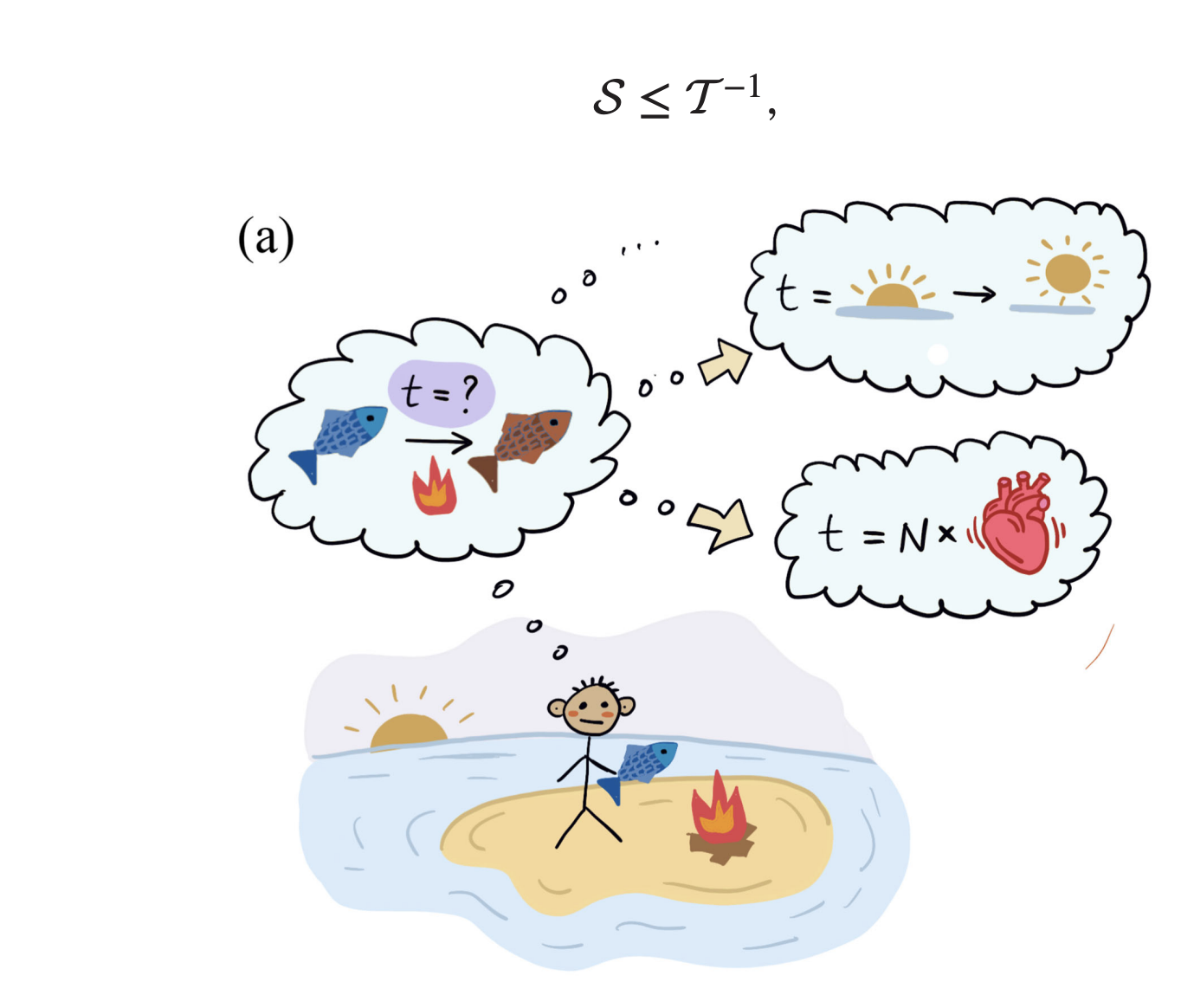

Researchers have uncovered a fundamental limit to the precision of timekeeping using random events, establishing a new “clock uncertainty relation” that governs the accuracy of clocks far from equilibrium. The team demonstrates that the precision of any time estimate is limited by how long, on average, one must wait for the next event in a random sequence, a quantity they term the “mean residual time.” This finding establishes a tighter bound on timekeeping precision than earlier bounds based on the dynamical activity, the average rate at which events occur. The research reveals that the signal-to-noise ratio of any counting measurement, including time estimation, is bounded by the inverse of the mean residual time, meaning that a shorter average wait for the next event permits greater precision.

The inverse of the mean residual time never exceeds the dynamical activity, which proves that the new relation is at least as tight as existing bounds. The team explicitly constructed counting observables that saturate the new bound, confirming that it is tight and demonstrating its practical applicability. This breakthrough delivers a new understanding of fluctuations in systems undergoing random processes, with implications for nanoscale clocks in quantum, electronic, and biomolecular settings. The findings establish that the mean residual time, not the dynamical activity, is the critical measure of “freneticity” that constrains fluctuations in systems far from equilibrium. Furthermore, because the bound is tight and directly observable, a measured violation would indicate that the observed dynamics are not autonomous, classical, and memoryless, offering a powerful tool for characterizing complex systems.
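
The ordering between the two quantities can be checked numerically on a toy model. The sketch below is an illustration rather than the authors' code: it assumes a one-way ring of states (so the steady-state occupation is proportional to the inverse escape rate), and the function names and the rates `[1.0, 2.0, 4.0]` are hypothetical choices made for the example.

```python
from typing import List, Sequence


def mean_residual_time(p: Sequence[float], gamma: Sequence[float]) -> float:
    """Expected wait until the next jump when the state is drawn from p."""
    return sum(p_s / g_s for p_s, g_s in zip(p, gamma))


def dynamical_activity(p: Sequence[float], gamma: Sequence[float]) -> float:
    """Average number of jumps per unit time when the state is drawn from p."""
    return sum(p_s * g_s for p_s, g_s in zip(p, gamma))


def ring_steady_state(gamma: Sequence[float]) -> List[float]:
    """Steady state of a one-way ring: occupation is proportional to 1/gamma."""
    weights = [1.0 / g for g in gamma]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical escape rates for a three-state one-way ring (assumption).
gamma = [1.0, 2.0, 4.0]
p = ring_steady_state(gamma)
T = mean_residual_time(p, gamma)
A = dynamical_activity(p, gamma)

# The inverse mean residual time should never exceed the dynamical activity.
print(f"1/T = {1 / T:.4f}, A = {A:.4f}, 1/T <= A: {1 / T <= A}")
```

For these rates the inverse mean residual time is about 1.33 while the dynamical activity is about 1.71, so the bound set by the mean residual time is the stricter one. When all escape rates are equal the two quantities coincide.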



Establishing link between timekeeping and random event rates

This research establishes a fundamental link between the precision of timekeeping and the rate at which random events occur. The team demonstrates that any system relying on a sequence of random events to measure time, essentially acting as a clock, has an inherent limit to its accuracy determined by the frequency of those events. Specifically, the faster the events, the more precise the timekeeping can be, but there remains a fundamental lower bound on uncertainty. The researchers explored this principle using a theoretical model, an “Erlang clock”, to rigorously demonstrate these limits. They showed that, for a given system, the accuracy of time measurement is maximized when all random events are weighted equally, confirming the optimality of this approach.
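
A small simulation can make the equal-weighting statement concrete. This is a minimal sketch under stated assumptions, not the model from the paper: it treats the clock as a one-way ring in which every state has the same escape rate, so the count of jumps is a Poisson process, and it estimates the signal-to-noise ratio per unit time of the equal-weight counter. The function names, the rate, the observation time, and the number of trials are all hypothetical.

```python
import random


def count_jumps(rate: float, t: float, rng: random.Random) -> int:
    """Count jumps up to time t when every state has the same escape rate."""
    elapsed = rng.expovariate(rate)  # waiting time until the first jump
    n = 0
    while elapsed <= t:
        n += 1
        elapsed += rng.expovariate(rate)
    return n


def empirical_snr(rate: float, t: float, trials: int, seed: int = 0) -> float:
    """Estimate mean(N)^2 / (t * Var[N]) for the equal-weight jump counter."""
    rng = random.Random(seed)
    counts = [count_jumps(rate, t, rng) for _ in range(trials)]
    mean = sum(counts) / trials
    var = sum((c - mean) ** 2 for c in counts) / trials
    return mean ** 2 / (t * var)


# Hypothetical parameters: with a uniform rate the mean residual time is
# 1/rate, so the bound on the signal-to-noise ratio equals the rate itself.
rate = 2.0
snr = empirical_snr(rate=rate, t=50.0, trials=4000)
print(f"empirical SNR = {snr:.3f}, bound 1/T = {rate:.3f}")
```

Dividing the count by the rate turns it into a time estimate with the same signal-to-noise ratio, since rescaling an observable changes its mean and standard deviation by the same factor. In this uniform-rate case the empirical value should sit close to the bound, up to sampling error.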

Analyzing long-term system variance and operational assumptions

Furthermore, the analysis reveals that the long-term variance of time estimation approaches a minimum value, dictated by the system’s characteristics and the rate of random events. The authors acknowledge that their work relies on specific assumptions about the system, namely that it operates in a stationary state and possesses only two measurable states. Future research could explore the implications of these findings for more complex systems and investigate how these limits might be overcome or mitigated in practical applications.

🗞 Optimal Time Estimation and the Clock Uncertainty Relation for Stochastic Processes

🧠 DOI: http://link.aps.org/doi/10.1103/rpls-mp8z


Advanced observables defining the derived bounds

From a mathematical standpoint, the derivation of this new bound relies on constructing specialized counting observables that precisely track the cumulative impact of stochastic fluctuations. These observables act as generalized estimators, allowing researchers to map the physical process onto a statistical framework where the maximum achievable signal-to-noise ratio can be calculated. This methodology is crucial because it moves beyond simple variance calculations, instead providing a measure tied directly to the system’s temporal memory and rate of arrival statistics.
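
One way to write the quantities involved is sketched below. The notation is an assumption made here for illustration and may differ from the paper's: $p_\sigma$ is the steady-state occupation of state $\sigma$, $\Gamma_\sigma$ its total escape rate, $N(t)$ a counting observable accumulated over a time $t$, and the signal-to-noise ratio is taken per unit time in the long-time limit.

```latex
% Assumed notation (not taken from the article):
%   p_\sigma       steady-state occupation of state \sigma
%   \Gamma_\sigma  total escape rate out of state \sigma
%   N(t)           counting observable accumulated up to time t
\begin{align}
  \mathcal{S} &= \lim_{t\to\infty}
      \frac{\langle N(t)\rangle^{2}}{t\,\mathrm{Var}[N(t)]}
      && \text{(signal-to-noise ratio per unit time)} \\
  \mathcal{T} &= \sum_{\sigma} \frac{p_\sigma}{\Gamma_\sigma}
      && \text{(mean residual time)} \\
  \mathcal{A} &= \sum_{\sigma} p_\sigma\,\Gamma_\sigma
      && \text{(dynamical activity)} \\
  \mathcal{S} &\le \frac{1}{\mathcal{T}} \le \mathcal{A}
      && \text{(clock uncertainty relation, then the activity bound)}
\end{align}
```

The second inequality follows from Jensen's inequality applied to the convex function $x \mapsto 1/x$: the average of $1/\Gamma_\sigma$ is at least the inverse of the average of $\Gamma_\sigma$, with equality when all escape rates are equal.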

Implications for quantum metrology and clocks

The implications for quantum metrology are significant, particularly for ultra-cold atomic clocks. Historically, time uncertainty in these systems was often modeled using fundamental limits derived from the Heisenberg uncertainty principle applied to energy and time. This new relation, however, introduces a statistical constraint based on temporal sparsity, that is, the waiting time between detectable quanta, offering a potentially tighter operational limit for devices operating far from perfect equilibrium conditions.

Furthermore, the identification of the mean residual time as the critical measure of “freneticity” recontextualizes how system dynamics are characterized. Conventional approaches might analyze steady-state power spectra, but this framework provides a non-equilibrium characterization. This allows scientists to distinguish between true autonomous randomness and systematic non-Markovian correlations that might be mistaken for pure stochastic noise.

Practically implementing this bound requires highly sophisticated data acquisition systems capable of resolving extremely low-frequency events with high temporal granularity. The primary challenge remains the precise separation of the fundamental statistical limit from environmental noise sources, such as thermal drift or external electromagnetic interference, which can artificially inflate the perceived mean residual time and mask the true physical constraint.