For machine learning algorithms, parameters are the building blocks: they are the part of the model learned from historical training data. In the language domain, sophistication generally correlates with the number of parameters, and this relationship has held up remarkably well. OpenAI’s GPT-3 has 175 billion parameters, making it one of the largest language models ever trained. It can make primitive analogies, generate recipes, and even complete basic code.

Recently, Google developed and benchmarked new techniques that allowed it to train a language model with more than 1 trillion parameters. The company claims that its 1.6-trillion-parameter model, the largest so far, achieved a 4 times pretraining speedup over T5-XXL, its previous largest language model.

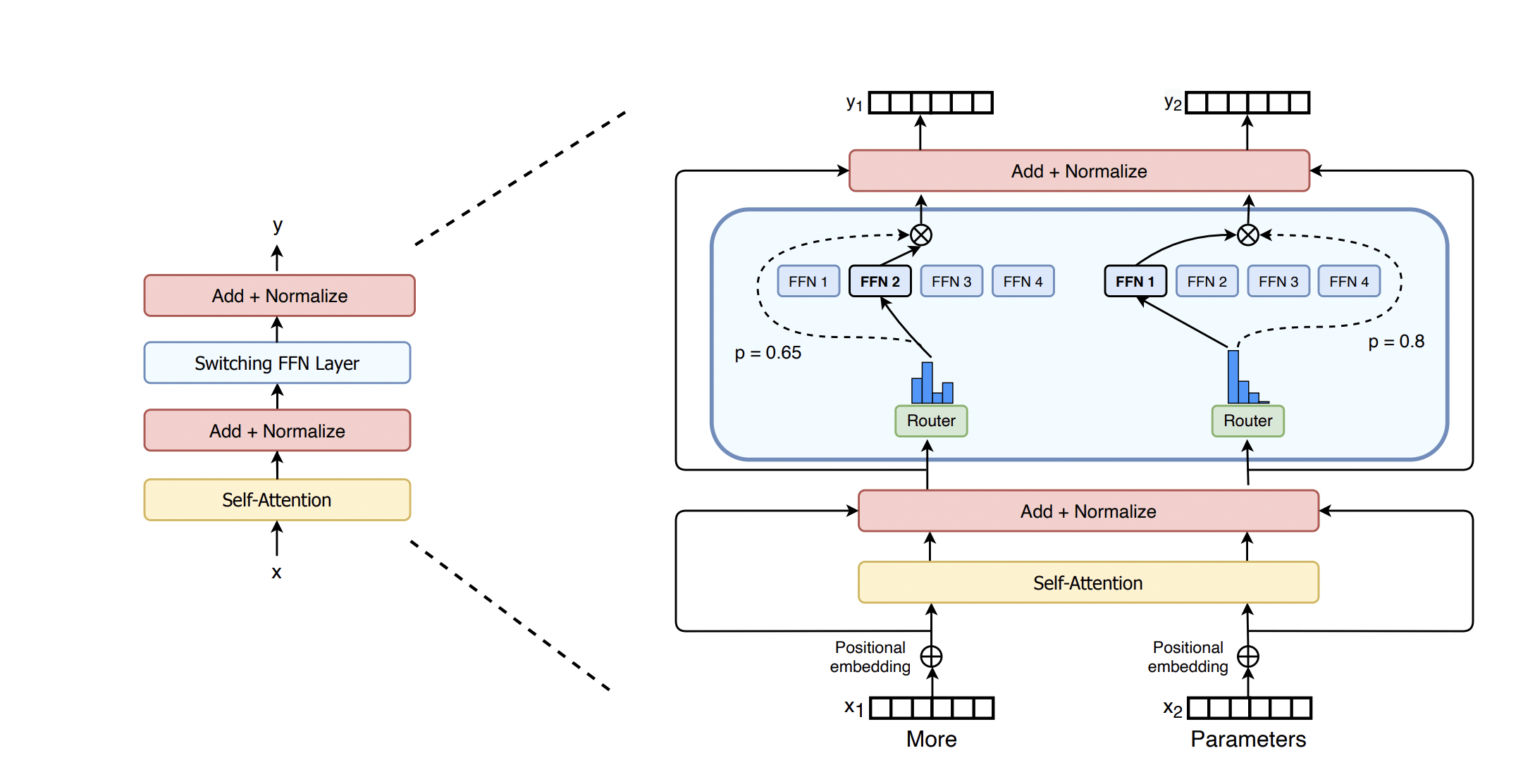

In their published paper, the researchers stated that they believe large-scale training is an effective path to powerful models: simple architectures backed by large datasets and high parameter counts can surpass far more complicated algorithms. However, training a model this way is computationally intensive. To address this, the researchers used a Switch Transformer, a ‘sparsely activated’ technique that uses only a subset of the model’s weights for each input. Weights are the parameters that transform the input data within the model.

The Switch Transformer builds on mixture of experts, an AI model paradigm first proposed in the early ’90s. The general concept is to keep multiple expert models, each specialising in different tasks, inside a larger model, and to have a ‘gating network’ choose which experts to consult for any given data.
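The gating idea can be illustrated with a toy, pure-Python sketch of top-1 (‘switch’) routing: a gating network scores every expert for a token, and only the single highest-scoring expert processes it. Everything here (the `ToySwitchLayer` class, the single linear map standing in for each expert’s feed-forward block, the random weights) is a hypothetical simplification, not Google’s implementation; it ignores batching, expert capacity limits, and the load-balancing loss that real systems add.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a matrix (a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


class ToySwitchLayer:
    """Hypothetical toy layer: a gating network sends each token to exactly one expert."""

    def __init__(self, d_model, num_experts, seed=0):
        rng = random.Random(seed)
        # Gating (router) weights: one row of scores per expert.
        self.router = [[rng.gauss(0, 1) for _ in range(d_model)]
                       for _ in range(num_experts)]
        # Each "expert" is a single d_model x d_model linear map standing in
        # for a full feed-forward block.
        self.experts = [[[rng.gauss(0, 1) for _ in range(d_model)]
                         for _ in range(d_model)]
                        for _ in range(num_experts)]

    def forward(self, token):
        """Route one token vector; return (chosen expert index, output vector)."""
        probs = softmax(matvec(self.router, token))
        # Top-1 routing: only the highest-probability expert runs for this token,
        # so the other experts' weights are never touched.
        expert_id = max(range(len(probs)), key=probs.__getitem__)
        expert_out = matvec(self.experts[expert_id], token)
        # Scale the expert's output by its gate probability.
        return expert_id, [probs[expert_id] * y for y in expert_out]


if __name__ == "__main__":
    layer = ToySwitchLayer(d_model=4, num_experts=8, seed=1)
    for token in ([0.5, -1.0, 2.0, 0.1], [1.0, 0.0, -0.3, 0.7]):
        expert_id, _ = layer.forward(token)
        print(f"token routed to expert {expert_id} of 8")
```

Adding experts multiplies the parameters stored in `self.experts`, but each call to `forward` still performs one router multiplication and one expert multiplication. That is the property that lets a sparsely activated model grow its parameter count without a matching growth in per-token compute.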

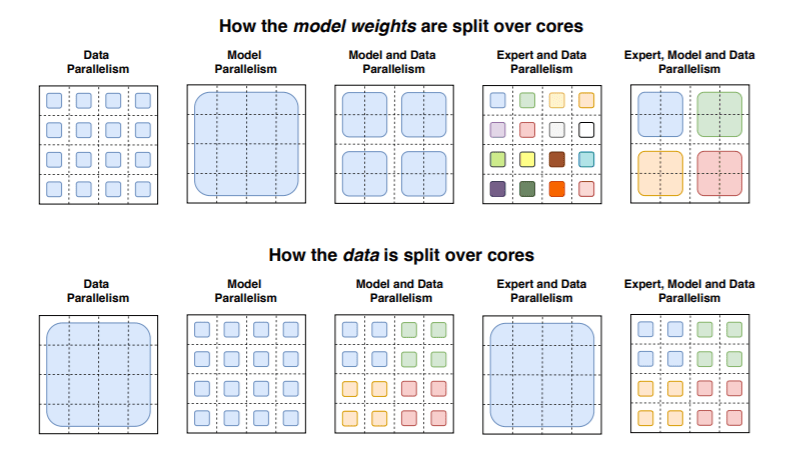

What the Switch Transformer brings to the table is that it can efficiently leverage hardware designed for dense matrix multiplications (the mathematical operations widely used in language models), such as GPUs and Google’s tensor processing units (TPUs). In the researchers’ distributed training setup, the models split unique weights across different devices. The total number of weights therefore grew with the number of devices, while the memory and computational footprint on each individual device stayed manageable.
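The scaling argument about splitting weights across devices can be made concrete with a little arithmetic. The sketch below assumes a hypothetical layout in which every device keeps a replica of the shared (non-expert) weights plus its own unique experts; the function names and parameter counts are illustrative only, not figures from the paper, and the sketch does not model the communication that sends each token to the device holding its expert.

```python
def place_experts(num_experts, num_devices):
    """Assign expert indices to devices round-robin (a hypothetical placement scheme)."""
    placement = {device: [] for device in range(num_devices)}
    for expert in range(num_experts):
        placement[expert % num_devices].append(expert)
    return placement


def footprint(num_devices, experts_per_device, params_per_expert, shared_params):
    """Return (total unique parameters, parameters held by one device).

    Shared (non-expert) weights are replicated on every device, so they count once
    toward the model total; expert weights are unique to the device that holds them.
    """
    num_experts = num_devices * experts_per_device
    total = shared_params + num_experts * params_per_expert
    per_device = shared_params + experts_per_device * params_per_expert
    return total, per_device


if __name__ == "__main__":
    # Illustrative numbers only: one expert per device.
    for devices in (8, 64, 512):
        total, per_device = footprint(devices, experts_per_device=1,
                                      params_per_expert=10_000_000,
                                      shared_params=50_000_000)
        print(f"{devices:>4} devices: {total:,} total params, {per_device:,} per device")
```

With these made-up numbers, the model total rises from 130 million to over 5 billion parameters as devices are added, while each device holds 60 million parameters throughout.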

In one of the researchers’ experiments, several different Switch Transformer models were pretrained on 32 TPU cores using the Colossal Clean Crawled Corpus, a roughly 750GB dataset of text gathered from Reddit, Wikipedia, and other websites. The researchers tasked the models with predicting missing words in passages in which 15% of the words had been concealed. They also set other challenges, such as retrieving text to answer increasingly difficult questions.
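The fill-in-the-missing-words task can be sketched as a simple word-level masking routine that hides 15% of a passage and keeps the hidden words as prediction targets. This is a simplified assumption for illustration: the `mask_words` function and the `<mask>` token are hypothetical, and T5-style training instead replaces contiguous masked spans with sentinel tokens.

```python
import random

MASK = "<mask>"  # hypothetical placeholder token


def mask_words(text, rate=0.15, seed=None):
    """Hide roughly `rate` of the words in `text`.

    Returns the masked passage and a dict mapping each hidden position to the
    original word, which serves as the prediction target during training.
    """
    words = text.split()
    if not words:
        return "", {}
    rng = random.Random(seed)
    num_to_mask = max(1, round(rate * len(words)))
    positions = rng.sample(range(len(words)), num_to_mask)
    targets = {i: words[i] for i in sorted(positions)}
    masked = [MASK if i in targets else word for i, word in enumerate(words)]
    return " ".join(masked), targets


if __name__ == "__main__":
    passage = ("the switch transformer routes each token to a single expert "
               "so that the model can grow without more compute per token")
    masked, targets = mask_words(passage, seed=0)
    print(masked)
    print(targets)
```

During training, the model would see only the masked passage and be scored on how well it recovers the words stored in `targets`.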

The researchers claim that their 1.6-trillion-parameter model with 2,048 experts, Switch-C, showed no training instability at all, in contrast with Switch-XXL, a model with fewer parameters (395 billion) and 64 experts that was sometimes unstable. However, on one benchmark, the Stanford Question Answering Dataset (SQuAD), Switch-C scored 87.7, lower than Switch-XXL’s 89.6. The researchers attributed this to the opaque relationship between fine-tuning quality, computational requirements, and the number of parameters involved.

Even so, the Switch Transformer delivered gains on a number of downstream tasks. It achieved a more than 7 times pretraining speedup while using the same amount of computational resources. The researchers also demonstrated that large sparse models could be distilled into smaller, dense models that retain about 30% of the larger model’s quality gains after fine-tuning on tasks. In one test, a Switch Transformer model trained to translate between more than 100 languages achieved a speedup of more than 4 times over a baseline model for 91% of the 101 languages.

‘Though this work has focused on extremely large models, we also find that models with as few as two experts improve performance while easily fitting within memory constraints of commonly available GPUs or TPUs. We cannot fully preserve the model quality, but compression rates of 10 to 100 times are achievable by distilling our sparse models into dense models while achieving ~30% of the quality gain of the expert model.’

Quote from the paper
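The distillation step the quote mentions, compressing a large sparse ‘teacher’ into a small dense ‘student’, is commonly trained with a soft-target loss. The sketch below is a generic version of that idea, assuming a classification-style output; the `distillation_loss` function and its `teacher_weight` mixing parameter are hypothetical illustrations, not the paper’s exact recipe.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_probs, label, teacher_weight=0.5):
    """Cross-entropy of the student against a blend of teacher probabilities and the true label.

    `teacher_weight` is a hypothetical mixing coefficient: 1.0 trains purely on the
    teacher's soft targets, 0.0 purely on the one-hot ground-truth label.
    """
    num_classes = len(student_logits)
    target = [
        teacher_weight * teacher_probs[k]
        + (1.0 - teacher_weight) * (1.0 if k == label else 0.0)
        for k in range(num_classes)
    ]
    student_probs = softmax(student_logits)
    # Skip zero-weight classes so they contribute nothing to the sum.
    return -sum(t * math.log(p) for t, p in zip(target, student_probs) if t > 0)


if __name__ == "__main__":
    teacher = [0.7, 0.2, 0.1]  # made-up teacher output for one example
    print(distillation_loss([2.0, 0.5, -1.0], teacher, label=0, teacher_weight=0.25))
```

With `teacher_weight=0.0` this reduces to ordinary cross-entropy on the label; raising it lets the dense student learn from the sparse teacher’s full output distribution rather than from the correct answer alone.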

Unfortunately, the researchers did not address how these large language models affect the real world. Such models amplify biases found in their public training data, which is often sourced from communities with gender, racial, and religious prejudices. OpenAI has noted that GPT-3 tends to place words such as ‘naughty’ or ‘sucked’ near female pronouns and ‘terrorism’ near ‘Islam’. A separate study, published in April 2020 by researchers from Intel, MIT, and CIFAR (a Canadian AI initiative), found strong stereotypical bias in some of the most popular models, including Google’s BERT and XLNet, OpenAI’s GPT-2, and Facebook’s RoBERTa. According to the Middlebury Institute of International Studies, malicious actors can exploit this bias to spread harmful and radicalising ideas.

It is unclear whether Google’s policies on published machine learning research played a role in this omission. In late 2020, Reuters reported that the company’s researchers must now consult with legal, policy, and PR teams before pursuing topics such as face and sentiment analysis and categorisations of race, gender, or political affiliation. In early December 2020, Google fired AI ethicist Timnit Gebru, reportedly in part over a research paper. That paper discussed the risks of large language models, including how their carbon footprint could affect marginalised communities and their tendency to produce offensive language, hate speech, and other harmful language targeted at specific ethnicities and groups.