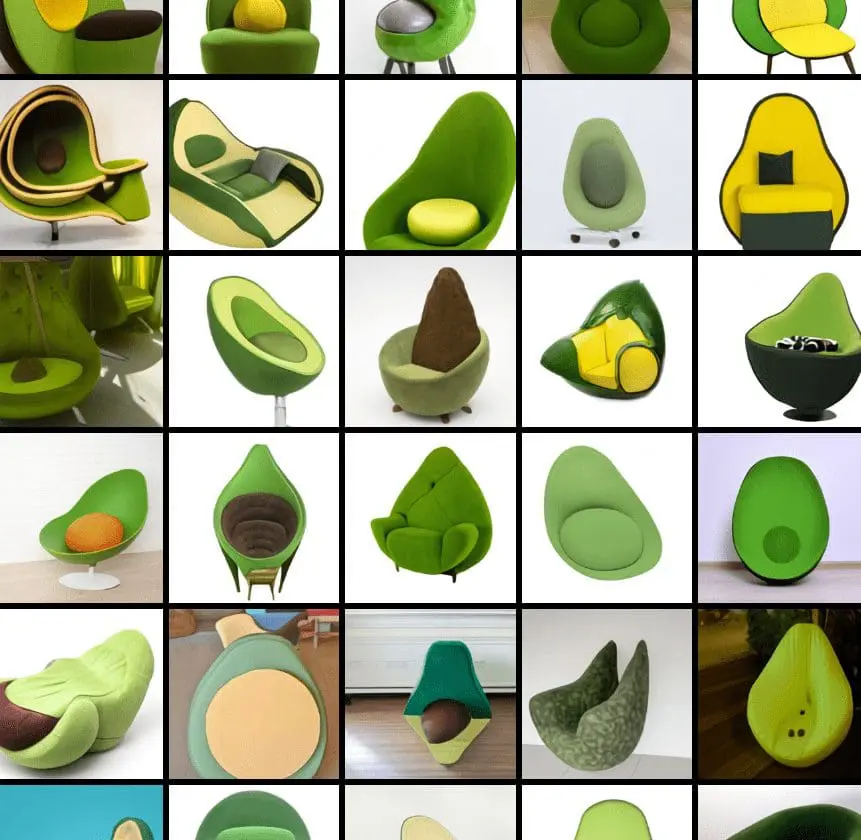

The name of the new machine learning model, DALL-E, is a combination of the artist Salvador Dalí and Pixar’s beloved robot, WALL-E. DALL-E is a 12-billion parameter version of OpenAI’s GPT-3 that specializes in generating images from text. That means users can enter a prompt, or text description, to generate an image. Type in a description such as “human avocado” and DALL-E creates an image related to that prompt.

DALL-E uses a dataset of text-image pairs to create a wide range of images, including anthropomorphized animals and objects. It can combine unrelated concepts to form plausible pictures, render text, and transform existing images. Its output falls into the category of AI-generated images.

GPT-3 showed that language can be used to instruct a large neural network to perform text generation tasks, and Image GPT showed that the same kind of network can generate high-fidelity images. With DALL-E, OpenAI aims to show that using language to manipulate visual concepts is now closer than ever.

Prompt engineering

Users can get images delivered in seconds by entering suitable text about anything, with no graphic designer and no friction. They can then refine the images based on the original prompt. The user’s role is to control the output using natural language instead of briefing a designer or graphics specialist. This is likely to create a whole host of new skills for people who work as prompt engineers.

DALL-E from OpenAI can create images for many different sentence types, which allows for exploration of language’s compositional structure. It can modify the attributes of objects, draw multiple objects at once, and combine various features, including positioning objects relative to one another and stacking them. There is a level of controllability over the positioning and attributes of some objects, but the way a caption is phrased directly affects the outcome. The more objects and features are introduced, the more likely DALL-E is to become confused. DALL-E is also brittle with respect to rephrasing: captions that mean the same thing do not always produce the correct interpretation.

Image generation models

DALL-E is a transformer language model. It receives the text and the image as a single stream of data containing up to 1280 tokens, and it is trained using maximum likelihood to generate all of the tokens, one after another. DALL-E can create images from scratch and can also regenerate regions of an existing image in a way that is consistent with the text prompt. DALL-E could significantly impact society, and OpenAI will continue investigating how DALL-E and future models will affect work processes and professions, how much bias is in the output, and what ethical concerns the technology raises.
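The single-stream, token-by-token generation described above can be illustrated with a small Python sketch. It is not OpenAI’s implementation: `toy_model` is a hypothetical stand-in for the transformer, the tiny vocabulary is chosen only for the demo, and the 256/1024 split of the 1280-token stream between caption and image is an assumption taken from OpenAI’s published description rather than from this article. The sketch covers sampling only; maximum likelihood is the objective used during training, which is not shown.

```python
import random

TEXT_BUDGET = 256    # caption tokens (assumed share of the 1280-token stream)
IMAGE_BUDGET = 1024  # image tokens (assumed: a 32 x 32 grid of image codes)
MAX_STREAM = TEXT_BUDGET + IMAGE_BUDGET  # 1280 tokens in one stream


def toy_model(stream, vocab_size):
    """Hypothetical stand-in for the transformer.

    Returns unnormalized weights for the next token given the stream so far.
    A real model would compute these with attention over `stream`.
    """
    last = stream[-1] if stream else 0
    # Favor the token that follows the previous one so output is not pure noise.
    return [3.0 if t == (last + 1) % vocab_size else 1.0
            for t in range(vocab_size)]


def generate_image_tokens(text_tokens, n_image_tokens=IMAGE_BUDGET,
                          vocab_size=16, model=toy_model, seed=0):
    """Sample image tokens one at a time, each conditioned on all prior tokens."""
    if len(text_tokens) > TEXT_BUDGET:
        raise ValueError("caption exceeds the text budget")
    if n_image_tokens > IMAGE_BUDGET:
        raise ValueError("image exceeds the image budget")
    rng = random.Random(seed)
    stream = list(text_tokens)  # text and image tokens share one stream
    for _ in range(n_image_tokens):
        weights = model(stream, vocab_size)
        next_token = rng.choices(range(vocab_size), weights=weights, k=1)[0]
        stream.append(next_token)
    return stream[len(text_tokens):]  # return only the image portion


# Demo: a made-up three-token caption followed by eight sampled image tokens.
image_tokens = generate_image_tokens([5, 9, 2], n_image_tokens=8)
print(len(image_tokens))  # 8
```

Because the caption tokens sit at the start of the same stream, every sampled image token is conditioned on the text as well as on the image tokens generated before it.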

AI art generator

DALL-E can render scenes from different viewpoints and apply distortions to them, which led the researchers to explore how it generates reflections. Macro photos and other ways of visualizing objects are also possible.

DALL-E can understand some implied context, such as sunlight necessitating shadows, even when it is not explicitly mentioned. In this respect it functions almost like a 3D rendering engine such as Unity, setting up suitable lighting conditions without every single detail having to be stated in the caption.

The DALL-E model can even generate designs for fashion and furniture.

Because language can describe both the real and the imaginary, DALL-E can create objects that do not exist in real life. Combining unrelated concepts does not prevent it from generating interesting images, including anthropomorphized animals and objects, hybrid animals, and even emojis.

GPT-3 can perform a task when given only a description and a cue to answer in a specific way. Given a phrase such as “here is the sentence ‘a person walking his dog in the park’ translated into French:”, GPT-3 will answer in French: ‘un homme qui promène son chien dans le parc’. This is called zero-shot reasoning. DALL-E can do the same with the images it generates if it is prompted appropriately.
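The description-plus-cue structure of a zero-shot prompt can be sketched in a few lines of Python. The helper name `build_zero_shot_prompt` is hypothetical and exists only for illustration. No model is called here; the point is that the prompt contains no worked examples, only a task description and a cue for the answer.

```python
def build_zero_shot_prompt(task_description: str, cue: str) -> str:
    """Join a task description and an answer cue into a single prompt.

    Hypothetical helper: a zero-shot prompt includes no solved examples,
    so the model must infer the task from the wording alone.
    """
    return f"{task_description.strip()} {cue.strip()}"


prompt = build_zero_shot_prompt(
    "Here is the sentence 'a person walking his dog in the park'",
    "translated into French:",
)
print(prompt)
# Here is the sentence 'a person walking his dog in the park' translated into French:
```

A few-shot prompt would differ only in that solved examples are placed before the final cue.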

OpenAI did not anticipate many of these capabilities. It tested DALL-E’s aptitude for analogical reasoning problems using Raven’s progressive matrices, a visual IQ test that was widely used in the 20th century. DALL-E also knows geographical facts and can depict objects from different periods. Read more from OpenAI, and see the profile of OpenAI co-founder Sam Altman.