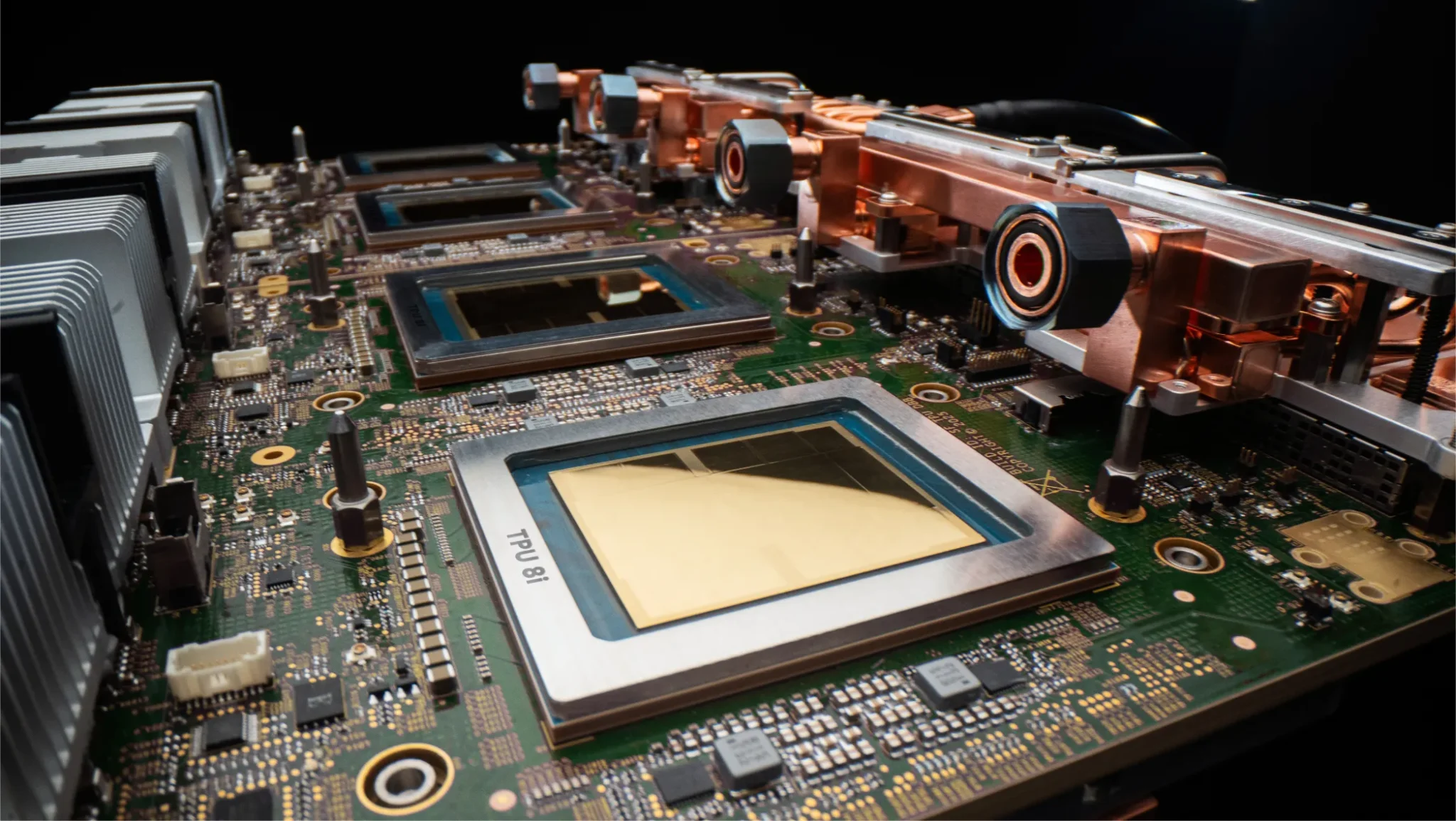

Google is releasing two distinct chips, the TPU 8t and TPU 8i, a move that differs from many companies focusing on single, all-purpose processors. These eighth-generation Tensor Processing Units are custom-engineered to address the specific demands of computationally intensive AI model training and the speed-critical inference needed for responsive applications. According to Amin Vahdat, SVP and Chief Technologist, AI and Infrastructure, the launch represents the culmination of a decade of development, emphasizing a long-term strategy for substantial advancement. Designed in partnership with Google DeepMind, the chips are purpose-built to support fast, collaborative AI agents and adapt to evolving model architectures, signaling a commitment to optimizing the entire AI lifecycle.

TPU 8t Accelerates Frontier Model Training at Scale

A single TPU 8t superpod now scales to 9,600 chips and two petabytes of shared high-bandwidth memory, a substantial leap in the capabilities of Google’s AI supercomputing hardware. The eighth generation of Google’s Tensor Processing Units, comprising the TPU 8t and TPU 8i, represents more than an incremental upgrade, according to the company; it is the culmination of over a decade of development. The TPU 8t is engineered specifically for training and is designed to dramatically reduce the time required to develop leading-edge AI models. Google asserts that the new system delivers nearly three times the compute performance per pod compared to the previous generation, enabling faster innovation and helping its customers maintain a competitive edge.
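As a rough sanity check on the superpod figures above, the shared memory can be divided evenly across the chips. This is only a back-of-the-envelope sketch: it assumes decimal petabytes and an even split, and the two-petabyte figure is itself rounded, so the result is an approximation and not a published per-chip specification.

```python
# Back-of-the-envelope division of the quoted superpod figures.
# Assumptions (not from the announcement): decimal units, even split per chip.
CHIPS_PER_SUPERPOD = 9_600
SHARED_HBM_BYTES = 2 * 10**15  # "two petabytes", taken as 2e15 bytes


def hbm_per_chip_gb(total_bytes: int, chips: int) -> float:
    """Average high-bandwidth memory per chip, in decimal gigabytes."""
    if chips <= 0:
        raise ValueError("chips must be positive")
    return total_bytes / chips / 10**9


per_chip_gb = hbm_per_chip_gb(SHARED_HBM_BYTES, CHIPS_PER_SUPERPOD)
print(f"Approximate HBM per chip: {per_chip_gb:.0f} GB")
```

Under these assumptions the average works out to a little over 200 GB per chip.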

This performance boost is achieved through a combination of increased compute throughput, expanded scale-up bandwidth, and a focus on power efficiency. The integration of ten times faster storage access, utilizing TPUDirect to directly feed data into the TPU, ensures maximum system utilization. The system is engineered to target over 97% “goodput”, a measure of productive compute time, through comprehensive Reliability, Availability and Serviceability (RAS) capabilities, including real-time telemetry and automatic fault rerouting.

Complementing the TPU 8t is the TPU 8i, tailored for low-latency inference and designed to support fast, collaborative AI agents. This specialization acknowledges a shift in AI applications towards more interactive and agent-driven systems, where responsiveness is paramount. The TPU 8i tackles the challenge of processor idleness by pairing 288 GB of high-bandwidth memory with 384 MB of on-chip SRAM, three times more than the previous generation, effectively keeping a model’s active working set entirely on the chip.
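To put the 97% goodput target in concrete terms, the sketch below converts a goodput fraction into hours of lost training time. The 30-day run length and the 90% comparison value are hypothetical numbers chosen for illustration; only the 97% target comes from the text above.

```python
# Illustrative only: converts a goodput fraction into lost wall-clock hours.
# RUN_DAYS and the 0.90 baseline are hypothetical values, not Google figures.
RUN_DAYS = 30


def lost_hours(run_days: float, goodput: float) -> float:
    """Hours of a run not spent on productive training."""
    if not 0.0 <= goodput <= 1.0:
        raise ValueError("goodput must be a fraction between 0 and 1")
    return run_days * 24 * (1.0 - goodput)


for goodput in (0.90, 0.97):
    hours = lost_hours(RUN_DAYS, goodput)
    print(f"goodput {goodput:.0%}: about {hours:.1f} hours lost in {RUN_DAYS} days")
```

On a 30-day run, moving from the hypothetical 90% to the targeted 97% would cut unproductive time from about 72 hours to roughly 21.6 hours.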

Google also doubled the physical CPU hosts per server, transitioning to custom Axion Arm-based CPUs and employing a non-uniform memory architecture (NUMA) for optimized performance. These innovations collectively enhance the TPU 8i’s ability to handle modern Mixture of Experts (MoE) models. On the training side, the TPU 8t superpod architecture delivers 121 ExaFlops of compute and allows the most complex models to leverage a single, massive pool of memory. Both chips are built on a foundation of co-design, where hardware, networking, and software are meticulously integrated with model architecture and application requirements. This holistic approach extends to power efficiency, a critical consideration in modern data centers: integrated power management dynamically adjusts power draw based on real-time demand, supporting sustainable and scalable AI infrastructure.

TPU 8i Optimizes Low-Latency Inference for AI Agents

Artificial intelligence is rapidly shifting from static models to dynamic, interactive agents. Existing hardware, while capable of handling the immense computational demands of training these models, often struggles with the low-latency requirements of real-time inference, the process of applying a trained model to new data. This bottleneck impacts the responsiveness of AI agents, hindering their ability to engage in fluid, collaborative interactions. Therefore, a growing need exists for specialized infrastructure optimized not just for raw processing power, but for minimizing delays in delivering results, a challenge Google addresses with its newly unveiled TPU 8i. Google’s eighth-generation Tensor Processing Units represent a departure from the traditional approach of single, all-purpose AI accelerators. The design philosophy behind these chips isn’t merely about increasing speed; it’s about fundamentally reshaping the infrastructure to accommodate the unique demands of these emerging AI paradigms.

A critical innovation within the TPU 8i is its expanded memory architecture. This addresses the “memory wall” that often plagues inference tasks, where processors spend valuable time waiting for data. The advancements extend beyond memory and processing, resulting in a system designed to not only accelerate inference but to do so with significantly improved efficiency, a crucial consideration as power consumption becomes an increasingly important constraint in data centers.

By customizing and co-designing silicon with hardware, networking and software, including model architecture and application requirements, we can deliver dramatically more power efficiency and absolute performance.

Virgo Network & RAS Enable Near-Linear TPU 8t Scaling

While the TPU 8t focuses on accelerating model training and the TPU 8i on low-latency inference, a critical, less visible component underpinning both chips’ performance is the newly developed Virgo Network. This interconnect fabric, combined with robust Reliability, Availability, and Serviceability (RAS) capabilities, allows the TPU 8t to achieve what Google describes as “near-linear scaling” for up to a million chips in a single logical cluster, a feat previously unattainable at this scale. The pursuit of scale isn’t merely about adding more processors; it’s about maintaining efficiency as systems grow exponentially more complex. Google’s engineers recognized that traditional interconnects would become bottlenecks, hindering communication between chips and diminishing returns on investment. The Virgo Network addresses this by providing a high-bandwidth, low-latency pathway for data exchange, enabling the TPU 8t to leverage a massive pool of shared memory, now two petabytes per superpod, without significant performance degradation.

This architecture delivers 121 ExaFlops of compute, allowing even the most complex models to benefit from the increased processing power. However, sheer computational capacity is insufficient without addressing the inevitability of hardware failures. “Every hardware failure, network stall or checkpoint restart is time the cluster is not training,” explains Google, highlighting the importance of minimizing downtime. The TPU 8t incorporates a comprehensive suite of RAS features, including real-time telemetry monitoring tens of thousands of chips, automatic detection and rerouting around faulty interconnect links, and Optical Circuit Switching (OCS) that dynamically reconfigures hardware without human intervention. The Virgo Network and RAS features aren’t isolated innovations; they are deeply integrated with Google’s software stack, including JAX and Pathways. This co-design approach, where hardware and software are developed in tandem, is central to Google’s strategy, allowing the TPU 8t to not only scale to an unprecedented number of chips but also to maintain consistent performance and reliability throughout the entire system, paving the way for increasingly ambitious AI workloads.
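"Near-linear scaling" is commonly quantified as scaling efficiency: the observed speedup divided by the ideal speedup implied by the increase in chip count. The sketch below shows that calculation. The function name and all measurement values are hypothetical, and nothing here reflects benchmark data published by Google.

```python
# Minimal sketch of a scaling-efficiency calculation.
# All throughput numbers below are made up for illustration.


def scaling_efficiency(
    base_chips: int,
    base_throughput: float,
    chips: int,
    throughput: float,
) -> float:
    """Observed speedup divided by ideal (linear) speedup; 1.0 is perfectly linear."""
    if min(base_chips, chips) <= 0 or base_throughput <= 0:
        raise ValueError("chip counts and base throughput must be positive")
    observed_speedup = throughput / base_throughput
    ideal_speedup = chips / base_chips
    return observed_speedup / ideal_speedup


# Hypothetical measurement: 4x the chips yields 3.8x the training throughput.
efficiency = scaling_efficiency(
    base_chips=1_000, base_throughput=1.0, chips=4_000, throughput=3.8
)
print(f"Scaling efficiency: {efficiency:.0%}")
```

A value close to 100% as chips are added is what "near-linear" describes; interconnect bottlenecks and failure-related downtime are the usual reasons the figure falls below that.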

Both chips will be generally available later this year, and can be used as part of Google’s AI Hypercomputer, which brings together purpose-built hardware (compute, storage, networking), open software (frameworks, inference engines), and flexible consumption (orchestration, cluster management and delivery models) into a unified stack.

Citadel Securities Leverages 8th Generation TPUs for AI Workloads

Citadel Securities, a leading market maker, is among the first to deploy Google’s eighth-generation Tensor Processing Units (TPUs), signaling a growing reliance on specialized AI hardware to maintain competitive advantages in high-frequency trading and complex financial modeling. The move highlights a shift beyond simply accelerating existing algorithms; instead, firms like Citadel are leveraging the new capabilities to explore entirely new approaches to market analysis and execution. The new chips are not merely incremental improvements; the architecture has been fundamentally rethought to support models that reason through problems, execute multi-step workflows and learn from their own actions in continuous loops. This focus on agentic AI is particularly relevant for Citadel, where rapid, autonomous decision-making is paramount. The firm’s adoption of the technology suggests an intention to build more sophisticated, adaptive trading systems capable of navigating increasingly volatile and complex markets. Specifically, the TPU 8t is engineered to dramatically reduce model development cycles.

Google claims it can cut the time from months to weeks, a critical advantage in a fast-moving financial landscape. “By also integrating 10x faster storage access, combined with TPUDirect to pull data directly into the TPU,” Google states, “TPU 8t helps ensure maximum utilization of the end-to-end system.” While the 8t focuses on training, the TPU 8i is optimized for low-latency inference, essential for real-time trading decisions. “In the agentic era, users expect to be able to ask questions, delegate tasks and get outcomes,” Google explains, and the TPU 8i is designed to facilitate this level of responsiveness.
