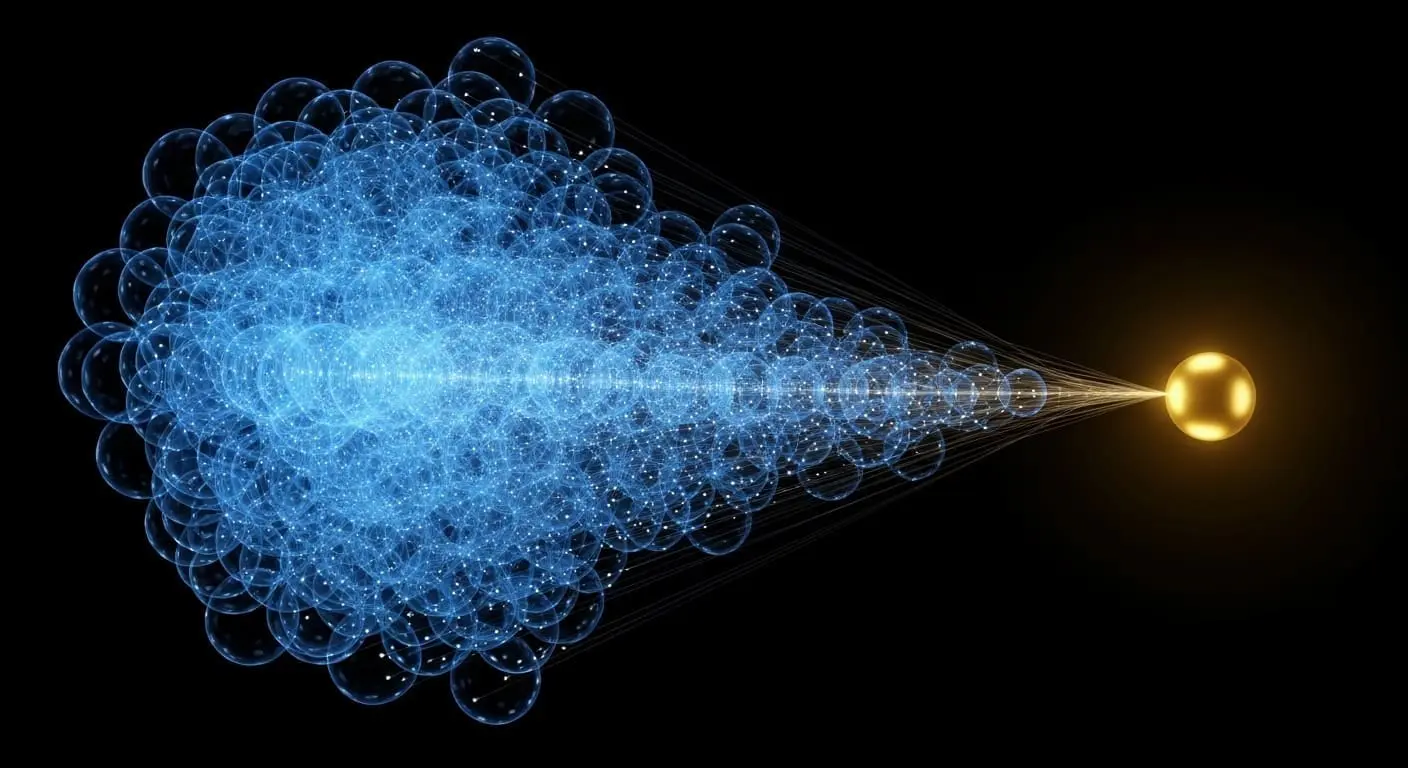

Researchers at Harvard University, Chirag Wadhwa and Sitan Chen, have detailed an approach to quantum state certification that significantly reduces the entanglement previously considered essential. Their protocol achieves optimal testing rates while measuring fewer copies of the quantum state at once, an advance that matters most when high precision is required. By employing reductions from testing to learning and drawing on recent progress in quantum state tomography, the authors establish both upper and lower bounds on the complexity of this fundamental task, a step towards more practical quantum technologies.

Reduced quantum state certification copies unlock practical high-precision verification

Entanglement requirements for quantum state certification are now reduced to $d^2$ copies, down from the previously established $O(d/ε^2)$ copies. This makes high-precision verification of quantum states practical, a task previously considered intractable even for low-dimensional systems because of its demands on entanglement resources. The new protocol uses reductions from testing to learning and advances in quantum state tomography, achieving a near-optimal rate while deliberately limiting entangled measurements to fewer copies at once. The parameter ‘d’ denotes the dimensionality of the quantum state, while ‘ε’ represents the acceptable error margin in the certification process.
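To make the two scalings concrete, the following is a minimal arithmetic sketch rather than anything taken from the paper. It assumes all constant factors hidden by the $O(\cdot)$ notation equal 1, and the function names and sample values of ‘d’ and ‘ε’ are illustrative only.

```python
def copies_full_entanglement(d: int, eps: float) -> int:
    """Copies needed under the earlier O(d / eps^2) scaling.

    Assumption: the hidden constant factor is taken to be 1.
    """
    return round(d / eps**2)


def copies_limited_entanglement(d: int) -> int:
    """Copies needed under the d^2 scaling (constant again taken to be 1)."""
    return d**2


# Illustrative (d, eps) pairs; not values reported by the authors.
for d, eps in [(4, 0.1), (4, 0.01), (16, 0.01), (16, 0.5)]:
    full = copies_full_entanglement(d, eps)
    limited = copies_limited_entanglement(d)
    smaller = "d^2" if limited < full else "d/eps^2"
    print(f"d={d:<3} eps={eps:<5} d/eps^2={full:<8} d^2={limited:<5} smaller: {smaller}")
```

Under this simplification, $d^2$ is the smaller quantity exactly when $ε < 1/\sqrt{d}$, which is the high-precision regime the article emphasises.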

The significance of this reduction lies in the practical limitations of generating and maintaining entanglement. Entangled states are fragile and susceptible to decoherence, meaning their quantum properties degrade over time due to interaction with the environment. The fewer entangled particles required, the easier it is to build and operate a reliable quantum system. Previously, the $O(d/ε^2)$ scaling meant that even for moderately sized quantum states (relatively small values of ‘d’) and demanding precision requirements (small values of ‘ε’), the number of entangled copies needed would quickly become prohibitive. The new method, scaling with $d^2$, offers a much more manageable resource requirement. It makes more efficient characterisation and calibration of quantum devices possible, and the work also establishes both upper and lower bounds on the complexity of this fundamental task.

The results extend beyond certification, a vital process for verifying the accuracy of quantum devices, to algorithms for mixedness testing and purity estimation, both of which achieve optimal rates with $d^2$ copies. Mixedness testing determines the proximity of a quantum state to the completely mixed state, which represents maximal randomness, while purity estimation measures a state's degree of mixedness, indicating how far it is from a pure, well-defined quantum state. These algorithms offer tools to assess quantum state quality beyond simple verification, giving a more complete picture of a state's characteristics. Understanding mixedness and purity allows for better error mitigation strategies and optimisation of quantum operations.
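The quantities behind mixedness and purity can be illustrated with a short sketch. It computes them directly from a known eigenvalue spectrum, which is an assumption made for illustration: the algorithms in the paper must estimate such quantities from measurements on copies of an unknown state. The function names are hypothetical. The purity is $\mathrm{Tr}(ρ^2)$, and the trace distance to the completely mixed state $I/d$ depends only on the eigenvalues of $ρ$.

```python
def purity(spectrum):
    """Tr(rho^2) from the eigenvalues of rho.

    Equals 1 for a pure state and 1/d for the completely mixed state.
    """
    return sum(p * p for p in spectrum)


def distance_to_completely_mixed(spectrum):
    """Trace distance (1/2)||rho - I/d||_1, computed from the eigenvalues of rho.

    This shortcut is valid because I/d commutes with every state.
    """
    d = len(spectrum)
    return 0.5 * sum(abs(p - 1.0 / d) for p in spectrum)


d = 4
pure = [1.0, 0.0, 0.0, 0.0]                 # spectrum of a pure state
completely_mixed = [1.0 / d] * d            # spectrum of I/d
noisy = [0.9 * p + 0.1 / d for p in pure]   # pure state with 10% depolarising noise

for name, spectrum in [("pure", pure), ("noisy", noisy), ("mixed", completely_mixed)]:
    print(name, purity(spectrum), distance_to_completely_mixed(spectrum))
```

In this toy setting, a mixedness test would ask whether the second quantity is zero or at least ‘ε’, and purity estimation would target the first.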

Quantum state tomography, the process of reconstructing an unknown quantum state from a series of measurements, plays a crucial role in this new approach. Traditional tomography often requires many measurements and entangled states to achieve high fidelity. The researchers have refined these techniques to extract the necessary information with fewer resources, effectively reducing the complexity of the certification process. The reduction from testing to learning involves framing the state certification problem as a statistical learning problem, where the goal is to distinguish between the target state and states that are ε-far from it. This allows the application of techniques from statistical learning theory to establish bounds on the number of samples (quantum state copies) needed for accurate certification.
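The general idea of reducing testing to learning can be sketched with a toy single-qubit example. This is not the authors' protocol: it uses simulated, unentangled single-copy Pauli measurements, and every name, shot count, and threshold below is an assumption chosen for illustration. The sketch learns an estimate of the unknown state, then accepts if the estimate lies within ε/2 of the target. If the learning error is below ε/2, a state equal to the target is accepted and a state ε-far from it is rejected.

```python
import math
import random


def estimate_bloch_vector(bloch, shots, rng):
    """Toy qubit tomography: estimate each Bloch component from simulated
    Pauli X, Y, Z measurements, where outcome +1 has probability (1 + r_k) / 2."""
    estimate = []
    for component in bloch:
        p_plus = (1.0 + component) / 2.0
        plus_count = sum(1 for _ in range(shots) if rng.random() < p_plus)
        estimate.append(2.0 * plus_count / shots - 1.0)
    return estimate


def qubit_trace_distance(r, s):
    """For qubits, trace distance is half the Euclidean distance between Bloch vectors."""
    return 0.5 * math.sqrt(sum((a - b) ** 2 for a, b in zip(r, s)))


def certify_by_learning(unknown_bloch, target_bloch, eps, shots=20000, seed=0):
    """Learn-then-compare test: accept if the estimate is within eps/2 of the target."""
    rng = random.Random(seed)
    estimate = estimate_bloch_vector(unknown_bloch, shots, rng)
    return qubit_trace_distance(estimate, target_bloch) <= eps / 2.0


target = [0.0, 0.0, 1.0]      # Bloch vector of |0><0|
far_state = [0.0, 0.0, 0.6]   # trace distance 0.2 from the target

print(certify_by_learning(target, target, eps=0.1))
print(certify_by_learning(far_state, target, eps=0.1))
```

Learning the full state is generally more expensive than testing it, which is why the copy and entanglement bounds discussed in the article matter.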

Reduced entanglement requirements enhance high-precision quantum system verification

Verification methods for quantum states are steadily improving, a key step towards building reliable quantum computers and communication networks. Because creating and maintaining entanglement is resource-intensive, reducing the amount required eases practical implementation. The new work moves beyond previous methods that demanded extensive entanglement between quantum particles. By deliberately limiting the scope of entanglement needed for measurements, the protocol achieves near-optimal testing rates using only $d^2$ copies of the quantum state, where ‘d’ represents its dimensionality. This streamlines the certification process and opens the way to more efficient characterisation of quantum devices, and the work also establishes a lower bound on the complexity of this task. The implications extend to various quantum technologies, including quantum key distribution, where verifying the integrity of transmitted quantum states is crucial for secure communication, and quantum sensing, where accurate state preparation and verification are essential for achieving high sensitivity.

The ability to certify quantum states with fewer resources has a direct impact on the scalability of quantum systems. As quantum computers grow in size and complexity, the demands on entanglement resources will increase dramatically. This approach offers a way to ease those demands, supporting the construction of larger and more powerful quantum devices. Furthermore, the established upper and lower bounds on the complexity of quantum state certification provide a theoretical foundation for future research in this area, guiding the development of even more efficient verification protocols. The $d^2$ scaling improves on the previous $O(d/ε^2)$ scaling when precision requirements are stringent: ignoring constant factors, $d^2$ is smaller than $d/ε^2$ once $ε < 1/\sqrt{d}$. This allows for more frequent and reliable verification, leading to improved performance and stability of quantum systems. The work contributes to the broader field of quantum information science by addressing a fundamental challenge in quantum technology and paving the way for more practical and scalable quantum applications.

Researchers demonstrated a new method for verifying quantum states, achieving near-optimal performance with only $d^2$ copies of the state, where ‘d’ represents the state’s dimensionality. This represents an improvement over previous methods requiring $O(d/ε^2)$ copies, particularly for high-dimensional states needing precise verification. The reduced entanglement needed for measurements streamlines the certification process and facilitates more efficient characterisation of quantum devices. These findings establish a theoretical foundation for future improvements in quantum verification protocols and contribute to the scalability of quantum systems.

👉 More information

🗞 Optimal Quantum State Testing Even with Limited Entanglement

🧠 ArXiv: https://arxiv.org/abs/2604.07460