Assessing complexity in discrete-variable quantum systems has historically been challenging. Shuangshuang Fu of the University of Science and Technology Beijing, working with the University of Chinese Academy of Sciences, and colleagues have proposed a new information-theoretic quantifier of complexity within the stabilizer formalism of quantum computation. The approach uses the interplay between classical and quantum properties, quantified via the $L^4$-norm, to relate the complexity of a quantum state to how far it deviates from a simple, easily simulated ‘stabilizer state’. This provides a more refined assessment of quantum state complexity, building on established techniques within quantum computation.

The measure assesses complexity by determining how far a quantum state strays from the ‘stabilizer states’, which are relatively simple to simulate on conventional computers. Understanding this deviation is vital for evaluating the capabilities and limitations of emerging quantum computers. The research focuses on discrete-variable quantum systems, whose Hilbert spaces are finite-dimensional but where assessing complexity has nonetheless been difficult because of the challenges in representing quantum information effectively. The team’s approach is built within the ‘stabilizer formalism’, a standardised framework for describing and manipulating qubits in terms of Pauli operators and their tensor products. The deviation from stabilizer states, termed ‘nonstabilizerness’, is akin to gauging how far a wobbly tower of blocks is from standing straight; a higher degree of nonstabilizerness indicates a more complex, and potentially more powerful, quantum state. The stabilizer formalism is particularly useful because quantum circuits composed of Clifford gates can be simulated efficiently on classical computers, making it a natural framework for defining and quantifying complexity relative to classical simulability.
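The role of Clifford gates can be illustrated with a minimal single-qubit sketch. It relies on the standard characterisation that a Clifford gate maps every Pauli operator to a Pauli operator (up to sign) under conjugation. The helper names `pauli_image` and `is_clifford` are hypothetical, chosen only for this illustration, and the check is a toy one for a single qubit rather than a general-purpose test.

```python
import numpy as np

# Single-qubit Pauli matrices.
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
PAULIS = {"I": I2, "X": X, "Y": Y, "Z": Z}

# Two Clifford gates (Hadamard, phase) and one non-Clifford gate (T).
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
S = np.diag([1, 1j]).astype(complex)
T = np.diag([1, np.exp(1j * np.pi / 4)]).astype(complex)


def pauli_image(gate, pauli):
    """Return (sign, label) if gate @ pauli @ gate^dagger is +/- a Pauli, else None."""
    conjugated = gate @ pauli @ gate.conj().T
    for label, p in PAULIS.items():
        for sign in (1, -1):
            if np.allclose(conjugated, sign * p):
                return sign, label
    return None


def is_clifford(gate):
    """Hypothetical helper: True if the gate maps both X and Z to +/- Paulis."""
    return all(pauli_image(gate, p) is not None for p in (X, Z))


for name, gate in (("H", H), ("S", S), ("T", T)):
    print(name, "Clifford:", is_clifford(gate))
```

Because Clifford gates only shuffle Pauli operators among themselves, a classical simulator can track that bookkeeping instead of the full state vector. The T gate sends X to a superposition of X and Y, which is where that shortcut stops working.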

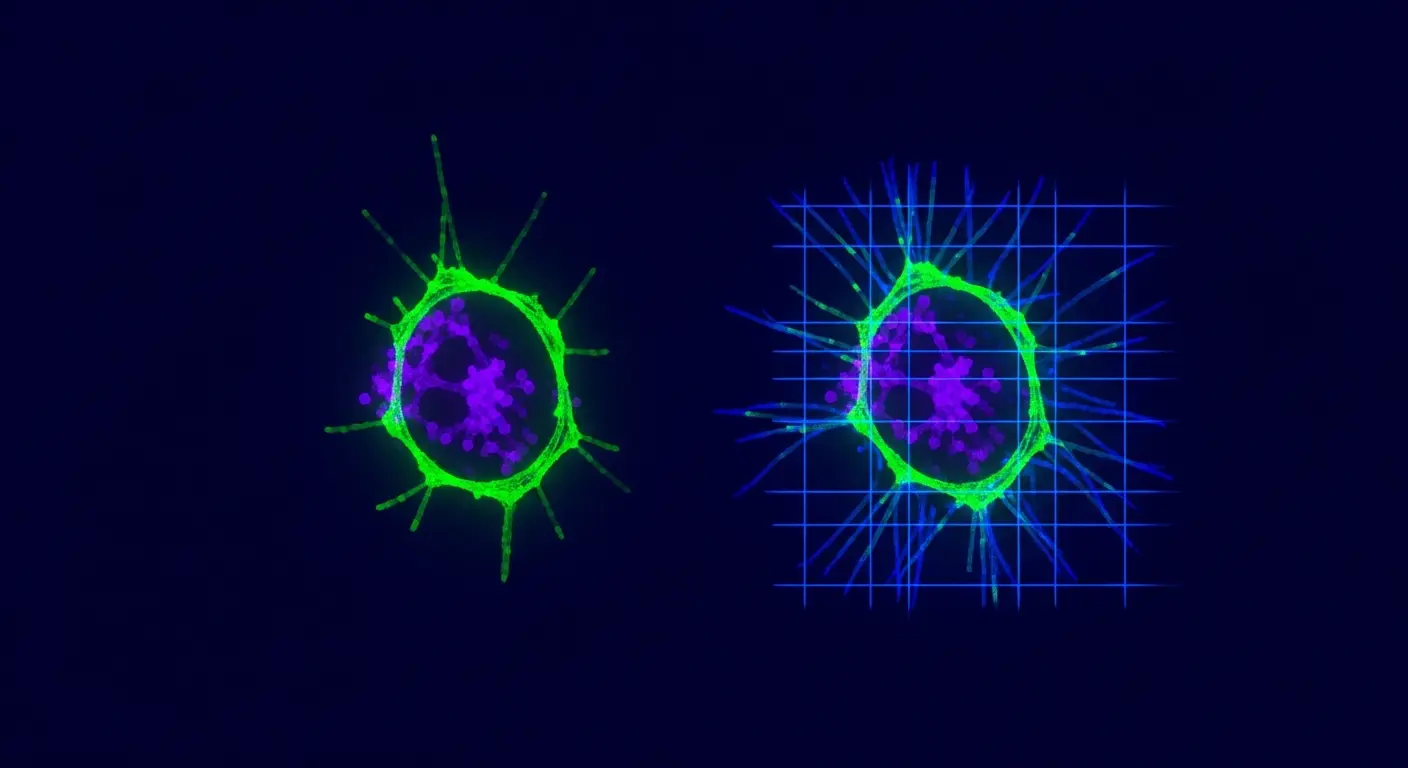

Quantifying discrete quantum states reveals increased complexity approaching SIC-POVM limits

Complexity measurements for discrete-variable quantum systems have improved, with the new quantifier achieving a maximum value of $d^2 - 2d/(d+1)$. This represents a substantial increase over previous limits of $d^2 - d$, where $d$ is the dimension of the Hilbert space. Specifically, this threshold allows characterisation of states previously inaccessible to quantification: those approaching the theoretical limit of complexity represented by SIC-POVM fiducial states, whose existence in every dimension remains an open question in quantum information theory. SIC-POVMs (symmetric informationally complete positive operator-valued measures) are built from sets of $d^2$ vectors in a $d$-dimensional Hilbert space with equal pairwise overlaps, making them as mutually dissimilar as possible, and they are considered well suited to measurement tasks such as state estimation. The ability to approach characterisation of these states is significant, as they are believed to represent a fundamental upper bound on the complexity of quantum states. Utilising Jordan and Lie products, the approach reveals a strong link between quantum state complexity and ‘nonstabilizerness’, the degree to which a state deviates from the easily simulated stabilizer states, which are akin to classical bits. The symmetric Jordan product is associated with classicality, while the skew-symmetric Lie product is linked to quantumness; their combination provides a nuanced measure of the quantum character of a state. Stabilizer states represent the least complex pure quantum states, while SIC-POVM fiducial states appear to represent the most complex, offering a clear benchmark for comparison.
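A short calculation suggests where the two values quoted above, read here as $d^2 - 2d/(d+1)$ and $d^2 - d$, may come from. It rests on two assumptions that are not stated in the text: that the characteristic function of a state $\rho$ is $c_\rho(k,l) = \operatorname{tr}(\rho D_{k,l})$ over the $d^2$ Heisenberg-Weyl operators $D_{k,l}$, and that the quantifier takes the illustrative form $d^2 - \sum_{k,l} |c_\rho(k,l)|^4$. Neither should be read as the authors' definition.

$$
\text{Stabilizer state: } |c_\rho(k,l)| = 1 \text{ for } d \text{ operators and } 0 \text{ otherwise, so } \sum_{k,l} |c_\rho(k,l)|^4 = d .
$$

$$
\text{SIC-POVM fiducial state: } c_\rho(0,0) = 1,\quad |c_\rho(k,l)|^2 = \frac{1}{d+1} \text{ otherwise, so } \sum_{k,l} |c_\rho(k,l)|^4 = 1 + \frac{d^2 - 1}{(d+1)^2} = \frac{2d}{d+1} .
$$

Under the assumed form, these sums give $d^2 - d$ for stabilizer states and $d^2 - 2d/(d+1)$ for SIC-POVM fiducial states, consistent with stabilizer states sitting at the low end of the scale and fiducial states at the high end.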

This correlation between complexity and simulability offers a valuable new tool for assessing quantum systems, though the analysis currently applies only to pure states. This limitation is due to the mathematical structure of the chosen approach, which relies on the square root of the quantum state being well-defined. Extending the quantifier to mixed states requires further investigation and potentially different mathematical tools. The work also does not yet demonstrate a clear pathway towards optimising real-world quantum computations or addressing decoherence, which remains a significant hurdle in building practical quantum computers. The new quantifier links state complexity to ‘nonstabilizerness’, effectively measuring how difficult a state is to replicate using conventional computing methods; a higher value indicates greater complexity and potentially enhanced computational power. Further investigation focuses on the mathematical framework underpinning this relationship, specifically the use of the $L^4$-norm to relate state complexity to nonstabilizerness, a method that differs from previous approaches, which often rely on entanglement measures or other resource-based quantifiers. Establishing this connection represents significant progress, offering a means to assess the resources required for computation and the limits of simulation, both key to building more powerful quantum computers and accurately predicting their capabilities. The $L^4$-norm of a state’s characteristic function thus serves as a clear, quantifiable indicator of how far the state lies from the stabilizer states.
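As a rough numerical illustration of an $L^4$-type quantity, the sketch below sums the fourth powers of the moduli of a pure state's expectation values over the $d^2$ shift-and-clock (Heisenberg-Weyl) operators. The choice of operators, the omission of their phase conventions, and the function names `heisenberg_weyl` and `l4_sum` are assumptions made for illustration; the authors' exact normalisation may differ.

```python
import numpy as np


def heisenberg_weyl(d):
    """Return the d*d operators X^k Z^l (phase factors omitted; only moduli are used)."""
    omega = np.exp(2j * np.pi / d)
    shift = np.roll(np.eye(d, dtype=complex), 1, axis=0)  # X|j> = |j+1 mod d>
    clock = np.diag(omega ** np.arange(d))                # Z|j> = omega^j |j>
    return [
        np.linalg.matrix_power(shift, k) @ np.linalg.matrix_power(clock, l)
        for k in range(d)
        for l in range(d)
    ]


def l4_sum(psi):
    """Sum of |<psi| X^k Z^l |psi>|^4 over all d^2 operators, for a pure state psi."""
    psi = np.asarray(psi, dtype=complex)
    psi = psi / np.linalg.norm(psi)
    values = [np.vdot(psi, op @ psi) for op in heisenberg_weyl(len(psi))]
    return float(np.sum(np.abs(values) ** 4))


# A qutrit stabilizer state (a computational basis state).
stabilizer_qutrit = [1, 0, 0]

# A qubit SIC-POVM fiducial state: Bloch vector (1, 1, 1) / sqrt(3).
theta = np.arccos(1 / np.sqrt(3))
sic_qubit = [np.cos(theta / 2), np.exp(1j * np.pi / 4) * np.sin(theta / 2)]

print(l4_sum(stabilizer_qutrit))  # equals d = 3 up to rounding
print(l4_sum(sic_qubit))          # equals 2d/(d+1) = 4/3 up to rounding
```

The stabilizer state concentrates its weight on a few operators, giving a large sum, while the fiducial state spreads it evenly, giving the smallest sum; any quantifier that decreases with this sum would therefore rank the fiducial state as more complex.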

Linking quantum state complexity to computational tractability

Quantifying complexity in quantum systems is vital to realising the full potential of quantum technologies, offering a means to assess the resources required for computation and the limits of simulation. This work introduces a novel method to assess the complexity of quantum states, building on the ‘stabilizer formalism’, a framework for describing how qubits are manipulated. The formalism allows for the efficient description of quantum states and operations that can be implemented using Clifford gates, a subset of quantum gates that can be simulated efficiently on classical computers. By combining mathematical descriptions of classical and quantum characteristics, the research quantifies how much a quantum state deviates from simple, easily simulated states. This deviation is crucial because states that are far from being stabilizer states are likely to require significantly more resources to prepare and manipulate, and may offer advantages in terms of computational power. The result is a key step towards understanding the capabilities of quantum computers. Understanding this relationship is essential for developing algorithms that can effectively harness the power of quantum mechanics and for designing quantum hardware that can efficiently implement these algorithms. The ability to quantify complexity also allows for a more informed comparison between different quantum states and algorithms, facilitating the development of more efficient and powerful quantum technologies.
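The distinction between Clifford and non-Clifford operations can be seen in a small single-qubit sketch. It tracks the sum of fourth powers of the Pauli expectation values, used here only as an illustrative proxy and not as the authors' quantifier; the name `pauli_l4_sum` is hypothetical.

```python
import numpy as np

# Identity and the three Pauli matrices.
PAULIS = [
    np.eye(2, dtype=complex),
    np.array([[0, 1], [1, 0]], dtype=complex),
    np.array([[0, -1j], [1j, 0]], dtype=complex),
    np.array([[1, 0], [0, -1]], dtype=complex),
]
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)  # Clifford gate
T = np.diag([1, np.exp(1j * np.pi / 4)]).astype(complex)     # non-Clifford gate


def pauli_l4_sum(psi):
    """Sum of |<psi|P|psi>|^4 over P in {I, X, Y, Z} for a normalised state psi."""
    return float(sum(abs(np.vdot(psi, p @ psi)) ** 4 for p in PAULIS))


zero = np.array([1, 0], dtype=complex)
plus = H @ zero             # Clifford gate on a stabilizer state: still a stabilizer state
t_state = T @ plus          # the T gate takes the state off the stabilizer set
after_clifford = H @ t_state

print(pauli_l4_sum(zero), pauli_l4_sum(plus))             # both 2 up to rounding
print(pauli_l4_sum(t_state), pauli_l4_sum(after_clifford))  # both 1.5 up to rounding
```

In this sketch the Hadamard gate leaves the proxy unchanged, whereas the T gate lowers it from 2 to 1.5, matching the intuition that only non-Clifford operations move a state away from the stabilizer states.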

The research quantifies the complexity of quantum states by linking it to their deviation from easily simulated ‘stabilizer’ states. This is important because it provides a quantifiable metric for assessing the resources needed for quantum computation and the limits of classical simulation. The study demonstrated that state complexity is closely related to how far a quantum state is from a stabilizer state, measured using the $L^4$-norm of its characteristic function. The authors suggest this work contributes to understanding the capabilities of quantum computers and developing more efficient quantum algorithms.

👉 More information

🗞 Complexity of quantum states in the stabilizer formalism

🧠 ArXiv: https://arxiv.org/abs/2604.20118