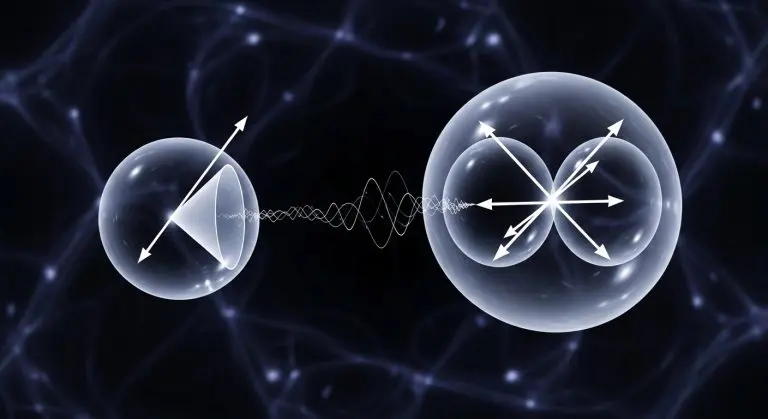

A new study addresses hardware latency, noise, and learning convergence, three challenges that currently limit quantum reinforcement learning for real-time control systems. Nguyn Truong Thu Ngo and colleagues at Adelaide University, in collaboration with the Commonwealth Scientific and Industrial Research Organisation (CSIRO) and VTT Technical Research Centre, present a study of a quantum-classical agent controlling the CartPole benchmark environment, bridging the gap between simulated results and execution on actual superconducting quantum hardware. The findings show that even a single-qubit agent can outperform a classical actor-critic network in learning efficiency, solving the task in fewer episodes, and they establish a relationship between control-loop rate, measurement precision, and balancing stability. By directly programming Zurich Instruments readout electronics, the research quantifies current limitations and lays the groundwork for the throughput needed for real-time closed-loop quantum control feedback.

Quantum reinforcement learning balances speed and accuracy in CartPole control

Tens-of-hertz throughput, a key threshold that was previously unattainable, is now possible by directly programming the readout electronics, paving the way for real-time closed-loop quantum control feedback. This matters because traditional software stacks introduce substantial delays in communication with quantum processing units (QPUs), which hinders the implementation of real-time control loops. By bypassing these layers and interfacing directly with the Zurich Instruments control system, the researchers reduced latency and increased the rate at which control signals could be applied and measurements obtained.

The CartPole benchmark, a classic control problem in which a pole must be balanced on a moving cart, is a convenient testbed for reinforcement learning algorithms because it is relatively simple and has a well-defined reward structure. A single-qubit quantum agent solved the benchmark in fewer episodes than a classical actor-critic network, even when limited to parameter-shift training, demonstrating improved learning efficiency. The actor-critic method, a common reinforcement learning technique, pairs an ‘actor’ that proposes actions with a ‘critic’ that evaluates their effectiveness. Parameter-shift training estimates gradients from circuit evaluations at shifted parameter values, which makes it usable on real, noisy quantum hardware. Mapping performance against inference control frequency and measurement shot budget revealed a trade-off that governs balancing stability and helps identify suitable operating parameters. The ‘shot budget’ is the number of times a quantum measurement is repeated to obtain a statistically reliable result; higher shot budgets generally reduce statistical noise but increase execution time.
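To make the shot-budget idea concrete, the following is a minimal sketch of how repeated measurements sharpen an expectation-value estimate. It assumes a hypothetical single-qubit circuit, an RY(theta) rotation applied to |0⟩ and measured in the Z basis, which is not necessarily the circuit used in the study. The function names are illustrative, and the hardware is replaced by a classical random sampler.

```python
import math
import random


def estimate_expectation_z(theta, shots, rng):
    """Estimate <Z> for RY(theta)|0> from a finite number of simulated shots.

    Assumption: an ideal, noise-free qubit; only sampling noise is modelled.
    """
    p0 = math.cos(theta / 2) ** 2  # probability of reading out 0 (Z = +1)
    zeros = sum(1 for _ in range(shots) if rng.random() < p0)
    ones = shots - zeros
    return (zeros - ones) / shots


def standard_error(theta, shots):
    """Theoretical standard error of the <Z> estimate for this circuit."""
    exact = math.cos(theta)  # exact <Z> for RY(theta)|0>
    return math.sqrt((1.0 - exact ** 2) / shots)


if __name__ == "__main__":
    rng = random.Random(0)
    for shots in (10, 100, 1000):
        estimate = estimate_expectation_z(1.0, shots, rng)
        print(shots, round(estimate, 3), round(standard_error(1.0, shots), 3))
```

The standard error falls as one over the square root of the shot count, so quadrupling the shots only halves the statistical noise while quadrupling the measurement time. That is the cost side of the trade-off described above.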

Higher inference frequencies consistently improved performance, while a larger shot budget lowered the minimum frequency needed for stable balancing, offering practical guidance for future quantum control demonstrations. This suggests that, although faster control loops are beneficial, a slower loop can be partly compensated for by more precise measurements. That flexibility lets engineers tailor the control system to the specific characteristics of the QPU and the application at hand, and the observed relationship between shot budget and control frequency becomes a useful design parameter for optimising quantum control systems. The team increased execution speed on the VTT Q5 processor more than ten-fold by bypassing conventional software stacks, a speedup that was crucial for the real-time control experiments. The VTT Q5 is a superconducting transmon-based QPU, a type of quantum processor that uses superconducting circuits to represent and manipulate qubits.
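The tension between shot budget and loop rate can be sketched with a simple timing model. This is an illustrative assumption rather than the model used in the study: each control step is taken to cost a fixed overhead plus a constant time per shot. All numbers in the example are hypothetical and are not measurements from the VTT Q5.

```python
import math


def max_control_rate_hz(shots, shot_time_s, overhead_s):
    """Upper bound on the control-loop rate for a given shot budget.

    Assumed model: step time = overhead_s + shots * shot_time_s.
    """
    if shots < 1:
        raise ValueError("shots must be at least 1")
    return 1.0 / (overhead_s + shots * shot_time_s)


def max_shots_for_rate(target_hz, shot_time_s, overhead_s):
    """Largest shot budget that still fits inside one period of target_hz."""
    time_left = 1.0 / target_hz - overhead_s
    if time_left <= 0:
        return 0  # the overhead alone already exceeds the period
    return math.floor(time_left / shot_time_s)


if __name__ == "__main__":
    # Hypothetical timings: 10 ms fixed overhead, 0.3 ms per shot.
    for shots in (10, 100, 300):
        print(shots, round(max_control_rate_hz(shots, 0.0003, 0.010), 1))
    print(max_shots_for_rate(20.0, 0.0003, 0.010))
```

Under this model, cutting the fixed overhead raises the achievable rate at every shot budget. That is one way to read the benefit of bypassing the software stack.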

Initial state fragility currently restricts quantum reinforcement learning scalability

Despite the striking efficiency of a single-qubit quantum agent on the CartPole task, reliance on circuits that are sensitive to initial state preparation errors remains a fundamental limitation. Superconducting qubits are notoriously susceptible to noise and imperfections, and the initial state of the qubit can significantly affect the performance of the quantum algorithm. The authors acknowledge this fragility and suggest the need for circuits invariant to such imperfections, but achieving this robustness is a significant engineering challenge. Circuits that tolerate initial state errors require careful design and potentially error correction techniques, which add complexity and overhead. The parameter-shift rule, a technique for calculating the gradients of quantum circuits that are needed to train reinforcement learning agents, mitigates some gradient calculation issues on noisy hardware. It does not, however, remove the impact of imperfect qubit initialisation, which may limit scalability.
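As a minimal sketch of the parameter-shift rule, consider a hypothetical one-parameter circuit: RY(theta) applied to |0⟩ and measured in Z, whose expectation value is cos(theta). For this kind of rotation gate the gradient follows from two evaluations shifted by ±π/2. The circuit and function names are assumptions for illustration, and the expectation value is computed exactly here rather than sampled on hardware.

```python
import math


def expectation_z(theta):
    """Exact <Z> after RY(theta) on |0>, standing in for a hardware run."""
    return math.cos(theta)


def parameter_shift_gradient(f, theta):
    """Gradient of f at theta from two evaluations shifted by +/- pi/2.

    Valid for expectation values of circuits whose parameter enters through
    a single Pauli rotation, such as the RY gate assumed here.
    """
    shift = math.pi / 2
    return (f(theta + shift) - f(theta - shift)) / 2.0


if __name__ == "__main__":
    theta = 0.7
    print(parameter_shift_gradient(expectation_z, theta))  # shift-rule gradient
    print(-math.sin(theta))                                # analytic gradient
```

The two printed values agree to numerical precision. On hardware, each of the two evaluations would itself be a shot-limited estimate, so the gradient inherits the sampling noise of the measurements, and any error in preparing |0⟩ shifts both evaluations alike.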

Acknowledging the current sensitivity to initial qubit states does not diminish the value of this demonstration. Successful quantum reinforcement learning on actual hardware, even with a limited single-qubit agent, represents a key step forward, and quantifying the trade-off between measurement precision and control frequency provides practical guidance for future development of quantum control systems. Direct programming of the quantum processor’s readout electronics allowed the team to achieve tens-of-hertz throughput, a speed previously unattainable and essential for closed-loop feedback, since a real-time control loop must run fast enough to respond to changes in the environment. The single-qubit agent learned the CartPole task more efficiently than the classical baseline, and mapping its performance revealed a key trade-off between the frequency of control actions and the number of measurements taken per action. The CartPole task, while simple, is a valuable benchmark for evaluating reinforcement learning algorithms and identifying areas for improvement, and the insights gained here could carry over to more complex control problems. Further work is needed to address initial state preparation and improve the robustness of quantum circuits. Even so, the more than ten-fold increase in execution speed and the demonstration of tens-of-hertz throughput mark significant progress towards practical quantum control systems, and the single-qubit agent shows that even small quantum systems can outperform classical methods in certain tasks.

This research demonstrated that a single-qubit quantum agent successfully solved the CartPole balancing task, learning more efficiently than a classical network. Achieving tens-of-hertz throughput, a ten-fold increase in speed, is significant because it addresses a key limitation of applying quantum reinforcement learning to real-time control systems. The study also revealed a crucial relationship between control frequency and measurement precision, providing guidance for optimising future quantum control designs. The authors suggest that developing circuits invariant to initial states will be important for further progress.

👉 More information

🗞 Towards Real-time Control of a CartPole System on a Quantum Computer

🧠 ArXiv: https://arxiv.org/abs/2605.01716