Quantum mechanics continues to challenge classical intuitions about reality, particularly concerning the nature of measurement and the order of events. Recent research by Rybotycki, Białecki, Batle, et al. demonstrates experimental violations of both time-order invariance (TOI) and the Leggett-Garg inequality (LGI), two conditions that any classical description of a system observed at successive times is expected to satisfy. The team achieves this using publicly accessible quantum computers, employing protocols designed to measure incompatible quantum properties (characteristics that cannot be known simultaneously with perfect precision) without significantly disturbing the system under investigation. Their analysis of data gathered from multiple devices, including those provided by IBM Quantum and IonQ, reveals statistically significant deviations from classical predictions, bolstering evidence that measurement itself plays a crucial role in shaping quantum phenomena.

The violations are obtained with non-invasive measurements, confirming that quantum systems exhibit behaviours inconsistent with classical realism even when measurements are designed to minimise disturbance.

The experiments employ two protocols, one utilising a single two-qubit gate and the other utilising two, to perform weak measurements on qubits. Weak measurements, unlike their projective counterparts, aim to extract information with minimal disruption to the system’s state. This is achieved by coupling the system to an ancillary detector: the detector signal is proportional to the measurement strength, while the disturbance to the system scales only with the square of that strength.

Analysis of data from ten qubit sets across five IBM devices and one IonQ device reveals violations of both the Leggett-Garg inequality and time-order invariance exceeding five standard deviations in almost all cases. This indicates a clear departure from classical expectations, where systems should exhibit definite properties independent of measurement. The observed violations are consistent with the theoretical framework of weak measurements, which predicts correlations dependent on the temporal order of measurements.

Furthermore, the researchers verified the non-invasiveness of their approach by demonstrating that the observed correlations differ from those obtained using projective measurements. The study provides further evidence of the fundamentally nonclassical nature of quantum mechanics and highlights the potential of publicly available quantum computers for exploring these phenomena.
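The trade-off between signal and disturbance in a weak measurement can be illustrated with a small numerical toy model. The sketch below is an assumption made for illustration, not the circuit used in the study: a system qubit is coupled to an ancilla through the unitary $\exp(-i g\, Z \otimes Y)$, and the function name `weak_measure_z` and the parameters `theta` and `g` are hypothetical. In this model the ancilla readout equals $\sin(2g)\,\langle Z \rangle$, which is linear in $g$ for small $g$, while the system's coherence $\langle X \rangle$ is reduced by a factor $\cos(2g) \approx 1 - 2g^2$.

```python
import numpy as np

# Pauli matrices and the single-qubit identity
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)


def weak_measure_z(theta, g):
    """Toy weak measurement of Z on a system qubit via an ancilla.

    theta: polar angle of the system state cos(theta/2)|0> + sin(theta/2)|1>
    g:     coupling strength (hypothetical parameter of this toy model)
    Returns (ancilla signal <X_a>, system <X> before, system <X> after).
    """
    system = np.array([np.cos(theta / 2), np.sin(theta / 2)], dtype=complex)
    ancilla = np.array([1, 0], dtype=complex)  # ancilla starts in |0>
    psi = np.kron(system, ancilla)             # ordering: system (x) ancilla

    # (Z (x) Y)^2 = identity, so exp(-i g Z(x)Y) = cos(g) I - i sin(g) Z(x)Y
    ZY = np.kron(Z, Y)
    U = np.cos(g) * np.eye(4) - 1j * np.sin(g) * ZY
    out = U @ psi

    signal = np.real(out.conj() @ np.kron(I2, X) @ out)   # ancilla readout
    x_before = np.real(system.conj() @ X @ system)        # coherence before
    x_after = np.real(out.conj() @ np.kron(X, I2) @ out)  # coherence after
    return signal, x_before, x_after


if __name__ == "__main__":
    theta = np.pi / 3
    for g in (0.2, 0.1, 0.05):
        signal, x_before, x_after = weak_measure_z(theta, g)
        print(f"g={g:.2f}  signal={signal:.4f}  disturbance={x_before - x_after:.5f}")
```

Halving `g` roughly halves the signal but cuts the disturbance by about a factor of four, which is why many repetitions at small coupling can recover $\langle Z \rangle$ while leaving the state nearly intact.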

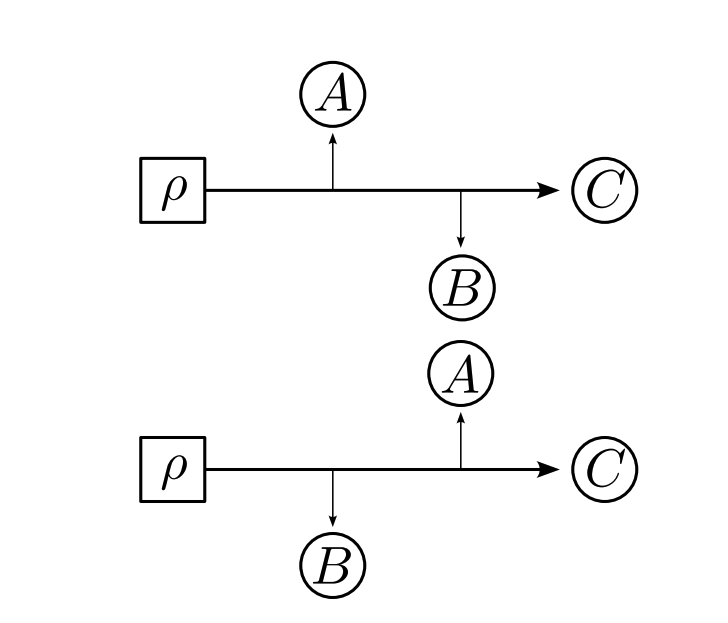

The theoretical framework underpinning this work relies on the concept of weak coupling, mathematically represented by an interaction Hamiltonian in which the operator $A$ of the measured observable is multiplied by a small coupling parameter $\epsilon$. Unlike standard projective measurements, which project the state $|\psi\rangle$ onto an eigenstate of $A$ and incur maximal disturbance, weak measurements estimate the expectation value $\langle A \rangle$ while leaving the quantum state almost unchanged. This methodology allows quantum histories to be reconstructed and non-classical temporal assumptions to be tested without the state collapse inherent to strong measurements.

The violation of the Leggett-Garg inequality (LGI) fundamentally challenges the classical assumption of realism, the idea that a physical system possesses definite properties independent of measurement. An LGI violation specifically indicates that the correlations between values of a property measured at different times cannot all be reproduced by a single consistent classical description, thereby providing rigorous, measurable evidence against the coexistence of realism and non-invasive measurability.

From an engineering standpoint, the successful execution of these protocols across diverse hardware backends, such as superconducting qubits and trapped ions, underscores a trend toward cross-platform compatibility. The feasibility of running highly specialised measurement sequences on publicly accessible cloud resources enables rapid comparative benchmarking of the quantum hardware capabilities that future quantum algorithms will depend on.

A further challenge for this line of research is decoherence during the temporal sequence of measurements. Since the LGI and time-order invariance (TOI) tests require measuring a system’s state repeatedly over time, the total fidelity of the quantum channel becomes a critical bottleneck. Mitigating environmental noise and maintaining state coherence across multiple gate operations remains a key technical hurdle for scaling these fundamental tests to larger, more complex quantum architectures.
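To make the link between the Leggett-Garg inequality and decoherence concrete, the following sketch evaluates the standard three-time Leggett-Garg quantity $K_3 = C_{12} + C_{23} - C_{13}$, which satisfies $K_3 \le 1$ under classical realism with non-invasive measurability. It assumes an idealised precessing qubit whose two-time correlator is $C(\Delta t) = \cos(\omega \Delta t)$, and adds a simple exponential damping factor $e^{-\gamma \Delta t}$ as a stand-in for decoherence. The damping model, the function names and the parameters `omega` and `gamma` are illustrative assumptions, not the noise model or the exact inequality analysed in the study.

```python
import numpy as np


def correlator(dt, omega=1.0, gamma=0.0):
    """Two-time correlator of an ideal precessing qubit.

    The exp(-gamma * dt) factor is an assumed phenomenological
    damping used here to mimic decoherence.
    """
    return np.exp(-gamma * dt) * np.cos(omega * dt)


def k3(tau, omega=1.0, gamma=0.0):
    """Leggett-Garg quantity K3 = C12 + C23 - C13 for equally spaced times."""
    c12 = correlator(tau, omega, gamma)
    c23 = correlator(tau, omega, gamma)
    c13 = correlator(2 * tau, omega, gamma)
    return c12 + c23 - c13


if __name__ == "__main__":
    tau = np.pi / 3  # spacing at which the undamped K3 reaches its maximum of 1.5
    for gamma in (0.0, 0.1, 0.5):
        value = k3(tau, gamma=gamma)
        status = "violates" if value > 1 else "satisfies"
        print(f"gamma={gamma:.1f}  K3={value:.3f}  {status} K3 <= 1")
```

In this toy model the violation shrinks as `gamma` grows and eventually disappears, which illustrates why coherence over the full measurement sequence is a limiting factor for such tests.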