OpenAI is balancing developer productivity with AI safety through existing features of its Codex coding agent. To reduce interruptions during routine tasks, Auto-review mode automatically approves certain kinds of requests, allowing Codex to operate more fluidly within established boundaries. The result is a tiered approval system: low-risk actions are handled by Auto-review mode, while higher-risk operations still require explicit human oversight. OpenAI says it deploys Codex with clear goals: “keep the agent inside clear technical boundaries, let developers move quickly on low-risk actions, and make higher-risk actions explicit.” It also preserves agent-native logs so that there is an audit trail of the agent’s activity for understanding and accountability.

Codex Deployment Boundaries: Sandboxing and Approval Policies

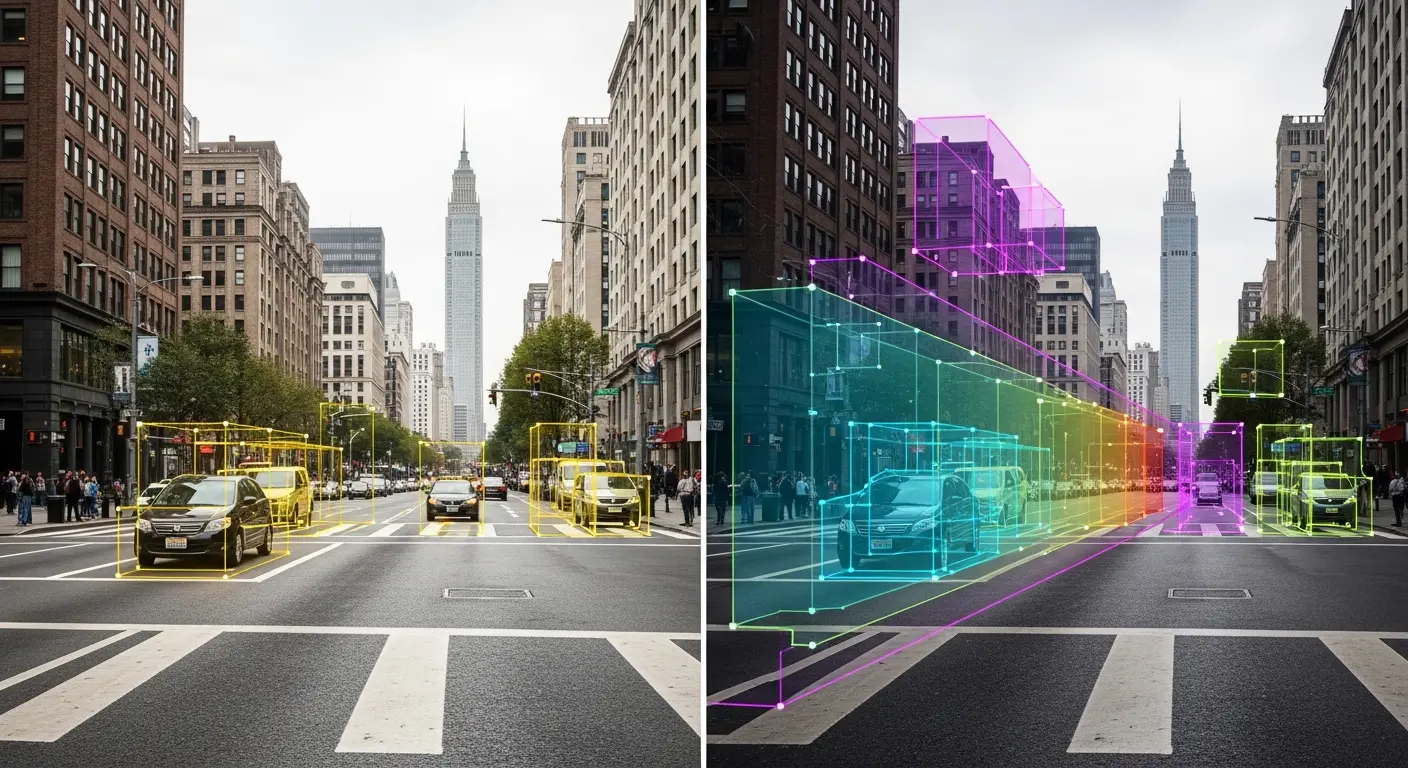

OpenAI has built a multi-layered security architecture for its Codex coding agent that aims to preserve developer productivity while keeping control over autonomous actions. Beyond restricting capabilities, the company wants defenders to be able to see what happened and to interpret why the agent took certain steps, which matters for enterprise adoption. Codex operates within defined technical boundaries set by two mechanisms that work together: sandboxing and approval policies. The sandbox defines where Codex can write, whether it can reach the network, and which paths remain protected. The approval policy determines when Codex must ask before performing an action, such as when it needs to do something outside the sandbox.
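The interplay between the sandbox and the approval policy can be illustrated with a minimal sketch. Everything here is hypothetical: the `Sandbox` class, its field names, and the three outcomes are assumptions made for illustration, not Codex's actual configuration schema. In particular, treating protected paths as an outright denial is an assumption. The check is purely lexical on absolute POSIX paths and does not resolve symlinks.

```python
import posixpath
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Sandbox:
    """Hypothetical sandbox description; not Codex's real schema."""
    writable_roots: tuple[str, ...]
    protected_paths: tuple[str, ...]


def _is_under(path: str, root: str) -> bool:
    """True if path equals root or lies beneath it, after collapsing '..'."""
    p = PurePosixPath(posixpath.normpath(path))
    r = PurePosixPath(posixpath.normpath(root))
    return p == r or r in p.parents


def decide_write(sandbox: Sandbox, path: str) -> str:
    """Return 'deny', 'allow', or 'ask' for a proposed file write."""
    if any(_is_under(path, p) for p in sandbox.protected_paths):
        return "deny"   # assumption: protected paths stay off limits
    if any(_is_under(path, r) for r in sandbox.writable_roots):
        return "allow"  # inside the sandbox: no interruption
    return "ask"        # outside the sandbox: the approval policy applies


sandbox = Sandbox(writable_roots=("/repo",), protected_paths=("/repo/.git",))
print(decide_write(sandbox, "/repo/src/main.py"))
print(decide_write(sandbox, "/etc/hosts"))
```

Checking protected paths first means a protected directory nested inside a writable root is still guarded.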

For routine approval requests, OpenAI uses Auto-review mode, a feature that, when turned on, auto-approves certain kinds of requests to reduce how often users have to stop and approve Codex actions. Codex sends the planned action and recent context to the auto-approval subagent, which can automatically approve low-risk actions instead of interrupting the user. OpenAI also preserves agent-native telemetry, detailed logs of the agent’s actions, to support auditing. Traditional security records typically indicate only what happened and leave defenders to figure out why; the agent-native logs add the user intent and agent behavior behind an event. Codex supports OpenTelemetry log export, capturing events like user prompts, tool approval decisions, and network activity, and OpenAI feeds this data to its AI-powered security triage agent to distinguish between expected behavior, errors, and genuine security threats. These logs are also available through the OpenAI Compliance Platform for Enterprise and Edu customers, providing a centralized view for security teams and compliance officers.
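The Auto-review flow described above can be sketched as a small routing function. This is an illustration under stated assumptions: `ApprovalRequest`, the `Reviewer` callable, and the `"low"` risk label are hypothetical names, and the toy reviewer stands in for the auto-approval subagent, whose real logic is not described in the source.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ApprovalRequest:
    """Hypothetical shape of what is sent for review."""
    planned_action: str
    recent_context: str


# A reviewer maps a request to a risk label. Here it is a plain callable
# standing in for the auto-approval subagent.
Reviewer = Callable[[ApprovalRequest], str]


def route(request: ApprovalRequest, reviewer: Reviewer, auto_review: bool) -> str:
    """Return 'auto_approved' or 'ask_user' for one approval request."""
    if not auto_review:
        return "ask_user"       # feature off: every request goes to the user
    if reviewer(request) == "low":
        return "auto_approved"  # low risk: no interruption
    return "ask_user"           # anything else falls back to a human


def toy_reviewer(request: ApprovalRequest) -> str:
    """Toy stand-in: only a test run counts as low risk."""
    return "low" if request.planned_action == "run unit tests" else "high"


req = ApprovalRequest("run unit tests", "user asked to fix a failing test")
print(route(req, toy_reviewer, auto_review=True))
```

Any label other than `"low"` falls through to the user, so an unexpected reviewer result never silently approves an action.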

Managed Network Access Controls for Coding Agents

OpenAI’s deployment of the Codex coding agent reflects a growing need to reconcile the power of artificial intelligence with security controls in professional development environments. Codex reviews repositories, executes commands, and interfaces with development tools, tasks that formerly required direct human intervention. This expanded capability calls for a layered approach to control that goes beyond simple restrictions to include granular permissions and audit trails. A core tenet of OpenAI’s strategy is establishing clear technical boundaries for Codex, allowing rapid progress on low-risk actions while mandating human oversight for potentially hazardous operations. Network access is managed rather than open-ended: Codex does not operate with unrestricted outbound access. Instead, a managed network policy allows expected destinations, blocks destinations OpenAI does not want Codex reaching, and requires approval for unfamiliar domains, so that common workflows complete without exposing the system to the wider network.
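The three-way network policy described above (allow, block, or ask) can be sketched as follows. The function name, the `Verdict` enum, and the example domains are all hypothetical, and the source does not say how Codex matches hosts. Suffix matching on whole DNS labels is an assumption.

```python
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"


def _matches(host: str, domain: str) -> bool:
    """True if host is the domain itself or a subdomain of it."""
    return host == domain or host.endswith("." + domain)


def check_destination(host: str, allowed: set[str], blocked: set[str]) -> Verdict:
    """Classify an outbound destination under a hypothetical managed policy."""
    host = host.lower().rstrip(".")
    if any(_matches(host, d) for d in blocked):
        return Verdict.DENY   # blocked destinations win over everything else
    if any(_matches(host, d) for d in allowed):
        return Verdict.ALLOW  # expected destinations pass without a prompt
    return Verdict.ASK        # unfamiliar domains require approval


allowed = {"packages.example.com"}
blocked = {"paste.example.net"}
print(check_destination("cdn.packages.example.com", allowed, blocked))
print(check_destination("unknown.example.org", allowed, blocked))
```

Matching on a leading dot keeps a lookalike host such as `evilpackages.example.com` from inheriting the allowance granted to `packages.example.com`.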

Critically, OpenAI preserves detailed agent-native telemetry, recognizing that understanding why an AI took a specific action is as important as knowing what it did. These logs feed into the OpenAI Compliance Platform and are also used internally alongside an AI-powered security triage agent. OpenAI explains, “When an endpoint alert says Codex did something unusual, the endpoint security tool tells us that a suspicious event occurred,” and “Codex logs then help explain the surrounding intent by the user and agent.” This combination of controlled execution and detailed logging aims to build trust and accountability as coding agents become increasingly integrated into critical workflows.

Identity and Credential Management via ChatGPT Integration

OpenAI is establishing identity and credential management protocols for its Codex coding agent, moving beyond simple restrictions to enable secure integration into developer workflows. The company’s approach centers on tying Codex activity to existing enterprise controls and using detailed telemetry for auditing, a critical step for broader adoption. Rather than merely limiting access, OpenAI focuses on understanding what the agent is doing, not just whether it acted, and preserving agent-native logs is central to this strategy. CLI and MCP OAuth credentials are stored in the secure OS keyring, login is forced through ChatGPT, and access is pinned to OpenAI’s ChatGPT enterprise workspace, so that activity is logged within the ChatGPT Compliance Logs Platform. This integration extends to a rule system that differentiates between safe and dangerous commands, allowing common, benign actions to proceed without interruption while flagging or blocking potentially harmful ones.

For instance, the system can allow read-only GitHub PR inspection via the gh CLI while simultaneously preventing access to domains. OpenAI uses a configuration system that combines cloud-managed requirements, macOS preferences, and local files to maintain consistency while allowing for tailored testing across teams. Auto-review mode is a key component: in OpenAI’s words, the feature, “when turned on, auto-approves certain kinds of requests to reduce how often users have to stop and approve Codex actions.” It relies on a subagent that assesses risk, enabling routine tasks to proceed uninterrupted while still subjecting higher-risk actions to human review.
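A rule system that separates safe from dangerous commands might look like the sketch below. The rule table, the decision names (`allow`, `prompt`, `forbid`), and the longest-prefix matching are assumptions for illustration, not Codex's actual rule format. Only `gh pr view` and `gh pr diff` are taken as read-only inspection commands. Any command containing shell metacharacters falls back to a prompt so that chained commands cannot ride on an allowed prefix.

```python
import shlex

# Hypothetical rules: (argv prefix, decision). Longest matching prefix wins.
RULES = [
    (("gh", "pr", "view"), "allow"),
    (("gh", "pr", "diff"), "allow"),
    (("gh", "pr", "merge"), "prompt"),
    (("rm", "-rf"), "forbid"),
]

# Characters that let a shell chain, redirect, or substitute commands.
SHELL_META = set(";|&<>$`()\n")


def classify(command: str, rules=RULES, default: str = "prompt") -> str:
    """Return the decision for a command line under the hypothetical rules."""
    if SHELL_META & set(command):
        return default  # compound commands always go to a human
    try:
        argv = tuple(shlex.split(command))
    except ValueError:
        return default  # unbalanced quotes: do not guess
    best = None
    for prefix, decision in rules:
        if argv[:len(prefix)] == prefix:
            if best is None or len(prefix) > len(best[0]):
                best = (prefix, decision)
    return best[1] if best else default


print(classify("gh pr view 123"))
print(classify("gh pr view 123; rm -rf /"))
```

Defaulting to `prompt` for anything unmatched keeps the rule table an allowlist of known-benign commands instead of a list of everything dangerous.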

Codex provides the control surfaces, configuration management, sandboxing, and detailed agent-aware telemetry needed to ensure safe adoption.

OpenAI

Agent Telemetry & AI-Powered Security Triage with OpenTelemetry

Beyond establishing technical boundaries and approval workflows, OpenAI treats agent-native telemetry as a cornerstone of secure coding agent deployment with Codex. The goal is not merely to record that an action occurred but to provide a more agent-aware view, a step toward building trust and accountability in autonomous systems. Traditional security logs are useful for identifying what happened (a file changed, a network connection was attempted), but with those logs alone, defenders are still left to figure out why Codex did something, or what the user intended. Codex aims to bridge this gap by exporting logs compatible with OpenTelemetry, a widely adopted observability framework. Specifically, Codex supports log export for events including user prompts, tool approval decisions, tool execution results, and network activity, providing a detailed audit trail of agent behavior.

When an endpoint alert signals unusual activity, OpenAI’s AI-powered security triage agent uses Codex logs to inspect the original request, tool interactions, and approval history. Its analysis is then surfaced to the security team for review, allowing for informed escalation decisions. OpenAI also uses this telemetry operationally, tracking internal adoption rates, tool usage, and the effectiveness of network sandbox configurations. OpenAI states, “We use these logs to understand how internal adoption is changing, which tools and MCP servers are being used, how often the network sandbox is blocking or prompting, and where the rollout still needs tuning.” These OpenTelemetry logs can be centralized within existing Security Information and Event Management (SIEM) and compliance logging systems, so they fit into established security workflows and keep the deployment auditable.
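The triage step described above, pulling the request, tool interactions, and approval history around an alert, can be sketched as a simple correlation over exported events. The `AgentEvent` fields, the event kind strings, and the five-minute window are hypothetical. The source does not specify the log schema or how the triage agent selects context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    """Hypothetical exported log record; field names are assumptions."""
    session_id: str
    timestamp: float  # seconds since epoch
    kind: str         # e.g. "user_prompt", "tool_approval", "tool_result", "network"
    detail: str


def context_for_alert(events, session_id, alert_time, window_s=300.0):
    """Return same-session events in the window before the alert, oldest first."""
    relevant = [
        e for e in events
        if e.session_id == session_id
        and alert_time - window_s <= e.timestamp <= alert_time
    ]
    return sorted(relevant, key=lambda e: e.timestamp)


def summarize(events) -> str:
    """Render events as one line each for a human reviewer."""
    return "\n".join(f"[{e.kind}] {e.detail}" for e in events)


events = [
    AgentEvent("s1", 160.0, "tool_approval", "approved: install dependencies"),
    AgentEvent("s1", 100.0, "user_prompt", "update project dependencies"),
    AgentEvent("s2", 150.0, "network", "denied: unfamiliar host"),
]
print(summarize(context_for_alert(events, "s1", alert_time=200.0)))
```

Ordering the events by time puts the user's prompt ahead of the approvals and tool results that followed it, which is the intent context an endpoint alert alone does not carry.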

Codex supports OpenTelemetry log export for various Codex events such as user prompts, tool approval decisions, tool execution results, MCP server usage, and network proxy allow or deny events.

OpenAI