Basil Kyriacou and colleagues at Terra Quantum AG have developed Shot-Based Quantum Encoding (SBQE), a novel strategy that leverages a quantum computer’s inherent measurement repetitions, termed ‘shots’, to represent classical data according to a predefined classical probability distribution. This method addresses limitations inherent in existing data encoding techniques by efficiently distributing computational resources and directly creating a mixed-state representation intrinsically linked to the classical data. Crucially, SBQE effectively realises the structure of a multilayer perceptron within a quantum circuit, potentially offering a pathway towards more efficient quantum machine learning. Benchmarking on standard image datasets demonstrates SBQE’s potential, achieving improved accuracy on both Semeion and Fashion MNIST compared to amplitude encoding and, in the case of Semeion, matching the performance of a classical neural network, all without requiring additional data-encoding gates.

Shot-based encoding outperforms amplitude encoding in quantum machine learning benchmarks

SBQE reaches a test accuracy of 89.1% ±0.9% on the Semeion dataset, exceeding the performance of previously established amplitude encoding methods by 5.3% and matching the accuracy of a width-matched classical network. Quantum machine learning has historically been hampered by the difficulty of efficiently loading classical data into quantum systems without exceeding the limitations of current quantum hardware. Existing data encoding techniques, such as angle, amplitude, and basis encoding, each have drawbacks. Angle encoding, while relatively shallow, typically uses about one qubit per feature and so underuses the exponential capacity of the Hilbert space. Amplitude encoding, though efficient in qubit usage, demands circuit depths that can exceed the coherence budgets of noisy intermediate-scale quantum (NISQ) hardware. Basis encoding also struggles with scalability and coherence requirements. SBQE offers a distinct alternative by sidestepping these limitations.
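To make the qubit-count trade-off concrete, the short sketch below compares the two most common encodings for the benchmark datasets. It assumes flattened 16×16 Semeion images (256 features) and 28×28 Fashion MNIST images (784 features), one qubit per feature for angle encoding, and one amplitude per feature for amplitude encoding. The function names are illustrative, and the article does not say whether the study used the images at full resolution.

```python
def angle_encoding_qubits(n_features: int) -> int:
    """Common convention (assumed here): one rotation, hence one qubit, per feature."""
    return n_features


def amplitude_encoding_qubits(n_features: int) -> int:
    """n qubits hold 2**n amplitudes, so ceil(log2(n_features)) qubits suffice."""
    # (n - 1).bit_length() equals ceil(log2(n)) for n >= 2, using integers only.
    return max(1, (n_features - 1).bit_length())


# Feature counts assume flattened, full-resolution images (an assumption).
datasets = {"Semeion (16x16)": 256, "Fashion MNIST (28x28)": 784}

for name, n_features in datasets.items():
    print(
        f"{name}: angle = {angle_encoding_qubits(n_features)} qubits, "
        f"amplitude = {amplitude_encoding_qubits(n_features)} qubits"
    )
```

Under these assumptions, angle encoding needs hundreds of qubits while amplitude encoding needs 8 and 10 respectively. The cost amplitude encoding pays instead is the depth of the state-preparation circuit, which this count does not capture.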

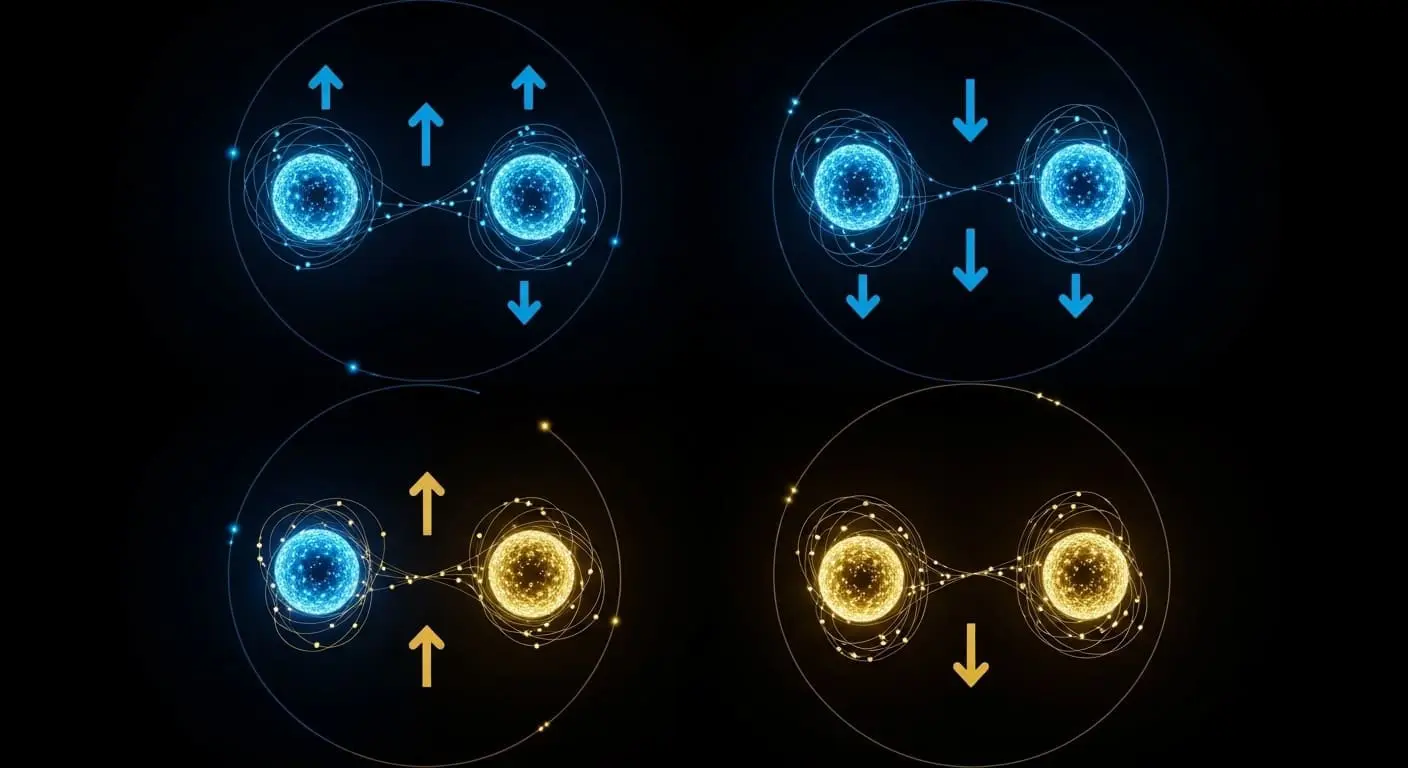

Shot-Based Quantum Encoding (SBQE) circumvents these issues by utilising a quantum computer’s inherent ‘shots’, which are repeated executions of the same quantum circuit, to construct a mixed-state representation directly linked to classical data. This effectively realises a multilayer perceptron within a quantum circuit, allowing for complex data processing. The mixed-state representation is created by preparing the quantum system in different initial states according to the probabilities derived from the classical data. Benchmarks involved ten independent initialisations per model to ensure statistical robustness and account for training variations. The method also achieved 80.95% ±0.10% test accuracy on the Fashion MNIST dataset, a commonly used benchmark for image recognition, representing a 2.0% improvement over existing amplitude encoding techniques and a 1.3% gain compared to a linear multilayer perceptron. Despite these advances, the reported accuracies do not yet demonstrate a clear advantage over highly optimised classical machine learning models when considering the substantial overhead associated with utilising quantum hardware. However, by simplifying circuit construction and avoiding complex data-encoding gates, SBQE suggests that further optimisation and advancements in quantum hardware could lead to more competitive results. The elimination of data-encoding gates reduces the number of operations susceptible to noise, potentially improving the fidelity of the quantum computation.
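The shot-allocation idea described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation. It assumes that the data-derived distribution is obtained by simply normalising non-negative pixel values, that shots are assigned to computational basis states by a multinomial draw, and that the helper names (`data_to_distribution`, `allocate_shots`, `empirical_mixed_state`) are hypothetical.

```python
import numpy as np


def data_to_distribution(x):
    """Normalise a non-negative feature vector into probabilities (assumed mapping)."""
    x = np.asarray(x, dtype=float)
    if np.any(x < 0):
        raise ValueError("features must be non-negative")
    total = x.sum()
    if total == 0:
        # An all-zero input carries no preference, so fall back to uniform.
        return np.full(x.size, 1.0 / x.size)
    return x / total


def allocate_shots(p, total_shots, rng):
    """Draw how many shots start in each basis state |i> (multinomial sample)."""
    return rng.multinomial(total_shots, p)


def empirical_mixed_state(shot_counts):
    """Diagonal density matrix rho = sum_i (n_i / N) |i><i| realised by the shots."""
    shot_counts = np.asarray(shot_counts, dtype=float)
    return np.diag(shot_counts / shot_counts.sum())


rng = np.random.default_rng(0)
pixels = np.array([0.0, 3.0, 1.0, 0.0])  # toy 4-feature input, i.e. 2 qubits
p = data_to_distribution(pixels)
counts = allocate_shots(p, total_shots=1000, rng=rng)
rho = empirical_mixed_state(counts)

print("target distribution:", p)
print("shots per initial state:", counts)
print("trace of rho:", np.trace(rho))
```

No data-encoding gates appear anywhere in this picture: the data only decides how often each initial state is prepared, and the ensemble of runs behaves like the mixed state `rho`.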

Scaling quantum data loading with repeated measurement techniques

Efficiently loading data into quantum computers has long been a fundamental stumbling block for practical machine learning applications. The exponential scaling of the Hilbert space, while offering immense computational potential, necessitates efficient methods for mapping classical data onto quantum states. The new technique uses the repeated measurements, or ‘shots’, inherent in quantum computation to represent classical data, offering a potentially more scalable solution. A crucial question remains unanswered: can this method scale to handle the significantly larger and more complex datasets typical of real-world problems, such as those encountered in natural language processing or high-resolution image analysis? Initial demonstrations remain confined to image datasets like Fashion MNIST and Semeion, which contain 70,000 and 1,593 images respectively, but the underlying principle offers a pathway towards handling more substantial data volumes. Further research is needed to assess its performance on datasets with significantly higher dimensionality and complexity.

This is a promising step towards addressing data-loading challenges for quantum machine learning. If it scales, the approach could enable analysis of larger, more complex datasets that are currently beyond the reach of many quantum algorithms, unlocking practical applications as quantum computers grow in power and coherence times improve. Distributing the available shots across multiple initial quantum states, guided by the data itself, creates a mixed quantum state, a probabilistic representation of the data, and realises a quantum circuit structurally equivalent to a multilayer perceptron. This structural equivalence allows for the application of established machine learning techniques within a quantum framework. Future development will focus on adapting the method for increasingly complex, real-world datasets and exploring its compatibility with different quantum architectures. The potential for scalability stems from utilising existing quantum hardware capabilities without requiring complex data-encoding circuits. Investigating how resource requirements, such as the number of qubits and circuit depth, grow with dataset size will be critical for determining the practical limits of SBQE.
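One way to see where the perceptron-like structure comes from is that any expectation value measured on a mixed state is linear in the mixture weights. The sketch below checks this numerically for a diagonal input state: the measured value equals a dot product between the data distribution and a weight vector fixed by the circuit. The random unitary is only a stand-in for a trained circuit, and the observable and variable names are illustrative assumptions. The example covers a single linear layer; the article does not describe how the full multilayer structure is built.

```python
import numpy as np


def random_unitary(dim, rng):
    """QR decomposition of a complex Gaussian matrix yields a unitary Q."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, _ = np.linalg.qr(z)
    return q


rng = np.random.default_rng(1)
dim = 4                                   # two qubits
U = random_unitary(dim, rng)              # stand-in for a trained circuit (assumption)
O = np.diag([1.0, -1.0, 1.0, -1.0])       # Pauli-Z on one qubit, identity on the other

p = np.array([0.1, 0.6, 0.3, 0.0])        # data-derived distribution over initial states
rho = np.diag(p).astype(complex)          # mixed state rho = sum_i p_i |i><i|

# Direct evaluation: <O> = Tr(O U rho U^dagger)
expectation = np.trace(O @ U @ rho @ U.conj().T).real

# Same quantity as a linear layer: w_i = <i| U^dagger O U |i>, so <O> = w . p
weights = np.diag(U.conj().T @ O @ U).real
linear_output = weights @ p

print("trace formula :", expectation)
print("weights . p   :", linear_output)
```

In this picture the circuit parameters play the role of the trainable weights, while the data enters only through `p`, which is why no encoding gates are needed.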

Shot-Based Quantum Encoding (SBQE) successfully embedded classical data into a quantum state, achieving 89.1% ±0.9% test accuracy on the Semeion dataset and 80.95% ±0.10% on Fashion MNIST. This method improves upon existing quantum data encoding techniques, such as amplitude encoding, and on Semeion matches the performance of a classical neural network of similar width. SBQE distributes the quantum hardware’s shots, its native resource, according to the data, creating a mixed quantum state that functions like a multilayer perceptron. Researchers intend to assess SBQE’s performance on larger and more complex datasets to further understand its scalability and compatibility with various quantum architectures.

👉 More information

🗞 Shot-Based Quantum Encoding: A Data-Loading Paradigm for Quantum Neural Networks

🧠 ArXiv: https://arxiv.org/abs/2604.06135