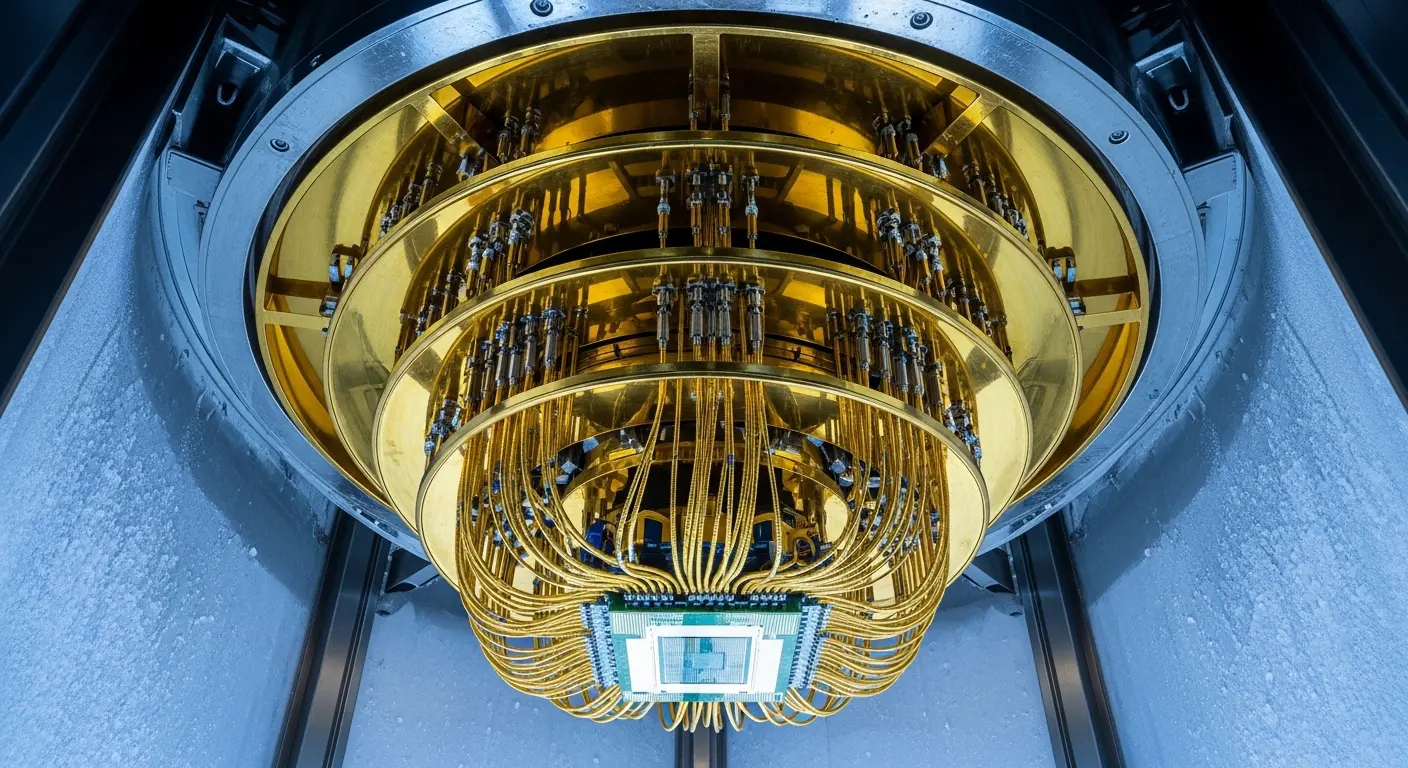

Researchers at Swinburne University of Technology, led by Ned Goodman, have developed a classical algorithm that accurately approximates Gaussian boson sampling (GBS) and scales to simulations of up to 1152 modes. The work addresses a central question in quantum computing: where the errors in quantum computations originate, and how they affect attempts to demonstrate genuine quantum advantage. By developing computable benchmarks for validating GBS data, the team shows that error sources extend beyond simple photon loss and that these additional errors make classical simulation easier. The results inform the design of improved algorithms and a more complete understanding of the physics underlying quantum computation.

Classical simulation of Gaussian boson sampling now surpasses experimental quantum scales

The new classical algorithm simulates Gaussian boson sampling (GBS) with up to 1152 modes, well beyond the previously established limit of approximately 100 modes and 100 simultaneous photon detections. This exceeds the scale of current quantum experiments and sets a new benchmark for classical simulation. The algorithm's success shows that errors beyond photon loss, including thermal noise, detector inefficiencies, and inaccurate parameter settings, can make it significantly easier to simulate classically tasks that were previously believed to be exclusive to quantum computation. The finding matters because the pursuit of quantum advantage hinges on identifying computational problems that are demonstrably intractable for classical computers.
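To make the role of thermal noise concrete, the following is a minimal sketch under a textbook assumption that is not taken from the paper: a single-mode squeezed vacuum with squeezing parameter `r` passes through a thermal-loss channel with transmission `eta` and `n_th` mean thermal photons in the environment. With the vacuum quadrature variance normalised to 1, such a Gaussian state is a mixture of coherent states, and hence cheap to sample classically, once its smallest quadrature variance reaches 1. The function and parameter names are illustrative only.

```python
import math


def min_quadrature_variance(r, eta, n_th=0.0):
    """Smallest quadrature variance after the channel (vacuum variance = 1).

    Assumed model: V_out = eta * V_in + (1 - eta) * (2 * n_th + 1),
    applied to the squeezed quadrature, where V_in = exp(-2 r).
    """
    return eta * math.exp(-2.0 * r) + (1.0 - eta) * (2.0 * n_th + 1.0)


def is_classical(r, eta, n_th=0.0):
    """True if the output state has a non-negative Glauber P function."""
    return min_quadrature_variance(r, eta, n_th) >= 1.0


def thermal_threshold(r, eta):
    """Thermal photon number at which the output becomes classical.

    Obtained by solving min_quadrature_variance(r, eta, n_th) == 1 for n_th.
    Requires eta < 1, since a lossless channel adds no thermal noise.
    """
    if not 0.0 <= eta < 1.0:
        raise ValueError("eta must satisfy 0 <= eta < 1")
    return eta * (1.0 - math.exp(-2.0 * r)) / (2.0 * (1.0 - eta))


if __name__ == "__main__":
    r, eta = 1.0, 0.5
    print("pure loss classical?", is_classical(r, eta))
    print("thermal photons needed:", thermal_threshold(r, eta))
```

In this toy model, pure loss (`n_th = 0`) never makes a squeezed state classical for any `eta > 0`, whereas a finite amount of thermal noise does. That is one simple way to see why errors beyond photon loss can matter for classical simulability.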

The algorithm uses a refined phase-space method that incorporates experimental imperfections directly into its computational model. This yields more realistic output statistics and a more precise classical approximation of the quantum computation. The phase-space approach represents the quantum state as a distribution over continuous phase-space variables, which enables efficient simulation of the complex interference patterns characteristic of GBS.

Demonstrating quantum advantage requires showing that a specific task is intractable for conventional computers, and verifying this claim is becoming harder as classical algorithms continue to improve. GBS offers a testable platform for exploring quantum advantage because of its inherently probabilistic nature and the availability of methods for validating generated samples. Conclusive evidence of quantum advantage with GBS, however, depends critically on accurately modelling experimental imperfections beyond simple photon loss.

Several large-scale experiments, including those conducted by the University of Science and Technology of China and Xanadu Corporation, have reported quantum advantage, but their outputs often fail to match the expected statistics even when known photon losses are taken into account. This discrepancy points to the need for better characterisation of quantum hardware and more robust evaluation algorithms, shifting the focus from simply scaling up experiments to understanding the underlying error mechanisms. Accurate simulation of GBS at scales exceeding current quantum devices provides a tool for validating experimental results and identifying potential sources of error.

Modelling experimental errors reveals limitations of current quantum boson sampling

Although advanced classical algorithms can now mimic current boson sampling experiments, the work remains important for the continued progress of quantum computing. Its key finding is that carefully modelling experimental imperfections, particularly those beyond simple photon loss, unexpectedly aids classical simulation. The successful simulation of 1152-mode GBS demonstrates that the computational complexity of the problem is more sensitive to these imperfections than previously understood. Understanding these error sources is vital for refining quantum hardware and the algorithms used to assess its performance, for interpreting experimental results accurately, and ultimately for realising genuine quantum advantage. Without a thorough understanding of these error mechanisms, claims of quantum advantage may be premature or misleading.

Scaling to 1152 modes, the algorithm exceeds the scale of current quantum experiments and outperforms all prior classical approximations. It achieves this by incorporating detailed models of experimental imperfections beyond simple photon loss, which reveals their unexpected impact on computational complexity. The algorithm employs techniques such as Monte Carlo integration to sample efficiently from the complex probability distributions generated by GBS while accounting for noise and parameter inaccuracies. Attention now shifts towards a more detailed analysis of error sources within quantum devices, including thermal noise arising from the finite temperature of detectors and parameter inaccuracies stemming from limitations in calibration and control. Investigating how these error sources interact with the overall computation is essential for developing strategies to mitigate them and improve the fidelity of quantum computations. Further research will focus on extending the algorithm to even larger mode numbers and on incorporating more sophisticated models of experimental imperfections, contributing to a more reliable foundation for quantum computing.
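The Monte Carlo integration mentioned above can be illustrated with a deliberately simplified sketch. It is not the researchers' algorithm: it assumes purely classical (thermal) inputs, whose Glauber P function is a Gaussian that can be sampled directly, whereas squeezed inputs need a more general phase-space representation. Each sample is a set of coherent amplitudes that is passed through a linear network, and the click probability of a threshold detector with efficiency `eta` is estimated as the sample average of `1 - exp(-eta * |alpha|**2)`. The helper names `random_unitary` and `click_probabilities` are hypothetical.

```python
import numpy as np


def random_unitary(m, rng):
    """Random m x m unitary from the QR decomposition of a complex Gaussian matrix."""
    z = (rng.normal(size=(m, m)) + 1j * rng.normal(size=(m, m))) / np.sqrt(2.0)
    q, r = np.linalg.qr(z)
    d = np.diag(r)
    # Rescale each column by a unit phase so the result is Haar distributed.
    return q * (d / np.abs(d))


def click_probabilities(n_bar, unitary, eta, n_samples, rng):
    """Monte Carlo estimate of per-mode click probabilities.

    n_bar   : mean thermal photon number of each input mode
    unitary : linear network acting on the coherent amplitudes
    eta     : detector efficiency (photon loss at detection)
    """
    m = len(n_bar)
    # Sample the Gaussian P function: E|alpha_k|^2 = n_bar[k].
    noise = rng.normal(size=(n_samples, m)) + 1j * rng.normal(size=(n_samples, m))
    alpha_in = noise * np.sqrt(np.asarray(n_bar) / 2.0)
    # Propagate every sample through the network: alpha_out = U @ alpha_in.
    alpha_out = alpha_in @ unitary.T
    intensity = eta * np.abs(alpha_out) ** 2
    # A threshold detector clicks with probability 1 - exp(-intensity).
    return np.mean(1.0 - np.exp(-intensity), axis=0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    u = random_unitary(4, rng)
    estimate = click_probabilities([1.0] * 4, u, eta=0.5, n_samples=200_000, rng=rng)
    exact = 0.5 * 1.0 / (1.0 + 0.5 * 1.0)  # eta * n / (1 + eta * n) for equal inputs
    print("Monte Carlo:", estimate)
    print("analytic   :", exact)
```

When all inputs carry the same mean photon number `n`, the network leaves every output mode thermal with the same `n`, so the estimate can be checked against the closed form `eta * n / (1 + eta * n)`. The statistical error shrinks as the inverse square root of the number of samples.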

The researchers developed a classical algorithm capable of accurately simulating Gaussian boson sampling with up to 1152 modes. This demonstrates that imperfections beyond simple photon loss can significantly affect the computational complexity of these quantum simulations, meaning current experiments may be more easily replicated by conventional computers than previously thought. The algorithm outperforms existing classical methods and current quantum experiments at this scale, providing a more accurate benchmark for assessing quantum performance. This work highlights the importance of understanding error sources in quantum devices to accurately interpret results and progress towards achieving genuine quantum advantage.

👉 More information

🗞 Gaussian boson sampling: Benchmarking quantum advantage

🧠 ArXiv: https://arxiv.org/abs/2604.12330