Scaling up variational quantum algorithms has been held back by exponential gradient suppression, known as the barren plateau. Gordon Ma and Xiufan Li at the National University of Singapore have identified a precise mathematical boundary for when the standard techniques used to prove this suppression apply: whether the loss function is affine in the measured statistics. Beyond that boundary, the established methods for analysing the scalability of variational quantum algorithms are no longer valid.

The findings suggest that carefully designing how measurement outcomes are interpreted, specifically by using ‘amplification-capable’ loss functions, could help counteract gradient suppression. Optimisation effort should therefore extend to the measurement process itself, rather than focusing solely on the quantum circuit design.

The research centres on ‘affine loss functions’: losses that depend on the measured statistics only through a fixed offset plus a weighted sum, much like a bill made up of a fixed fee plus a fixed price per item. The team found that once a loss function is non-affine, the standard methods for proving gradient suppression (the effect that makes optimisation like trying to hear a whisper in a noisy room) no longer apply, so the scalability of such objectives cannot be settled by those proofs. Consequently, designing the measurement process, meaning how the data is interpreted, becomes vital, and the researchers suggest that ‘amplification-capable’ loss functions could be key. This work raises a fundamental question: can quantum algorithms be designed to actively counteract signal loss, rather than simply accepting it as an inherent limitation?
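The "fixed fee plus fixed price per item" picture can be made concrete. One defining property of an affine map is that it commutes with mixing: the loss of a blended distribution equals the blend of the losses. The sketch below checks that property for a hypothetical affine loss and for a simple non-affine one (the sum of squared probabilities). The fee, prices and both loss functions are illustrative assumptions, not objectives taken from the paper.

```python
# Minimal sketch: an affine loss is "fixed fee + fixed price per outcome".
# The fee and prices are hypothetical values chosen only for illustration.

def affine_loss(p, fee=0.5, prices=(1.0, -2.0, 0.25)):
    """L(p) = fee + sum_k prices[k] * p[k], affine in the statistics p."""
    return fee + sum(w * pk for w, pk in zip(prices, p))


def collision_loss(p):
    """L(p) = sum_k p[k]**2, a simple loss that is NOT affine in p."""
    return sum(pk * pk for pk in p)


def mix(p, q, lam):
    """Convex mixture lam*p + (1 - lam)*q of two probability vectors."""
    return [lam * a + (1 - lam) * b for a, b in zip(p, q)]


def preserves_mixtures(loss, p, q, lam=0.3, tol=1e-12):
    """Affine maps satisfy L(lam*p + (1-lam)*q) == lam*L(p) + (1-lam)*L(q)."""
    lhs = loss(mix(p, q, lam))
    rhs = lam * loss(p) + (1 - lam) * loss(q)
    return abs(lhs - rhs) < tol


p = [1.0, 0.0, 0.0]  # a deterministic outcome
q = [0.2, 0.3, 0.5]  # some other measured distribution
print(preserves_mixtures(affine_loss, p, q))     # True
print(preserves_mixtures(collision_loss, p, q))  # False
```

A single passing mixture test is only a necessary condition for affinity; failing it, as the squared-probability loss does, is enough to show a loss is non-affine.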

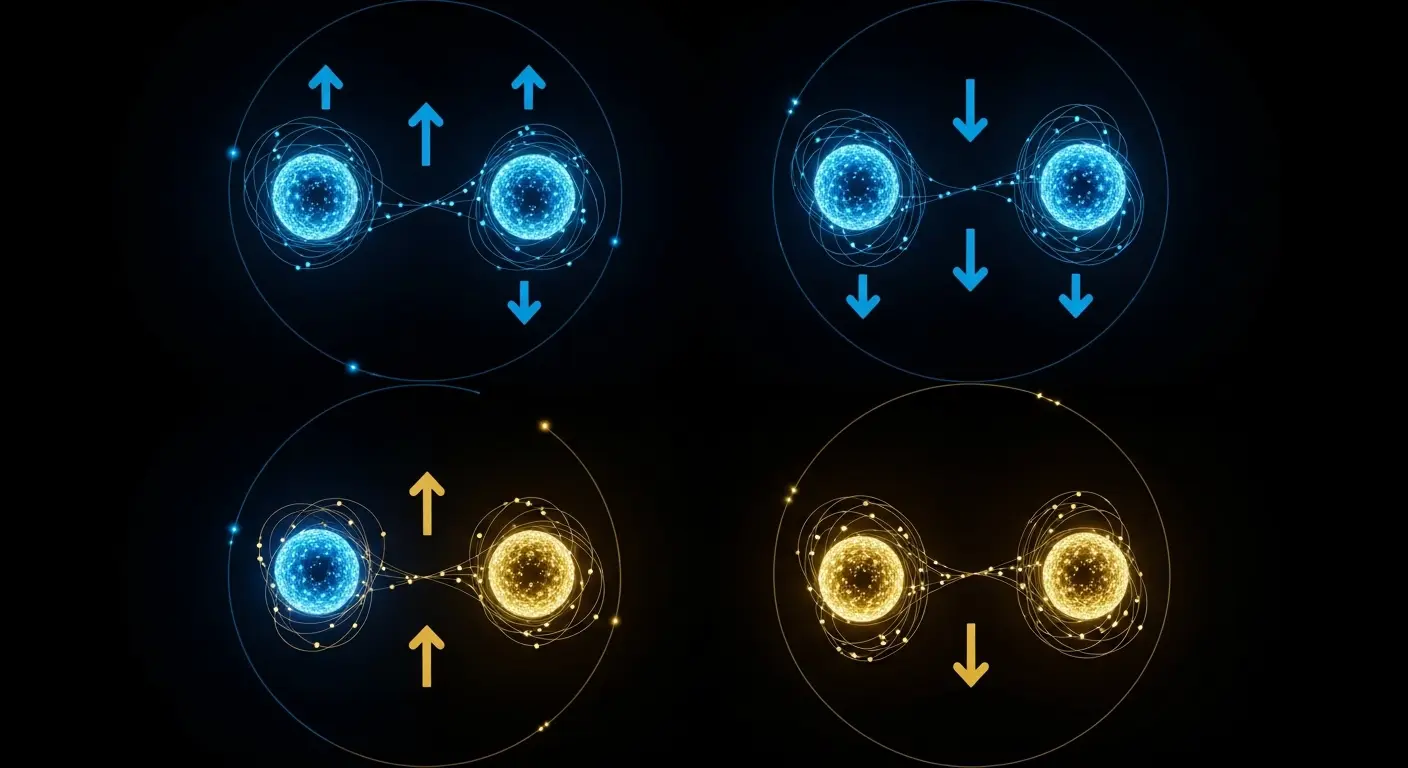

Non-affine loss functions can amplify gradients in variational quantum algorithms

An amplification-capable objective produced resolved gradients several orders of magnitude larger than affine and Lipschitz baselines at comparable shot budgets, a substantial improvement. Alongside this numerical result, the work pinpoints a precise boundary, whether the loss is affine in the measured statistics, beyond which standard techniques for analysing the scalability of variational quantum algorithms no longer apply.
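The article does not define a "resolved" gradient; a common reading is a shot-based estimate that stands clear of its own sampling noise. The sketch below assumes that reading. It estimates the gradient of a hypothetical one-parameter objective `eps*cos(theta)` with the parameter-shift rule from simulated measurement shots, and calls it resolved when it exceeds five standard errors. The objective, the readout model and the threshold `z=5` are all illustrative assumptions, not the paper's setup.

```python
import math
import random


def estimate_p0(p0, shots, rng):
    """Fraction of `shots` simulated measurements that return outcome 0."""
    hits = sum(1 for _ in range(shots) if rng.random() < p0)
    return hits / shots


def shift_rule_gradient(theta, eps, shots, rng):
    """Shot-based parameter-shift estimate for the toy objective eps*cos(theta).

    Hypothetical readout: the objective equals 2*P(0) - 1 with
    P(0) = (1 + eps*cos(theta)) / 2, so a small eps mimics a suppressed signal.
    Returns (gradient estimate, its standard error).
    """
    values, variances = [], []
    for shift in (math.pi / 2, -math.pi / 2):
        p0 = (1 + eps * math.cos(theta + shift)) / 2
        p_hat = estimate_p0(p0, shots, rng)
        values.append(2 * p_hat - 1)
        variances.append(4 * p_hat * (1 - p_hat) / shots)  # Var[2*p_hat - 1]
    grad = (values[0] - values[1]) / 2  # exact value: -eps*sin(theta)
    std_err = math.sqrt(variances[0] + variances[1]) / 2
    return grad, std_err


def is_resolved(grad, std_err, z=5.0):
    """Treat a gradient as resolved if it exceeds z standard errors."""
    return abs(grad) > z * std_err


rng = random.Random(7)
for eps in (1.0, 1e-4):
    g, se = shift_rule_gradient(math.pi / 4, eps, shots=10_000, rng=rng)
    print(f"eps={eps:g}: grad={g:+.5f} +/- {se:.5f}, resolved={is_resolved(g, se)}")
```

With the same 10,000-shot budget, the unsuppressed signal is resolved easily, while the signal scaled down by `eps = 1e-4` is buried in shot noise. This is why comparing objectives "at comparable shot budgets" is the meaningful test.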

Previously, determining when these scalability limits applied relied on loss-specific arguments. The new result is a general structural characterisation: standard methods for assessing the scalability of variational quantum algorithms hold exactly where the loss is affine in the measured statistics and fail where it becomes non-affine. The research indicates that designing the measurement process, in particular using non-affine loss functions, may be key to counteracting gradient suppression, the phenomenon that hinders the optimisation of quantum algorithms.

The numerical tests were run on a charge-conserving quantum system, where the amplification-capable objective's resolved gradients exceeded those of both the affine and Lipschitz baselines by several orders of magnitude at comparable computational effort. Its scaling trend was statistically distinct from the exponential decay observed for the baselines. These results suggest a pathway towards more scalable quantum algorithms through optimising the interface between the quantum computer and the classical optimiser.
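Exponential decay of gradient magnitudes shows up as a straight line when log2 of the gradient is plotted against system size, with a slope near -1 for a 2^-n decay. The sketch below fits that slope by least squares. Both gradient series are synthetic values generated from simple formulas purely to illustrate the idea; they are not data from the paper, and the `ns` sizes are arbitrary.

```python
import math


def log2_slope(ns, grads):
    """Least-squares slope of log2|g| versus n; a slope near -1 means g ~ 2**-n."""
    ys = [math.log2(abs(g)) for g in grads]
    n_bar = sum(ns) / len(ns)
    y_bar = sum(ys) / len(ys)
    num = sum((n - n_bar) * (y - y_bar) for n, y in zip(ns, ys))
    den = sum((n - n_bar) ** 2 for n in ns)
    return num / den


ns = [4, 6, 8, 10, 12]
# Synthetic, illustrative gradient magnitudes (NOT data from the paper):
synthetic_baseline = [0.3 * 2.0 ** -n for n in ns]  # exponential decay
synthetic_alternative = [0.3 / n for n in ns]       # slower, polynomial decay
print(round(log2_slope(ns, synthetic_baseline), 3))
print(round(log2_slope(ns, synthetic_alternative), 3))
```

Calling two trends statistically distinct, as the article does, would additionally require uncertainty estimates on each fitted slope (for example from repeated shot-based runs), which this sketch leaves out.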

Affine loss functions delineate gradient suppression limits in variational quantum algorithms

The work demonstrates a precise mathematical boundary, affinity of the loss function in the measured statistics, that defines when standard techniques for proving gradient suppression in variational quantum algorithms apply. Specifically, an objective can be represented with fixed observables if and only if its loss function is affine in the measured statistics, which delineates the reach of current proof methods. Existing results for non-affine losses rely on additional assumptions that this characterisation does not require.
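The "if and only if" can be seen in a simplified case. Suppose the statistics are computational-basis outcome probabilities `p` and the fixed observable is diagonal, `O = diag(o)`. Then the expectation value is `sum_k o[k]*p[k]`, which is affine in `p`. Conversely, because the `p[k]` sum to one, an affine loss `c + sum_k w[k]*p[k]` equals `sum_k (c + w[k])*p[k]`, the expectation of `diag(c + w[k])`. The sketch below builds the only diagonal observable that matches a loss on every basis state and checks it on the uniform distribution. The diagonal restriction and both loss functions are assumptions for illustration; the paper's theorem is stated more generally.

```python
# Hypothetical simplification: the statistics p are computational-basis outcome
# probabilities and the "fixed observables" considered are diagonal, O = diag(o).

def expectation(o, p):
    """<O> = sum_k o[k] * p[k] for a diagonal observable O = diag(o)."""
    return sum(ok * pk for ok, pk in zip(o, p))


def matching_observable(loss, d):
    """On basis state e_k, <O> = o[k], so o[k] = L(e_k) is forced."""
    basis = [[1.0 if j == k else 0.0 for j in range(d)] for k in range(d)]
    return [loss(e) for e in basis]


def affine_loss(p):
    return 0.5 + 1.0 * p[0] - 2.0 * p[1] + 0.25 * p[2]


def collision_loss(p):
    return sum(pk * pk for pk in p)


d = 3
uniform = [1 / d] * d
for name, loss in (("affine", affine_loss), ("collision", collision_loss)):
    o = matching_observable(loss, d)
    print(name, round(loss(uniform), 4), round(expectation(o, uniform), 4))
```

For the affine loss the forced observable reproduces the loss everywhere; for the non-affine one it matches on basis states but predicts 1 on the uniform distribution, where the true value is 1/3, so no fixed diagonal observable can represent it.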

Beyond the affine boundary, the authors identify two classes of loss functions: bounded-gradient losses, which still exhibit suppression, and amplification-capable losses, which can potentially counteract it. Both classes ultimately fail in exponentially wide settings (roughly, when the number of relevant quantum states becomes exponentially large), though for different structural reasons in each class. The team supported these findings with a numerical demonstration on a charge-conserving quantum system.
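The difference between the two classes follows from the chain rule, dL/dθ = L'(f) · df/dθ. If |L'| is bounded, a suppressed df/dθ stays suppressed; if L' can grow as the statistic f shrinks, it can cancel the suppression. The sketch below uses a hypothetical toy statistic `f = eps*(1 + cos(theta))/2` with `eps = 2**-n`, and picks `tanh(f)` as a bounded-gradient (1-Lipschitz) loss and `-log(f)` as an amplifying one. These choices are illustrative, not the objectives used in the paper.

```python
import math

# Hypothetical toy model: one measured statistic f(theta) = eps*(1 + cos(theta))/2,
# with eps = 2**-n standing in for a signal that shrinks exponentially with n qubits.


def f(theta, eps):
    return eps * (1 + math.cos(theta)) / 2


def df(theta, eps):
    return -eps * math.sin(theta) / 2


def grad_affine(theta, eps):
    """L = f: the gradient is df/dtheta itself."""
    return df(theta, eps)


def grad_bounded(theta, eps):
    """L = tanh(f): |L'(f)| <= 1, so |dL/dtheta| <= |df/dtheta| (still suppressed)."""
    return (1 - math.tanh(f(theta, eps)) ** 2) * df(theta, eps)


def grad_amplifying(theta, eps):
    """L = -log(f): L'(f) = -1/f grows as f shrinks and cancels the eps factor."""
    return -df(theta, eps) / f(theta, eps)


theta = 1.0
for n in (4, 8, 16, 32):
    eps = 2.0 ** -n
    print(f"n={n:2d}  affine={grad_affine(theta, eps):.3e}  "
          f"bounded={grad_bounded(theta, eps):.3e}  "
          f"amplifying={grad_amplifying(theta, eps):.3e}")
```

The amplifying gradient stays at tan(θ/2) ≈ 0.546 for every n, but only because f is computed exactly here. With N shots, the relative error of an estimate of f is about sqrt((1 - f)/(N·f)), so resolving f ≈ 2^-n needs on the order of 2^n shots. That is one plausible way amplification can still break down in exponentially wide settings; the paper's own structural argument may differ.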

In the charge-conserving system, gradients generated by the amplification-capable objective showed a scaling trend statistically distinct from those produced by the affine and Lipschitz baselines. The demonstration was limited to this specific system, leaving open whether these results generalise to other quantum systems. The identified boundary highlights a “representation-design problem”, indicating that focusing on the design of the measurement interface and loss function could help overcome limitations in training these algorithms.

Affine Loss Defines Limits to Variational Quantum Algorithm Scalability

Scientists at the National University of Singapore have identified a precise mathematical boundary, set by whether the loss function is affine in the measured statistics, beyond which standard techniques for analysing the scalability of variational quantum algorithms fail. This research establishes that an objective’s representability with fixed observables depends on exactly this affinity, resolving a long-standing question regarding the applicability of existing proofs. The work builds upon established results concerning barren plateaus and gradient suppression, extending the linear proof machinery to cover specific non-linear losses like divergences and risk functionals.

Both amplification-capable and bounded-gradient loss functions ultimately fail in exponentially wide settings, albeit for differing structural reasons, and the numerical demonstration was limited to a specific charge-conserving quantum system, so the results may not generalise to all quantum systems. Establishing a definitive boundary for when standard analytical techniques fail marks a shift from case-by-case analysis to a universal understanding of gradient behaviour in variational quantum algorithms. Identifying this ‘affine’ boundary reveals a representation-design problem: the focus can now shift to crafting objectives that actively propagate signal during optimisation, rather than simply accepting signal loss.

The research shows that whether standard scalability arguments for variational quantum algorithms apply is governed by a precise mathematical boundary: whether the loss is affine in the measured statistics. How effectively these algorithms can be trained therefore depends on how the loss function relates to the measured statistics, offering a universal understanding of gradient behaviour. The scientists found that both amplification-capable and bounded-gradient loss functions ultimately fail in exponentially wide settings, though for different reasons. The study highlights a “representation-design problem”, suggesting that optimising the design of the measurement interface and loss function may improve training.

👉 More information

🗞 Trainability Beyond Linearity in Variational Quantum Objectives

🧠 arXiv: https://arxiv.org/abs/2604.18846