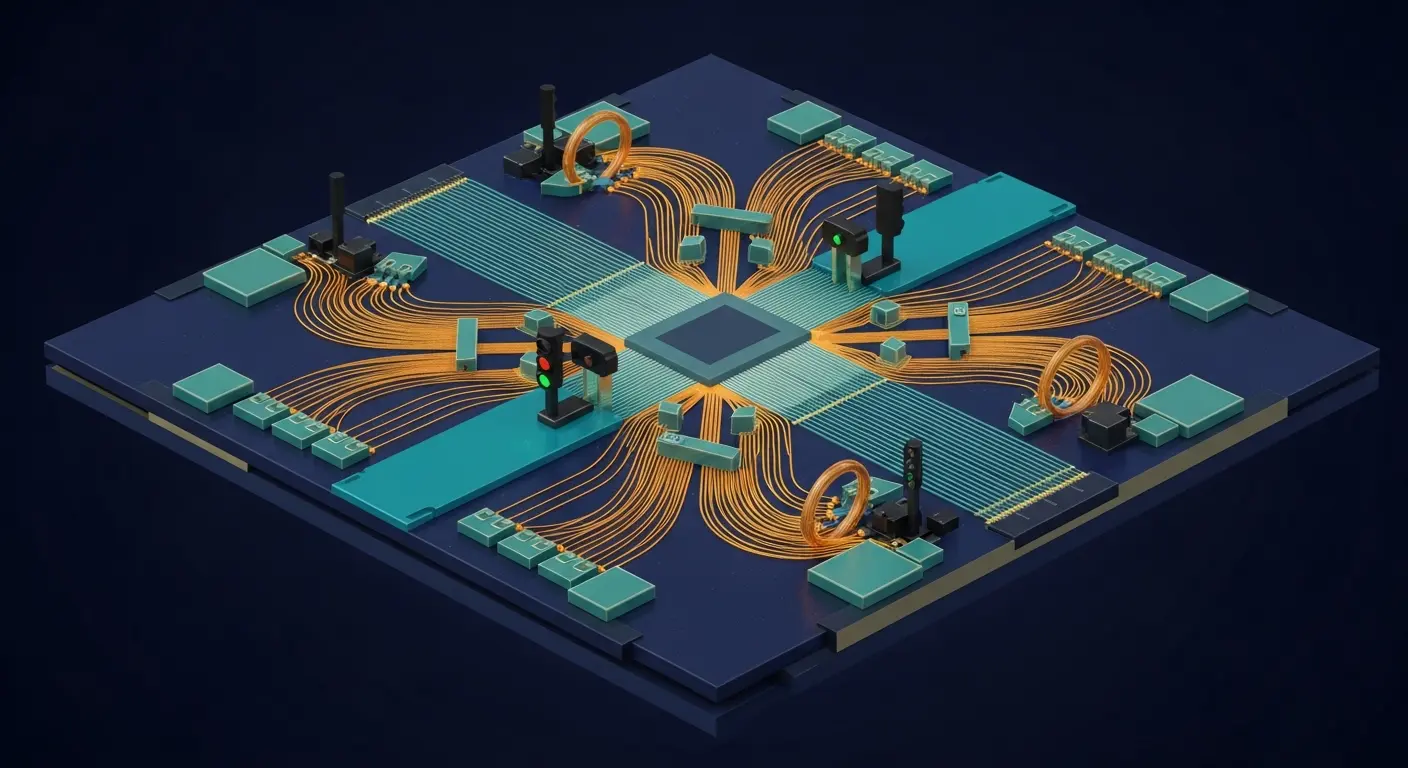

Scientists at the University of Copenhagen, in collaboration with the Niels Bohr Institute, have demonstrated a photon-sorting circuit that uses a solid-state quantum emitter, opening a route towards near-deterministic photon-photon gates. Kasper H. Nielsen and colleagues built a passive circuit that addresses a key limitation of Bell state measurements based on linear optics, which are probabilistic and carry considerable hardware overheads. The circuit sorts single- and two-photon components with 62% success probability and enables Bell state measurements with a 57% post-selected success probability, exceeding the 50% linear-optical limit. This is a significant step towards scalable, fault-tolerant photonic quantum technologies.

Novel photon sorting surpasses 50% limit in Bell state measurement

A new photon-sorting circuit, which sorts photon components with 62% success, enables Bell state measurements that exceed the conventional 50% limit of linear optical methods. The result addresses a long-standing barrier in quantum photonics that has hindered the development of scalable and fault-tolerant quantum technologies. The device uses a solid-state quantum emitter in a nanophotonic waveguide to separate incoming light into its single-photon and two-photon components and direct them into distinct pathways; this control allows more reliable Bell state measurements, which are crucial for both quantum computing and communication. Bell state measurements (BSMs) are fundamental operations in quantum information processing, underpinning quantum teleportation, superdense coding, and universal quantum computation. Traditional implementations based on linear optics are inherently probabilistic: without ancillary photons they succeed at most half the time, so failed attempts must be repeated using complex, resource-intensive schemes.
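To see where the 50% figure comes from, the sketch below simulates the textbook ancilla-free linear-optical BSM: two polarisation-encoded photons meet on a 50:50 beam splitter and each output port is analysed in the H/V basis. This is the standard linear-optics scheme, not the authors' emitter-based circuit; the polarisation encoding, the beam-splitter sign convention, and all function and variable names are assumptions made for illustration.

```python
import math
from collections import defaultdict
from itertools import product

R = 1 / math.sqrt(2)  # amplitude of a 50:50 beam splitter

def beam_splitter(mode):
    """Map an input mode (port, polarisation) to output modes with amplitudes.

    Assumed convention: a -> (c + d)/sqrt(2), b -> (c - d)/sqrt(2);
    the beam splitter leaves polarisation unchanged.
    """
    port, pol = mode
    sign = 1 if port == "a" else -1
    return [(("c", pol), R), (("d", pol), sign * R)]

# Bell states as {(mode_1, mode_2): amplitude}, one photon in input a and one in b.
BELL = {
    "Phi+": {(("a", "H"), ("b", "H")): R, (("a", "V"), ("b", "V")): R},
    "Phi-": {(("a", "H"), ("b", "H")): R, (("a", "V"), ("b", "V")): -R},
    "Psi+": {(("a", "H"), ("b", "V")): R, (("a", "V"), ("b", "H")): R},
    "Psi-": {(("a", "H"), ("b", "V")): R, (("a", "V"), ("b", "H")): -R},
}

def click_distribution(state):
    """Probability of each detection pattern after the beam splitter and H/V analysis."""
    amplitudes = defaultdict(float)
    for (m1, m2), amp in state.items():
        for (o1, c1), (o2, c2) in product(beam_splitter(m1), beam_splitter(m2)):
            # Photon creation operators commute, so each pair is stored in sorted order.
            amplitudes[tuple(sorted((o1, o2)))] += amp * c1 * c2
    probs = {}
    for (o1, o2), amp in amplitudes.items():
        # Two photons in one mode: (c^dagger)^2 |0> = sqrt(2) |2>, so the weight is 2.
        p = amp ** 2 * (2 if o1 == o2 else 1)
        if p > 1e-12:  # drop patterns cancelled by destructive interference
            probs[(o1, o2)] = p
    return probs

def unambiguous_success(dists):
    """Success probability, with equal priors, of naming the Bell state with certainty."""
    total = 0.0
    for name, dist in dists.items():
        for pattern, p in dist.items():
            # A pattern identifies the state only if no other Bell state can produce it.
            if not any(pattern in other for n, other in dists.items() if n != name):
                total += p / len(dists)
    return total

dists = {name: click_distribution(state) for name, state in BELL.items()}
for name, dist in dists.items():
    print(name, sorted(dist))
print("unambiguous success:", round(unambiguous_success(dists), 6))  # 0.5
```

The two Ψ states produce distinct click patterns, while Φ+ and Φ− produce identical ones, so only half of the Bell states can be identified with certainty. No ancilla-free linear-optical scheme can beat this 50%, which is the limit an emitter-based circuit is designed to bypass.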

At the University of Copenhagen, researchers have achieved a 57% post-selected success probability for Bell state measurements, exceeding the 50% limit of traditional linear optics without the additional "ancillary" photons that otherwise complicate such systems. A semiconductor quantum dot, acting as a solid-state quantum emitter, is embedded in a nanophotonic waveguide and sorts the incoming light, directing single-photon and two-photon components into separate outputs and making the measurement more reliable.

The nanophotonic waveguide, fabricated with advanced lithographic techniques, confines and guides the photons, strengthening their interaction with the quantum dot. The quantum dot, a nanoscale semiconductor crystal, behaves as an artificial atom with strong quantum properties. A photon that meets the quantum dot can be transmitted, reflected, or absorbed, depending on its energy and polarisation, so by tuning the quantum dot's energy levels and the photons' polarisation, the researchers can route single-photon and two-photon components to different outputs.

The team confirmed unambiguous photon-sorting behaviour by directly analysing photon-number distributions with single-photon detectors and coincidence counting, a step missing from previous experiments that relied on indirect indicators. Theoretical modelling suggests further optimisation could push performance beyond 65%, which would reduce the hardware demands of complex quantum computing architectures and improve their tolerance to photon loss, which in some systems is currently limited to losses below 0.8%. Reducing photon loss is critical, because each lost photon introduces an error into the quantum computation, degrading its accuracy and reliability.
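The article does not say which statistic the team extracted from its coincidence counts. One standard figure of merit for photon-number content is the zero-delay second-order correlation g2(0), measured by splitting light onto two single-photon detectors (a Hanbury Brown and Twiss setup). The sketch below assumes continuous-wave detection; the function name and the counts are hypothetical and synthetic, not data from the paper. It divides the zero-delay coincidences by the number expected for uncorrelated light: values below 1 indicate suppressed two-photon content, values above 1 indicate enhanced two-photon content.

```python
def g2_zero(coincidences: int, counts_1: int, counts_2: int,
            window_s: float, duration_s: float) -> float:
    """Estimate g2(0) from a continuous-wave Hanbury Brown and Twiss measurement.

    coincidences: detection pairs within a window of width window_s around zero delay.
    counts_1, counts_2: singles counts on each detector over duration_s seconds.
    """
    # Singles rates on each detector.
    rate_1 = counts_1 / duration_s
    rate_2 = counts_2 / duration_s
    # Coincidences expected in the same window if detections were uncorrelated.
    accidental = rate_1 * rate_2 * window_s * duration_s
    if accidental <= 0:
        raise ValueError("need non-zero singles counts, window and duration")
    return coincidences / accidental

# Synthetic counts for two hypothetical output ports (not data from the paper).
single_port = g2_zero(coincidences=20, counts_1=1_000_000, counts_2=1_000_000,
                      window_s=1e-9, duration_s=10.0)
pair_port = g2_zero(coincidences=250, counts_1=1_000_000, counts_2=1_000_000,
                    window_s=1e-9, duration_s=10.0)
print(f"single-photon port g2(0) = {single_port:.2f}")  # 0.20
print(f"two-photon port g2(0) = {pair_port:.2f}")  # 2.50
```

In pulsed experiments the same idea is usually applied by dividing the zero-delay coincidence peak by the average of the neighbouring side peaks.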

Reliance on post-selection remains a scalability challenge

Reliable methods for creating and verifying entanglement, the strange quantum link between particles, are essential for advancing quantum technologies, and this work offers a promising route towards more efficient Bell state measurements for both quantum computing and secure long-distance quantum communication. The ability to generate and manipulate entangled photons underpins many quantum protocols, enabling tasks that are impossible with classical systems. Entanglement-based quantum key distribution, for example, uses shared entangled photons to establish encryption keys whose security rests on the laws of quantum physics rather than on computational difficulty. However, the reported success rates, although higher than those of traditional linear optical systems, are currently "post-selected": they are calculated only from instances in which the sorting worked correctly.

Calculating success only from correctly sorted instances limits the usefulness of the result for real-world applications. A truly scalable technology must perform reliably on every attempt, not only on those that pass a selection step, and the method does not yet fully address the noise inherent in quantum systems. Post-selection discards every instance in which photon sorting fails, reducing the overall event rate and increasing experimental overhead, so although the 62% sorting success is a significant improvement, the rate of successful Bell state measurements across all attempts is lower.

Even so, this demonstration of a photon-sorting circuit built from a solid-state quantum emitter in a nanophotonic waveguide marks a shift away from purely probabilistic methods for measuring entanglement. By separating single- and two-photon components with 62% success, it enables Bell state measurements that exceed the 50% linear-optical limit, and it establishes a pathway towards lower hardware demands and greater robustness to photon loss. Future research will focus on optimising the interaction between emitter and waveguide, eliminating the need for post-selection through more robust quantum emitters and waveguide designs, and integrating the photon-sorting circuit into larger, more complex quantum photonic circuits, paving the way for practical, scalable quantum computers and communication networks.
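The gap between post-selected and overall performance comes down to simple arithmetic: the success rate per attempt is the fraction of attempts that are kept multiplied by the post-selected success probability. The sketch below is a deliberately simplified model, not the authors' analysis. It uses the article's 57% post-selected figure with a hypothetical acceptance fraction (the article does not report one), and treats the projected 65% as a BSM success probability, which is an assumption. It computes the overall rate, the acceptance fraction needed to match the unconditional 50% linear-optical benchmark, and how many attempts are needed when several measurements must all succeed together. Function names are illustrative.

```python
LINEAR_OPTICS_LIMIT = 0.5  # ancilla-free linear-optical BSM success probability
POST_SELECTED_BSM = 0.57   # post-selected success probability reported in the article
PROJECTED_BSM = 0.65       # article's projected figure, assumed here to be a BSM success probability

def overall_success(p_conditional: float, p_accept: float) -> float:
    """Success per attempt when only a fraction p_accept of attempts is kept."""
    return p_conditional * p_accept

def break_even_acceptance(p_conditional: float,
                          benchmark: float = LINEAR_OPTICS_LIMIT) -> float:
    """Smallest acceptance fraction at which overall success reaches the benchmark."""
    return benchmark / p_conditional

def expected_attempts(p_success: float, n_measurements: int) -> float:
    """Mean tries until n independent BSMs all succeed in the same attempt.

    Assumes independent attempts with no multiplexing or quantum memory,
    so the number of tries is geometric with success probability p**n.
    """
    return 1.0 / p_success ** n_measurements

# Hypothetical acceptance fraction, chosen only for illustration (not from the paper).
p_accept = 0.8
print(f"overall success: {overall_success(POST_SELECTED_BSM, p_accept):.3f}")
print(f"acceptance needed to match 50%: {break_even_acceptance(POST_SELECTED_BSM):.3f}")
for p in (LINEAR_OPTICS_LIMIT, POST_SELECTED_BSM, PROJECTED_BSM):
    print(f"p = {p:.2f}: {expected_attempts(p, 4):.1f} attempts for 4 joint successes")
```

Under these assumptions, if fewer than about 88% of attempts survive post-selection, the overall success rate falls back below the 50% linear-optical benchmark. This is why removing post-selection matters as much as raising the conditional probability, while the compounding in `expected_attempts` shows why each percentage point counts once many measurements are chained.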

The researchers demonstrated a photon-sorting circuit that separates single- and two-photon components with 62% success. Using a solid-state quantum emitter in a nanophotonic waveguide, the system enables Bell state measurements with a 57% post-selected success probability, above the 50% limit that fundamentally constrains linear-optical Bell state measurements, and so moves beyond purely probabilistic methods. The authors intend to eliminate the need for post-selection by optimising the interaction between emitter and waveguide, potentially leading to more robust quantum systems.

👉 More information

🗞 Photon Sorting with a Quantum Emitter

🧠 ArXiv: https://arxiv.org/abs/2604.21758