Scientists at the Institute of Fundamental Physics (IFF-CSIC) have developed a technique that uses quantized tensor-network methods to compress characteristic functions, the mathematical tools that fully describe probability distributions. Juan José Rodríguez-Aldavero and Juan José García-Ripoll demonstrate up to exponential compression of complex, non-Gaussian distributions arising from weighted sums of independent random variables. The work reveals a sharp bond-dimension collapse in certain scenarios, enabling polylogarithmic scaling of both time and memory requirements. They also achieved high-resolution discretizations reaching ![]() frequency modes, beyond the reach of traditional dense implementations, which opens the way to efficient computation of risk measures such as Value at Risk and Expected Shortfall, with applications in quantitative finance and other fields.

High-resolution distribution modelling via compressed spectral decomposition

Discretizations of the characteristic function for weighted sums of independent random variables now reach ![]() frequency modes, a substantial leap beyond the previous limit of ![]() achievable with dense implementations. This addresses a critical bottleneck in accurately modelling complex, non-Gaussian distributions, which are prevalent in scientific and financial applications. Combining Fourier spectral methods with quantized tensor trains allows a more detailed and precise representation of these distributions. The resulting exponential compression brings polylogarithmic scaling of both time and memory to problems that previously required computationally expensive techniques such as extensive Monte Carlo simulation, or simplifying Gaussian approximations that often sacrifice accuracy.
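As a rough illustration of why the compression matters, the storage costs of the two representations can be compared. The symbols $N$, $n$, $r_k$ and $\chi$ are notation assumed here rather than taken from the paper, and the bound is the standard parameter count for a tensor train whose physical indices take two values.

$$
\underbrace{N = 2^{n}}_{\text{dense: one complex number per mode}}
\qquad\text{versus}\qquad
\underbrace{\sum_{k=1}^{n} 2\, r_{k-1}\, r_{k}}_{\text{quantized tensor train}}
\;\le\; 2\, n\, \chi^{2} \;=\; 2\, \chi^{2} \log_2 N ,
\qquad r_0 = r_n = 1,\quad \chi = \max_k r_k .
$$

If the largest bond dimension $\chi$ stays bounded, or grows only polylogarithmically, as $N$ increases, the memory footprint is polylogarithmic in $N$ instead of linear.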

The Fourier spectral method decomposes the characteristic function into its frequency components, while the quantized tensor train stores the resulting data compactly, minimising storage and computational demands. The method exploits a low-rank structure within the characteristic function, a mathematical fingerprint of the probability distribution. For weighted sums of Bernoulli random variables, a sharp bond-dimension collapse was observed once the sum contained 300 or more components, indicating a significant reduction in the complexity of the representation. This compression gives polylogarithmic scaling of both computation time and memory usage, which in turn makes it possible to compute financial risk metrics such as Value at Risk and Expected Shortfall efficiently. These metrics are central to assessing and managing financial risk. The technique was applied to weighted sums of Bernoulli and lognormal random variables, exceeding the ![]() limit of conventional dense methods and opening possibilities for more complex simulations in fields such as physics, engineering, and climate modelling. Bernoulli and lognormal variables make a useful initial test case because they cover common discrete and continuous scenarios in statistical modelling.
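For reference, the dense Fourier baseline that the compressed method is compared against can be sketched in a few lines. This is a minimal illustration, not the authors' tensor-network algorithm: it assumes integer weights so that the sum lives on a lattice, uses hypothetical function names (`bernoulli_sum_pmf`, `var_and_es`), and adopts one simple tail-average convention for Expected Shortfall.

```python
import numpy as np


def bernoulli_sum_pmf(weights, probs):
    """Dense pmf of S = sum_k w_k X_k with X_k ~ Bernoulli(p_k).

    Assumption: weights are non-negative integers, so S takes values
    on the lattice 0, 1, ..., sum(weights).
    """
    weights = np.asarray(weights, dtype=np.int64)
    probs = np.asarray(probs, dtype=float)
    total = int(weights.sum())
    n_modes = 1 << total.bit_length()  # power of two, at least total + 1

    # Frequencies t_j = 2*pi*j / N at which the characteristic function is sampled.
    t = 2.0 * np.pi * np.arange(n_modes) / n_modes

    # Independence: the characteristic function is a product of local factors.
    phi = np.ones(n_modes, dtype=complex)
    for w, p in zip(weights, probs):
        phi *= (1.0 - p) + p * np.exp(1j * t * w)

    # Inverting the samples: pmf[s] = (1/N) * sum_j phi[j] * exp(-i t_j s).
    pmf = np.fft.fft(phi).real / n_modes
    return np.clip(pmf, 0.0, None)  # remove tiny negative round-off


def var_and_es(pmf, alpha):
    """Value at Risk and a simple tail-average Expected Shortfall.

    VaR is the smallest s with P(S <= s) >= alpha; ES is taken here as
    E[S | S >= VaR], which is one of several conventions in use.
    """
    cdf = np.cumsum(pmf)
    var = min(int(np.searchsorted(cdf, alpha)), len(pmf) - 1)
    tail = pmf[var:]
    es = float(np.dot(np.arange(var, len(pmf)), tail) / tail.sum())
    return var, es
```

Because the dense vector `phi` holds one entry per frequency mode, memory grows linearly with the grid size, which is the cost the quantized tensor train representation is meant to avoid.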

Quantized tensor trains efficiently model probability distributions of independent random variables

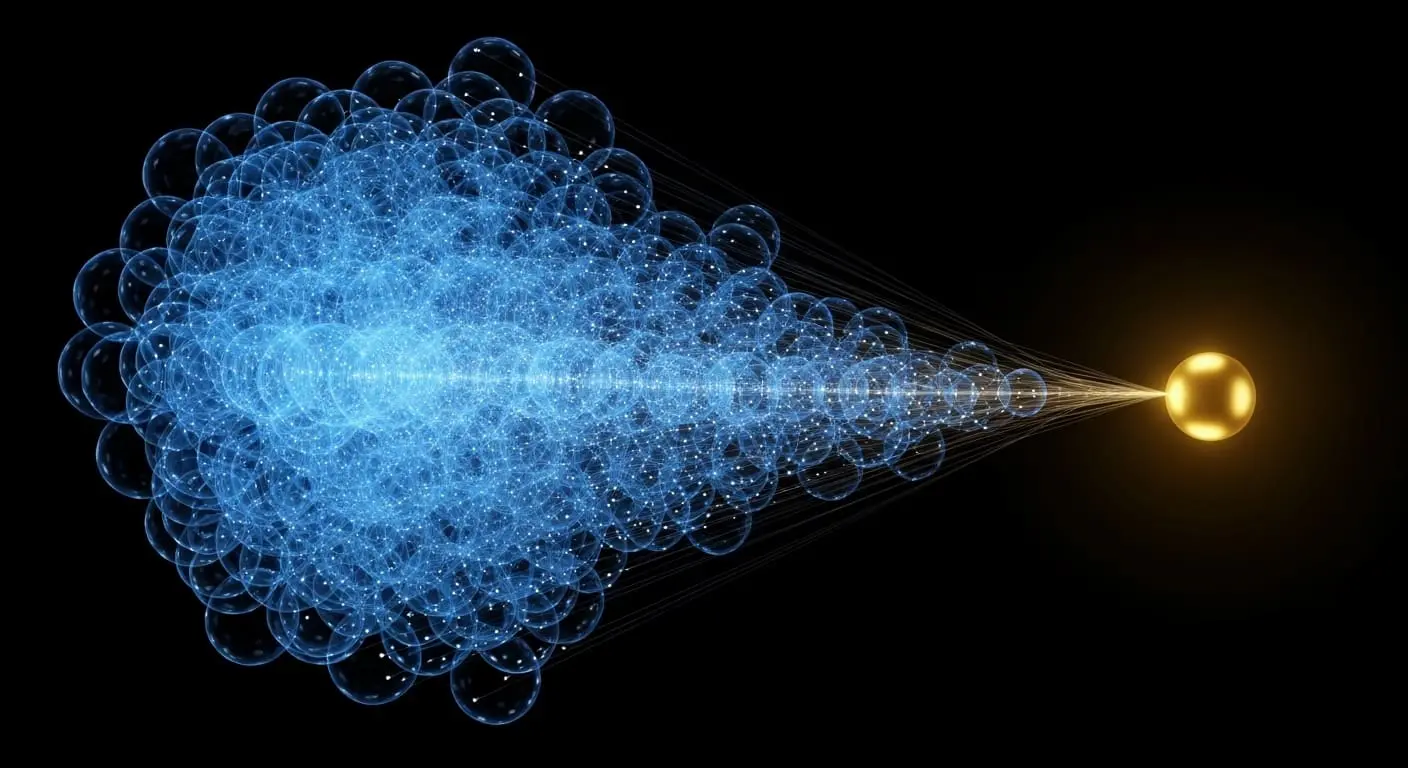

Quantized tensor trains, a form of the matrix product states used in quantum physics, represent a large array of values as a chain of small, interconnected tensors, much as image compression keeps only the information needed to reconstruct a picture. This approach exploits the inherent structure within the ‘characteristic function’ of weighted sums of independent random variables, effectively a mathematical fingerprint identifying the distribution’s shape. The characteristic function completely defines the probability distribution, so representing it compactly is key to computational efficiency. Because the variables are independent, the characteristic function of the sum factorizes into a product of local terms, one per variable. The algorithm therefore builds the fingerprint by multiplying these simpler local characteristic functions one after another within the tensor network. When the bond dimension stays small, this sequential construction keeps time and memory requirements polylogarithmic in the number of frequency modes.
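The factorization into local terms can be written out explicitly. The notation ($S$, $w_k$, $X_k$, $p_k$, $D$) is assumed here for illustration; the Bernoulli formula is the standard characteristic function of a variable that equals 1 with probability $p_k$ and 0 otherwise.

$$
S=\sum_{k=1}^{D} w_k X_k,
\qquad
\varphi_S(t)=\mathbb{E}\!\left[e^{itS}\right]=\prod_{k=1}^{D}\varphi_{X_k}(w_k t),
\qquad
\varphi_{X_k}(w_k t)=1-p_k+p_k\,e^{i w_k t}\quad\text{(Bernoulli)} .
$$

The middle equality holds only because the $X_k$ are independent, which is why the expectation of the product splits into a product of expectations.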

In the Bernoulli case, the bond dimension, and with it the computational cost, dropped sharply once the sum contained 300 or more components. This suggests the method is particularly effective for sums of many independent components. The approach was chosen to overcome limitations of Monte Carlo sampling and dense Fourier inversion, which struggle with accuracy and scalability, particularly in high-dimensional spaces. Monte Carlo methods require many samples to achieve accurate results, while dense Fourier inversion becomes expensive in time and memory as the grid grows. Discretizations of up to ![]() frequency modes were achieved using lognormal variables, demonstrating the method’s potential for high-resolution modelling. This level of resolution captures subtle features of the distribution, which can be crucial for accurate predictions and risk assessment. The term ‘quantized’ refers to how the grid is indexed: each grid point is labelled by the binary digits of its index, so a vector of 2^n values becomes a tensor with n two-valued indices that a tensor train can compress. It does not mean that the numerical precision of the tensor elements is reduced.
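To make the notion of bond dimension concrete, the sketch below reshapes a vector of 2^n samples into n two-valued indices and measures the ranks found by successive truncated SVDs. It is an illustrative dense procedure under assumed names (`qtt_bond_dimensions`, `tol`), not the paper's algorithm; note that it forms the full vector, which a compressed method is designed to avoid.

```python
import numpy as np


def qtt_bond_dimensions(values, tol=1e-10):
    """Bond dimensions of a quantized tensor train for a length-2**n vector.

    The vector index is split into its n binary digits (most significant
    first) and the ranks are found by a left-to-right sweep of truncated
    SVDs. `tol` is a relative singular-value cutoff (an assumed default).
    """
    values = np.asarray(values)
    n_bits = values.size.bit_length() - 1
    if values.size != 1 << n_bits:
        raise ValueError("length must be a power of two")

    ranks = []
    rank = 1
    rest = values.reshape(1, -1)  # shape: (left bond, remaining indices)
    for _ in range(n_bits - 1):
        # Move one binary digit from the remaining indices to the row index.
        rest = rest.reshape(rank * 2, -1)
        _, s, vh = np.linalg.svd(rest, full_matrices=False)
        # Keep singular values above the relative cutoff (at least one).
        rank = max(1, int(np.sum(s > tol * s[0])))
        ranks.append(rank)
        # Carry the truncated remainder on to the next digit.
        rest = s[:rank, None] * vh[:rank]
    return ranks
```

Applied to a single complex exponential sampled on a uniform grid, every bond dimension comes out as 1, and a single Bernoulli factor of the form `(1 - p) + p * np.exp(1j * w * t)` needs at most 2. A vector with no exploitable structure instead produces ranks that grow towards the middle of the chain.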

Compressing characteristic functions accelerates probabilistic modelling of financial systems

Accurately representing probability distributions is often crucial for modelling complex systems, and the new method is a significant step forward in that capacity, with implications for fields including finance, physics, and engineering. However, the scientists acknowledge that their algorithm has so far been tested only on weighted sums of Bernoulli and lognormal random variables, which raises questions about its broader applicability. While these are important test cases, further research is needed to determine whether the technique generalises to distributions with more intricate dependencies, or whether unforeseen challenges emerge for other families of distributions. Investigating its performance on other distributions, such as those with heavy tails or multimodal shapes, is a crucial next step.

Even with the limited scope of testing to date, this advance in representing probability distributions remains valuable. Characteristic functions are often computationally expensive to process, and the work demonstrates a method for compressing them, enabling faster calculations for complex financial modelling. By combining Fourier spectral methods with quantized tensor networks, the scientists achieved up to exponential compression of these distributions, overcoming limitations inherent in traditional dense approaches. This could unlock more complex simulations across diverse scientific fields and enable calculation of risk measures such as Value at Risk far more efficiently than previously possible. Faster probabilistic modelling could in turn lead to more accurate predictions, improved risk management, and a deeper understanding of complex systems.

The research demonstrated that characteristic functions of weighted sums of independent random variables can be compressed using a quantized tensor train representation, achieving up to exponential reduction in computational demand. This matters because accurate modelling of complex probability distributions, like those used in financial risk assessment, is often limited by processing power and memory; the technique eases those limits, allowing calculations on grids with up to ![]() frequency modes. The scientists applied it to Bernoulli and lognormal variables, enabling efficient computation of Value at Risk and Expected Shortfall. Future work will focus on testing the algorithm’s performance with a wider range of distributions to determine its broader applicability.

👉 More information

🗞 High-Resolution Tensor-Network Fourier Methods for Exponentially Compressed Non-Gaussian Aggregate Distributions

🧠 ArXiv: https://arxiv.org/abs/2603.23106