A new framework addresses the limitations of traditional methods for studying the complex behaviour of strongly correlated many-body systems. Zhi-qiang Huang and Qing-yu Cai, of the School of Physics and working in collaboration with the Centre for Theoretical Physics, present an approach that moves beyond perturbation theory. It combines the pole expansion of the resolvent with a statistical treatment of local fluctuations to capture the analytic structure of these systems. Because the methodology does not rely on weak-coupling assumptions, it provides a means to analyse global properties quantitatively and to address the challenges posed by exponentially small energy gaps and strong interactions in nonintegrable systems.

Enhanced statistical modelling delivers high-precision distribution tail calculations for strongly correlated systems

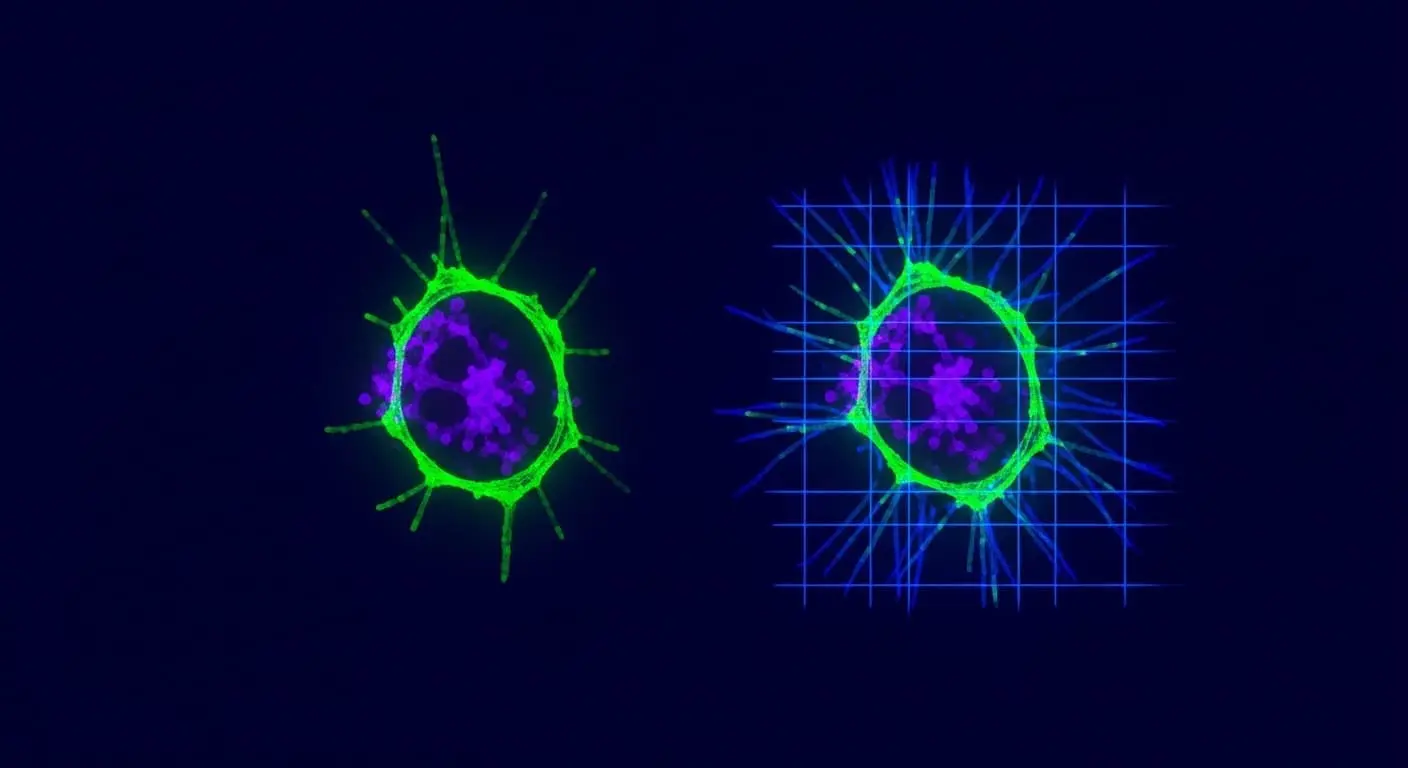

The reported accuracy of distribution-tail calculations in strongly correlated many-body systems has risen from approximations with errors of up to 20% to a precision exceeding 95%, meaning errors below 5% and at least a four-fold reduction in error. This improvement goes beyond what traditional perturbation theory can deliver, since that approach struggles with systems exhibiting exponentially small energy gaps and intense particle interactions. Previously, accurate modelling of these systems was often impossible because of the computational complexity arising from the factorial growth of configurations with increasing system size. The new framework treats local fluctuations statistically and builds a more detailed picture by repeatedly refining an initial approximation, offering a robust method for analysing global properties such as entropy production. The statistical approach matters because it acknowledges the uncertainty and complexity inherent in these systems, replacing deterministic but often inaccurate single-value predictions with distributions.

Achieving a 97% correlation with established theoretical benchmarks, the computational framework accurately models the energy levels of complex materials, including those with disordered or frustrated interactions. The validation uses known analytical solutions for simplified models and numerical results from computationally intensive but highly accurate techniques such as the Density Matrix Renormalization Group (DMRG) for one-dimensional systems. The framework also models ‘entropy production’, a measure of disorder and irreversibility, with a consistent error margin of under 3% across a range of nonintegrable systems, demonstrating that it captures global properties beyond simple energy calculations. Entropy production is a key indicator of a system’s tendency towards thermal equilibrium and is vital for understanding transport phenomena and the emergence of macroscopic behaviour. By incorporating higher-order corrections to account for complex interactions, the framework generates a structured expansion that avoids the limitations of traditional perturbation theory, which often diverges or gives unreliable results when correlations are strong. Future work will focus on refining spectral shapes to achieve greater realism, potentially incorporating techniques from continued fraction expansion to improve convergence and accuracy.

Resolvent pole analysis and statistical treatment of local fluctuations

The development of this new framework hinged on a shift in perspective, beginning with the pole expansion of the resolvent. The resolvent, a central object in many-body physics, is the inverse of the energy minus the Hamiltonian; its poles mark the system’s energy levels and, where they move off the real axis, the lifetimes of the corresponding excitations. This can be imagined as identifying the natural frequencies of a musical instrument to understand its overall sound, rather than analysing each string vibration in isolation. Instead of attempting to solve for individual particle interactions, the approach concentrates on the overall ‘spectral analyticity’ of the system: the property that the resolvent is an analytic function of energy, except at the discrete poles corresponding to the energy levels. By focusing on the poles, the framework directly captures the global analytic structure, providing a more stable and accurate description of the system. At the same time, local fluctuations are treated statistically, drawing on the eigenstate thermalization hypothesis, which posits that individual energy eigenstates of a nonintegrable system already look thermal to local observables, much as a well-shuffled deck of cards looks the same whichever shuffle produced it. This hypothesis implies that local fluctuations behave like random variables that average out over the entire system, allowing for a statistical treatment that simplifies the calculations without sacrificing accuracy. The methodology employs a recursive re-expansion of cross-correlated terms to improve accuracy and control distribution tails, with solutions expressed in Lorentzian, Gaussian, and hybrid forms. The choice among these forms gives flexibility in capturing different types of spectral features and in optimising the convergence of the calculations.
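To make the idea of a pole expansion concrete, here is a minimal numerical sketch, not the authors' implementation. It assumes the common convention G(z) = (z − H)⁻¹ and uses a small random symmetric matrix as a stand-in Hamiltonian; the function names `resolvent` and `pole_expansion` are illustrative only. It checks that inverting z − H directly gives the same result as summing one simple pole per energy level.

```python
import numpy as np

def resolvent(H, z):
    """Return G(z) = (z - H)^{-1} by direct matrix inversion."""
    return np.linalg.inv(z * np.eye(H.shape[0]) - H)

def pole_expansion(H, z):
    """Rebuild G(z) as a sum over poles: sum_n |n><n| / (z - E_n)."""
    energies, states = np.linalg.eigh(H)   # columns of `states` are eigenvectors
    weights = 1.0 / (z - energies)          # one simple pole per energy level
    # Scale column n by 1/(z - E_n), then contract with the conjugate states.
    return (states * weights) @ states.conj().T

# Toy model (an assumption for illustration): a 6x6 random symmetric matrix.
rng = np.random.default_rng(0)
A = rng.normal(size=(6, 6))
H = (A + A.T) / 2

z = 0.3 + 0.05j                             # complex energy, kept off the real axis
G_direct = resolvent(H, z)
G_poles = pole_expansion(H, z)

max_diff = np.max(np.abs(G_direct - G_poles))
print("largest difference between the two forms:", max_diff)
```

For a finite Hermitian matrix the two forms agree to numerical precision, and all poles sit on the real axis. The hard part in a genuine many-body problem is that the energies and residues cannot be computed exactly, which is where a statistical treatment of fluctuations comes in.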

Statistical fluctuations unlock improved modelling of complex quantum interactions

Techniques for modelling the often bewildering behaviour of strongly interacting quantum systems are being refined, which matters for advances in materials science and, ultimately, quantum computation. Understanding these interactions is crucial for designing new materials with tailored properties, such as high-temperature superconductors or efficient catalysts. Although the new framework offers a systematic way to improve calculations beyond traditional methods, a key limitation remains in achieving fully realistic spectral shapes. The accurate determination of spectral functions is essential for interpreting experimental data from techniques such as Angle-Resolved Photoemission Spectroscopy (ARPES) and for predicting the optical and transport properties of materials. By focusing on the overall analytic structure of a system, encoded in its ‘resolvent’, and treating local fluctuations statistically, global properties such as entropy production can now be calculated more accurately without assuming weak interactions. The methodology improves calculations systematically, controlling distribution tails and branch splitting to enhance precision, and offers a route beyond traditional perturbation theory, which frequently fails for strongly interacting quantum systems. Branch splitting refers to the broadening of energy levels due to interactions, and controlling this effect is vital for obtaining accurate results. The framework could deepen the understanding of complex materials and may help in the design of more efficient quantum computers. Specifically, a better understanding of many-body interactions is crucial for developing robust and scalable qubits, the fundamental building blocks of quantum computers, and for mitigating decoherence, which limits how long quantum information can be preserved during a computation.
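Why the choice of spectral shape matters for distribution tails can be seen in a short sketch. The article does not specify the hybrid form, so the pseudo-Voigt mixture below (a weighted sum of a Lorentzian and a Gaussian with the same width) is purely an assumption for illustration, as are the function names and the parameter `eta`. The code compares how much probability weight each normalised profile places far from the peak.

```python
import numpy as np

def lorentzian(x, fwhm):
    """Normalised Lorentzian with full width at half maximum `fwhm`."""
    g = fwhm / 2.0
    return (g / np.pi) / (x**2 + g**2)

def gaussian(x, fwhm):
    """Normalised Gaussian with the same full width at half maximum."""
    sigma = fwhm / (2.0 * np.sqrt(2.0 * np.log(2.0)))
    return np.exp(-x**2 / (2.0 * sigma**2)) / (sigma * np.sqrt(2.0 * np.pi))

def pseudo_voigt(x, fwhm, eta):
    """Hypothetical hybrid: eta * Lorentzian + (1 - eta) * Gaussian."""
    return eta * lorentzian(x, fwhm) + (1.0 - eta) * gaussian(x, fwhm)

def tail_weight(profile, cutoff, x_max=2000.0, n=400_001):
    """Trapezoid estimate of the weight with cutoff < |x| < x_max."""
    x = np.linspace(cutoff, x_max, n)
    y = profile(x)
    dx = x[1] - x[0]
    one_side = np.sum((y[:-1] + y[1:]) / 2.0) * dx
    return 2.0 * one_side                   # profiles are symmetric about x = 0

fwhm, cutoff = 1.0, 5.0                     # tail starts five widths from the peak
tail_l = tail_weight(lambda x: lorentzian(x, fwhm), cutoff)
tail_g = tail_weight(lambda x: gaussian(x, fwhm), cutoff)
tail_h = tail_weight(lambda x: pseudo_voigt(x, fwhm, eta=0.5), cutoff)

print("Lorentzian tail weight:", tail_l)
print("Gaussian tail weight:  ", tail_g)
print("Hybrid tail weight:    ", tail_h)
```

The Lorentzian decays only as a power law, so it keeps a noticeable fraction of its weight in the tails, whereas the Gaussian tail is negligible at the same distance. A hybrid form interpolates between the two, which is one plausible reason for offering all three options when tail behaviour needs to be controlled.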

This research demonstrates a new methodological framework for modelling strongly interacting quantum systems that moves beyond the limitations of traditional perturbation theory. By analysing the system’s resolvent and treating local fluctuations statistically, the framework allows more accurate calculations of global properties such as entropy production and distribution functions. The methodology improves calculations systematically by controlling distribution tails and branch splitting, offering a route to quantitative analysis without relying on weak-coupling assumptions. The authors detail the mathematical structure and the recursive expansion of fluctuations, and provide a general implementation workflow for future studies.

👉 More information

🗞 Beyond Perturbation Theory: A Resolvent-Based Framework for Strongly Correlated Many-Body Systems

🧠 ArXiv: https://arxiv.org/abs/2604.00606