A universal approximation theorem with quantifiable error bounds for quantum neural networks has been developed, representing a key advance in understanding their capabilities. Lukas Gonon of Imperial College London and colleagues rigorously show how well these networks can approximate complex functions, particularly those encountered in Quantitative Finance, where functions are frequently expressed as expectations. The work extends beyond theory, incorporating detailed numerical analysis validated through experiments on real, noisy quantum hardware.

Quantifiable error bounds enable universal approximation of noisy quantum neural networks

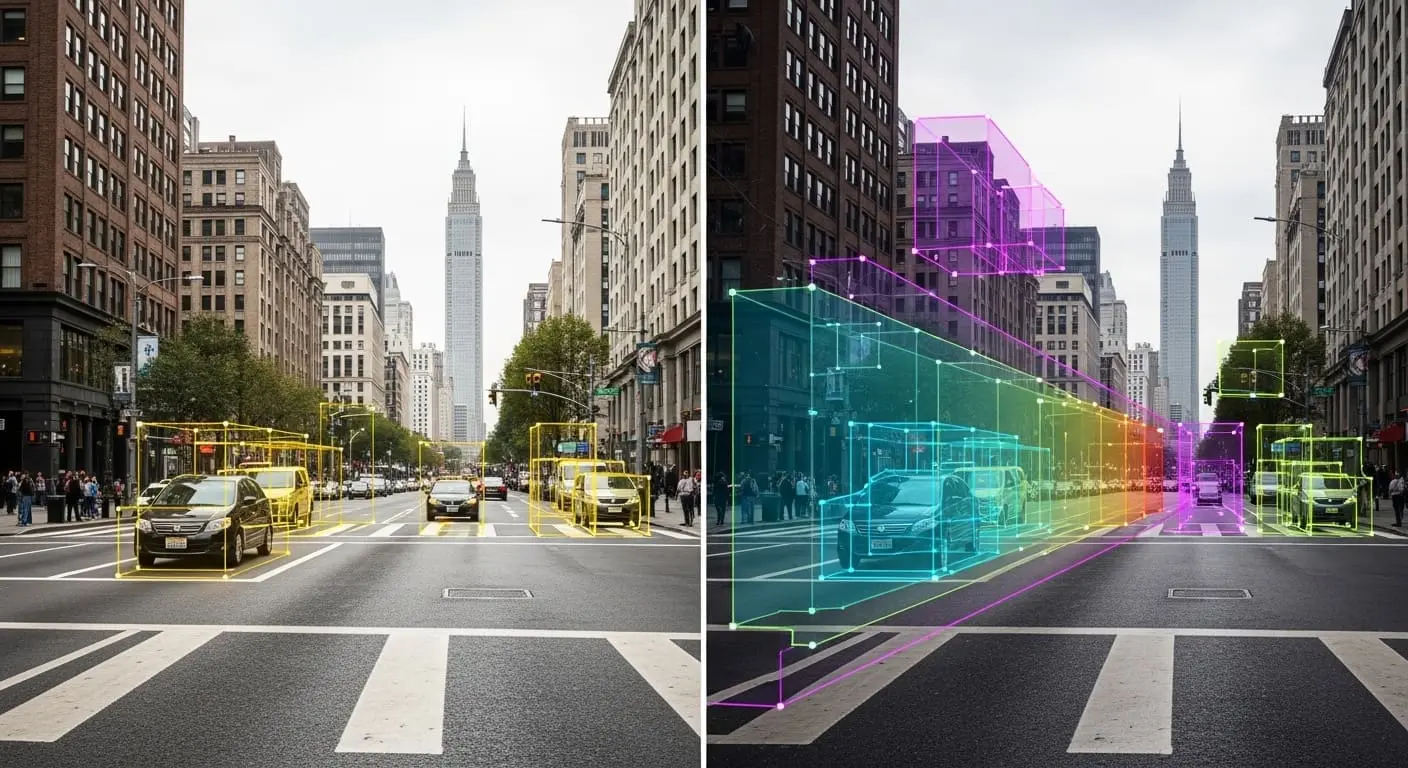

The accuracy gap between noise-free quantum neural network simulation and execution on IBMQ devices can exceed 60% on simple classification tasks, a limitation that has previously called for custom, noise-aware models. The new universal approximation theorem, with quantifiable error bounds stated specifically for noisy quantum neural networks, addresses this gap at the level of theory; earlier results did not cover it because realistic quantum errors are difficult to model. The theorem extends to functions represented as expectations, which matter for applications in Quantitative Finance, and it is supported by detailed numerical analysis on actual, noisy quantum hardware.

The team specifically modelled depolarising noise, a common error type in current quantum hardware, and developed methods to partially correct its effects, allowing a more accurate comparison between simulation and real-world execution. The new theorem builds on prior universal approximation theorems for classical neural networks, adapting them to the quantum setting and addressing the noise inherent in current devices. Detailed numerical analysis on IBMQ devices supports the theorem, showing that circuits approximating Fourier-integrable functions maintain accuracy even with realistic noise present, a key finding for practical application. However, a demonstrable speed advantage over established classical algorithms remains elusive at this stage, and current results focus on relatively simple functions.
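To make the idea of modelling and partially correcting depolarising noise concrete, here is a minimal sketch in Python with NumPy. It is not the correction method from the paper, which the article does not describe. It assumes a single global depolarising channel with a known probability `p` (a hypothetical value here), and all function names are illustrative. Under that assumption the channel maps a state ρ to (1 - p)ρ + pI/d, so the expectation of a traceless observable shrinks by the factor (1 - p) and can be rescaled afterwards.

```python
import numpy as np

def depolarise(rho, p):
    """Global depolarising channel: rho -> (1 - p) * rho + p * I / d."""
    d = rho.shape[0]
    return (1.0 - p) * rho + p * np.eye(d) / d

def expectation(rho, observable):
    """Real expectation value Tr(observable @ rho)."""
    return float(np.real(np.trace(observable @ rho)))

def correct_expectation(noisy_value, p, observable):
    """Undo the depolarising shrinkage, assuming p is known exactly."""
    d = observable.shape[0]
    offset = p * float(np.real(np.trace(observable))) / d
    return (noisy_value - offset) / (1.0 - p)

# Single-qubit state cos(theta/2)|0> + sin(theta/2)|1>, for which <Z> = cos(theta).
theta = 0.7
psi = np.array([np.cos(theta / 2), np.sin(theta / 2)])
rho = np.outer(psi, psi.conj())
pauli_z = np.array([[1.0, 0.0], [0.0, -1.0]])

p = 0.2  # hypothetical depolarising probability, chosen for illustration only
ideal = expectation(rho, pauli_z)
noisy = expectation(depolarise(rho, p), pauli_z)
recovered = correct_expectation(noisy, p, pauli_z)

print(f"ideal={ideal:.6f} noisy={noisy:.6f} recovered={recovered:.6f}")
```

In this idealised setting the rescaling recovers the noise-free value exactly. On hardware, `p` has to be estimated and expectations come from a finite number of shots, so dividing by (1 - p) also amplifies statistical error, which is one reason such corrections are only partial.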

Universal approximation of noisy quantum neural networks and limitations in validation

The universal approximation theorem, complete with quantifiable error bounds, applies specifically to quantum neural networks operating with noise. It extends existing theorems to functions defined as expectations, a common requirement in Quantitative Finance, and the authors test its predictions on real, noisy quantum hardware. They acknowledge that current hardware imposes depth budgets, that barren plateaus complicate the training of deep architectures, and that naive implementations of expressive, noiseless designs suffer performance losses, necessitating noise-aware approaches.
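As a rough illustration of what a quantifiable error bound means for Fourier-integrable functions, the following classical sketch approximates a Gaussian by an average of randomly sampled cosines. It is an analogy only, not the quantum circuit construction from the paper. It relies on the identity exp(-x²/2) = E[cos(ξx)] for ξ drawn from a standard normal distribution, so averaging `n_units` sampled cosines gives an approximation whose root-mean-square error decays like the inverse square root of `n_units`. The function names and the grid are illustrative choices.

```python
import numpy as np

def target(x):
    """Gaussian target; equals E[cos(xi * x)] for xi ~ N(0, 1)."""
    return np.exp(-0.5 * x**2)

def random_cosine_approx(x, n_units, rng):
    """Average of n_units cosines with frequencies sampled from N(0, 1)."""
    freqs = rng.standard_normal(n_units)
    return np.cos(np.outer(x, freqs)).mean(axis=1)

def rms_error(n_units, n_trials=50, seed=0):
    """Root-mean-square error over a grid, averaged over independent trials."""
    rng = np.random.default_rng(seed)
    x = np.linspace(-3.0, 3.0, 61)
    squared_errors = [
        np.mean((random_cosine_approx(x, n_units, rng) - target(x)) ** 2)
        for _ in range(n_trials)
    ]
    return float(np.sqrt(np.mean(squared_errors)))

# Each tenfold increase in units should cut the error by roughly sqrt(10).
for n_units in (10, 100, 1000):
    print(n_units, rms_error(n_units))
```

The point of a quantitative theorem is that the number of units needed for a target accuracy can be read off in advance, rather than knowing only that some sufficiently large network exists.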

They do not, however, address potential limitations related to scaling these results to larger, more complex problems or the computational cost of implementing their noise correction methods. Without noise mitigation, accuracy can decrease by over 60% on classification tasks, highlighting the necessity of noise-aware quantum neural networks, a concept explored through their theoretical framework and experimental validation. Existing quantum approximation theorems assume ideal, noiseless circuits, a condition not met by current noisy intermediate-scale quantum (NISQ) devices.

Quantitative universal approximation theorems established in prior work provide error bounds linked to circuit width, depth, and number of qubits. Computing expectations of random processes is relevant to financial modelling tasks such as option pricing. Monte Carlo simulation is frequently employed for this, and quantum algorithms may offer speed improvements, although these are constrained by current noisy intermediate-scale quantum (NISQ) devices. This work extends quantitative universal approximation theorems to expectation functions, yielding bounds that depend on the characteristics of the random variable.
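For readers unfamiliar with expectation functions in finance, the sketch below prices a European call option as a discounted expectation, once by Monte Carlo simulation and once with the Black-Scholes closed form. The Black-Scholes model and all parameter values are assumptions chosen for illustration; the article does not say which financial models the paper considers. The price, viewed as a function of inputs such as the spot price or strike, is an example of a function defined as an expectation over a random variable.

```python
import math
import numpy as np

def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def call_closed_form(s0, k, r, sigma, t):
    """Black-Scholes price of a European call option."""
    d1 = (math.log(s0 / k) + (r + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return s0 * normal_cdf(d1) - k * math.exp(-r * t) * normal_cdf(d2)

def call_monte_carlo(s0, k, r, sigma, t, n_paths, seed=0):
    """Estimate exp(-r t) * E[max(S_T - K, 0)] and its standard error."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n_paths)
    # Terminal price under geometric Brownian motion (risk-neutral measure).
    s_t = s0 * np.exp((r - 0.5 * sigma**2) * t + sigma * math.sqrt(t) * z)
    payoff = np.maximum(s_t - k, 0.0)
    discount = math.exp(-r * t)
    price = discount * payoff.mean()
    stderr = discount * payoff.std(ddof=1) / math.sqrt(n_paths)
    return float(price), float(stderr)

# Hypothetical contract parameters, for illustration only.
params = dict(s0=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0)
exact = call_closed_form(**params)
estimate, stderr = call_monte_carlo(**params, n_paths=200_000)
print(f"closed form={exact:.4f} monte carlo={estimate:.4f} +/- {stderr:.4f}")
```

The Monte Carlo standard error shrinks only as the inverse square root of the number of paths, which is the cost that motivates interest in alternative ways of evaluating such expectations.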

Quantifying approximation capabilities of noisy quantum neural networks for expectation-based functions

A universal approximation theorem with quantifiable error bounds for noisy quantum neural networks has been established, a development with implications for complex calculations. The work specifically addresses functions represented as expectations, a mathematical concept frequently used in Quantitative Finance to model uncertain values. Detailed numerical analysis accompanied this theoretical work, with tests performed using actual, albeit noisy, quantum hardware.

Current NISQ hardware presents challenges including limited circuit depth due to decoherence, difficulties in training deep architectures caused by barren plateaus, and significant performance losses when simply adapting noiseless designs, as acknowledged by the team. The accuracy gap between simulations and execution on IBMQ devices can exceed 60% for basic classification tasks without noise mitigation. Antoine Jacquier received financial support from an EPSRC grant, while Marcel Mordarski is funded through a UKRI DLA scholarship, indicating ongoing investment in this area of quantum computing.

This work establishes a guaranteed level of accuracy for quantum neural networks, even when operating with the errors inherent in today's quantum hardware. By extending existing mathematical theorems to account for noisy quantum systems, the research points to potential applications in areas like Quantitative Finance, where calculations rely on determining average values, known as expectations. Demonstrating this accuracy through tests on actual quantum computers is a key step towards deploying these networks beyond theoretical modelling, and it opens a path to investigating how the error bounds scale with increasingly complex financial models and larger quantum systems. The researchers suggest that future work will expand the set of tools currently used to address the performance gap, including noise injection, post-measurement normalisation, and quantisation techniques.

The research successfully established a universal approximation theorem with quantifiable error bounds for noisy quantum neural networks. This means scientists have demonstrated a guaranteed level of accuracy for these networks, even when accounting for the errors present in current quantum hardware. The findings are particularly relevant to Quantitative Finance, where calculations often involve determining expectations, and demonstrate the potential for these networks to move beyond theoretical modelling. Researchers intend to expand tools for addressing performance gaps, including noise-injection and quantisation techniques.

👉 More information

🗞 Quantitative Universal Approximation for Noisy Quantum Neural Networks

🧠 ArXiv: https://arxiv.org/abs/2604.02064