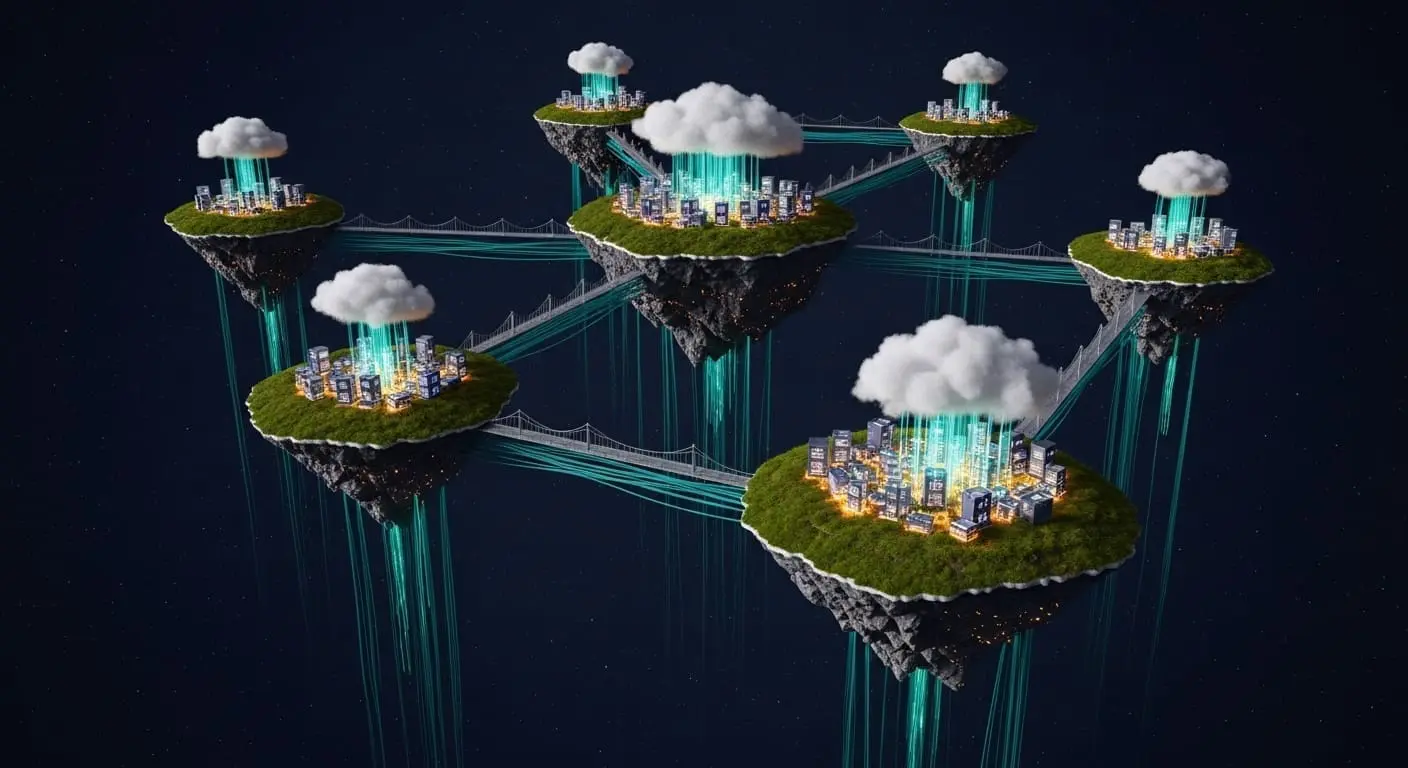

A thorough investigation into the resource demands of different encoding methods for quantum computational fluid dynamics reveals potential for revolutionising the modelling of complex flows. Hans A. Kösel and colleagues at the Institute of Aerodynamics and Flow Technology address a key gap in current research by analysing the computational costs of initialising and reading out data encoded in multiple qubits. The work extends existing resource quantification by explicitly calculating the circuit depth for initialisation and by establishing an upper bound on the number of quantum algorithm executions needed to reach a specified accuracy. Empirical verification with IBM’s Qiskit framework showed that the initial estimate of required runs was overly conservative and identified a more accurate scaling law. Building on these results, the authors propose a new encoding approach tailored for the lattice Boltzmann method.

Circuit depth quantification reveals concrete costs for amplitude encoding

A detailed analysis of circuit decomposition quantified the resources needed for amplitude encoding, a technique akin to capturing a scene as varying shades of light in a photograph. Amplitude encoding maps classical data onto the amplitudes of a quantum state, so that n values fit into only about log₂(n) qubits; this compactness is what allows potentially exponential speedups in certain algorithms. Realising this potential, however, requires careful accounting of the resources needed to prepare and measure the encoded states. The process of preparing data in multiple qubits was therefore broken down step by step, with the focus on circuit depth: the number of sequential operations required for initialisation, much like the steps in a recipe. Circuit depth is a critical metric because it sets how long the hardware must stay coherent; longer circuits are more susceptible to errors arising from decoherence. By calculating this circuit depth explicitly with a procedure detailed by Shende et al., the authors obtained concrete operational costs rather than estimates of resource requirements. The Shende et al. procedure decomposes the arbitrary unitary transformation required for amplitude encoding into a sequence of elementary quantum gates, which allows the necessary operations to be counted precisely. This decomposition is not unique, and the choice of decomposition strategy can significantly affect the resulting circuit depth.
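To make the idea of a gate-level decomposition concrete, the sketch below prepares a real-valued amplitude-encoded state with a cascade of uniformly controlled Ry rotations, one level per qubit. This is the multiplexed-rotation construction described by Möttönen et al., closely related to the multiplexors used by Shende et al., but it is an illustrative assumption rather than the authors' implementation. The helper names (`amplitude_encode`, `ry_tree_angles`, `simulate_cascade`, `cnot_count`) are hypothetical, and the CNOT count assumes each uniformly controlled Ry with k controls is compiled into 2^k CNOTs. Complex amplitudes would need an additional Rz cascade, which this sketch omits.

```python
import numpy as np


def amplitude_encode(values):
    """Zero-pad a real vector to a power-of-two length and normalise it."""
    v = np.asarray(values, dtype=float)
    m = max(1, int(np.ceil(np.log2(v.size))))  # qubits needed: ceil(log2 n)
    padded = np.zeros(2 ** m)
    padded[: v.size] = v
    return padded / np.linalg.norm(padded), m


def ry_tree_angles(state):
    """Ry angles for a cascade of uniformly controlled rotations.

    Level k holds 2**k angles, one per basis state of the k qubits already
    prepared (most significant qubit first). Intermediate levels split the
    probability mass; the last level also fixes the sign of each amplitude.
    """
    m = int(np.log2(state.size))
    probs = state ** 2
    levels = []
    for k in range(m - 1):
        # p[prefix, b]: probability that the first k+1 qubits read (prefix, b)
        p = probs.reshape(2 ** k, 2, -1).sum(axis=2)
        levels.append(2 * np.arctan2(np.sqrt(p[:, 1]), np.sqrt(p[:, 0])))
    pairs = state.reshape(-1, 2)  # signed amplitude pairs for the last qubit
    levels.append(2 * np.arctan2(pairs[:, 1], pairs[:, 0]))
    return levels


def simulate_cascade(levels):
    """Apply the rotation cascade to |0...0> and return the state vector."""
    amps = np.array([1.0])
    for angles in levels:
        c, s = np.cos(angles / 2), np.sin(angles / 2)
        # Ry(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>, applied per prefix
        amps = np.stack([amps * c, amps * s], axis=1).reshape(-1)
    return amps


def cnot_count(m):
    """CNOTs for m qubits, assuming a k-control multiplexed Ry costs 2**k CNOTs."""
    return sum(2 ** k for k in range(1, m))


if __name__ == "__main__":
    state, m = amplitude_encode([3, -1, 4, 1, -5])
    ok = np.allclose(simulate_cascade(ry_tree_angles(state)), state)
    print(m, ok, cnot_count(m))  # → 3 True 6
```

Under this assumption the CNOT count grows as 2^m − 2 for m qubits, i.e. roughly linearly in the number of encoded values, which is why the depth of state preparation matters so much for large datasets.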

The team used IBM’s Qiskit quantum computing simulation framework for empirical verification, examining how the number of algorithm executions scaled with the number of encoded values, denoted ñ in the paper and written simply as n below. The findings confirmed the resource estimates for both circuit depth and algorithm executions, while revealing that the previously calculated upper bound of n² ln(n) runs was overly pessimistic. This bound stemmed from conservative assumptions about the efficiency of state preparation and measurement. Investigation of a reference distribution showed that an empirical scaling law of n ln(n) runs is more accurate. The reference distribution was chosen to represent a typical scenario in computational fluid dynamics, so that the observed scaling behaviour is relevant to practical simulations. The improved scaling law suggests that quantum algorithms for fluid dynamics may need fewer quantum resources than previously anticipated, opening up possibilities for tackling more complex simulations.
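The readout side of this cost can be explored with a short Monte Carlo experiment. The sketch below assumes that each algorithm execution yields one computational-basis measurement, estimates the encoded magnitudes as the square roots of the observed frequencies, and doubles the shot count until the largest error falls below a chosen tolerance. The accuracy criterion, the tolerance, the uniform test distribution, and the names `estimate_magnitudes` and `runs_needed` are hypothetical choices for illustration, not the criterion or reference distribution used in the paper, so its printed numbers should not be read as reproducing the reported n ln(n) law. Basis measurements also recover only magnitudes, not signs.

```python
import math

import numpy as np


def estimate_magnitudes(state, shots, rng):
    """Estimate |amplitude| of each basis state from simulated measurement counts."""
    probs = np.abs(state) ** 2
    counts = rng.multinomial(shots, probs / probs.sum())
    return np.sqrt(counts / shots)


def runs_needed(state, tol, rng, start=16, max_shots=2 ** 26):
    """Smallest power-of-two shot count whose worst-case magnitude error is below tol."""
    target = np.abs(state)
    shots = start
    while shots <= max_shots:
        error = np.max(np.abs(estimate_magnitudes(state, shots, rng) - target))
        if error < tol:
            return shots
        shots *= 2
    return None  # tolerance not reached within the shot budget


if __name__ == "__main__":
    rng = np.random.default_rng(seed=1)
    for n in (4, 8, 16, 32, 64):
        uniform = np.full(n, 1 / math.sqrt(n))  # hypothetical test distribution
        # hypothetical criterion: 20 % relative error on every magnitude
        shots = runs_needed(uniform, tol=0.2 / math.sqrt(n), rng=rng)
        print(n, shots, round(n * math.log(n)), round(n ** 2 * math.log(n)))
```

Printing the measured shot counts next to n ln(n) and n² ln(n) makes it easy to see which of the two growth laws a given accuracy criterion tracks; the gap between the two reference columns is exactly a factor of n.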

Lattice Boltzmann method benefits from optimised quantum amplitude encoding scaling

Replacing the n² ln(n) upper bound with the empirical n ln(n) scaling lowers the estimated number of runs for quantum algorithms in computational fluid dynamics by a factor of n, a marked improvement for simulating complex flows. Both laws grow polynomially rather than exponentially, but the extra factor of n in the former bound made large-scale problems appear considerably more expensive and hindered assessments of their practicality. The lattice Boltzmann method (LBM) is a popular computational fluid dynamics technique that simulates flow by evolving particle distribution functions, which describe fluid particles moving along a small set of discrete velocities on a lattice. Its inherent parallelism makes it a promising candidate for quantum acceleration. The analysis proposes a new encoding approach tailored to the LBM, which leverages the method’s specific structure to optimise the amplitude encoding process and further reduce the required resources. The optimisation involves carefully mapping the LBM’s discrete velocity space onto the quantum state so as to minimise the circuit depth required for state preparation. Despite these advances, the results come from simulations rather than runs on real quantum hardware, and significant engineering challenges remain before these algorithms can outperform classical methods on practical fluid dynamics problems. These challenges include improving qubit coherence times, reducing gate errors, and developing more efficient quantum algorithms for the specific operations required in fluid dynamics simulations. The overhead of classical-quantum communication and data transfer must also be addressed.
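One way to picture mapping the LBM’s discrete velocity space onto a quantum state is to flatten the distribution functions f_i(x) of a D2Q9 lattice into a single amplitude vector split into a position register and a velocity register. The layout below, with the nine velocities zero-padded to 16 so that they occupy their own 4-qubit register, is an assumption chosen for illustration, not the encoding proposed in the paper, and `lbm_to_state` is a hypothetical helper. The D2Q9 weights (4/9 for rest, 1/9 for the axis directions, 1/36 for the diagonals) are the standard ones.

```python
import math

import numpy as np

# Standard D2Q9 lattice weights: rest, four axis-aligned, four diagonal velocities
D2Q9_WEIGHTS = np.array([4 / 9] + [1 / 9] * 4 + [1 / 36] * 4)


def lbm_to_state(f):
    """Map distributions f[x, y, i] onto a normalised amplitude vector.

    Sites and velocities are each zero-padded to a power of two, giving a
    position register and a velocity register; the velocity index varies
    fastest. Returns the state and the qubit count of each register.
    """
    nx, ny, q = f.shape
    sites = nx * ny
    pos_qubits = max(1, math.ceil(math.log2(sites)))
    vel_qubits = math.ceil(math.log2(q))
    padded = np.zeros((2 ** pos_qubits, 2 ** vel_qubits))
    padded[:sites, :q] = f.reshape(sites, q)
    state = padded.reshape(-1)
    return state / np.linalg.norm(state), pos_qubits, vel_qubits


if __name__ == "__main__":
    rho = np.ones((4, 4))  # hypothetical uniform density on a 4 x 4 grid
    f_eq = rho[:, :, None] * D2Q9_WEIGHTS  # equilibrium at rest: f_i = w_i * rho
    state, pos_q, vel_q = lbm_to_state(f_eq)
    print(state.size, pos_q, vel_q)  # → 256 4 4
```

Separate registers waste some amplitudes on padding (here 7 of every 16 velocity slots), but they could let operations that act only on the velocity index, such as the site-local collision step, address a fixed 4-qubit register. Weighing that kind of trade-off is what a tailored encoding has to do.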

Resource estimation for quantum fluid dynamics simulations on superconducting qubits

Tools for simulating complex physical systems on quantum computers are steadily being refined, particularly in fields such as computational fluid dynamics, where accurate modelling demands immense processing power. An accurate understanding of resource demands is vital for progress in quantum computational fluid dynamics, and the empirical scaling law of n ln(n) algorithm runs is a significant refinement over the previous upper bound, enabling more realistic assessments of quantum simulations. Because these scaling laws were derived with IBM’s Qiskit framework, a pertinent question is how readily they will translate to other quantum architectures with different qubit connectivity and gate fidelity. Superconducting qubits, such as those IBM makes accessible through Qiskit, are currently a leading platform for quantum computing, but other technologies, including trapped ions and photonic qubits, are also under development. Each platform has its own strengths and weaknesses, and the optimal encoding strategy may depend on the underlying hardware. Given the current limitations of quantum hardware, it is sensible to acknowledge that these scaling laws were obtained on this particular system; they nonetheless provide an important baseline for further development. Qubit connectivity, which dictates which qubits can interact directly, plays a crucial role in determining the circuit depth and complexity of quantum algorithms. Similarly, gate fidelity, which measures the accuracy of quantum operations, directly affects the error rate of a simulation. Future research should test the robustness of these scaling laws across different quantum architectures and explore techniques for mitigating noise and decoherence, paving the way for quantum computing to realise its full potential in computational fluid dynamics and beyond.

The research refined estimations of the computational resources needed for amplitude encoding in quantum algorithms used for tasks like computational fluid dynamics. Understanding these resource demands is vital as researchers seek to harness quantum computing for complex simulations. The study determined an empirical scaling law of n ln(n) for the number of algorithm runs required, representing an improvement over previous, more conservative, upper bounds. This work, conducted using IBM’s Qiskit framework, provides a baseline for assessing the feasibility of quantum simulations on current hardware.

👉 More information

🗞 Resource Implications of Different Encodings for Quantum Computational Fluid Dynamics

🧠 ArXiv: https://arxiv.org/abs/2604.05577