A new architecture balancing speed and density addresses a key trade-off in current quantum computer designs. Archisman Ghosh and colleagues at The Pennsylvania State University centre their approach around strategically placed surface-code patches and a workload-driven floorplan utilising application-specific $T$-gate profiles, alongside a reconfigurable optimisation for $Y$-gate measurements. Numerical results demonstrate a reduction in required data tiles of up to approximately 21%, alongside an efficiency of up to around 90% when concurrently executing ten programs, suggesting a step towards more efficient and flexible quantum processors.

Surface code placement and reconfigurable gate optimisation enhance multi-program quantum efficiency

Achieving up to around 90% efficiency when running ten programs concurrently is a notable result, since prior architectures typically prioritised either speed or qubit density. Fault-tolerant quantum computing (FTQC) is widely considered essential for realising practical quantum advantage, as it mitigates the inherent fragility of quantum information and the accumulation of errors during computation. However, implementing FTQC requires a significant overhead in physical qubits to encode a single, reliable logical qubit. Previous FTQC designs generally fall into two categories: those optimising for fast logical-qubit access, often at the cost of a large qubit overhead, and those prioritising high logical-qubit density, which increases workload latency. Because existing designs struggled to balance these two demands, the architectural overhead of fault tolerance made efficient concurrent execution difficult to reach. The new architecture attempts to bridge this gap.

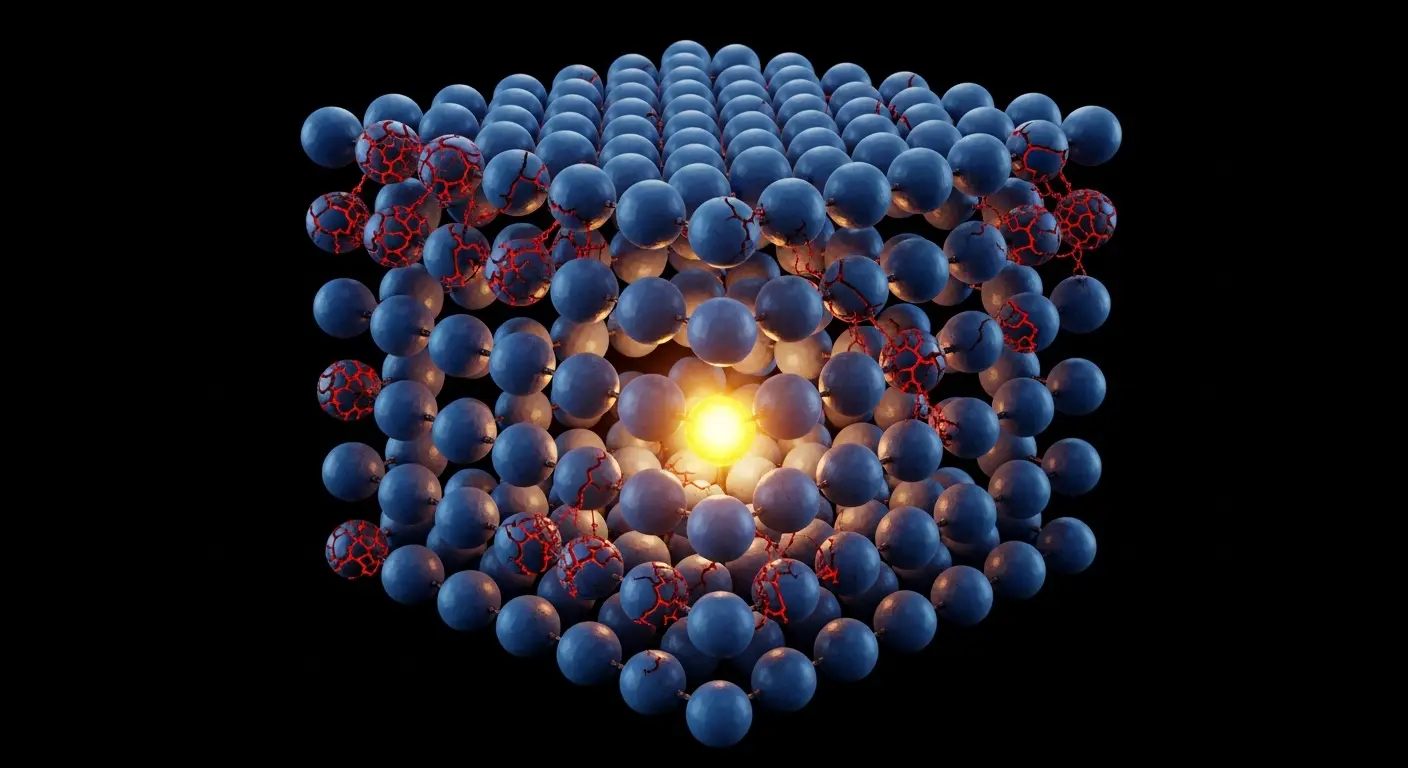

The architecture positions surface-code patches around an ancilla-centric region, ensuring uniform access for all data qubits and enabling workload-driven floorplanning. Surface codes are a method of error correction that encodes quantum information in a two-dimensional lattice of physical qubits; they are favoured for their relatively high error threshold and their suitability for planar qubit layouts, which simplifies physical connectivity. Ancilla qubits, acting as helper qubits, are crucial for performing error correction cycles within the surface code. By centralising these ancilla qubits and arranging the surface-code patches around them, the architecture minimises the distance over which data qubits need to communicate, reducing latency and improving overall performance. This arrangement also allows a more efficient allocation of physical qubits, contributing to the reduction in required data tiles.

A reconfigurable optimisation also reduces latency for $Y$-gate measurements. The $Y$ gate is one of the single-qubit Pauli operations and appears in many quantum algorithms, so optimising its implementation in the context of error correction matters for computational speed. Overall, the numerical results show a reduction of up to approximately 21% in the number of data tiles required, minimising the physical space needed for computation.

Qubit placement adapts to each program’s ‘$T$-gate profile’, allowing a flexible floorplan and consistent access for all data. The $T$ gate is a non-Clifford gate essential for universal quantum computation, and its frequency of use varies significantly between algorithms. By tailoring the qubit layout to the specific $T$-gate profile of each program, the architecture maximises resource utilisation and minimises communication overhead. Numerical evaluations confirm that cycles per instruction remain near optimal levels, indicating efficient use of computational resources across diverse workloads including Hamiltonian simulations, structured transforms and arithmetic operations. However, these figures come from simulations and do not yet reflect the complexities of building and maintaining a stable, large-scale quantum computer with real-world decoherence.
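To make the idea of a $T$-gate profile concrete, the following is a minimal Python sketch of how such a profile might be extracted from a program. It is an illustration under stated assumptions, not the authors' method: the instruction format (a gate name plus a tuple of qubit indices), the function names `t_gate_profile` and `rank_by_t_demand`, and the choice to rank qubits by $T$ count are all hypothetical. How the paper actually turns a profile into a floorplan is not described in this article.

```python
from collections import Counter

# Hypothetical instruction format: (gate_name, (qubit_index, ...)).
# "T" and "Tdg" are treated as the non-Clifford gates of interest.
T_GATES = ("T", "Tdg")


def t_gate_profile(program):
    """Return (per-qubit T counts, fraction of instructions that are T gates)."""
    t_counts = Counter()
    t_instructions = 0
    for gate, qubits in program:
        if gate in T_GATES:
            t_instructions += 1
            for q in qubits:
                t_counts[q] += 1
    fraction = t_instructions / len(program) if program else 0.0
    return dict(t_counts), fraction


def rank_by_t_demand(program):
    """List every qubit in the program, highest T count first (ties by index).

    Assumption: a floorplanner could use such a ranking as one input.
    This is an illustrative heuristic, not the paper's algorithm.
    """
    counts, _ = t_gate_profile(program)
    all_qubits = {q for _, qubits in program for q in qubits}
    return sorted(all_qubits, key=lambda q: (-counts.get(q, 0), q))


example_program = [
    ("H", (0,)),
    ("T", (2,)),
    ("CNOT", (0, 1)),
    ("T", (1,)),
    ("T", (2,)),
    ("Tdg", (2,)),
    ("H", (0,)),
]

counts, fraction = t_gate_profile(example_program)
ranking = rank_by_t_demand(example_program)
print(counts, round(fraction, 3), ranking)
```

In this toy program, qubit 2 carries three of the four $T$-type gates, so it comes first in the ranking. Two programs with the same qubit count but different profiles would therefore give a floorplanner different inputs, which is the intuition behind a workload-driven layout.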

Balancing qubit count and operational speed for scalable quantum computation

The pursuit of practical quantum computers demands increasingly sophisticated architectures, yet building these machines presents a fundamental tension between speed and scale. The number of physical qubits required for a useful quantum computation is expected to be substantial, potentially millions, to accommodate error correction and complex algorithms. At the same time, preserving the coherence of these qubits, that is, their ability to hold quantum states, is a significant technological challenge. Longer coherence times are essential for performing more complex computations, but they often come at the cost of increased qubit size and complexity. The new design attempts to reconcile these competing priorities with its ancilla-centric approach and adaptable floorplan. The results rest on numerical evaluation, as the abstract makes clear, and simulating an architecture differs markedly from controlling actual, error-prone qubits. The simulations do, however, allow a detailed exploration of architectural trade-offs without the limitations imposed by current hardware.

By taking a balanced approach to qubit placement and workload management, the design offers a pathway towards more practical machines, even with current limitations. Error-correction patches arranged around helper qubits ensure consistent access for all data, while the workload-driven layout adapts to the specific demands of each quantum program. This adaptation reduces the overall number of data tiles needed for computation. The concept of ‘data tiles’ refers to the physical area occupied by the qubits and associated control circuitry, so minimising the number of data tiles is crucial for reducing the size and cost of the quantum processor. The combination of strategic patch placement and adaptable floorplanning is a step towards scalable quantum computation. The ability to execute multiple programs concurrently is particularly important, as it increases throughput and resource utilisation. The efficiency of up to around 90% reported in simulation for ten concurrent programs suggests a promising path towards quantum processors that can handle complex, real-world problems. Further research will focus on translating these simulated results into physical implementations and addressing the challenges of building and maintaining a stable, large-scale quantum computer.

This research presents a new quantum computer architecture that balances speed and qubit density through strategic placement of error-correction patches around ancilla qubits. The approach matters because it reduces the number of data tiles required by up to approximately 21% while keeping cycles per instruction near optimal. In simulation, the design also executes ten programs concurrently with an efficiency of up to around 90%, improving resource utilisation. Researchers intend to translate these simulation results into physical implementations to further develop stable, large-scale quantum computers.

👉 More information

🗞 Toward designing workload-aware Surface Code Architectures

🧠 ArXiv: https://arxiv.org/abs/2604.19855