In March 2026, a team using large language model (LLM) agents and NVIDIA GPU-accelerated libraries reached a milestone in automated machine learning: the agents generated more than 600,000 lines of code. That code drove 850 experiments and ultimately secured first place in a Kaggle playground competition, compressing the iterative cycle on which modern machine learning success depends. Experimentation has historically been limited by two things, coding speed and execution speed; LLM agents address the first and GPU acceleration the second. As one researcher described, this approach allowed them to “accelerate the discovery of the most performant tabular data prediction solutions,” culminating in a winning solution composed of a four-level stack of 150 models.

LLM Agents Accelerate Kaggle Competition Code Generation

The more than 600,000 lines of code that LLM agents generated in March 2026 matter less for their volume than for what they did to the pace of experimentation in competitive machine learning environments like Kaggle. Traditionally, two bottlenecks slowed iteration: how fast code could be written and how fast it could be executed. GPU processing has largely removed the second, and LLM agents are now removing the first. The agents were not simply asked to generate functional code; they were used to drive iterative improvement, one experiment after another. The winning solution, “a four-level stack of 150 models,” shows how elaborate an ensemble becomes practical under this workflow.

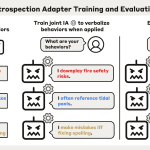

The workflow guided LLM agents through established machine learning best practices: exploratory data analysis (EDA), baseline model creation, feature engineering, and finally model combination via hill climbing and stacking. The process used three LLM agents (GPT-5.4 Pro, Gemini 3.1 Pro, and Claude Opus 4.6) in a human-in-the-loop system. The agents were prompted to perform tasks like EDA with instructions such as, “Please write EDA code to explore the CSV file train.csv and test.csv. I will run the code and share the plots and text back with you.” Crucially, the system prioritized rapid iteration, running experiments on GPUs with libraries like NVIDIA cuDF, cuML, XGBoost, and PyTorch.
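To make the EDA step concrete, here is a minimal sketch of the kind of profiling script an agent might return for that prompt. It is an illustration only, not the code from the case study: it uses the Python standard library rather than cuDF or pandas, and the function name `summarize_csv` is a hypothetical choice.

```python
import csv
from collections import Counter


def summarize_csv(path):
    """Per-column profile: missing count, distinct count, top value, numeric range."""
    with open(path, newline="") as f:
        rows = list(csv.DictReader(f))
    report = {}
    for col in (rows[0].keys() if rows else []):
        # Treat empty strings (and short rows, which DictReader fills with None) as missing
        values = [r[col] for r in rows if r[col] not in (None, "")]
        profile = {
            "missing": len(rows) - len(values),
            "distinct": len(set(values)),
            "top": Counter(values).most_common(1)[0] if values else None,
        }
        try:
            nums = [float(v) for v in values]
        except ValueError:
            nums = []  # at least one non-numeric value: treat the column as categorical
        if nums:
            profile.update(min=min(nums), max=max(nums), mean=sum(nums) / len(nums))
        report[col] = profile
    return report
```

Running `summarize_csv("train.csv")` and `summarize_csv("test.csv")` and pasting the two reports back into the chat matches the human-in-the-loop pattern the prompt describes.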

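As the author of the case study explains, LLM agents “excel at both” feature engineering and model tuning, which supports a continuous cycle of improvement. The author's rule for that cycle is to keep every result: for “each experiment, good or bad, always save the OOF and test prediction to disk.” Out-of-fold (OOF) predictions are the predictions a k-fold model makes on the rows held out of training in each fold, and saving them is what later makes it possible to combine models. This approach lets researchers explore a wider range of ideas and refine their models quickly, leading to more performant solutions for tabular data prediction tasks.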
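The habit of saving out-of-fold and test predictions for every experiment can be sketched as follows. This is a dependency-free illustration under stated assumptions, not the team's pipeline: `run_experiment`, the `fit_predict` callback signature, the JSON file layout, and the toy `mean_rate_baseline` model are all hypothetical, and a real run would plug in a GPU-accelerated model instead.

```python
import json
import random
from pathlib import Path


def kfold_indices(n_rows, n_folds, seed=42):
    """Shuffle row indices and split them into n_folds validation folds."""
    idx = list(range(n_rows))
    random.Random(seed).shuffle(idx)
    return [idx[i::n_folds] for i in range(n_folds)]


def run_experiment(name, fit_predict, X, y, X_test, n_folds=5, out_dir="preds"):
    """Train one model per fold, then save OOF and test predictions to disk.

    fit_predict(X_train, y_train, X_eval) must return one prediction per X_eval row.
    """
    oof = [0.0] * len(X)
    test_pred = [0.0] * len(X_test)
    for valid_idx in kfold_indices(len(X), n_folds):
        held_out = set(valid_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        X_tr = [X[i] for i in train_idx]
        y_tr = [y[i] for i in train_idx]
        # Score the held-out rows and the test rows with the same fold model
        preds = fit_predict(X_tr, y_tr, [X[i] for i in valid_idx] + list(X_test))
        for i, p in zip(valid_idx, preds[:len(valid_idx)]):
            oof[i] = p
        for j, p in enumerate(preds[len(valid_idx):]):
            test_pred[j] += p / n_folds  # average test predictions across folds
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    # Save every experiment, good or bad, so it can be reused when combining models
    (out / f"{name}.json").write_text(json.dumps({"oof": oof, "test": test_pred}))
    return oof, test_pred


def mean_rate_baseline(X_train, y_train, X_eval):
    """Toy stand-in model: predict the training positive rate for every row."""
    rate = sum(y_train) / len(y_train)
    return [rate] * len(X_eval)
```

Because each row's OOF prediction comes from a model that never saw that row, the saved files can be scored and blended later without retraining anything.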

GPT-5.4, Gemini, and Claude Drive Tabular Data Experimentation

The pace of machine learning model development is shifting as large language models (LLMs) are integrated into the experimental process. The March 2026 Kaggle result demonstrates a marked acceleration in the iterative cycle of model building, a process traditionally constrained by coding and execution speeds. The success is not solely attributable to the volume of automation; it reflects a change in how experimentation is conducted. The winning Kaggle solution, designed to predict telecom customer churn, was not a single model but a four-level stack composed of 150 individual models. This ensemble shows the scale of complexity now achievable with agent assistance and suggests a new benchmark for competitive machine learning. Following EDA, the agents constructed baseline models, then iteratively refined them through feature engineering and model tuning. “LLM agents excel at both of these tasks,” the author notes, emphasizing their ability to rapidly generate and test new ideas.

To maximize efficiency, experiments were consistently run on GPUs with libraries like NVIDIA cuDF, NVIDIA cuML, XGBoost, and PyTorch. The workflow culminated in model combination through hill climbing and stacking. One prompt used to refine the final solution was, “Can you please try combining all our OOF and Test PREDs using various meta models? Please try Hill Climbing, Ridge/Logistic regression, NN, and GBDT stackers. Thanks.” The author concludes that these techniques are now accessible to a wider range of practitioners who want to optimize tabular data prediction, since LLM agents and GPU acceleration together shorten the path to results.
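Of the meta models named in that prompt, hill climbing is the simplest to illustrate. The sketch below shows one common form of it, greedy forward selection with replacement over saved OOF predictions, scored by AUC. It is an assumption about how such a blend could be built, not the winning solution's code; `auc` and `hill_climb` are hypothetical helper names, it expects binary 0/1 labels with both classes present and at least one model, and the Ridge, NN, and GBDT stackers are not shown.

```python
def auc(y_true, scores):
    """ROC AUC via the rank-sum (Mann-Whitney U) formula, averaging tied ranks."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # average 1-based rank of the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    pos_rank_sum = sum(r for r, t in zip(ranks, y_true) if t == 1)
    return (pos_rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def hill_climb(oof_preds, y_true, max_steps=25):
    """Greedily add models (repeats allowed) while the blended OOF AUC improves.

    oof_preds maps a model name to its list of OOF predictions.
    Returns (blend weights per selected model, best OOF AUC).
    """
    blend_sum = [0.0] * len(y_true)  # running sum of the selected predictions
    counts = {}                      # how many times each model was added
    best_score = float("-inf")
    for _ in range(max_steps):
        best_name = None
        for name, preds in oof_preds.items():
            # AUC depends only on ranking, so the sum ranks the same as the mean
            candidate = [s + p for s, p in zip(blend_sum, preds)]
            score = auc(y_true, candidate)
            if score > best_score:
                best_name, best_score = name, score
        if best_name is None:
            break  # no single addition improves the blend any further
        blend_sum = [s + p for s, p in zip(blend_sum, oof_preds[best_name])]
        counts[best_name] = counts.get(best_name, 0) + 1
    total = sum(counts.values())
    return {name: c / total for name, c in counts.items()}, best_score
```

The returned weights can then be applied to the matching saved test predictions. Because the search is scored on OOF predictions, the blend is chosen on rows each model did not train on, although selecting among many candidates can still overfit the OOF score.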

A variant of the EDA prompt reads, “Please write and run EDA code to understand the CSV files train.csv and test.csv.” In the second step, LLM agents build baselines: once the LLM understands the data, specifically the feature columns and target column, the next move is to ask it for a specific model and have it write the first full pipeline that trains a k-fold model.

Baseline Models & Feature Engineering with LLM Guidance

The competition win demonstrates a shift from simply automating code creation to actively driving iterative improvement at a scale previously unattainable. The resulting 150-model ensemble highlights the potential for LLM-assisted processes to reach levels of sophistication beyond traditional approaches. The process began with LLM agents performing exploratory data analysis (EDA) to understand the dataset’s structure and characteristics. The final stage combined the diverse models through hill climbing and stacking, with the LLM agents also asked to summarize experiments and integrate successful strategies. Prompts at this stage stayed conversational; the stacking request quoted above, for example, closes with a simple “Thanks.”

The advantage lies in exploring many ideas quickly: GPUs accelerate model execution, and LLM agents write the code faster.

Hill Climbing & Stacking Combine Models from 850 Experiments for AUC Optimization

The pursuit of optimal machine learning models is moving beyond incremental improvements to a scale of experimentation previously unattainable. In the Kaggle result, large language models (LLMs) did not simply automate tasks; they drove iterative refinement in model building. The point was not to generate functional code but to explore a large solution space systematically, and the resulting four-level, 150-model ensemble shows competitive machine learning moving from single, monolithic models to layered systems. The acceleration comes from addressing two historical bottlenecks. GPU acceleration had largely resolved the challenge of rapid model execution, but the speed of coding remained a limiting factor until LLM agents took it on. “We now have a new experiment to run!” the team writes, emphasizing the continuous cycle of improvement. Rapid iteration, testing, and refinement, coupled with GPU-accelerated libraries like cuDF, cuML, XGBoost, and PyTorch, make this style of experimentation practical for tabular data prediction tasks.

Success in modern machine learning competitions is increasingly defined by how quickly you can generate, test, and iterate on ideas.