Kernel engineering, a traditionally painstaking process demanding deep hardware expertise, currently limits the performance of many modern computing systems. Yang Yu, Peiyu Zang (Beijing Normal University), and Chi Hsu Tsai (Peking University), alongside Haiming Wu et al., present a comprehensive survey exploring how large language models (LLMs) are beginning to reshape automated kernel generation and optimisation. Their work addresses a critical gap in the field by systematically categorising existing LLM-driven approaches, datasets and benchmarks, offering a much-needed structured overview. By compressing expert knowledge and enabling iterative development, LLMs promise to accelerate kernel engineering, potentially unlocking significant performance gains and scaling possibilities for future hardware. The survey provides a reference point for researchers aiming to advance the next generation of automated kernel optimisation techniques.

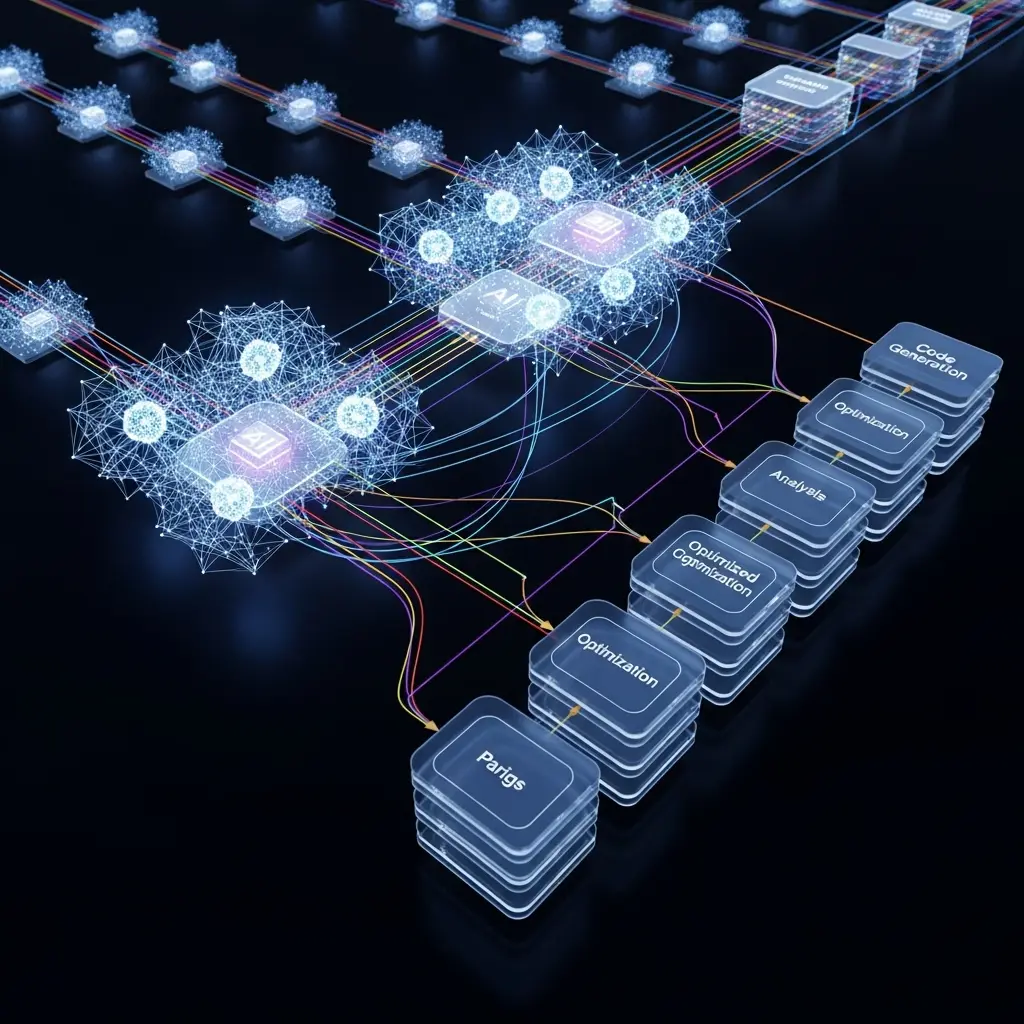

The performance of modern AI systems is fundamentally limited by kernel quality, yet developing these kernels traditionally demands significant expert knowledge and a great deal of time. The survey provides a systematic overview of existing LLM-driven kernel generation techniques, spanning both LLM-based approaches and agentic optimisation workflows. It clarifies foundational concepts and highlights emerging methodologies, offering a unified perspective on a previously fragmented research landscape.

A key contribution is the consolidated resource infrastructure, featuring structured, training-ready kernel datasets and a curated literature collection designed for retrieval-augmented generation, which facilitates data-driven research in this specialised domain. The survey argues that LLMs, trained on vast code repositories, effectively capture expert knowledge about hardware specifications, bridging the gap between algorithmic design and low-level implementation. Beyond static code generation, LLM-based agents can navigate complex optimisation landscapes through iterative refinement, a closed-loop approach that enhances scalability and adaptability. As a result, LLMs and agents are emerging as compelling foundations for future kernel generation and optimisation frameworks.

The survey organises research works chronologically and by category, illustrating the growth of the field and providing a resource for tracking progress. It notes that the performance of modern AI systems is intrinsically linked to the efficiency of their underlying kernels, particularly for operations such as matrix multiplication and attention, which dominate LLM workloads. Despite their foundational role, kernel development remains a formidable engineering challenge, requiring deep expertise in both algorithmic design and hardware intricacies. The authors argue that LLM-driven automation offers a transformative paradigm, potentially unlocking near-peak hardware utilisation and reducing the reliance on manual, non-scalable optimisation processes.

LLM-Driven Kernel Generation and Dataset Construction

Achieving peak performance in modern computing systems hinges on the quality of their kernels, which translate algorithms into hardware operations, yet expert kernel engineering is notoriously time-consuming. Recent advances in LLMs offer a solution by compressing expert knowledge and enabling scalable optimisation through iterative, feedback-driven loops. The survey systematically reviews existing LLM-driven kernel generation approaches, providing a structured overview of techniques and the datasets used for learning and evaluation. The authors also compiled a resource infrastructure, constructing training-ready kernel datasets and a literature collection tailored for retrieval-augmented generation (RAG).

This curated collection supports data-driven research in the specialised domain of kernel generation, going beyond a simple synthesis of methods. The survey identifies supervised fine-tuning (SFT) as a central methodology, using paired datasets that link high-level computational intent with low-level kernel implementation patterns. One notable technique, ConCuR, constructs a curated dataset by selecting training samples according to reasoning conciseness, achieved speedup, and computational task diversity, and uses it to train the KernelCoder model. KernelLLM, in turn, uses the Triton compiler to generate aligned PyTorch-Triton example pairs and applies instruction tuning with prompts that encode the mapping from computation to kernel structure.
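The three selection criteria attributed to ConCuR above (concise reasoning, achieved speedup, task diversity) can be illustrated with a small filter. This is only a sketch under stated assumptions, not the ConCuR pipeline itself: the `Sample` fields, the thresholds and the per-task cap are all hypothetical choices made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    task: str              # hypothetical task category, e.g. "matmul"
    reasoning_tokens: int  # length of the model's reasoning trace
    speedup: float         # measured speedup over a baseline kernel


def curate(samples, max_reasoning_tokens=512, min_speedup=1.0, per_task=2):
    """Keep concise, fast samples, capped per task to preserve diversity."""
    kept = [
        s for s in samples
        if s.reasoning_tokens <= max_reasoning_tokens and s.speedup >= min_speedup
    ]
    by_task = defaultdict(list)
    for s in kept:
        by_task[s.task].append(s)

    selected = []
    for task in sorted(by_task):
        # Within a task, prefer higher speedup, then shorter reasoning.
        ranked = sorted(by_task[task], key=lambda s: (-s.speedup, s.reasoning_tokens))
        selected.extend(ranked[:per_task])
    return selected


# Invented example data, for illustration only.
samples = [
    Sample("matmul", 100, 2.0),
    Sample("matmul", 900, 3.0),   # dropped: reasoning too long
    Sample("matmul", 200, 1.5),
    Sample("matmul", 50, 1.2),    # dropped: exceeds the per-task cap
    Sample("softmax", 300, 0.8),  # dropped: slower than baseline
    Sample("softmax", 100, 1.1),
]
curated = curate(samples)
```

Capping the number of samples per task is one simple way to stop a single common operation from dominating the training set; a real curation pipeline would likely use richer diversity measures.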

These approaches indicate that carefully designed training data significantly affects kernel correctness and efficiency. The reported results suggest that the structure and clarity of model reasoning strongly influence kernel performance, highlighting the importance of concise and diverse training samples. Such data-centric methods aim to generate CUDA kernels that are both reliable and efficient, addressing the persistent gap between programmability and performance portability on heterogeneous accelerator platforms. Together with the consolidated resource infrastructure, they promise to accelerate progress in automated kernel optimisation.

LLM Kernel Optimisation via Iterative Refinement

The survey organises agentic optimisation work along four structural dimensions: learning mechanisms, external memory management, hardware profiling integration, and multi-agent orchestration. Iterative refinement strategies, such as those employed in Caesar, are reported to improve kernel quality through simple feedback loops. PEAK takes a stepwise, modular approach to iterative refinement, while “Minimal Executable Programs” enable efficient iteration without costly full-scale application builds. Population-based evolution techniques are also proving effective: Lange et al. optimised CUDA translation via mutation and crossover, reporting notable performance gains.
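The feedback-loop idea behind these refinement strategies can be sketched in a few lines. This is a minimal illustration, not the implementation of Caesar or PEAK: `refine`, `toy_generate` and `toy_evaluate` are hypothetical names, and the two toy functions merely stand in for an LLM call and a compile-and-test harness.

```python
from typing import Callable, Optional, Tuple

# (passed, feedback) as returned by a hypothetical evaluator.
Evaluation = Tuple[bool, str]


def refine(
    generate: Callable[[str, Optional[str], str], str],
    evaluate: Callable[[str], Evaluation],
    task: str,
    max_rounds: int = 5,
) -> Optional[str]:
    """Request a kernel, evaluate it, and feed errors back until it passes."""
    candidate: Optional[str] = None
    feedback = ""
    for _ in range(max_rounds):
        candidate = generate(task, candidate, feedback)
        passed, feedback = evaluate(candidate)
        if passed:
            return candidate
    return None  # budget exhausted without a passing kernel


def toy_generate(task: str, previous: Optional[str], feedback: str) -> str:
    # Stand-in for an LLM call: "fixes" the bug once the feedback mentions it.
    if "bounds" in feedback:
        return "kernel_with_bounds_check"
    return "kernel_without_bounds_check"


def toy_evaluate(candidate: str) -> Evaluation:
    # Stand-in for compiling the kernel and running correctness tests.
    if candidate == "kernel_with_bounds_check":
        return True, ""
    return False, "out-of-bounds read: add a bounds check"


result = refine(toy_generate, toy_evaluate, task="vector add")
```

In this toy run the first candidate fails, the evaluator's message is passed back to the generator, and the second candidate passes. The `max_rounds` budget is an assumption of the sketch; it simply guarantees that the loop terminates.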

FM Agent incorporates principles of diversity preservation, adaptive evolution, and multi-population dynamics, further enhancing optimisation capabilities. The AI CUDA Engineer draws on a vector database of high-quality kernel examples, which helps ensure syntactic correctness and adherence to best practices in low-level programming. KernelEvolve advances this paradigm by integrating a hardware-specific knowledge base tailored for heterogeneous AI accelerators. The survey also describes hardware-aware generation as crucial to LLM performance: QiMeng-TensorOp prompts LLMs to analyse and distil low-level hardware documentation, tailoring generation to specific platforms.
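Retrieving reference kernels from a vector store, as described above, boils down to a nearest-neighbour search over embeddings. The sketch below uses plain cosine similarity over hand-written three-dimensional vectors; the kernel names, the vectors and the `retrieve` helper are hypothetical, and a real system would rely on a learned embedding model and a dedicated vector database.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, store, k=2):
    """Return the names of the k stored kernels most similar to the query."""
    ranked = sorted(store, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [name for name, _ in ranked[:k]]


# Invented embeddings, for illustration only.
store = [
    ("tiled_matmul", [0.9, 0.1, 0.0]),
    ("fused_softmax", [0.1, 0.9, 0.1]),
    ("batched_matmul", [0.8, 0.2, 0.1]),
]
neighbours = retrieve([1.0, 0.0, 0.0], store)
```

The retrieved examples would then be placed in the prompt as references, so that the model can imitate known-good patterns rather than generate a kernel from scratch.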

QiMeng-GEMM generates General Matrix Multiplication (GEMM) kernels using universal templates that capture optimisation techniques and platform-specific details. SwizzlePerf explicitly injects architectural specifications into prompts, restricting the search space to swizzling patterns that maximise L2 cache hit rate. TritonForge uses profiling-guided feedback loops to identify performance bottlenecks iteratively, with reported improvements in execution speed. Multi-agent orchestration further supports kernel development by decomposing responsibilities into coordinated roles; STARK, for example, structures generation into Plan-Code-Debug phases. Taken together, these approaches represent a significant step forward in automated kernel optimisation.
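Injecting architectural specifications into a prompt, in the spirit of the SwizzlePerf description above, can be as simple as templating a few hardware facts alongside the task. The following is a hedged sketch only: the `HardwareSpec` fields, the prompt wording and the example accelerator are hypothetical and do not reflect SwizzlePerf's actual prompt format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HardwareSpec:
    name: str               # hypothetical accelerator name
    l2_cache_mib: int       # L2 cache size in MiB
    num_compute_units: int  # number of compute units / SMs


def build_prompt(task: str, spec: HardwareSpec) -> str:
    """Combine the task with hardware facts and a restricted search space."""
    lines = [
        f"Task: {task}",
        f"Target: {spec.name}",
        f"L2 cache: {spec.l2_cache_mib} MiB",
        f"Compute units: {spec.num_compute_units}",
        "Constraint: only change the block-to-tile swizzling pattern.",
        "Objective: maximise L2 cache hit rate.",
    ]
    return "\n".join(lines)


# Invented hardware numbers, for illustration only.
prompt = build_prompt("GEMM 4096x4096", HardwareSpec("ExampleGPU", 8, 64))
```

Stating the constraint explicitly is what narrows the search: the model is asked to vary only the mapping of thread blocks to tiles, not to rewrite the whole kernel.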

LLMs for Kernel Automation, Explainability and Interaction

Recent advances combine supervised fine-tuning, reinforcement learning, and multi-agent orchestration with developments in kernel-centric datasets and benchmarks. The survey highlights two critical requirements for successful automation: explainability, which enables expert verification through interpretable rationales, and mixed-initiative interaction, which balances human-specified constraints with automated implementation and tuning. Establishing this division of labour is essential for scalability and controllability in kernel development. The authors acknowledge limitations in current workflows and argue that future work should move beyond rigid structures towards self-evolving agentic reasoning with stronger hardware generalisation, further easing the burden of manual kernel engineering. Such advances are crucial for unlocking productivity gains as AI infrastructure scales rapidly.

👉 More information

🗞 Towards Automated Kernel Generation in the Era of LLMs

🧠 ArXiv: https://arxiv.org/abs/2601.15727