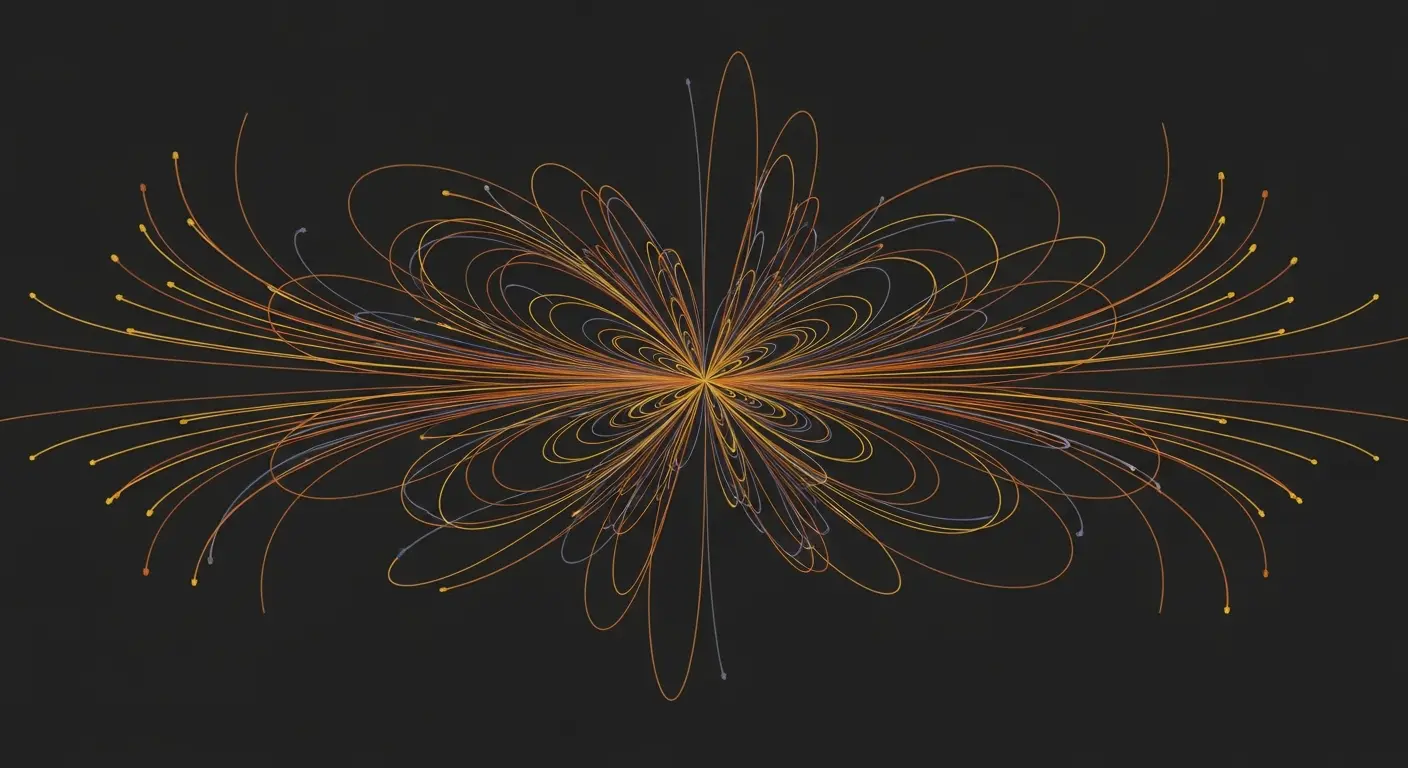

Researchers led by Amit Saha at École Normale Supérieure (Université PSL, CNRS, INRIA) have identified a way to reduce the overhead of verifying quantum computations. Saha and colleagues show that data already acquired during standard verification protocols can be repurposed to continuously monitor the noise characteristics of a quantum machine. Recycling test rounds in this way reduces the repetition required in quantum communication-based protocols, which matters given the limited number of qubits available in today's quantum processors. The findings highlight the flexibility of these protocols and strengthen the case for integrating them early in the development of quantum computing hardware.

Security check data now enables continuous quantum server calibration

Repetition counts in quantum communication-based verification protocols can be reduced from O(log(1/ε)) to a potentially lower value, which depends on how efficiently the noise model is learned, by making use of data already gathered during security checks. Previously, continuously monitoring the parameters of a quantum server’s noise model was largely impractical without interrupting ongoing computation. The difficulty lies in the delicate nature of quantum states, which are easily disturbed by measurement or interaction. By repurposing data from test rounds, a server can keep refining its picture of its own error characteristics, much like filtering static from a radio signal to improve clarity. This continuous calibration helps maintain computational fidelity over extended periods.
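As a rough illustration of what continuous refinement could look like, the sketch below keeps a running estimate of a single test-round failure probability and updates it one test outcome at a time. Everything here is an assumption made for illustration: the one-parameter noise model, the `NoiseTracker` name, and the `forget` discount factor do not come from the paper, whose actual noise model and estimator are not described in this article.

```python
from dataclasses import dataclass


@dataclass
class NoiseTracker:
    """Running Beta(alpha, beta) belief about a test-round failure probability.

    Hypothetical helper: a one-parameter stand-in for a real noise model.
    """
    alpha: float = 1.0    # pseudo-count of failed test rounds (uniform prior)
    beta: float = 1.0     # pseudo-count of passed test rounds (uniform prior)
    forget: float = 0.98  # assumed discount so old rounds fade if noise drifts

    def update(self, failed: bool) -> None:
        # Discount past evidence, then add the newest test-round outcome.
        self.alpha = self.forget * self.alpha + (1.0 if failed else 0.0)
        self.beta = self.forget * self.beta + (0.0 if failed else 1.0)

    @property
    def estimate(self) -> float:
        # Posterior mean of the failure probability.
        return self.alpha / (self.alpha + self.beta)


if __name__ == "__main__":
    tracker = NoiseTracker()
    for outcome in [False, False, True, False, False]:
        tracker.update(outcome)
    print(f"current failure-rate estimate: {tracker.estimate:.3f}")
```

The discount factor is one simple way to let the estimate follow a drifting device; setting `forget=1.0` recovers a plain count of passes and failures.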

The protocol builds on the established framework of Verifiable Blind Quantum Computing (VBQC), a technique that lets a client delegate a quantum computation to a server without revealing the input data. VBQC randomly interleaves computation rounds with dedicated test rounds, and its security rests on detecting deviations from the expected outcomes of Clifford computations. Clifford computations are a restricted class of quantum circuits that can be simulated efficiently on a classical computer, so the client knows in advance what a correct test round should return. The researchers show that the data generated during these tests, traditionally discarded once the computation has been accepted, can be analysed to extract information about the server’s noise profile. That profile covers error sources such as qubit decoherence, gate infidelity, and measurement errors. By characterising these errors, the server can apply error mitigation techniques and improve the accuracy of subsequent computations. Recycling the data eases the burden on quantum computers with limited qubit numbers and broadens the range of tasks that can be verified in practice. It is a pragmatic optimisation for near-term development: it accepts the limitations of current quantum processors and aims to get the most out of them.
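The interleaving and recycling idea can be sketched with a purely classical toy simulation. It does not model blindness or any quantum operation; it assumes, for illustration only, that each test round fails independently with a fixed probability `p_fail`. The function names, the acceptance `threshold`, and the use of a Hoeffding interval are choices made for this sketch, not details taken from the paper.

```python
import math
import random


def run_session(n_rounds, n_tests, p_fail, threshold, seed=0):
    """Toy session: hide n_tests test rounds among n_rounds total rounds."""
    rng = random.Random(seed)
    # Test rounds sit at random positions the server cannot predict.
    test_positions = set(rng.sample(range(n_rounds), n_tests))
    failures = 0
    for r in range(n_rounds):
        if r in test_positions:
            # Test round: the expected outcome is known, so a mismatch
            # is counted as a failure (assumed i.i.d. with rate p_fail).
            failures += rng.random() < p_fail
        # Computation rounds give no pass/fail signal and are skipped here.
    accepted = failures / n_tests < threshold
    return accepted, failures


def failure_rate_interval(failures, n_tests, delta=0.05):
    """Hoeffding interval covering the true failure rate w.p. >= 1 - delta."""
    p_hat = failures / n_tests
    half_width = math.sqrt(math.log(2 / delta) / (2 * n_tests))
    return max(0.0, p_hat - half_width), min(1.0, p_hat + half_width)


if __name__ == "__main__":
    accepted, failures = run_session(
        n_rounds=400, n_tests=200, p_fail=0.05, threshold=0.15
    )
    low, high = failure_rate_interval(failures, n_tests=200)
    print(f"accepted={accepted}, failures={failures}")
    print(f"recycled noise estimate: [{low:.3f}, {high:.3f}]")
```

The same failure count serves two purposes: the accept/reject decision that verification needs, and an interval estimate of the noise rate that would otherwise require separate calibration runs.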

Quantum computation verification typically involves a trade-off between server workload and communication overhead. Protocols designed to avoid complex cryptographic primitives often place the burden of repetition on the quantum machine itself, requiring many redundant computations to ensure accuracy. This is a problem because each quantum operation carries a chance of error, and errors accumulate with every additional operation. Simply adding more tests is not sustainable given the limited qubit count (even the largest quantum processors today offer from a few hundred to roughly a thousand physical qubits) and the error-prone nature of the hardware. The decoherence time of qubits, the duration for which they retain quantum information, is a further limiting factor. Data traditionally used only to confirm a computation’s validity can now also monitor the server’s internal noise characteristics, lessening the demand for an ever-growing number of tests. Verification thus moves from checking only the final result to continuously assessing the server’s performance.

From the provider’s perspective, this work reveals a new use for data generated during standard verification procedures. The data, collected to guarantee computational accuracy for the client, can also be used to continuously monitor the server’s internal noise characteristics, reducing the need for separate, dedicated calibration routines. Such routines are time-consuming and resource-intensive, requiring the server to run a series of known computations to characterise its error profile. Folding calibration into verification lowers the overall overhead, a real benefit on current machines where every qubit and every operation is precious. The server can continuously refine its understanding of its own error characteristics, which supports more reliable and scalable quantum computing services. The implications extend to cloud-based quantum computing platforms, where clients rely on remote servers to perform computations and where better monitoring strengthens trust and accountability. The ability to continuously monitor and mitigate errors will also matter for fault-tolerant quantum computation, a long-term goal of the field.

The reduction in required repetitions, from O(log(1/ε)) to a potentially much lower value that depends on how efficiently the noise model is learned, translates directly into lower quantum communication costs and fewer computational resources. The parameter ε sets the target error level: a smaller ε means higher confidence in the computation’s correctness. By learning the noise model efficiently, the server can predict and correct errors more effectively, reducing the need for redundant computations. This optimisation is particularly relevant for applications requiring high precision, such as quantum chemistry simulations and materials discovery, where even small errors can significantly affect the results. The research offers a pathway towards more practical and trustworthy quantum computing systems.
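To see where a logarithmic repetition count comes from, consider a generic textbook setting rather than the paper's own analysis: a computation is repeated n times, each run is wrong independently with probability p below 1/2, and the majority answer is kept. Hoeffding's inequality bounds the chance of a wrong majority by exp(-2n(1/2 - p)²), so n ≥ ln(1/ε) / (2(1/2 - p)²) runs suffice for error at most ε. The function name and the i.i.d. error model below are assumptions made for this sketch.

```python
import math


def repetitions_needed(eps: float, p_err: float) -> int:
    """Repetitions so a majority vote errs with probability at most eps.

    Uses the Hoeffding bound exp(-2 * n * (1/2 - p_err)**2) <= eps.
    Illustrative only: this is not the bound derived in the paper.
    """
    if not 0 < eps < 1:
        raise ValueError("eps must lie strictly between 0 and 1")
    if not 0 <= p_err < 0.5:
        raise ValueError("p_err must lie in [0, 0.5)")
    gap = 0.5 - p_err  # margin between the per-run error rate and 1/2
    return math.ceil(math.log(1 / eps) / (2 * gap ** 2))


if __name__ == "__main__":
    for eps in (1e-3, 1e-6):
        for p_err in (0.1, 0.3):
            n = repetitions_needed(eps, p_err)
            print(f"eps={eps:g}, p_err={p_err}: n >= {n}")
```

Squaring the target error (from 10⁻³ to 10⁻⁶) only doubles the repetition count, which is the O(log(1/ε)) behaviour. The constant in front depends strongly on the per-run error rate, which gives a sense of why a better-characterised, and therefore better-mitigated, noise level can reduce how many repetitions are needed.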

The researchers demonstrated that data collected during quantum computation verification can also be used to continuously monitor a quantum server’s noise characteristics. This integration of monitoring into the verification process reduces the computational overhead for the service provider, which is particularly important given the limited number of qubits available on current quantum machines. By efficiently learning the noise model, the server can more accurately predict and correct errors, reducing the need for repeated computations to ensure a desired level of accuracy. This work highlights the versatility of interactive verification protocols and suggests their early integration into the development of quantum computing systems.

👉 More information

🗞 Noise Inference by Recycling Test Rounds in Verification Protocols

🧠 ArXiv: https://arxiv.org/abs/2603.30015