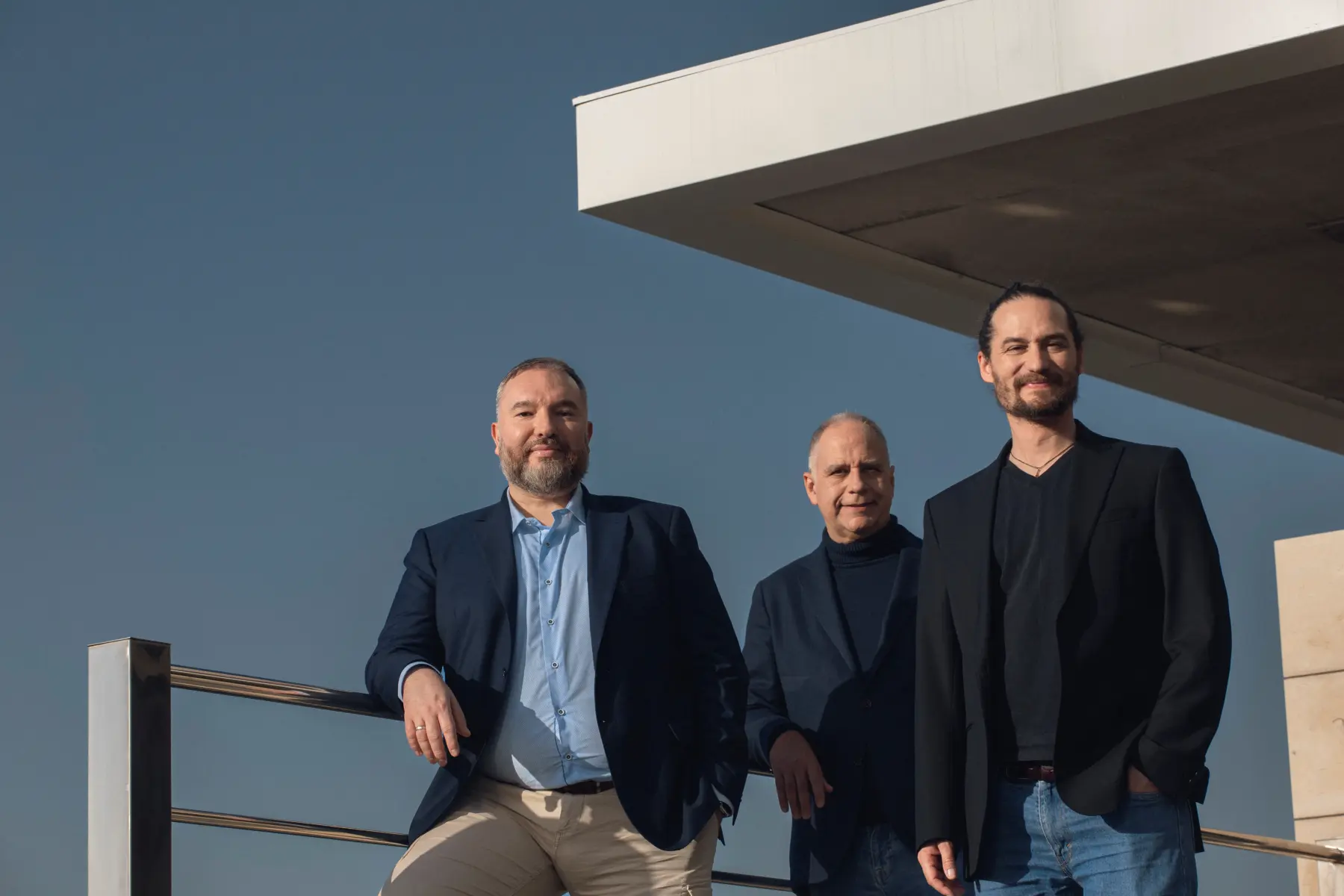

PwC and Multiverse Computing announced a strategic agreement on November 19, 2025, to accelerate enterprise adoption of optimized artificial intelligence solutions. The collaboration centers on Multiverse Computing’s CompactifAI platform, which is reported to compress Large Language Models (LLMs) by up to 95% with a precision loss of only 2-3%. This quantum-inspired compression significantly reduces computational demands and storage requirements, enabling both cloud and on-premise AI deployments with enhanced data security. The partnership aims to unlock new AI use cases, particularly in resource-constrained environments, and to drive measurable business value across key sectors.

Partnership Accelerates Efficient and Secure AI Integration

The partnership is intended to accelerate AI integration within organizations, with a focus on efficiency and security. Central to the collaboration is Multiverse’s CompactifAI platform, which compresses AI models by up to 95% while retaining 97-98% of the original model’s accuracy. This compression reduces storage and computational demands, allowing faster deployments and lower energy consumption, both of which matter for sustainability initiatives.

CompactifAI’s architecture enables versatile AI deployment, functioning seamlessly on both cloud infrastructures and on-premise servers. This flexibility is crucial for organizations prioritizing data security and governance, particularly within regulated industries. Notably, the platform unlocks AI applications in disconnected environments – such as remote industrial sites – by reducing model size and bandwidth requirements. This expands AI’s potential beyond constantly connected scenarios.

PwC is immediately adopting CompactifAI internally, becoming the partnership’s inaugural client. This move will allow PwC teams to directly experience performance gains and refine their expertise, strengthening their ability to advise clients. Beyond the technology, the alliance includes training programs designed to foster responsible and measurable AI adoption across diverse business and technical teams, ensuring sustainable long-term value.

CompactifAI: Model Compression and Key Applications

CompactifAI is a platform focused on AI model compression. It reduces the computational demands of artificial intelligence, a critical step toward wider implementation. CompactifAI achieves up to 95% compression of Large Language Models (LLMs) with a precision loss of only 2-3%. This efficiency allows deployment on less powerful hardware, lowering costs and energy consumption, a key benefit for sustainable AI practices.
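To make the 95% figure concrete, the following is a rough back-of-the-envelope sketch. The 7-billion-parameter model and its 16-bit storage format are hypothetical values chosen for illustration, not figures from PwC or Multiverse Computing, and the function name is likewise an assumption.

```python
def compressed_size_gb(num_params: float, bytes_per_param: float, compression: float) -> float:
    """Return the model size in GB after removing `compression` (0.0-1.0) of its footprint."""
    original_gb = num_params * bytes_per_param / 1e9
    return original_gb * (1.0 - compression)


# Hypothetical model: 7 billion parameters stored as 16-bit floats (2 bytes each).
original = compressed_size_gb(7e9, 2, 0.0)
# The same model at the "up to 95%" compression quoted for CompactifAI.
compressed = compressed_size_gb(7e9, 2, 0.95)

print(f"original: {original:.1f} GB, compressed: {compressed:.1f} GB")
```

Under these assumptions, a model that would need roughly 14 GB of storage shrinks to well under 1 GB, which is the kind of reduction that makes smaller servers or edge devices plausible targets.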

The impact of CompactifAI extends across multiple sectors. Applications are emerging in Finance for portfolio optimization and risk management, in Industrial settings for predictive maintenance and AI in isolated environments, and in Transport & Logistics for route design and resource allocation. Crucially, the compressed models enable AI functionality without constant internet connectivity – opening doors for secure, on-premise deployments and use cases in remote or regulated industries.

PwC is the initial adopter of CompactifAI, integrating the technology into its internal models to realize performance gains and build expertise. Multiverse Computing reports more than 100 customers globally, including Iberdrola and Bosch, and has secured approximately $250M in funding. Beyond the technology, the partnership includes training programs to foster responsible and measurable AI adoption within organizations, addressing both technical and business considerations.

Beyond the quantum-inspired approach Multiverse describes, the compression behind CompactifAI likely also draws on established techniques such as quantization and model pruning. Quantization reduces the bit precision used to store model weights, for example moving from standard 32-bit floating-point numbers to lower-precision formats such as 8-bit integers. Pruning removes weights that contribute little to the model’s output. Both shrink the model footprint with limited loss of accuracy, easing the I/O and memory bottlenecks that affect large-scale transformer architectures.
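As an illustration of the quantization idea described above, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a generic textbook technique, not CompactifAI’s actual method, and the weight values and function names are made up for the example.

```python
from array import array


def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to signed 8-bit integers."""
    max_abs = max(abs(w) for w in weights)
    # One scale factor for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the quantized integers."""
    return [v * scale for v in q]


# Illustrative weights stored as 32-bit floats (array type code "f").
weights = array("f", [0.12, -0.53, 0.98, -1.27, 0.0, 0.33])
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case reconstruction error is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"bytes before: {weights.itemsize * len(weights)}, after: {q.itemsize * len(q)}")
print(f"max reconstruction error: {max_error:.5f}")
```

Note that moving from 32-bit to 8-bit storage alone yields a 4x (75%) reduction, so a pipeline reaching the 95% figure quoted in this article would have to combine quantization with further techniques.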

The ability to operate in disconnected or resource-constrained edge environments also addresses a practical limitation of current AI infrastructure, which typically assumes a reliable connection to cloud-hosted models. Substantial model compression reduces both the bandwidth needed to deliver a model and the local compute needed to run it, making real-time AI decision-making viable at remote sites such as deep-sea monitoring facilities or agricultural sensor networks far from reliable network connectivity.
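The bandwidth point can be sketched with simple arithmetic. The model sizes and the 2 Mbps link speed below are hypothetical values for illustration only, and the helper name is an assumption.

```python
def transfer_hours(size_gb: float, link_mbps: float) -> float:
    """Estimate hours needed to send `size_gb` gigabytes over a `link_mbps` megabit/s link."""
    bits = size_gb * 8e9                  # gigabytes -> bits
    seconds = bits / (link_mbps * 1e6)    # megabits per second -> bits per second
    return seconds / 3600


# Hypothetical remote site with a 2 Mbps uplink.
full_model = transfer_hours(14.0, 2.0)    # uncompressed 14 GB model
small_model = transfer_hours(0.7, 2.0)    # same model after 95% compression

print(f"full model: {full_model:.1f} h, compressed model: {small_model:.1f} h")
```

The estimate ignores protocol overhead and link interruptions, so real transfer times would be longer, but the ratio between the two cases stays the same.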

In the broader context of sustainable AI development, efficiency is directly linked to the energy consumption of computation. Running massive LLMs on dedicated hardware consumes vast amounts of electricity. By allowing comparable performance on less powerful, lower-TDP (Thermal Design Power) hardware, CompactifAI helps organizations operationalize AI practices that align with aggressive corporate sustainability goals and manage total cost of ownership.

While the platform excels at general model compression, continued research must focus on maintaining high fidelity across highly specialized modalities, such as multimodal inputs combining imagery, audio, and complex time-series data. Future iterations will need to adapt compression algorithms to preserve the unique contextual nuances inherent in mixed data types, ensuring that the compressed model retains all necessary semantic information for nuanced industrial applications.