Maintaining and updating large language models presents a significant challenge, as they require continuous refinement to reflect evolving information, and traditional retraining is computationally expensive. Qingyuan Liu from Columbia University, Jia-Chen Gu, and Yunzhi Yao from Zhejiang University, along with Hong Wang from the University of Science and Technology of China and Nanyun Peng from the University of California, Los Angeles, investigate how to improve sequential editing, a more efficient method for updating these models. Their research reveals a critical link between maintaining uniform distribution of neuron weights, a property they quantify using Hyperspherical Energy, and preventing catastrophic forgetting during editing. By developing SPHERE, a novel regularization strategy that stabilizes these weight distributions and projects new knowledge onto a complementary space, the team demonstrates substantial improvements in editing capability, an average of 16.41% over existing methods, while preserving the model’s overall performance, offering a promising path towards reliable and scalable knowledge editing.

Sequential Editing Improves LLM Robustness

Scientists investigated a new technique, SPHERE, to enhance the robustness and quality of sequential editing in large language models (LLMs). Sequential editing involves making targeted changes to a model’s knowledge over time, but standard methods often lead to knowledge loss and reduced generation quality. SPHERE aims to overcome these challenges by carefully managing how edits are applied within the model’s internal structure, significantly improving knowledge retention and the overall quality of generated text. The study evaluated SPHERE through a combination of general ability tests, assessing reasoning, natural language inference, and question answering, and detailed case studies examining specific editing prompts and resulting text. Results consistently showed that SPHERE outperforms existing methods, preserving coherence and generating more natural and informative responses.

Hyperspherical Energy Tracks Editing Model Stability

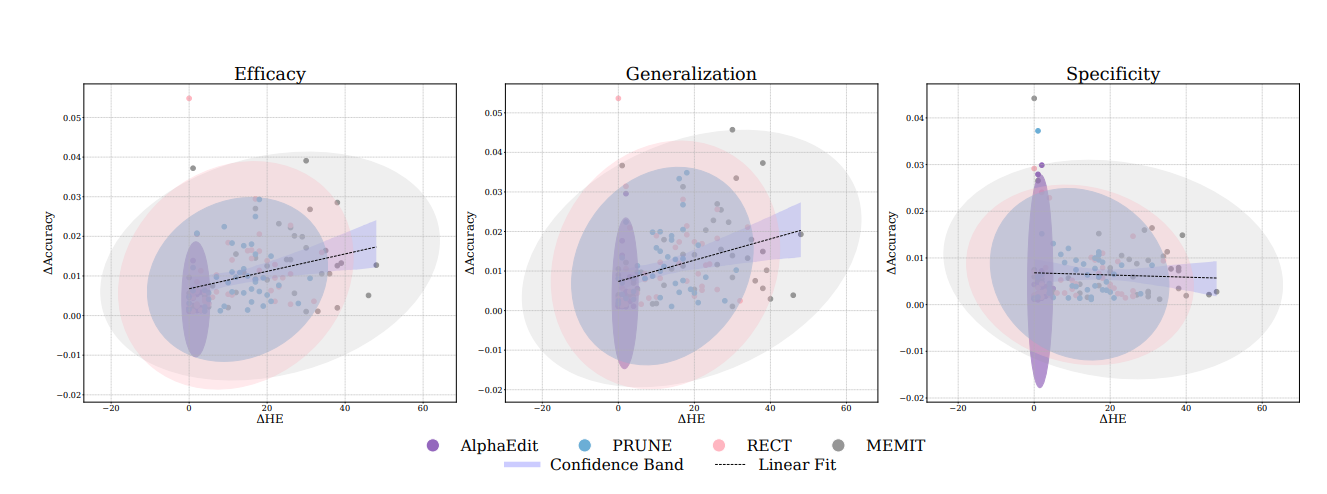

Researchers have discovered a crucial link between the distribution of neuron weights and the stability of large language models during sequential editing. They hypothesized that maintaining a uniform distribution of these weights on a hypersphere is essential for preserving knowledge and preventing performance degradation. The team developed a new metric, Hyperspherical Energy (HE), to quantify this uniformity and tracked its dynamics during editing, revealing a strong correlation between HE fluctuations and editing performance. Extensive empirical studies conducted on LLaMA3 and Qwen2.5 models demonstrated that editing failures consistently coincided with significant increases in HE, indicating that disruptions to hyperspherical uniformity directly impact model performance. Advanced editing methods proved more effective at preserving HE throughout the editing process, suggesting an implicit regulation of weight distribution.
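To make the idea of Hyperspherical Energy concrete, here is a minimal sketch in Python with NumPy. It assumes the commonly used Riesz-style form of the metric: each neuron's weight vector is normalized onto the unit sphere, and the energy is the average of inverse pairwise distances raised to a power `s`. The article does not give the exact formula, so the function name `hyperspherical_energy`, the parameter `s`, and the averaging are illustrative assumptions rather than the authors' definition.

```python
import numpy as np


def hyperspherical_energy(weights: np.ndarray, s: float = 1.0, eps: float = 1e-8) -> float:
    """Mean pairwise energy of the rows of `weights` after projection onto the unit sphere.

    Assumed form (not taken from the article): the average over neuron pairs of
    ||w_i - w_j||^(-s) for s > 0, or log(1 / ||w_i - w_j||) for s == 0.
    Lower values mean the neurons are spread more uniformly.
    """
    # Normalize each neuron (row) so only its direction matters.
    unit = weights / (np.linalg.norm(weights, axis=1, keepdims=True) + eps)
    # Pairwise Euclidean distances between all neuron directions.
    diff = unit[:, None, :] - unit[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    # Keep each unordered pair once (upper triangle, excluding the diagonal).
    rows, cols = np.triu_indices(unit.shape[0], k=1)
    pair_dist = np.maximum(dist[rows, cols], eps)
    if s == 0:
        energy = np.log(1.0 / pair_dist)
    else:
        energy = pair_dist ** (-s)
    return float(energy.mean())


rng = np.random.default_rng(0)
# Hypothetical "healthy" layer: neuron directions scattered at random.
spread = rng.normal(size=(64, 16))
# Hypothetical "collapsed" layer: all neurons crowd around one direction.
clustered = rng.normal(size=(1, 16)) + 0.01 * rng.normal(size=(64, 16))

he_spread = hyperspherical_energy(spread)
he_clustered = hyperspherical_energy(clustered)
print(f"spread: {he_spread:.3f}  clustered: {he_clustered:.3f}")
```

In this toy setup the clustered layer has a much higher energy than the spread one, which mirrors the article's observation that editing failures coincide with sharp increases in HE.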

Hyperspherical Uniformity Predicts Editing Performance

Scientists have achieved a breakthrough in mitigating performance degradation during sequential editing of large language models (LLMs). The work centers on the concept of hyperspherical uniformity, describing how evenly neuron weights are distributed, and its crucial role in preserving knowledge during updates. Researchers quantified this uniformity using Hyperspherical Energy (HE), and experiments revealed a strong correlation between HE dynamics and editing performance across six widely used editing methods. Theoretical analysis further confirmed that variations in HE establish a lower bound on interference with the original pretrained knowledge, providing a principled explanation for the robustness of state-of-the-art editing methods.

Motivated by these findings, the team developed SPHERE (Sparse Projection for Hyperspherical Energy-Regularized Editing), an innovative regularization strategy that stabilizes neuron weight distributions and enables reliable sequential updates. Evaluations on LLaMA3 and Qwen2.5 demonstrate that SPHERE outperforms the best baseline editing method by an average of 16.41%, while more faithfully preserving general performance. Furthermore, SPHERE improves existing editing methods by 38.71% on average, offering a pathway toward reliable and scalable knowledge editing.
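The projection idea can be illustrated with a short sketch. The article does not describe how SPHERE constructs its complementary space, so the code below makes a loud assumption: the "protected" directions are the top `k` principal directions of the row-normalized pretrained weights, and an edit is projected onto their orthogonal complement. The function name `project_out_principal_directions` and the choice of `k` are hypothetical, and this should be read as an illustration of the general technique, not as the authors' algorithm.

```python
import numpy as np


def project_out_principal_directions(delta: np.ndarray, weights: np.ndarray, k: int) -> np.ndarray:
    """Remove from `delta` any component along the top-k principal directions of `weights`.

    Assumption (not from the article): rows are neurons, and the directions to
    protect are the leading right singular vectors of the row-normalized weights.
    """
    # Normalize neurons so the principal directions reflect geometry on the sphere.
    unit = weights / np.linalg.norm(weights, axis=1, keepdims=True)
    # Rows of vt are orthonormal directions, ordered by singular value.
    _, _, vt = np.linalg.svd(unit, full_matrices=False)
    top = vt[:k]
    # Subtract the component of each update row that lies in span(top).
    return delta - (delta @ top.T) @ top


rng = np.random.default_rng(0)
pretrained = rng.normal(size=(32, 8))      # hypothetical layer: 32 neurons, 8 inputs
raw_update = 0.1 * rng.normal(size=(32, 8))  # hypothetical edit from some editing method

safe_update = project_out_principal_directions(raw_update, pretrained, k=3)
edited = pretrained + safe_update
print("update norm before/after:", np.linalg.norm(raw_update), np.linalg.norm(safe_update))
```

Because the projected update has no component along the protected directions, the dominant structure of the pretrained weights is left untouched by the edit, which is the intuition behind applying new knowledge in a complementary space.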

Hyperspherical Uniformity Stabilises Language Model Editing

This research demonstrates the critical role of hyperspherical uniformity in maintaining stability during sequential editing of large language models. The team discovered a strong correlation between the preservation of uniform neuron weight distributions, quantified through Hyperspherical Energy, and successful knowledge updates, alongside theoretical proof establishing a link between uniformity and knowledge retention. To address the problem of catastrophic forgetting during editing, they developed SPHERE, a regularization strategy that projects new knowledge onto a space that minimizes disruption to existing weight distributions. Extensive evaluations on LLaMA3 and Qwen2.5 models show that SPHERE improves editing capability by an average of 16.41% compared to existing methods, while also better preserving the model’s overall performance and weight geometry. Furthermore, SPHERE can be readily integrated as an enhancement to existing editing techniques, yielding an additional average improvement of 38.71%. These results establish SPHERE as a theoretically sound and empirically effective solution for reliable, large-scale model editing. The authors acknowledge that while SPHERE enhances editing reliability, responsible deployment requires rigorous validation, transparent reporting, and careful oversight to mitigate potential risks associated with altering stored knowledge.

🗞 Energy-Regularized Sequential Model Editing on Hyperspheres

🧠 ArXiv: https://arxiv.org/abs/2510.01172