Lumai has launched the Iris Nova server, the first optical computing system to run billion-parameter large language models (LLMs) in real time, a significant step toward overcoming the limitations of artificial intelligence infrastructure. The server computes with light rather than with electrons in silicon, enabling faster inference and cutting energy consumption by up to 90% compared to conventional architectures. This addresses the growing strain on data centers, whose global power demand is projected to double by 2030. “As the industry transitions into the inference era, we are simultaneously crossing the threshold into the post-silicon era,” said Dr. Xianxin Guo, CEO and Co-Founder of Lumai, explaining that by shifting computation from electrons to photons, the company can deliver an order-of-magnitude increase in performance with significant energy savings.

Lumai Iris Nova Server Enables Real-Time Billion-Parameter LLM Inference

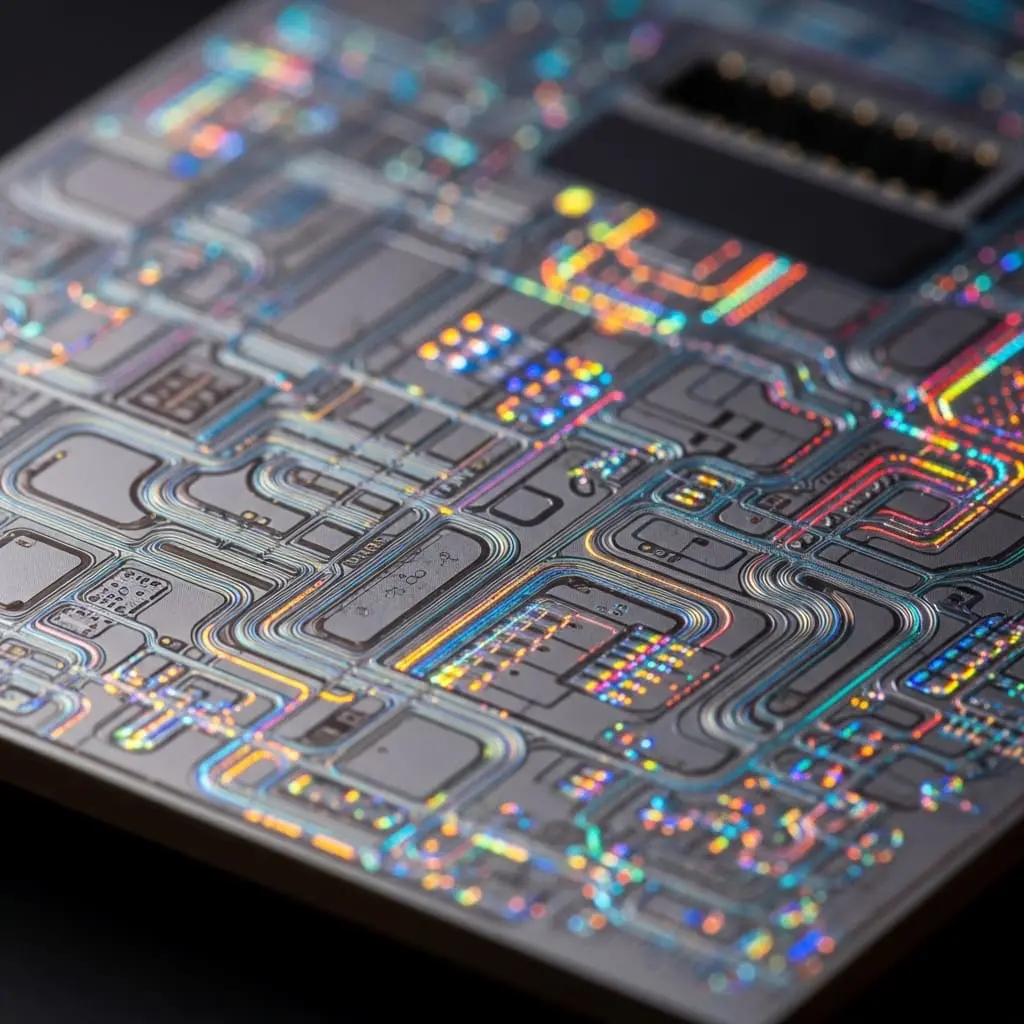

Unlike traditional systems reliant on silicon, Lumai’s servers accelerate workloads using light, a fundamental shift designed to overcome the limitations of existing architectures and address the escalating demands on data centers. This approach allows for faster inference and higher execution efficiency while reducing energy consumption by as much as 90% compared to conventional systems. The launch on April 28, 2026, arrives as the AI industry pivots toward deployment and inference and confronts a challenge Lumai terms “The Energy Wall”: the International Energy Agency projects that global data center power demand will double by 2030, necessitating more efficient computing methods. Lumai’s technology, rooted in the University of Oxford research from which the company was spun out in 2021, uses light in a three-dimensional volume to bypass the constraints of two-dimensional silicon chips, achieving massive spatial parallelism and high token throughput at low cost.
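To put the headline figure in perspective, the arithmetic behind "up to 90% lower energy" can be sketched in a few lines. This is only an illustration: the baseline of 1.0 joule per token is a hypothetical placeholder, not a figure from Lumai, and the function names are made up for this example.

```python
def energy_after_reduction(baseline_joules: float, reduction_fraction: float) -> float:
    """Energy used after cutting a baseline by the given fraction (0.9 means 90% lower)."""
    if not 0.0 <= reduction_fraction < 1.0:
        raise ValueError("reduction_fraction must be in [0, 1)")
    return baseline_joules * (1.0 - reduction_fraction)


def efficiency_gain(reduction_fraction: float) -> float:
    """How many times more work fits into the same energy budget."""
    if not 0.0 <= reduction_fraction < 1.0:
        raise ValueError("reduction_fraction must be in [0, 1)")
    return 1.0 / (1.0 - reduction_fraction)


# Hypothetical baseline: 1.0 joule per generated token on a conventional system.
baseline_joules_per_token = 1.0
optical_joules_per_token = energy_after_reduction(baseline_joules_per_token, 0.90)

print(f"{optical_joules_per_token:.2f} J per token")
print(f"{efficiency_gain(0.90):.0f}x tokens per joule")
```

In other words, a 90% reduction for the same workload is equivalent to roughly ten times as much work per unit of energy, which is why the upper end of the claim matters so much for power-constrained data centers.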

The Iris Nova server employs a hybrid processor, combining digital processing for system control with an optical tensor engine for core mathematical operations, ensuring seamless integration into existing data center environments. The Advanced Research and Invention Agency (ARIA) underscored the urgency, stating, “The demands on existing AI processors necessitate an urgent search for alternative scaling pathways,” and expressed excitement to partner with Lumai in exploring a shift beyond traditional digital computing. The Lumai Iris Nova inference server is currently available for evaluation by hyperscalers, neo-clouds, enterprises, and research institutions.

The pursuit of ever-more powerful artificial intelligence is increasingly constrained not by algorithmic innovation, but by the physical limits of silicon-based computing. Traditional processors are approaching their limits in scaling, power, and thermal efficiency, offering diminishing returns with each new generation while demanding exponentially more energy. Lumai’s optical architecture replaces electrons with photons to perform calculations, leveraging spatial parallelism to execute millions of operations simultaneously within a three-dimensional volume.
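The division of labor in a hybrid processor, and the reason matrix arithmetic suits spatial parallelism, can be illustrated with a small software model. This is a rough sketch under stated assumptions, not Lumai's design: `tensor_engine_matvec` is a hypothetical stand-in for the optical tensor engine, the decision to keep the nonlinearity on the digital side is an assumption made for illustration, and everything here runs as ordinary sequential Python.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def tensor_engine_matvec(weights: Matrix, x: Vector) -> Vector:
    """Hypothetical stand-in for the tensor engine: one matrix-vector product.

    Each output element is an independent dot product, so in principle all of
    them could be computed at once instead of one after another.
    """
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def relu(v: Vector) -> Vector:
    """Element-wise nonlinearity, kept on the digital side in this sketch (an assumption)."""
    return [max(0.0, value) for value in v]


def run_layers(layers: List[Matrix], x: Vector) -> Vector:
    """Digital control loop: sequence the layers and hand each product to the engine."""
    for weights in layers:
        if len(weights[0]) != len(x):
            raise ValueError("layer width does not match input length")
        x = relu(tensor_engine_matvec(weights, x))
    return x


def multiply_accumulates(rows: int, cols: int) -> int:
    """Multiply-accumulate operations in one rows x cols matrix-vector product."""
    return rows * cols


# Tiny illustrative network; the weights are arbitrary example values.
layers = [
    [[1.0, -1.0], [0.5, 0.5]],
    [[2.0, 0.0], [0.0, -1.0]],
]
print(run_layers(layers, [3.0, 1.0]))    # [4.0, 0.0]
print(multiply_accumulates(1024, 1024))  # 1048576
```

A single 1024 by 1024 matrix-vector product already contains just over a million independent multiply-accumulate operations, which gives a sense of scale for the "millions of operations" that a spatially parallel engine would aim to perform at once.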

By shifting the computation paradigm from electrons to photons, Lumai can deliver an order-of-magnitude increase in performance with significant energy savings.

Dr. Xianxin Guo, CEO and Co-Founder of Lumai

University of Oxford Research Drives Lumai’s AI Infrastructure Innovation

Lumai’s work in optical computing is rooted in academic research at the University of Oxford; the company was spun out of the university’s optics research in 2021 with a mission to address the escalating demands of artificial intelligence infrastructure. Its optical compute system changes how AI calculations are performed: the technology uses light in a three-dimensional volume, circumventing the limitations inherent in traditional two-dimensional silicon chips. This approach leverages massive spatial parallelism, enabling millions of operations to occur simultaneously and significantly improving token throughput for demanding workloads. The innovation arrives at a critical juncture, as data centers face increasing pressure from the “Energy Wall” and the limitations of conventional silicon scaling.

Lumai is leading the charge in demonstrating that optical processors could provide one such pathway, and ARIA is excited to partner with them to explore the shift beyond our traditional digital computing paradigm.

Suraj Bramhavar, Program Director at ARIA