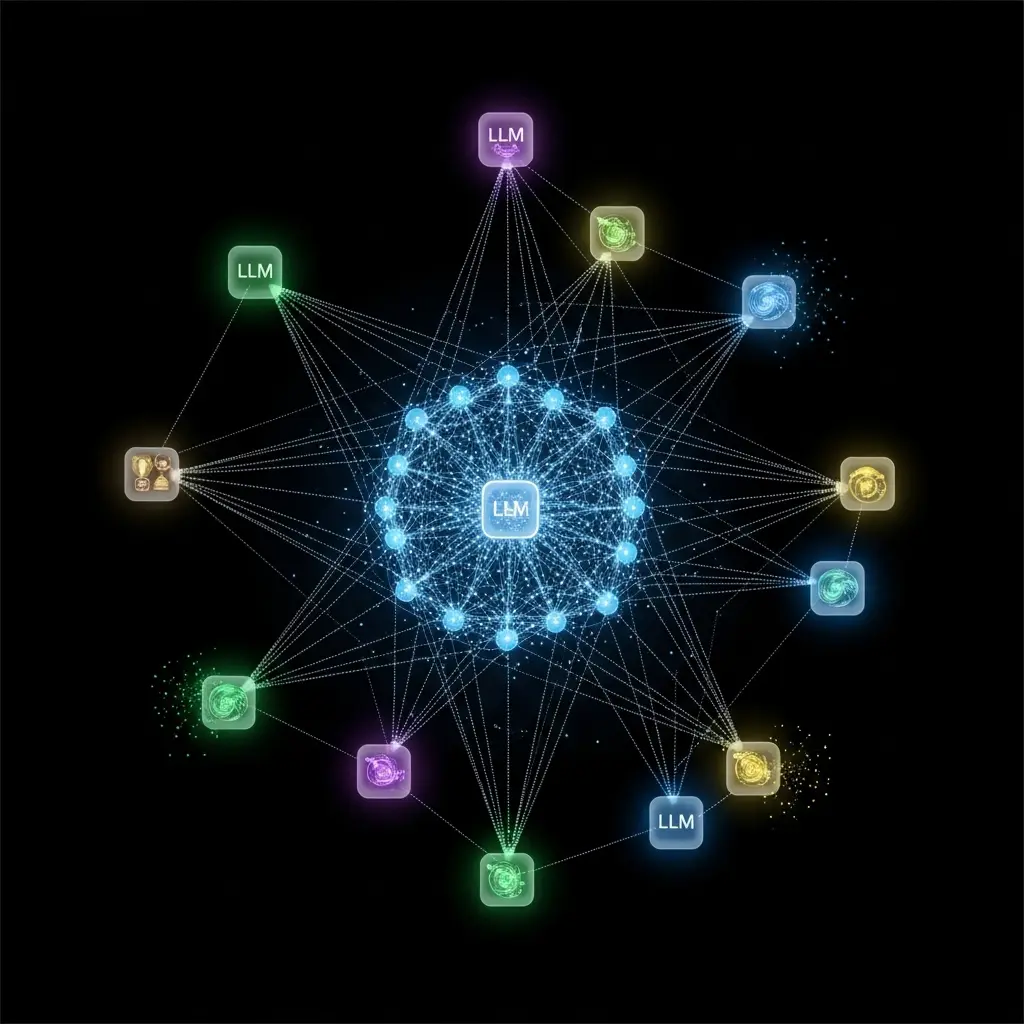

Researchers are increasingly investigating the synergy between large language models (LLMs) and traditional agent programming techniques, a crucial area given the rapid development of Generative AI and Agentic AI systems. Rem Collier, Katharine Beaumont, and Andrei Ciortea, from University College Dublin and the University of St.Gallen, detail their experiences building a prototype LLM integration for the ASTRA programming language in a new study. This work is significant because it bridges the gap between established agent toolkits and emerging LLM-powered agents, offering valuable insights into how existing expertise can inform the design of future agentic platforms and potentially enhance their capabilities. The paper showcases three practical implementations alongside a discussion of the lessons learned during development.

This research details the creation of ‘astra-langchain4j’, a library built on LangChain4j, an open-source Java framework for LLM integration, and incorporated into ASTRA since version 2.0.3. The functional prototype lets ASTRA agents draw on the reasoning and planning capabilities of LLMs, and introduces mechanisms for prompt creation and response processing together with a BeliefRAG system. ASTRA’s modular architecture was extended to incorporate LLM functionality, giving developers tools to define prompts, manage templates, and execute LLM calls within agent programs. The integrations are standardized, so agent code interacts with different LLM providers in a consistent way.

The library is freely available from Maven Central and integrates with the ASTRA Maven build system, so developers can add LLM functionality by declaring a Maven dependency. Several sample implementations demonstrate the library, including a ‘Joker Agent’ that generates jokes from predefined templates and agent beliefs. This agent uses the BeliefRAG mechanism, which draws on the agent’s existing beliefs to enrich prompts with relevant information, so that responses are more contextually relevant. The work establishes a practical pathway for integrating LLMs into existing agent frameworks, combining symbolic reasoning with generative capabilities. Future applications could include autonomous agents capable of more complex problem-solving, improved human-computer interaction, and more adaptable multi-agent systems.
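To make the idea of enriching a prompt from an agent's beliefs concrete, here is a minimal Java sketch. It is not the astra-langchain4j BeliefRAG implementation: the `Belief` record, the `augment` method, and the choice to select beliefs by predicate name are all assumptions made for illustration.

```java
import java.util.List;
import java.util.Set;
import java.util.stream.Collectors;

// Hypothetical sketch of belief-based prompt augmentation (not the library's API).
class BeliefRagSketch {
    // A belief such as likes(user, hedgehogs); this shape is an assumption.
    record Belief(String predicate, List<String> terms) {
        String asText() {
            return predicate + "(" + String.join(", ", terms) + ")";
        }
    }

    // Keep only beliefs whose predicate is considered relevant and
    // place them in front of the question as plain-text context.
    static String augment(String question, List<Belief> beliefBase, Set<String> relevantPredicates) {
        String context = beliefBase.stream()
                .filter(b -> relevantPredicates.contains(b.predicate()))
                .map(Belief::asText)
                .collect(Collectors.joining("\n"));
        return "Known facts:\n" + context + "\n\nQuestion: " + question;
    }

    public static void main(String[] args) {
        List<Belief> beliefs = List.of(
                new Belief("likes", List.of("user", "hedgehogs")),
                new Belief("position", List.of("3", "4")));
        // Only the likes/2 belief is passed on to the model.
        System.out.println(augment("Tell me a joke.", beliefs, Set.of("likes")));
    }
}
```

Filtering by predicate is only one possible retrieval strategy; a real system might rank beliefs by similarity to the question instead.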

The study takes a modular approach, using ASTRA’s existing module system to encapsulate LLM integrations and prompt templating. Each LLM integration module provides a standardised set of actions for interacting with the respective LLM service, ensuring consistency across implementations. To demonstrate the library’s capabilities, the team developed a “Joker” agent (Listing 1.3 in the paper) that generates jokes using a prompt template. This agent uses the Templater module to create a prompt with a binding for the animal subject, inserting “hedgehog” into the joke’s setup.
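The templating step can be illustrated with a small Java sketch. This is not the ASTRA Templater module: the `{{name}}` placeholder syntax, the `render` method, and the example template text are assumptions chosen for illustration.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper showing prompt templating with named bindings.
class PromptTemplateSketch {
    // Matches placeholders of the form {{name}} (assumed syntax).
    private static final Pattern PLACEHOLDER = Pattern.compile("\\{\\{(\\w+)\\}\\}");

    static String render(String template, Map<String, String> bindings) {
        Matcher m = PLACEHOLDER.matcher(template);
        StringBuilder out = new StringBuilder();
        while (m.find()) {
            String value = bindings.get(m.group(1));
            if (value == null) {
                // Fail loudly rather than send a half-filled prompt to the model.
                throw new IllegalArgumentException("No binding for: " + m.group(1));
            }
            m.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        String prompt = render("Tell me a joke about a {{animal}}.", Map.of("animal", "hedgehog"));
        System.out.println(prompt); // prints "Tell me a joke about a hedgehog."
    }
}
```

Rejecting unbound placeholders is a design choice of this sketch; it keeps template errors visible in the agent program instead of in the model's reply.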

The model then processes this prompt, generating a response which is subsequently printed to the console via the Console module. The system delivers a flexible framework for building complex agent behaviours driven by LLMs, enabling retrieval-augmented generation based on the agent’s internal belief base. This methodological innovation enables researchers to explore the synergistic potential of traditional agent toolkits and contemporary LLM technologies. The approach achieves a streamlined integration process, allowing developers to rapidly prototype and deploy LLM-powered agents within the ASTRA environment. The astra-langchain4j library, with its modular design and standardised actions, facilitates the creation of reusable components and promotes code maintainability, ultimately accelerating the development of sophisticated agentic systems.

LLM agents show repetition in simple games


Scientists investigated the integration of large language models (LLMs) with the ASTRA programming language, focusing on agent-based systems and toolkits. Initial experiments employed the chatgpt-4o-mini LLM within a tic-tac-toe agent, revealing instances where the model suggested previously played locations. While acknowledging this could stem from prompt design, researchers noted consistency with findings from other studies involving LLMs playing board games. As a comparative test, the team substituted chatgpt-4o-mini with Google’s gemini-1.5-flash, resulting in the ‘GeminiPlayer’ agent, which unfortunately demonstrated no performance improvement.
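One common mitigation for a model that proposes occupied squares is to validate each suggestion in ordinary code and re-prompt on failure. The Java sketch below illustrates that guard under stated assumptions: the LLM is stood in for by a plain `Function<String, String>`, and the prompt wording, board encoding, and `chooseMove` name are hypothetical rather than taken from the paper.

```java
import java.util.Iterator;
import java.util.List;
import java.util.function.Function;

// Hypothetical guard around an LLM move suggestion for tic-tac-toe.
class MoveGuardSketch {
    // board has 9 cells; ' ' marks a free cell. Returns a legal cell index
    // in 0..8, or -1 if the model never produces one within maxAttempts.
    static int chooseMove(char[] board, Function<String, String> llm, int maxAttempts) {
        String prompt = "Board: " + new String(board).replace(' ', '-')
                + ". Reply with a free cell index 0-8.";
        for (int attempt = 0; attempt < maxAttempts; attempt++) {
            String reply = llm.apply(prompt).trim();
            if (reply.matches("[0-8]")) {
                int cell = Integer.parseInt(reply);
                if (board[cell] == ' ') {
                    return cell; // legal move
                }
                prompt += " Cell " + cell + " is already taken; choose another.";
            } else {
                prompt += " Reply with a single digit only.";
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        char[] board = "    X    ".toCharArray(); // centre cell (index 4) is occupied
        // Stub model: first repeats the occupied cell, then picks a free one.
        Iterator<String> replies = List.of("4", "0").iterator();
        System.out.println(chooseMove(board, p -> replies.next(), 3)); // prints 0
    }
}
```

The guard does not make the model play better; it only ensures that an illegal suggestion never reaches the game.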

Advanced testing reveals current agent model limitations and flaws

Further investigation led to the exploration of more complex strategies, beginning with an implementation based on Anthropic’s Evaluator-Optimizer workflow. The resulting agent, however, failed to outperform the basic LLM player: the LLM occasionally misjudged blocking moves as unfavorable, and the agent sometimes entered a loop in which it rejected all valid options. The agent initially showed competitive performance against a linear player, even achieving consistent wins at one point, but subsequent runs on different days revealed instability, with the agent again losing to the linear player.

The paper details the development of the prototype LLM integration and presents three example implementations: a travel planner, a reflective Tic-Tac-Toe player, and a Towerworld block-stacking agent. Through these examples, the researchers gained insights into the capabilities and limitations of combining LLMs with established agent technologies. The findings suggest that LLMs can be readily integrated into agent-oriented programming languages thanks to supporting libraries such as LangChain4j.
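The Evaluator-Optimizer pattern pairs a generator with an evaluator whose feedback drives the next attempt. The Java sketch below shows the loop with a round limit and a fallback, which is one way to avoid the endless-rejection behaviour described above. Both roles are stubbed as plain functions; the `refine` method, the `ACCEPT` verdict string, and the fallback argument are assumptions for illustration, not the paper's implementation.

```java
import java.util.function.Function;

// Hypothetical bounded evaluator-optimizer loop with stubbed LLM roles.
class EvaluatorOptimizerSketch {
    // generator: takes the latest feedback ("" on the first round) and proposes a candidate.
    // evaluator: returns "ACCEPT" or a textual reason for rejection.
    static String refine(Function<String, String> generator,
                         Function<String, String> evaluator,
                         int maxRounds,
                         String fallback) {
        String feedback = "";
        for (int round = 0; round < maxRounds; round++) {
            String candidate = generator.apply(feedback);
            String verdict = evaluator.apply(candidate);
            if (verdict.equals("ACCEPT")) {
                return candidate;
            }
            feedback = "Rejected '" + candidate + "': " + verdict;
        }
        // The evaluator rejected everything: stop and use a safe default.
        return fallback;
    }

    public static void main(String[] args) {
        String move = refine(
                fb -> fb.isEmpty() ? "corner" : "centre",             // stub generator
                c -> c.equals("centre") ? "ACCEPT" : "does not block", // stub evaluator
                3,
                "first free cell");
        System.out.println(move); // prints "centre"
    }
}
```

In a game-playing agent the fallback could be any legal move chosen by ordinary code, so the agent always acts even when the evaluator is unreliable.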

Achieving robust agentic workflows using existing protocols


Many agentic workflow patterns commonly proposed for new agentic systems are achievable using existing multi-agent system technologies, such as the FIPA ACL Request Protocol. The research also indicates that complex workflows do not always require multiple agents, since a single agent can often implement expert-assessor patterns effectively. However, the experiments revealed limitations in LLMs’ contextual decision-making and complex reasoning abilities, demonstrated by failures in the Tic-Tac-Toe and Towerworld scenarios. The authors acknowledge that prompting LLMs is a challenging process, with even minor adjustments potentially affecting result quality. The study also notes a scarcity of research integrating LLMs into agent toolkits like ASTRA, highlighting a gap in the current literature. Future research could focus on refining prompting techniques and exploring more sophisticated methods for enhancing LLMs’ reasoning and decision-making capabilities within agent-based systems, potentially building on recent work concerning the Tower of Hanoi puzzle.
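As a rough illustration of how a delegation step maps onto the FIPA Request interaction, the Java sketch below models the participant side: a request is answered with a refuse, or with an agree followed by an inform or a failure. It is a simplified, in-memory sketch; the `Message` record, the `handle` method, and the rule that blank requests are refused are assumptions, and it is neither an ASTRA nor a FIPA-compliant implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

// Hypothetical, simplified participant for a FIPA-style request interaction.
class FipaRequestSketch {
    enum Performative { REQUEST, AGREE, REFUSE, INFORM, FAILURE }

    record Message(Performative performative, String sender, String receiver, String content) {}

    // Answers a REQUEST with REFUSE, or with AGREE followed by INFORM or FAILURE.
    // The task function stands in for the work, for example a call to an LLM.
    static List<Message> handle(Message request, Function<String, String> task) {
        List<Message> replies = new ArrayList<>();
        String me = request.receiver();
        String them = request.sender();
        if (request.content().isBlank()) {
            replies.add(new Message(Performative.REFUSE, me, them, "empty request"));
            return replies;
        }
        replies.add(new Message(Performative.AGREE, me, them, request.content()));
        try {
            String result = task.apply(request.content());
            replies.add(new Message(Performative.INFORM, me, them, result));
        } catch (RuntimeException e) {
            replies.add(new Message(Performative.FAILURE, me, them, String.valueOf(e.getMessage())));
        }
        return replies;
    }

    public static void main(String[] args) {
        Message req = new Message(Performative.REQUEST, "planner", "expert", "plan trip");
        for (Message m : handle(req, c -> "done: " + c)) {
            System.out.println(m.performative() + " " + m.content());
        }
    }
}
```

The same handler could run inside a single agent that plays both roles, which matches the observation that an expert-assessor pattern does not always need separate agents.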

🗞 astra-langchain4j: Experiences Combining LLMs and Agent Programming

🧠 ArXiv: https://arxiv.org/abs/2601.21879