In-loop filtering is crucial for modern video compression, as it reduces visual artifacts and improves the viewing experience, but current methods often demand significant computational resources. Zhuoyuan Li, Jiacheng Li, Yao Li, and colleagues, from various institutions, present a new approach that leverages the efficiency of look-up tables to achieve comparable performance with far less complexity. Their research introduces LUT-ILF++, a framework that trains a streamlined neural network and then stores its outputs in easily accessible tables, allowing for rapid filtering during video coding without intensive calculations. This innovation not only reduces processing time and storage requirements, but also delivers substantial bitrate reductions, averaging nearly one percent for common test sequences, positioning it as a promising candidate for future video coding standards and wider adoption across devices.

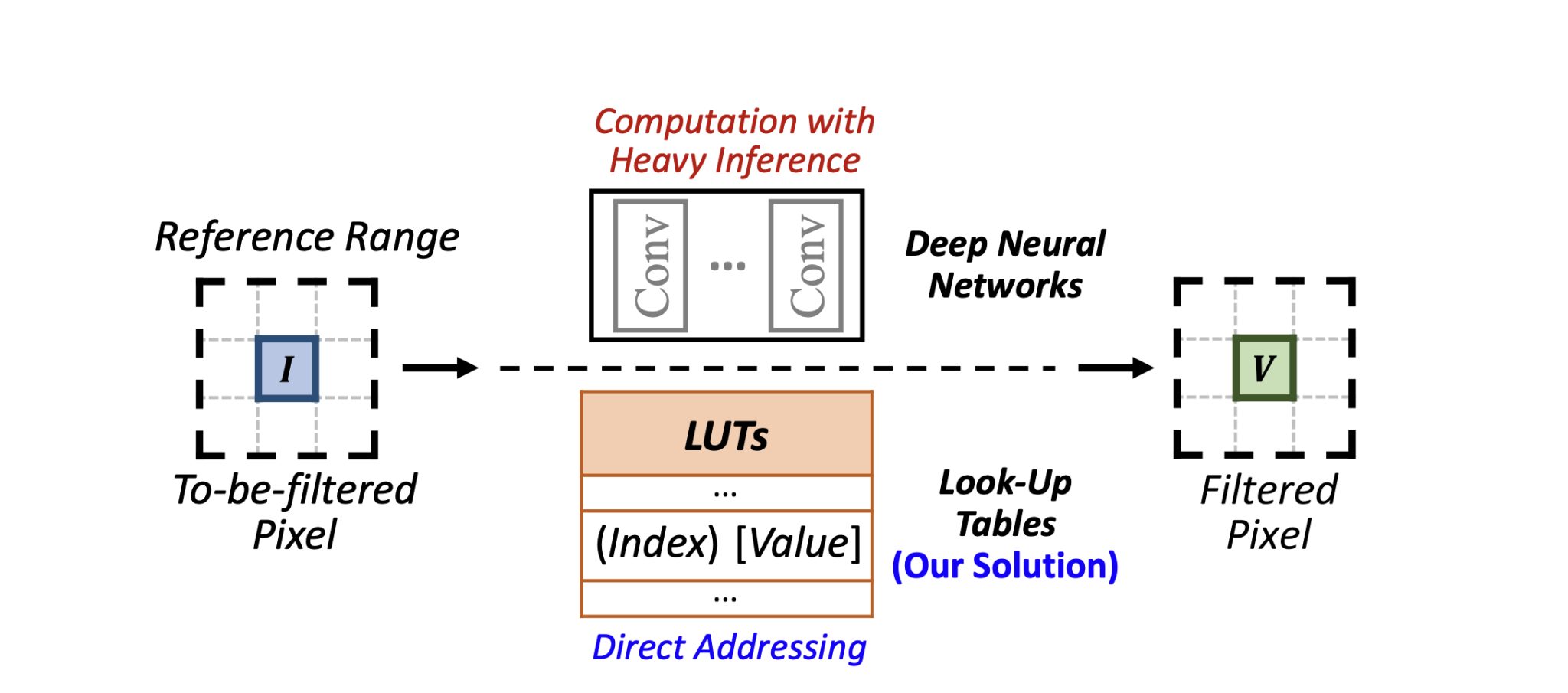

Deep neural networks (DNNs) add significant computational and time complexity to in-loop filtering (ILF), which raises the demand for dedicated hardware and hinders general use. To address this limitation, the research investigates a practical ILF solution built on look-up tables (LUTs). After a DNN with a restricted reference range is trained for ILF, all possible inputs are traversed and the resulting output values are cached in LUTs. During coding, the filtered pixel is obtained by locating the input pixels in the table and interpolating between the cached values, rather than by running computationally intensive inference. The work proposes a universal LUT-based approach as a practical alternative to DNN-based ILF.
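The cache-then-interpolate idea can be illustrated with a minimal sketch. It is not the authors' implementation: `toy_filter` is a hypothetical stand-in for a trained network, and the two-pixel reference range and the sampling interval of 16 are assumptions chosen to keep the table small.

```python
import numpy as np

STEP = 16                        # assumed sampling interval over 8-bit inputs
GRID = np.arange(0, 257, STEP)   # 17 sample points per input: 0, 16, ..., 256

def toy_filter(center, neighbor):
    """Hypothetical stand-in for a trained DNN with a 2-pixel reference range."""
    return 0.75 * center + 0.25 * neighbor

def build_lut(fn):
    """Traverse every sampled input pair once and cache the outputs."""
    c, n = np.meshgrid(GRID, GRID, indexing="ij")
    return fn(c.astype(np.float64), n.astype(np.float64))

def lut_filter(lut, center, neighbor):
    """Locate the surrounding sample points and interpolate bilinearly."""
    ci, cf = divmod(int(center), STEP)
    ni, nf = divmod(int(neighbor), STEP)
    wc, wn = cf / STEP, nf / STEP
    low = (1 - wn) * lut[ci, ni] + wn * lut[ci, ni + 1]
    high = (1 - wn) * lut[ci + 1, ni] + wn * lut[ci + 1, ni + 1]
    return (1 - wc) * low + wc * high

lut = build_lut(toy_filter)              # built once, offline
filtered = lut_filter(lut, 100, 37)      # table lookup instead of inference
```

Because the stand-in filter is linear, interpolation reproduces it exactly here; for a real network the interpolated value is only an approximation, which is one reason the tables are finetuned afterwards.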

LUT-ILF++ Reduces Video Coding Complexity

The research team developed an in-loop filtering framework, termed LUT-ILF++, to reduce the computational demands of neural network-based filtering in video coding. The core concept is to replace computationally expensive filters with look-up tables (LUTs) that store pre-calculated filter results, significantly reducing processing time and hardware requirements. The team achieved this by carefully designing how these tables store and access data, enabling efficient filtering with limited storage and allowing for customized storage optimization.

Experiments demonstrate that the framework achieves bitrate reductions under various coding conditions, and that LUT-ILF++ delivers coding performance comparable to the original DNN-based filter despite its much lower computational complexity. Several key innovations contribute to these gains. Multiple filtering LUTs cooperate through customized indexing mechanisms, which lets the filter take more reference pixels into account while limiting storage consumption. A cross-component indexing mechanism allows different color components to be filtered jointly, and a LUT compaction scheme prunes the tables to further reduce storage costs. The resulting implementation within the Versatile Video Coding (VVC) reference software demonstrates a substantial reduction in both time complexity and storage requirements compared to DNN-based solutions, paving the way for more efficient video compression technologies.
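A short calculation shows why storage drives these design choices: the number of entries in a table grows exponentially with the number of input pixels it indexes. The figures below (4 or 12 reference pixels, 17 sample points per input, 1 byte per entry) are illustrative assumptions, not values taken from the paper.

```python
def lut_entries(num_inputs, sample_points):
    """Entries in a table indexed by num_inputs values, each with sample_points levels."""
    return sample_points ** num_inputs

def lut_bytes(num_inputs, sample_points, bytes_per_entry=1):
    """Storage for one table, assuming a fixed number of bytes per cached output."""
    return lut_entries(num_inputs, sample_points) * bytes_per_entry

full = lut_entries(4, 256)            # 4 pixels, every 8-bit value cached
sampled = lut_entries(4, 17)          # same 4 pixels, 17 sample points each
three_small = 3 * lut_entries(4, 17)  # three cooperating 4-input tables
one_large = lut_entries(12, 17)       # one table covering all 12 pixels

print(f"full 4-input table:    {full:,} entries")
print(f"sampled 4-input table: {sampled:,} entries")
print(f"three small tables:    {three_small:,} entries")
print(f"one 12-input table:    {one_large:,} entries")
```

Under these assumptions, sampling shrinks a 4-input table from about 4.3 billion entries to 83,521, and three cooperating 4-input tables cover 12 reference pixels with 250,563 entries, where a single 12-input table would be impractically large.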

Deep Neural Networks Design Video Filtering LUTs

This research details how DNNs can be used to design LUT-based in-loop filters for video compression. Crucially, the LUTs are finetuned after the trained network is converted into tables, to recover performance lost in the conversion; the most advanced model, LUT-ILF++, employs a two-step finetuning process. The research also uses non-uniform sampling to improve the accuracy of the LUT entries, while LUT compaction and pruning shrink the tables and significantly reduce storage requirements. LUT-based filters are much faster than DNN-based filters, and the research provides a detailed analysis of the reduction in computational operations; the filters also have a lower peak memory footprint than a full VVC decoder. Ablation studies confirm that finetuning the LUTs after conversion is essential for maintaining performance, and that the two-step process in LUT-ILF++ provides further gains.
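Non-uniform sampling can be sketched in one dimension. The summary does not describe the sampling scheme actually used, so the sample points and `toy_response` below are hypothetical: the table is indexed by a pixel difference and sampled densely near zero, where small differences are assumed to be most common.

```python
import numpy as np

# Hypothetical sample points: dense near 0, sparse toward the extremes.
POINTS = np.array(
    [-255, -128, -64, -32, -16, -8, -4, 0, 4, 8, 16, 32, 64, 128, 255],
    dtype=np.float64,
)

def toy_response(diff):
    """Hypothetical stand-in for a learned, nonlinear filter response."""
    return diff / (1.0 + np.abs(diff) / 8.0)

LUT = toy_response(POINTS)   # one cached output per sample point

def lookup(diff):
    """Find the bracketing sample points, then interpolate linearly."""
    hi = int(np.clip(np.searchsorted(POINTS, diff, side="right"),
                     1, len(POINTS) - 1))
    lo = hi - 1
    w = (diff - POINTS[lo]) / (POINTS[hi] - POINTS[lo])
    return (1 - w) * LUT[lo] + w * LUT[hi]

approx = lookup(6.0)          # interpolated between the entries at 4 and 8
exact = toy_response(6.0)     # what direct evaluation would return
```

With uneven spacing the index can no longer be computed by a simple division, so the sketch uses a binary search (`np.searchsorted`) to find the bracketing entries; the same 15 entries give finer resolution where the response changes fastest.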

Efficient Video Coding with Look-Up Table Filtering

Experimental results demonstrate that LUT-ILF++ achieves bitrate reductions under different coding configurations, compared to existing methods. This indicates a substantial improvement in coding efficiency while maintaining low complexity, offering a practical pathway for integrating neural network-based tools into advanced video coding standards. The authors acknowledge that the storage cost remains a consideration, and future work will focus on further refining the system by incorporating additional information and extending the approach to other coding tools like motion estimation and resampling.

🗞 In-Loop Filtering Using Learned Look-Up Tables for Video Coding

🧠 ArXiv: https://arxiv.org/abs/2509.09494