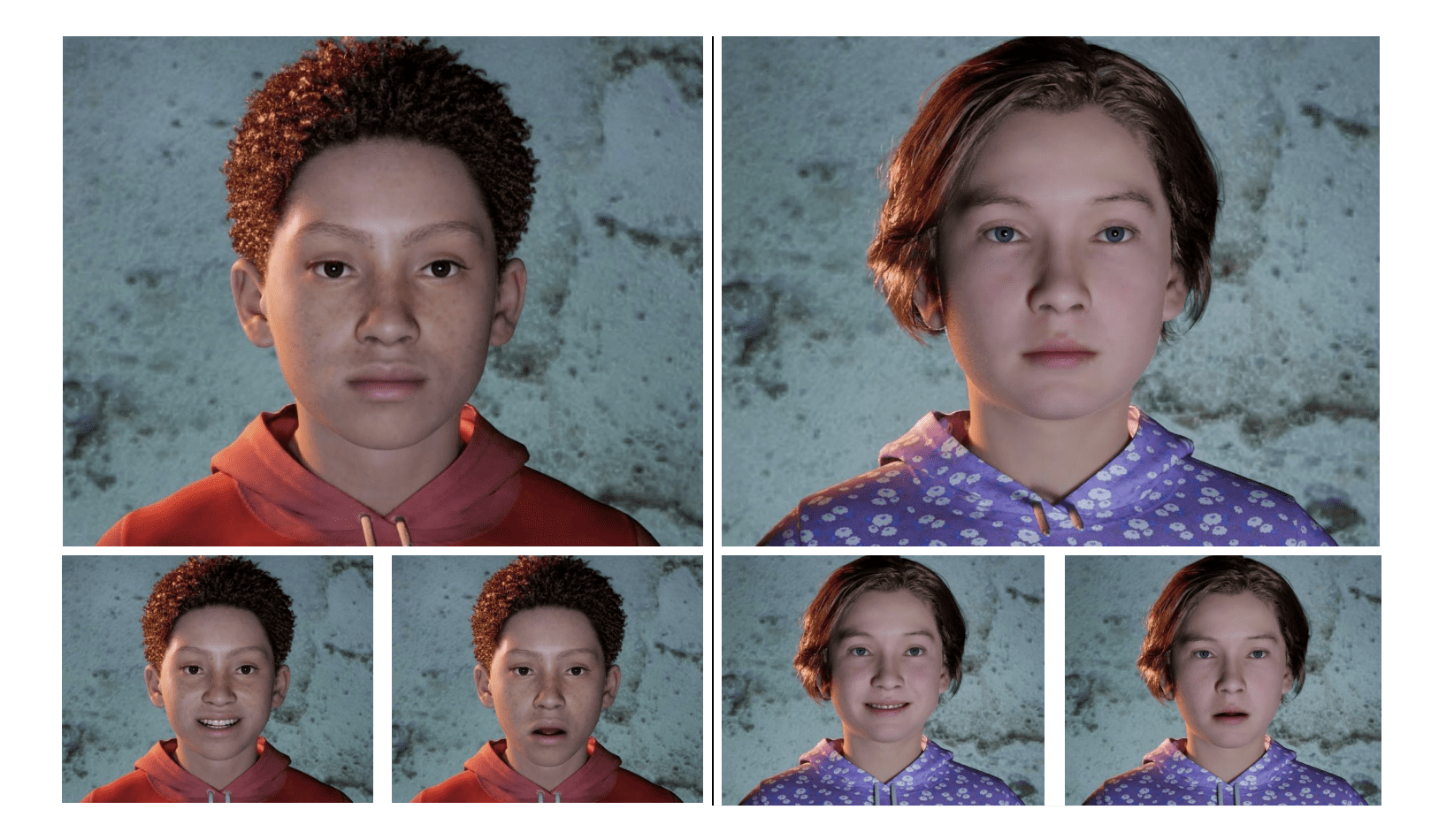

Research demonstrates real-time generation of emotionally expressive, photorealistic child avatars using Unreal Engine 5 and Omniverse Audio2Face. Participants reliably identified joy and sadness, but anger recognition diminished without accompanying audio. Perceived facial realism increased when audio was removed, indicating challenges with audiovisual synchronisation for believable simulations.

Creating convincingly emotive artificial intelligence remains a significant challenge, particularly in sensitive applications that demand nuanced interpersonal communication. Current systems often lack the subtle non-verbal cues crucial for establishing rapport and trust, which limits their effectiveness in areas such as virtual reality training for high-stakes scenarios. Researchers at SimulaMet, including Pegah Salehi, Sajad Amouei Sheshkal, Vajira Thambawita, and Pål Halvorsen, address this limitation in their work, “From Flat to Feeling: A Feasibility and Impact Study on Dynamic Facial Emotions in AI-Generated Avatars”. Their study investigates a real-time architecture that integrates Unreal Engine 5’s MetaHuman rendering with Omniverse Audio2Face, a system that translates vocal prosody (the rhythm, stress and intonation of speech) into corresponding facial expressions. Using a between-subjects study, the team assesses the perceptual impact of these dynamically rendered emotions on photorealistic child avatars, offering insights into the design of more effective and empathetic virtual interactions.

The convergence of real-time rendering and artificial intelligence now enables the creation of photorealistic child avatars capable of displaying nuanced emotional expressions, a development with potential applications in education, therapy, and human-computer interaction. Recent research details an architecture integrating Unreal Engine 5, a leading real-time 3D creation tool, with Nvidia’s Omniverse Audio2Face, a system that animates facial expressions from audio input. This combination addresses a recognised deficiency in current avatar technology, where emotionally static or unconvincing displays limit their effectiveness, particularly in sensitive contexts requiring empathetic engagement.

The system translates vocal prosody into corresponding facial movements on the digital avatar. To achieve the responsiveness that interactive applications need, the researchers implemented a distributed computing framework that separates computationally intensive tasks, such as language processing and speech synthesis, from the graphically demanding rendering process. This separation supports low-latency interaction in both traditional desktop experiences and immersive virtual reality environments.
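The separation of speech generation from rendering can be pictured as a producer-consumer pipeline. The sketch below is illustrative only and is not the authors' implementation: two threads in one process stand in for separate machines, and every name (`AudioChunk`, `speech_worker`, `render_worker`, `run_pipeline`) is hypothetical. No real speech-synthesis, Audio2Face, or Unreal Engine calls are made.

```python
import queue
import threading
from dataclasses import dataclass


@dataclass
class AudioChunk:
    utterance_id: int
    text: str
    samples: bytes  # placeholder for synthesised speech audio


_SENTINEL = None  # marks the end of the stream


def speech_worker(replies, out_q):
    """Stand-in for the language-processing and speech-synthesis node."""
    for i, text in enumerate(replies):
        # Assumption: a real system would call a speech synthesiser here.
        fake_audio = text.encode("utf-8")
        out_q.put(AudioChunk(i, text, fake_audio))
    out_q.put(_SENTINEL)


def render_worker(in_q, rendered):
    """Stand-in for the rendering node that animates the avatar's face."""
    while True:
        chunk = in_q.get()
        if chunk is _SENTINEL:
            break
        # Assumption: a real system would drive facial animation from the audio.
        rendered.append((chunk.utterance_id, len(chunk.samples)))


def run_pipeline(replies):
    """Run both stages concurrently, linked only by a bounded queue."""
    q = queue.Queue(maxsize=8)  # bounded so the producer cannot run far ahead
    rendered = []
    producer = threading.Thread(target=speech_worker, args=(replies, q))
    consumer = threading.Thread(target=render_worker, args=(q, rendered))
    producer.start()
    consumer.start()
    producer.join()
    consumer.join()
    return rendered
```

Because the two stages share nothing but the queue, the rendering loop never waits on language processing beyond the arrival of the next audio chunk, which is the property a distributed design of this kind aims for.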

A controlled experiment with a between-subjects design assessed the perceptual impact of these emotionally expressive avatars. Separate groups of participants evaluated either a condition presenting both audio and visual information or one displaying only the visual avatar. Ratings focused on emotional clarity, perceived facial realism, and the degree of empathy elicited by avatars expressing joy, sadness, and anger. The quantitative data gathered from these evaluations support a rigorous assessment of the system’s effectiveness.
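In a between-subjects design, each participant experiences exactly one condition. A minimal sketch of balanced random assignment follows; the function name, the condition labels, and the seed are assumptions for illustration, and the article does not describe the study's actual allocation procedure.

```python
import random


def assign_between_subjects(participant_ids, conditions, seed=0):
    """Randomly assign each participant to exactly one condition.

    Shuffling first and then cycling through the conditions keeps group
    sizes within one participant of each other.
    """
    rng = random.Random(seed)  # fixed seed makes the allocation reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    return {
        pid: conditions[i % len(conditions)]
        for i, pid in enumerate(shuffled)
    }


# Hypothetical usage with two conditions matching those described above.
groups = assign_between_subjects(range(10), ["audio+visual", "visual-only"])
```

With ten participants and two conditions, each group receives five people, and no participant sees both conditions.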

Results show that the generated avatars convey emotions successfully, with high rates of correct identification for both joy and sadness. However, recognition of anger diminished significantly when the avatar was shown without audio, highlighting the importance of congruent vocal cues in portraying high-arousal emotions. This suggests that visual information alone may be insufficient to communicate some emotional states, particularly those characterised by heightened energy.

The study also reveals a complex relationship between emotional clarity and perceived realism. Removing the audio component reduced recognition accuracy for anger but increased ratings of facial realism. This suggests that even subtle asynchrony between audio and visual elements can reduce the overall believability of the avatar. The findings underscore the need for careful calibration and precise synchronisation of audio and visual components to create a convincing and emotionally resonant experience. They also confirm the technical feasibility of generating emotionally expressive avatars for improved non-verbal communication.

👉 More information

🗞 From Flat to Feeling: A Feasibility and Impact Study on Dynamic Facial Emotions in AI-Generated Avatars

🧠 DOI: https://doi.org/10.48550/arXiv.2506.13477