Quantum‑centric research has long struggled with a lack of shared, trustworthy data. This week, a small but ambitious start‑up announced a solution that could change this. Aqora.io, based in London, unveiled a public hub that lets researchers upload, version, and cite datasets tailored for quantum algorithms, from MaxCut graphs to variational quantum eigensolver (VQE) Hamiltonians. The launch, presented at the Quantum Tech Conference in March, responds to a growing demand for reproducible benchmarks in a field that has historically relied on bespoke, hard-to-share data.

A Marketplace for Quantum Benchmarks

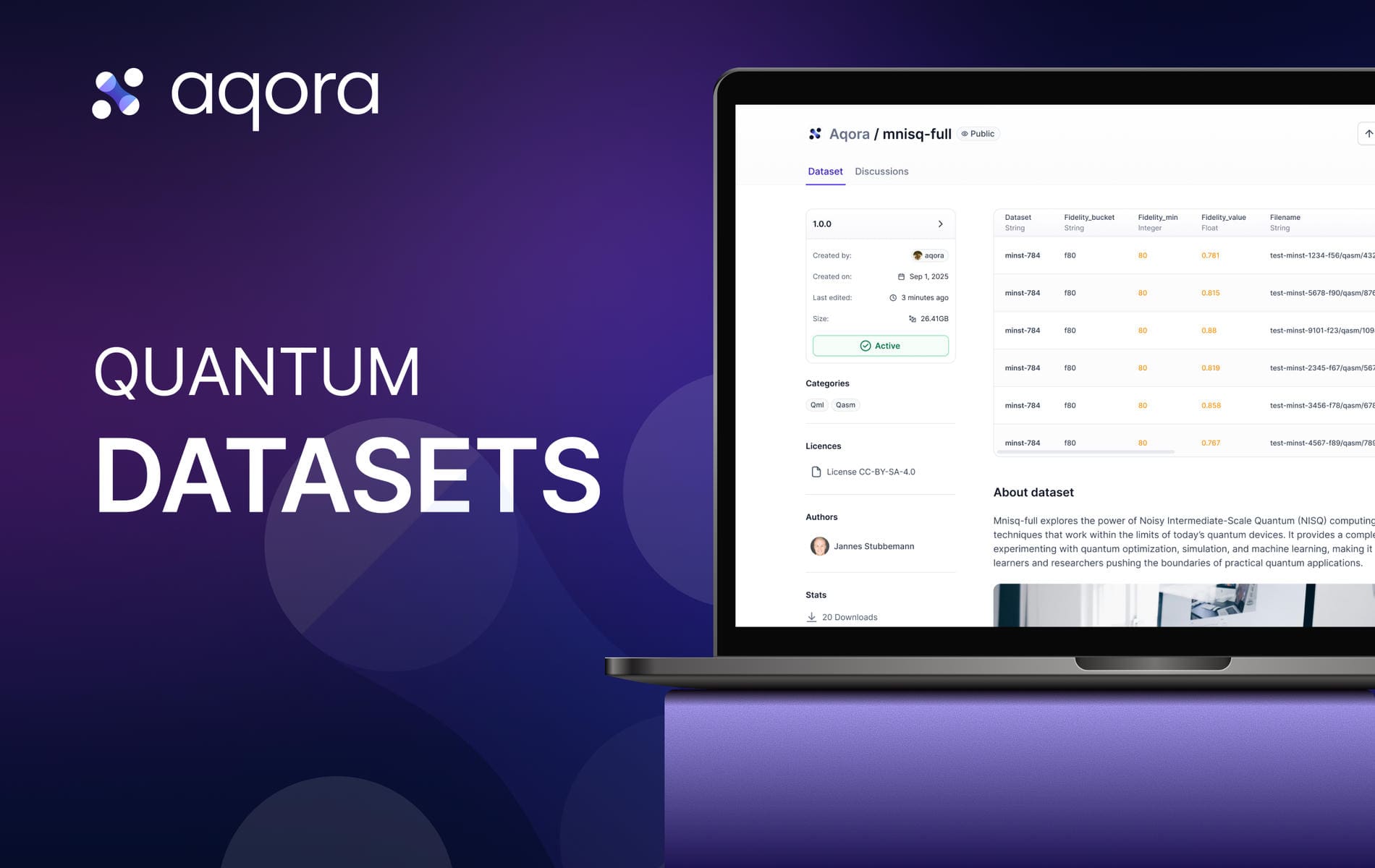

Aqora’s Datasets Hub is more than a repository; it is a structured marketplace where data is treated as a first‑class research asset. The platform hosts a range of curated collections. For instance, the “HamLib Binary Optimization” set contains 1.29 gigabytes of graph instances in Parquet format, is licensed under CC‑BY‑SA‑4.0, and is available in version 1.0.0. The “MNISQ Quantum Machine Learning” dataset, a 28.12‑gigabyte collection of image‑based quantum circuits, carries the same license and version tag. Smaller, domain‑specific collections such as “Clinical Trial PBC Pharmacy” (19.22 kilobytes) and “Pharmacometric Events Pharmacy” (22.21 kilobytes) demonstrate the hub’s flexibility for both large‑scale and niche data.

The hub’s architecture mirrors that of classical machine‑learning data platforms, but with quantum‑specific extensions. Datasets can be loaded directly into Python using familiar libraries. A quick snippet shows how a researcher can pull a dataset into pandas:

import pandas as pd

URI = "aqora://stubbi/pharmacometric-events/v1.0.0"

df = pd.read_parquet(URI)
print(df.head())

For those preferring Polars, a more efficient column‑filtering pipeline is available:

import polars as pl
from aqora_cli.pyarrow import dataset

# Scan lazily: the filter and column selection only run when .collect() is called.
df = pl.scan_pyarrow_dataset(dataset("bernalde/hamlib-binary-optimization", "v1.0.0"))

small_max3sat = (
    df.filter((pl.col("problem") == "max3sat") & (pl.col("n_qubits") < 100))
    .select(["hamlib_id", "collection", "instance_name", "n_qubits", "n_terms", "operator_format"])
    .collect()
)

print(small_max3sat.head(10))

These examples illustrate how the hub removes the friction of data ingestion, allowing researchers to focus on algorithm development rather than file‑format gymnastics.

From Code to Citation: The Reproducibility Protocol

Reproducibility has been a persistent bottleneck in quantum computing, where subtle differences in dataset versions or random seeds can skew results. Aqora addresses this by enforcing immutable dataset versions. Every upload is tagged with a publisher/name@version string, such as “stubbi/pharmacometric-events@v1.0.0”. The hub’s URI scheme guarantees that any user who pulls the same URI will receive identical bytes, regardless of local file changes or network hiccups.
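To make the naming convention concrete, here is a minimal sketch that splits a pinned reference into its parts and rebuilds the aqora:// URI used in the loading examples. The mapping between the two forms is inferred only from the examples quoted in this article; the helper names (`DatasetRef`, `parse_ref`) and the character rules in the pattern are assumptions, not part of Aqora's tooling.

```python
import re
from typing import NamedTuple

# Pattern inferred from the example "stubbi/pharmacometric-events@v1.0.0";
# the hub's real validation rules may differ (assumption).
_REF_PATTERN = re.compile(
    r"^(?P<publisher>[\w.-]+)/(?P<name>[\w.-]+)@(?P<version>[\w.-]+)$"
)


class DatasetRef(NamedTuple):
    """Hypothetical holder for the three parts of a pinned reference."""
    publisher: str
    name: str
    version: str

    def uri(self) -> str:
        """Build the aqora:// form seen in the loading snippets."""
        return f"aqora://{self.publisher}/{self.name}/{self.version}"


def parse_ref(ref: str) -> DatasetRef:
    """Split 'publisher/name@version', rejecting references with no version."""
    match = _REF_PATTERN.match(ref)
    if match is None:
        raise ValueError(f"not a pinned dataset reference: {ref!r}")
    return DatasetRef(**match.groupdict())


ref = parse_ref("stubbi/pharmacometric-events@v1.0.0")
print(ref.uri())  # aqora://stubbi/pharmacometric-events/v1.0.0
```

Rejecting unversioned references up front is the point of the sketch: a script that cannot run without a version cannot silently drift to newer data.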

Aqora also encourages best‑practice logging in research notebooks. A minimal reproducible setup might include the following in a script header:

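import os
import random

import numpy as np
import pandas as pd

SEED = 42
URI = "aqora://stubbi/pharmacometric-events/v1.0.0"

# Setting PYTHONHASHSEED here only affects child processes; to fix hash
# randomisation in this interpreter, set it before Python starts.
os.environ["PYTHONHASHSEED"] = str(SEED)
random.seed(SEED)
np.random.seed(SEED)

# Seed Qiskit's global RNG where this module is available.
try:
    from qiskit.utils import algorithm_globals
    algorithm_globals.random_seed = SEED
except ImportError:
    pass

# Load the pinned dataset and shuffle its rows deterministically.
df = pd.read_parquet(URI).sample(frac=1.0, random_state=SEED).reset_index(drop=True)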

By pinning the dataset version, the seed, and even the code commit hash, a researcher gives another team everything needed to replicate the exact experiment. The hub’s metadata (license, tags, size, and version) further aids traceability, eliminating the need for researchers to email large files or navigate obscure cloud links.
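One way to keep those pinned values together is a small run manifest saved next to the results. The sketch below is an illustration using only the Python standard library, not an Aqora feature: the function names and manifest keys are hypothetical, and the optional SHA-256 digest is a local check on a downloaded copy rather than anything the hub is documented to provide.

```python
import hashlib
import json
import subprocess
from pathlib import Path
from typing import Optional


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def current_commit() -> str:
    """Return the current git commit hash, or 'unknown' if git cannot supply one."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
        return result.stdout.strip()
    except (OSError, subprocess.CalledProcessError):
        return "unknown"


def build_manifest(dataset_uri: str, seed: int,
                   local_copy: Optional[Path] = None) -> dict:
    """Collect the values a reader needs to re-run the experiment."""
    manifest = {
        "dataset_uri": dataset_uri,
        "seed": seed,
        "code_commit": current_commit(),
    }
    if local_copy is not None:
        manifest["sha256"] = sha256_of(local_copy)
    return manifest


manifest = build_manifest("aqora://stubbi/pharmacometric-events/v1.0.0", seed=42)
print(json.dumps(manifest, indent=2))
```

Committing the printed JSON alongside a paper's results lets a reader confirm all three pins at a glance.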

Democratising Quantum Data for All

One of Aqora’s core motivations is to level the playing field for smaller labs and independent researchers. Until now, high‑quality quantum datasets have been scattered across personal repositories, institutional servers, or proprietary vendor portals, often lacking consistent metadata or versioning. The hub’s public‑private dual model allows a team to keep a dataset private during development, then make it public once a paper is ready, without altering the cited version.

The platform’s support for multiple formats (CSV, Parquet, and JSON) ensures compatibility with existing workflows. Uploading a directory triggers an automatic conversion into a single Parquet file, a format that balances compression with fast read times. Large datasets, such as the 28‑gigabyte MNISQ collection, can be uploaded via the command‑line interface, which supports multipart uploads for files that exceed typical browser limits.

The founders (Stubbi, co‑founder and CEO; Julian, a software engineer; Antoine, a platform engineer; and elki, a software engineer) highlighted that the hub is built on open‑source principles. The platform does not currently issue DOIs for each dataset version, but it plans to add DOI support, which would further cement its role as a scholarly resource.

Getting Started: From Upload to Publication

Researchers wishing to contribute follow a straightforward process. First, they create a dataset page on the Aqora website, providing a brief README that describes the data’s purpose, column definitions, and any limitations. A license (typically given as an SPDX identifier such as CC‑BY‑SA‑4.0) and a set of tags (e.g., “QAOA”, “VQE”, “OpenQASM”) are added to aid discoverability.
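Before filling in the dataset page, a team could run a quick local sanity check on the metadata it is about to enter. The following sketch is purely illustrative: `DatasetMetadata`, its fields, the 50-character README threshold, and the short license list are all assumptions made for this example and do not reflect the hub's actual schema or validation. The README text in the usage example is likewise invented.

```python
import re
from dataclasses import dataclass, field
from typing import List

# A deliberately incomplete sample of SPDX identifiers; the full list is
# maintained by the SPDX project.
KNOWN_LICENSES = {"CC-BY-4.0", "CC-BY-SA-4.0", "CC0-1.0", "MIT", "Apache-2.0"}


@dataclass
class DatasetMetadata:
    """Hypothetical container for the fields described in the article."""
    slug: str       # e.g. "alice/graphzoo-3reg"
    license: str    # SPDX identifier
    readme: str     # purpose, column definitions, limitations
    tags: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return readable issues; an empty list means nothing was flagged."""
        issues = []
        if not re.fullmatch(r"[\w.-]+/[\w.-]+", self.slug):
            issues.append("slug should look like 'publisher/name'")
        if self.license not in KNOWN_LICENSES:
            issues.append(f"unrecognised license: {self.license}")
        if len(self.readme.strip()) < 50:  # arbitrary illustrative threshold
            issues.append("README is too short to cover columns and limitations")
        if not self.tags:
            issues.append("add at least one tag for discoverability")
        return issues


meta = DatasetMetadata(
    slug="alice/graphzoo-3reg",
    license="CC-BY-SA-4.0",
    # Invented README text, for illustration only.
    readme="3-regular graph instances for QAOA/MaxCut benchmarks; "
           "columns: graph_id, n_nodes, edges.",
    tags=["QAOA", "MaxCut"],
)
print(meta.problems())  # []
```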

Next, the data files, whether CSV, Parquet, or JSON, are uploaded via the web interface or the CLI. For example, a team might run:

pip install -U aqora-cli
aqora login
aqora datasets upload alice/graphzoo-3reg --version 1.0.0 data.csv

Once uploaded, the dataset is immediately available to anyone with access, who can query it using the URI scheme. If a team later decides to make a private dataset public, they simply toggle the visibility flag; the immutable version string remains unchanged, preserving citation stability.

The hub also offers a “best‑practice” checklist for papers and notebooks, reminding authors to log the dataset URI, the code commit hash, and any random seeds used. By publishing the evaluation script alongside the dataset, researchers create a self‑contained reproducibility package that can be re‑run by anyone with access to the hub.

Looking Ahead: A Culture of Shared Quantum Knowledge

Aqora’s launch arrives at a time when quantum hardware is rapidly evolving, yet the software ecosystem lags behind in standardisation. By providing a central, versioned, and citable repository for quantum‑relevant data, the platform addresses a key bottleneck in the field. It echoes the success of classical data hubs, where shared benchmarks accelerated progress in computer vision and natural language processing.

The impact could ripple beyond academia. Quantum-cloud providers such as IBM Quantum and Rigetti already host limited benchmark suites. Integrating Aqora’s datasets into their platforms would provide users with a richer, community-driven set of test cases, fostering more robust algorithm comparisons. Start‑ups developing quantum‑machine‑learning (QML) models could rapidly prototype on diverse circuit corpora, speeding time‑to‑market.

In the broader scientific context, reproducibility is increasingly demanded by journals and funding agencies. A hub that guarantees immutable data versions aligns with these expectations, potentially becoming a standard requirement for publication in quantum‑computing journals. As the ecosystem matures, the addition of DOI support and richer metadata will only strengthen its credibility.

For now, Aqora’s Datasets Hub offers a practical, well‑engineered solution to a long‑standing pain point. By turning data into a first‑class research asset, it opens the door for more rigorous, collaborative, and ultimately faster progress in the quantum domain.

Frequently Asked Questions

What is the Aqora Datasets Hub?

The Aqora Datasets Hub is a platform designed for quantum researchers to publish, discover, and reuse datasets. It supports reproducible benchmarks and quantum machine learning experiments, offering datasets like QAOA/MaxCut graphs, VQE Hamiltonians, and OpenQASM circuits.

How can datasets be loaded into pandas using the Aqora Datasets Hub?

Datasets can be loaded into pandas through an aqora:// URI. For example, you can import pandas, define the URI, and pass it to pd.read_parquet to load the dataset directly into a DataFrame.

Why is the Aqora Datasets Hub useful for quantum researchers?

The Aqora Datasets Hub provides a centralised place for quantum researchers to share and access datasets, so that results can be reproduced on different machines and at later dates. It supports various dataset types and allows for private or public sharing, making it easier to collaborate and validate results.