Arthur D. Little is urging business leaders to temper growing optimism surrounding the development of quantum computing, despite billions of dollars in public and private investment and increasingly frequent announcements of technological milestones. Revisiting their 2022 “Unleashing the Business Potential of Quantum Computing” study, the analysis firm finds that while significant progress has been made toward a practical, fault-tolerant quantum computer, substantial hurdles remain before widespread commercialization becomes a reality. In January 2025, MIT Technology Review stated that “Useful quantum computing is inevitable—and increasingly imminent,” a sentiment echoed by Google CEO Sundar Pichai, who remarked, “I would say quantum is where AI was five years ago. So, I think, in five years from now we’ll be going through a very exciting phase in quantum.” However, Arthur D. Little argues that a critical and measured approach is still essential for executives seeking to position their businesses for a quantum future.

Blue Shift Report: Assessing Quantum Computing Progress

Nearly every month brings announcements of new milestones in quantum computing, but is the optimism wholly justified? Arthur D. Little’s (ADL’s) latest Blue Shift report, an update to its 2022 study, examines the progress made, the promises being touted, and the need for critical assessment as the field matures. Public and private investment in quantum computing continues to surge, with governments in the UK, China, Europe, and the US committing billions, yet a clear, unbiased picture of the technology’s current business prospects remains elusive, even though much of the research takes place in the public domain. The report acknowledges a wave of optimism fueled by announcements of advancements, but cautions that major technological hurdles still need to be overcome. The past three years have witnessed significant strides toward a practical fault-tolerant quantum computing (FTQC) device, a machine capable of outperforming conventional high-performance computers (HPCs) on specific, complex problems.

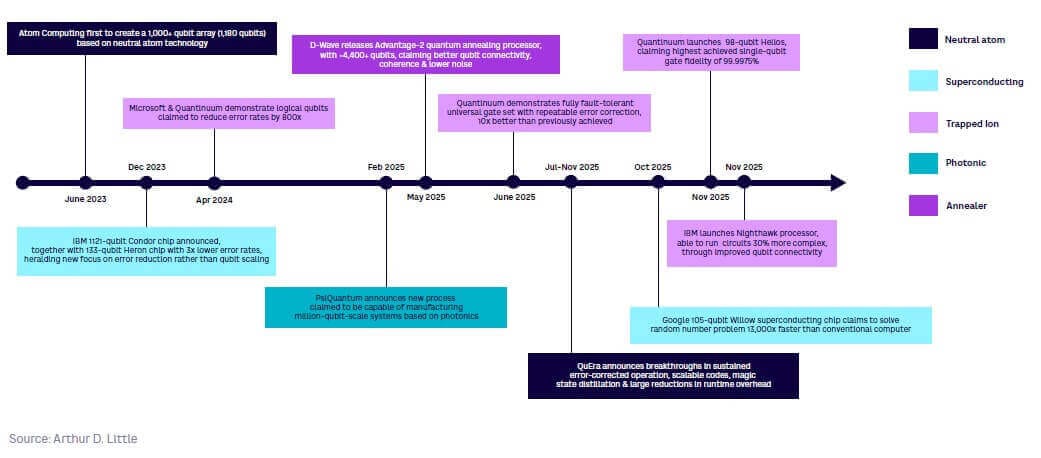

Three years ago, the primary focus was on scaling the number of physical qubits and improving error-correction techniques; now, the emphasis has largely shifted toward developing FTQC. Generally, “to be usable in a broad range of applications, an FTQC would require at least 100 logical qubits, with many of the more valuable applications demanding thousands,” according to the report. That translates into thousands to millions of physical qubits, depending on the underlying technology. Recent achievements are noteworthy, such as Quantinuum/Microsoft’s improvements in error correction and Google’s 105-qubit Willow chip, which demonstrated error rates that fall as the error-corrected qubit grows in size. Google also implemented magic state cultivation in December 2025, which enables the synthesis of a logical T gate, a cornerstone feature for achieving exponential speedups in quantum algorithms. However, the path to fully realized FTQC remains challenging.
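To give a rough sense of the arithmetic behind the report’s range of thousands to millions of physical qubits, the sketch below assumes a rotated surface code, in which a logical qubit of code distance d uses d² data qubits plus d² − 1 measurement qubits. The choice of code, the function names, and the distances are illustrative assumptions rather than figures from the report; other codes and hardware platforms have different overheads.

```python
def physical_per_logical(distance: int) -> int:
    """Physical qubits in one rotated surface-code patch (assumed code family)."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance must be an odd integer >= 3")
    data_qubits = distance ** 2          # hold the encoded state
    measure_qubits = distance ** 2 - 1   # used for repeated error checks
    return data_qubits + measure_qubits


def total_physical(logical_qubits: int, distance: int) -> int:
    """Total physical qubits, ignoring routing space and magic-state factories."""
    return logical_qubits * physical_per_logical(distance)


if __name__ == "__main__":
    # Distances 7, 15, and 25 are hypothetical choices for illustration only.
    for logical in (100, 1000):
        for d in (7, 15, 25):
            print(f"{logical} logical qubits at distance {d}: "
                  f"{total_physical(logical, d):,} physical qubits")
```

Under these assumptions, 100 logical qubits at distance 7 come to 9,700 physical qubits, while 1,000 logical qubits at distance 25 come to roughly 1.25 million. Both figures sit inside the range the report describes.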

IBM’s Nighthawk processor, presented in November 2025, showcased improved qubit connectivity and scalable technology. In neutral atom technology, Atom Technology’s array of over 1,000 qubits in mid-2023 represented progress, though the array lacked active control. Other non-superconducting approaches are advancing as well: PsiQuantum, in collaboration with GlobalFoundries, has demonstrated the ability to mass-produce quantum chips based on photonics. Even quantum annealers, with their more limited applications, are maturing: D-Wave has announced advancements in its Advantage2 system and claimed quantum advantage on a specific hard spin-simulation problem, a claim that has prompted debate. The integration of AI with QC also holds promise, since AI could aid in hardware calibration and algorithm design. In the other direction, using QC to enhance AI faces a significant obstacle in the massive data-loading requirement. The report states that “AI has the potential to help tackle some of QC’s most difficult challenges,” but this integration remains in its early stages.

NISQ Devices & The Pursuit of Fault-Tolerant QC

The current quantum computing environment is characterized by a surge of activity surrounding noisy intermediate-scale quantum (NISQ) devices, even as the ultimate goal of fault-tolerant quantum computing (FTQC) remains a significant challenge. Scaling the number of physical qubits initially dominated the field, but the focus has shifted toward achieving the stability and error correction necessary for practical applications. Forming an unbiased assessment of progress nonetheless proves difficult, despite the transparency of much research and development. Three years ago, the emphasis was on simply increasing qubit counts and refining error-correction techniques, with some optimism that NISQ devices could be scaled sufficiently for limited utility. Most algorithms developed for these early machines, however, remain confined to fundamental physics simulations, distant from practical industrial needs. Quantinuum/Microsoft have achieved “significant improvements in repeatable error correction, logical qubit stability, and gate fidelity,” while Google’s 105-qubit Willow chip, demonstrated in 2024, showed that increasing the size of error-corrected qubits can reduce the overall error rate, a critical step for scalability. Beyond superconducting qubits, alternative technologies are also making headway.
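The idea that a larger error-corrected qubit can have a lower error rate is often summarized with a suppression factor: when the hardware operates below the error-correction threshold, each increase of the code distance by 2 divides the logical error rate by that factor. The sketch below is a minimal model of this behavior. The starting error rate and the suppression factor are hypothetical values chosen for illustration, not measurements from Google or from the report.

```python
def logical_error_rate(distance: int, eps_d3: float, suppression: float) -> float:
    """Logical error per cycle at an odd code distance, given the rate at distance 3."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance must be an odd integer >= 3")
    steps = (distance - 3) // 2           # number of distance increases of 2
    return eps_d3 / suppression ** steps


def min_distance(target: float, eps_d3: float, suppression: float) -> int:
    """Smallest odd distance whose modeled error rate is at or below the target."""
    if target <= 0.0:
        raise ValueError("target must be positive")
    if suppression <= 1.0:
        # At or above threshold, adding qubits does not reduce the error rate.
        raise ValueError("no distance reaches the target unless suppression > 1")
    distance = 3
    while logical_error_rate(distance, eps_d3, suppression) > target:
        distance += 2
    return distance


if __name__ == "__main__":
    # Hypothetical inputs: 1% error at distance 3, suppression factor of 2.
    for d in (3, 5, 7):
        print(d, logical_error_rate(d, eps_d3=0.01, suppression=2.0))
    print(min_distance(target=1e-6, eps_d3=0.01, suppression=2.0))
```

With a suppression factor below 1, the same formula makes the error rate grow with size, which is why demonstrating suppression above 1 matters for scalability.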

“I would say quantum is where AI was five years ago. So, I think, in five years from now we’ll be going through a very exciting phase in quantum.”

Sundar Pichai, Google CEO

Superconducting, Trapped Ion & Emerging Qubit Technologies

Progress in quantum technology has accelerated considerably over the last twelve months, with multiple approaches vying for dominance. Currently, four technologies are being actively pursued for gate-based quantum computers: superconducting, trapped ion, neutral atom, and photonics. In neutral atoms, QuEra demonstrated breakthroughs in error correction and scalable codes between December 2023 and November 2025, further solidifying the potential of that approach. Photonics represents a fundamentally different development path, focused on fault tolerance from the outset. Although quantum annealers offer more limited applications as special-purpose optimizers, they represent the most mature QC technology in terms of real-world use.

Quantinuum, Google & IBM’s Recent QC Milestones

The pursuit of practical quantum computing continues to accelerate, with recent advancements from Quantinuum, Google, and IBM demonstrating tangible progress toward overcoming significant technical hurdles. While widespread commercial application remains distant, the pace of innovation suggests that the initial promise of quantum computation is not merely theoretical; it is increasingly reflected in demonstrable hardware and software improvements with potential for real-world impact. Google’s Willow chip showed that the overall error rate can fall as error-corrected qubits grow in size, which is key for the viability of scaling up quantum processors. IBM has focused on improving qubit connectivity with its Nighthawk processor, presented in November 2025, which demonstrated that existing, affordable technology could scale alongside quantum processor development. In December 2025, Google also implemented magic state cultivation, which enables the synthesis of a logical T gate. Beyond hardware, collaborative efforts are also yielding results.

In June 2025, IBM and RIKEN in Japan demonstrated a hybrid quantum/conventional solution, running on IBM’s Heron quantum computer and RIKEN’s Fujitsu Fugaku supercomputer to perform electronic-structure simulations using up to 77 physical qubits and a record 10,570 quantum gates. NVQLink, which Nvidia announced in October 2025, is a high-throughput, low-latency interconnect for quantum processing units that enables FTQC vendors to implement real-time error correction.

useful quantum computing is inevitable – and increasingly imminent.

MIT Technology Review

Hybrid Quantum/Conventional Computing & AI Integration

The pursuit of practical quantum computing often overshadows a potentially more immediate path: integrating quantum processors with existing conventional high-performance computers (HPCs). While a fully fault-tolerant quantum computer remains a long-term goal, hybrid approaches are already yielding demonstrable results, blurring the lines between the classical and quantum worlds and accelerating progress beyond what either could achieve alone. This isn’t simply about bolting a quantum processor onto a supercomputer; it’s about intelligently distributing computational tasks, leveraging the strengths of each architecture to overcome limitations. Recent demonstrations illustrate this synergy. The electronic-structure simulation that IBM and RIKEN ran across a Heron quantum computer and the Fugaku supercomputer highlights the potential for quantum computers to handle specific, computationally intensive portions of a larger problem, while the classical HPC manages the remaining workload. Interconnects such as Nvidia’s NVQLink provide the infrastructure that is crucial for scaling up quantum systems and maintaining the delicate quantum states necessary for computation.

The development of such interconnects signifies a shift from viewing quantum computers as standalone devices to recognizing them as specialized co-processors within a broader computational ecosystem. Beyond hardware integration, artificial intelligence is emerging as a powerful tool to address challenges within quantum computing itself. On the engineering side, AI could refine hardware calibration, mitigate errors, and even accelerate the discovery of new materials for quantum components. Software applications could benefit from AI-driven algorithm design, optimization, and data analysis. While applying quantum processing to enhance AI remains largely speculative, the potential for accelerating AI training and reducing its substantial energy consumption is a compelling area of research. However, the massive data-loading requirements of quantum computers present a hurdle, potentially overcome by utilizing quantum-generated data from sensors. AI/QC integration, though still in its early stages, represents a promising avenue for accelerating progress on both fronts, and could ultimately define the trajectory of quantum computing’s practical applications.

the most fundamental technical challenge – scaling from hundreds to thousands and millions of qubits – has yet to be addressed.