Artificial intelligence is now being used to write encyclopedic content, which raises questions about the characteristics and potential biases of AI-generated knowledge. A new study directly compares the structure and content of Grokipedia, developed by xAI, with the established Wikipedia. Taha Yasseri of Trinity College Dublin and the Technological University Dublin, along with colleagues, carried out a computational analysis of hundreds of matched articles from both platforms, measuring differences in readability, vocabulary, and referencing. The research finds that Grokipedia closely aligns with Wikipedia in overall meaning and writing style but tends to produce longer articles with less varied vocabulary and a lower density of citations. These findings indicate that AI-generated encyclopedias currently reflect the informational breadth of human-edited platforms while differing in editorial approach, favoring expansive narrative over rigorous verification. They also raise important questions about how knowledge will be created and governed as automated text generation spreads.

Grokipedia’s Bias and Accuracy Compared to Wikipedia

Investigating AI Bias and Content Accuracy

This research examines Grokipedia, a recently launched AI-powered encyclopedia, and compares it with Wikipedia to ask whether it corrects existing biases or introduces new ones. The study suggests that Grokipedia may reproduce biases present in its training data, which raises concerns about accuracy, particularly on sensitive topics, and it highlights a lack of transparency and community oversight compared with Wikipedia. The authors therefore stress the importance of transparency, accountability, and community involvement in knowledge production, and call for critical evaluation of AI-generated content. As large language models take on a larger role in creating reference knowledge, addressing bias, accuracy, and transparency will be crucial.

Grokipedia and Wikipedia Content Pair Analysis

Comparative Methodology and Data Selection Process

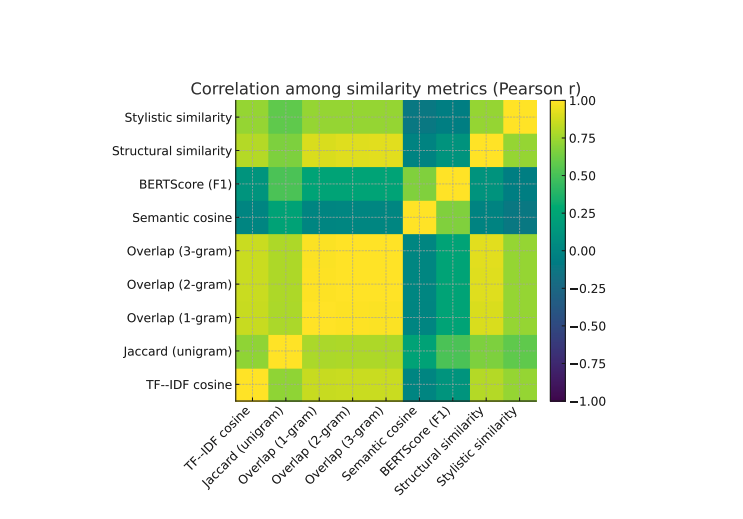

This study compared 382 matched article pairs from Grokipedia and Wikipedia to quantify similarities and differences in encyclopedic content. The researchers compiled a list of 416 heavily edited English-language Wikipedia articles, prioritizing those with substantial edit histories, retrieved the corresponding Grokipedia entries, and retained only pairs in which both articles contained at least 500 words of clean prose. They extracted the substantive content with host-aware parsing strategies that account for each platform's distinct page structure, then computed descriptive, structural, and stylistic metrics such as paragraph counts, hyperlink frequencies, and readability scores. To quantify alignment between the platforms, they computed nine similarity measures grouped into four conceptual domains: lexical, semantic, structural, and stylistic similarity. The results show that while Grokipedia exhibits strong semantic and stylistic alignment with Wikipedia, it typically produces longer articles with lower lexical diversity, fewer references per word, and more variable structural depth.
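The descriptive metrics above can be illustrated with a short Python sketch. It is an approximation under stated assumptions, not the study's pipeline: the source does not say which readability formula was used, so this sketch assumes the standard Flesch Reading Ease score and estimates syllables with a crude vowel-group heuristic. The function names `count_syllables` and `describe` are hypothetical, and paragraphs are assumed to be separated by blank lines in the cleaned prose.

```python
import re


def count_syllables(word: str) -> int:
    """Rough English syllable count: vowel groups, minus a likely silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Drop a trailing silent 'e' ("make"), but keep it in endings like "table".
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def describe(text: str) -> dict:
    """Descriptive metrics for one article's cleaned prose (paragraphs split on blank lines)."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    n_words = max(len(words), 1)
    n_sents = max(len(sentences), 1)
    syllables = sum(count_syllables(w) for w in words)
    # Flesch Reading Ease: higher scores indicate easier text.
    flesch = 206.835 - 1.015 * (n_words / n_sents) - 84.6 * (syllables / n_words)
    return {
        "words": len(words),
        "paragraphs": len(paragraphs),
        "sentences": len(sentences),
        "flesch_reading_ease": round(flesch, 1),
    }
```

On the text `"The cat sat.\n\nThe dog ran fast."` this reports 7 words, 2 paragraphs, 2 sentences, and a score of 118.7; scores above 100 are expected for very short sentences of one-syllable words.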

Grokipedia and Wikipedia: A Comparative Content Analysis

The researchers began by selecting the 416 most-edited English-language Wikipedia articles, prioritizing topics with substantial textual content and sociocultural significance. After requiring at least 500 words of clean prose in both articles of each pair, the final analytical sample comprised 382 matched pairs. The analysis shows that while Grokipedia exhibits strong semantic and stylistic alignment with Wikipedia, its articles differ from their Wikipedia counterparts in several key characteristics.
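The pair-retention rule can be expressed as a simple filter. This minimal sketch assumes each candidate pair arrives as a hypothetical `(title, wikipedia_text, grokipedia_text)` tuple of already-cleaned prose and counts words with a basic regular expression; the study's own tokenizer may count slightly differently.

```python
import re
from typing import List, Tuple

MIN_WORDS = 500  # threshold reported in the study: at least 500 words of clean prose

Pair = Tuple[str, str, str]  # hypothetical layout: (title, wikipedia_text, grokipedia_text)


def word_count(text: str) -> int:
    """Count word tokens with a simple regex (an approximation of the study's counting)."""
    return len(re.findall(r"\b\w+\b", text))


def filter_pairs(pairs: List[Pair], min_words: int = MIN_WORDS) -> List[Pair]:
    """Keep only pairs in which BOTH articles meet the minimum prose length."""
    return [
        (title, wiki, grok)
        for title, wiki, grok in pairs
        if word_count(wiki) >= min_words and word_count(grok) >= min_words
    ]
```

A pair is dropped if either side falls short, which is why the sample shrinks from 416 candidates to 382 pairs.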

Initial Comparative Results on Content Metrics

On average, Grokipedia articles are longer than their Wikipedia counterparts yet display lower lexical diversity, drawing on a narrower range of vocabulary. They also have a lower reference density, with fewer references per word. Beyond length and vocabulary, the study examined structural organization and found more variable structural depth in Grokipedia articles, reflecting less consistent heading and paragraph organization than Wikipedia's standardized layout. These findings suggest that AI-generated encyclopedic content currently mirrors Wikipedia's informational scope but diverges in editorial norms, favoring narrative expansion over citation-based verification.
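Lexical diversity is often reported as a type-token ratio (TTR): distinct words divided by total words. One caveat is useful when reading the result above. Raw TTR falls as a text grows, simply because common words repeat, so longer articles score lower even with identical vocabulary habits. The sketch below does not claim to reproduce the study's measure; it contrasts raw TTR with a moving-average TTR (MATTR), which averages TTR over fixed-size windows and is much less sensitive to length. The function names and the default window of 50 tokens are illustrative choices.

```python
import re
from typing import List


def tokens(text: str) -> List[str]:
    """Lowercase word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def ttr(toks: List[str]) -> float:
    """Type-token ratio: distinct words divided by total words."""
    return len(set(toks)) / len(toks) if toks else 0.0


def mattr(toks: List[str], window: int = 50) -> float:
    """Moving-average TTR: mean TTR over every contiguous window of `window` tokens."""
    if len(toks) <= window:
        return ttr(toks)
    ratios = [len(set(toks[i:i + window])) / window
              for i in range(len(toks) - window + 1)]
    return sum(ratios) / len(ratios)
```

Repeating the six-word sentence "the cat sat on the mat" ten times drives raw TTR from 5/6 down to 5/60, while MATTR with a six-token window stays at 5/6.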

Grokipedia Prioritizes Elaboration Over Sourcing

Observed Structural Alignment Between AI and Wikipedia

This study presents a large-scale comparison of Grokipedia and Wikipedia across hundreds of matched article pairs. The results show strong alignment between the two platforms in meaning and overall linguistic structure. However, Grokipedia articles are consistently longer and more syntactically complex, with lower lexical diversity and a lower density of references than their Wikipedia counterparts. These findings suggest that Grokipedia's generation process prioritizes elaboration and narrative flow over rigorous sourcing and concise expression, effectively repackaging existing human-curated knowledge through an AI model. Although it achieves semantic alignment with Wikipedia, the system introduces stylistic inflation and reduced citation density, and it may subtly re-encode biases within its model parameters. Further research could examine the long-term effects of this automated knowledge production on information transparency and explore ways to make such systems provide robust, verifiable information.

🗞 How Similar Are Grokipedia and Wikipedia? A Multi-Dimensional Textual and Structural Comparison

🧠 ArXiv: https://arxiv.org/abs/2510.26899

Mapping Semantic Proximity Using Computational Models

The computational metrics span two levels. A Jaccard coefficient compares the sets of words two articles use, so it measures simple lexical overlap. Cosine similarity goes further when it is applied to representations that map each article into a high-dimensional vector space, such as text embeddings: it can then measure semantic proximity even when different vocabulary conveys the same core meaning. Contrasting the two kinds of measure indicates whether the platforms largely reuse the same wording (high lexical and semantic similarity) or express the same concepts in different words (high semantic but lower lexical similarity), offering a rigorous test of informational alignment.
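The difference between the two families of measure can be shown in a few lines of Python. This is a sketch under stated assumptions: `jaccard` works on lowercase word sets, and `cosine` accepts any pair of equal-length vectors. In a semantic comparison those vectors would come from an embedding model, which is assumed here and not shown; neither function is taken from the study's code.

```python
import math
import re
from typing import Sequence


def jaccard(a: str, b: str) -> float:
    """Lexical overlap: shared word types divided by all word types (lowercased)."""
    sa = set(re.findall(r"\w+", a.lower()))
    sb = set(re.findall(r"\w+", b.lower()))
    if not sa and not sb:
        return 1.0  # two empty texts are treated as identical
    return len(sa & sb) / len(sa | sb)


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors, e.g. document embeddings."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0
```

For example, "the car is fast" and "the automobile is quick" share only 2 of 6 distinct words (Jaccard = 1/3), even though a good embedding model would likely place them close together, giving a high cosine score.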

From a technical standpoint, matching corresponding articles, especially those with evolving histories, requires cross-database entity resolution. The researchers likely employed Natural Language Processing (NLP) pipelines that identify key named entities and concepts across the two differently structured texts. This step matters because it ensures that the comparison measures the intellectual content about a specific subject (e.g., “Quantum Entanglement”) rather than only the structural similarity of the introductory paragraphs.
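As a simpler stand-in for the entity-resolution step, and not the NLP pipeline the paragraph speculates about, matched pairs are often seeded by exact matching on normalized titles. The sketch below assumes both platforms expose plain article titles; `normalize_title` and `match_articles` are hypothetical helpers, and titles that fail to match would still need fuzzier, content-based resolution.

```python
import re
import unicodedata
from typing import Dict, List, Tuple


def normalize_title(title: str) -> str:
    """Canonical key: Unicode NFKC, underscores to spaces, collapsed whitespace, casefolded."""
    t = unicodedata.normalize("NFKC", title).replace("_", " ")
    return re.sub(r"\s+", " ", t).strip().casefold()


def match_articles(wiki_titles: List[str], grok_titles: List[str]) -> List[Tuple[str, str]]:
    """Pair each Wikipedia title with a Grokipedia title that shares its normalized key."""
    index: Dict[str, str] = {normalize_title(g): g for g in grok_titles}
    pairs = []
    for w in wiki_titles:
        g = index.get(normalize_title(w))
        if g is not None:  # unmatched titles are left for a later, fuzzier pass
            pairs.append((w, g))
    return pairs
```

Normalizing before comparison lets URL-style titles such as `Quantum_entanglement` match display titles such as `quantum entanglement`.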

Furthermore, assessing citation density requires careful web scraping or API access that distinguishes internal cross-references (such as wiki links) from genuine external source citations. This structural metric matters because an encyclopedia's scholarly rigor depends heavily on its verifiable evidence base. The measured gap in citation density therefore suggests a fundamental divergence in editorial incentives between human-governed and automated knowledge systems.
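For Wikipedia's source markup, the distinction between internal links and external citations can be sketched with regular expressions over wikitext, where `[[Target|label]]` is an internal link, `<ref>...</ref>` defines a citation, and `<ref name="x" />` reuses one. This is a minimal sketch, not a full wikitext parser: nested templates and unusual markup are ignored, Grokipedia pages would need their own host-specific parser, and computing density from distinct references per 1,000 prose words (with the prose word count supplied by the caller) is an assumption. `citation_profile` is a hypothetical name.

```python
import re

# Wikitext conventions assumed here: [[Target]] / [[Target|label]] are internal links;
# <ref>...</ref> or <ref name="x">...</ref> defines a citation; <ref name="x" /> reuses one.
INTERNAL_LINK = re.compile(r"\[\[(?!(?:File|Image|Category):)[^\]]+\]\]", re.IGNORECASE)
REF_DEFINITION = re.compile(r"<ref(?:\s[^>]*)?(?<!/)>", re.IGNORECASE)
REF_REUSE = re.compile(r"<ref\s[^>]*/>", re.IGNORECASE)


def citation_profile(wikitext: str, prose_words: int) -> dict:
    """Separate internal links from citations; density = distinct refs per 1,000 prose words."""
    distinct_refs = len(REF_DEFINITION.findall(wikitext))
    reuses = len(REF_REUSE.findall(wikitext))
    # File/Image/Category links are media or metadata, not cross-references, so they are skipped.
    internal_links = len(INTERNAL_LINK.findall(wikitext))
    density = 1000 * distinct_refs / prose_words if prose_words > 0 else 0.0
    return {
        "distinct_refs": distinct_refs,
        "ref_reuses": reuses,
        "internal_links": internal_links,
        "refs_per_1k_words": density,
    }
```

Normalizing by prose length rather than reporting raw counts is what lets a longer Grokipedia article be compared fairly with a shorter Wikipedia one.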