Even powerful AI models struggle with complex problem-solving that demands both depth and efficiency. Hongjin Su, Shizhe Diao, and Ximing Lu, alongside Mingjie Liu, Jiacheng Xu, Xin Dong, and colleagues, address this challenge by showing that a lightweight ‘orchestrator’ can manage other AI models and tools to achieve superior performance. Their research introduces ToolOrchestra, a method for training small orchestrators with reinforcement learning, and yields Orchestrator, an 8B-parameter (8 billion) model that surpasses the accuracy of GPT-5 on challenging benchmarks such as Humanity’s Last Exam while substantially reducing computational cost. The result suggests that composing diverse tools under a streamlined orchestrator is a practical, scalable route to tool-augmented reasoning, and a compelling alternative to simply scaling up larger, more expensive models.

Strategic Tool Use for Complex Tasks

This document describes the development and evaluation of Orchestrator-8B, a system that orchestrates tools such as code interpreters, web search engines, and specialized language models to solve complex tasks. The goal is strong performance with minimal resource usage, including computational cost and latency. The core premise is that simply handing tools to a large language model is insufficient: the model must also know when and how to use each tool. Orchestrator-8B starts from a base language model and is fine-tuned to select and use tools strategically.

The evaluation considers efficiency as well as accuracy: the number of tool calls, computational cost, and latency. A multi-component reward guides training and evaluation, combining task completion, the computational resources used, the time taken to complete the task, and the number of times each tool is invoked. Tool usage counts are normalized so that the reward is not biased towards systems that simply use more tools. Results show that Orchestrator-8B consistently outperforms other strong language models on the evaluated benchmarks while making fewer tool calls, at lower computational cost and with reduced latency. The system can therefore be both accurate and efficient.
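To make the shape of such a reward concrete, here is a minimal Python sketch. It is not the authors' formula: the `Rollout` fields, the `fraction_of` helper, the weights, and the linear combination are all assumptions chosen for illustration. It only shows how task completion, cost, latency, and normalized per-tool call counts could be folded into a single scalar.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Rollout:
    """One attempt at a task. All field names are hypothetical."""
    solved: bool
    cost: float      # e.g. dollars or FLOPs spent on model and tool calls
    latency: float   # wall-clock seconds
    tool_calls: Dict[str, int] = field(default_factory=dict)


def fraction_of(value: float, maximum: float) -> float:
    """Scale a value into [0, 1] relative to a reference maximum."""
    if maximum <= 0:
        return 0.0
    return min(max(value / maximum, 0.0), 1.0)


def reward(
    rollout: Rollout,
    max_cost: float,
    max_latency: float,
    max_calls: Dict[str, int],
    preference: Optional[Dict[str, float]] = None,
    w_cost: float = 0.3,      # assumed weight, not from the source
    w_latency: float = 0.2,   # assumed weight, not from the source
    w_pref: float = 0.2,      # assumed weight, not from the source
) -> float:
    """Outcome reward minus efficiency penalties plus a preference term."""
    preference = preference or {}
    outcome = 1.0 if rollout.solved else 0.0
    cost_penalty = fraction_of(rollout.cost, max_cost)
    latency_penalty = fraction_of(rollout.latency, max_latency)
    # Per-tool counts are normalized before weighting, so a rollout is not
    # favored merely for calling tools more often in absolute terms.
    pref_term = sum(
        preference.get(tool, 0.0) * fraction_of(count, max_calls.get(tool, 0))
        for tool, count in rollout.tool_calls.items()
    )
    return (outcome
            - w_cost * cost_penalty
            - w_latency * latency_penalty
            + w_pref * pref_term)


# Usage: two successful rollouts that differ only in resource use.
limits = dict(max_cost=1.0, max_latency=100.0, max_calls={"search": 4})
cheap = Rollout(True, cost=0.1, latency=10.0, tool_calls={"search": 1})
pricey = Rollout(True, cost=1.0, latency=100.0, tool_calls={"search": 4})
print(reward(cheap, **limits) > reward(pricey, **limits))
```

With equal outcomes, the cheaper and faster rollout receives the higher reward, which is the behavior the efficiency terms are meant to encourage.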

Dynamic Tool Selection via Reinforcement Learning

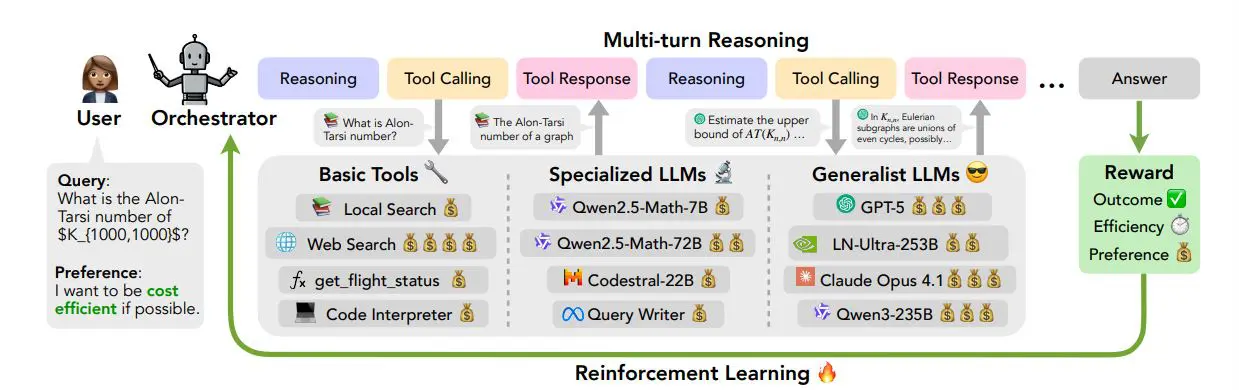

The researchers introduce ToolOrchestra, a method that improves both intelligence and efficiency on complex tasks by coordinating diverse tools with a lightweight orchestrator model. Rather than relying on a single, powerful language model, the approach uses a composite system in which an 8B orchestrator dynamically selects and sequences tools to solve problems. The orchestrator is trained with reinforcement learning, with rewards based on outcome accuracy, efficiency of tool usage, and alignment with user preferences about which tools to use. Experiments used a diverse toolkit: basic utilities such as web search and code interpreters, specialized language models for coding and mathematical reasoning, and generalist language models.

The orchestrator alternates between reasoning steps and tool invocations, adapting its strategy across multiple turns to address complex queries. This lets the system draw on the strengths of tools with differing capabilities and costs. On Humanity’s Last Exam, Orchestrator scores higher than GPT-5 while being more efficient. On other challenging benchmarks, it significantly outperforms GPT-5 while using only about 30% of the computational cost. Detailed analysis confirms that Orchestrator consistently delivers the best trade-off between performance and cost across multiple metrics and generalizes robustly to previously unseen tools. Together, these results indicate that composing diverse tools with a lightweight orchestrator is more effective and efficient than relying on a monolithic model for complex reasoning tasks.
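The alternation between reasoning and tool invocation can be pictured as a simple control loop. The Python sketch below is illustrative only: `orchestrate`, the `Policy` signature, the `"answer"` action, and the toy `multiply` tool are hypothetical stand-ins, and a trained orchestrator model would take the place of `toy_policy`.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A tool maps a text argument to a text observation.
Tool = Callable[[str], str]
# One history entry: (tool name, argument, observation).
Step = Tuple[str, str, str]
# A policy reads the query and history and returns (action, argument).
# The action is either a tool name or the special string "answer".
Policy = Callable[[str, List[Step]], Tuple[str, str]]


def orchestrate(
    query: str,
    policy: Policy,
    tools: Dict[str, Tool],
    max_turns: int = 8,
) -> Tuple[Optional[str], List[Step]]:
    """Alternate policy decisions and tool calls until an answer or the turn limit."""
    history: List[Step] = []
    for _ in range(max_turns):
        action, argument = policy(query, history)
        if action == "answer":
            return argument, history
        if action in tools:
            observation = tools[action](argument)
        else:
            # Report unknown tools back to the policy instead of crashing.
            observation = f"error: unknown tool {action!r}"
        history.append((action, argument, observation))
    return None, history  # turn budget exhausted without an answer


def multiply(expression: str) -> str:
    """Toy tool: evaluates 'a*b' for two integers."""
    left, right = expression.split("*")
    return str(int(left) * int(right))


def toy_policy(query: str, history: List[Step]) -> Tuple[str, str]:
    """Stand-in for a learned orchestrator: call the tool once, then answer."""
    if not history:
        return "multiply", "6*7"
    return "answer", history[-1][2]


answer, trace = orchestrate("What is 6 times 7?", toy_policy, {"multiply": multiply})
print(answer, trace)
```

Keeping the policy separate from the tool registry means tools can be added or swapped without changing the loop.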

Orchestrator Surpasses Leading Models in Complex Reasoning

ToolOrchestra trains small orchestrator models to coordinate intelligent tools, and the resulting system shows clear gains in complex problem-solving. The work demonstrates that a lightweight orchestrator can manage a diverse set of tools and, by combining their strengths, surpass existing approaches. On Humanity’s Last Exam, Orchestrator, an 8B-parameter model, scores higher than GPT-5 while being more efficient. On other benchmarks, Orchestrator significantly surpasses GPT-5 while using only about 30% of the computational cost.

The team’s analysis confirms that Orchestrator offers the best trade-off between performance and cost across multiple metrics and generalizes robustly to previously unseen tools. These gains stem from a reinforcement learning approach that optimizes the orchestrator with outcome, efficiency, and user-preference-aware rewards. The results show that composing diverse tools with a lightweight orchestration model is both more effective and more efficient than relying on a single, powerful model, paving the way for practical and scalable tool-augmented reasoning systems. The work also addresses two limitations of existing tool-use agents: a bias towards self-enhancement and a tendency to default to the most powerful tools regardless of cost.

Efficient Tool Use Surpasses Larger Models

ToolOrchestra shows that intelligent agents can reach high performance more efficiently through careful orchestration of specialized tools. The researchers developed a method for training a relatively small ‘orchestrator’ model to coordinate diverse tools and models, enabling it to solve complex problems while minimizing computational cost. On challenging benchmarks, the approach consistently outperformed larger models in both score and efficiency. The team’s Orchestrator-8B model surpassed a leading language model on multiple tasks, struck a better balance between accuracy and cost, and generalized robustly to previously unseen tools. To support this research, the team also created ToolScale, a complex synthetic dataset designed to aid reinforcement learning. While acknowledging the limitations inherent in synthetic datasets, the authors envision future work on more sophisticated, recursively structured orchestrator systems that further improve both intelligence and efficiency on increasingly complex tasks.

🗞 ToolOrchestra: Elevating Intelligence via Efficient Model and Tool Orchestration

🧠 ArXiv: https://arxiv.org/abs/2511.21689