Vision Transformers (ViTs) are increasingly prevalent in image recognition, yet they still operate largely as opaque ‘black boxes’, and understanding how they process visual information remains a significant challenge. Akshad Shyam Purushottamdas, Pranav K Nayak, and Divya Mehul Rajparia, from IIT Hyderabad, alongside their colleagues, now investigate the internal workings of these models through the principle of compositionality: the ability to build complex representations from simpler parts. The team developed a new framework that uses a mathematical tool called the Discrete Wavelet Transform to break images down into fundamental components, and then assesses whether the Vision Transformer’s internal representations reflect this compositional structure. The research shows that the model’s learned representations are approximately compositional, offering new insight into how Vision Transformers organise and interpret visual data and potentially paving the way for more interpretable and robust artificial intelligence systems.

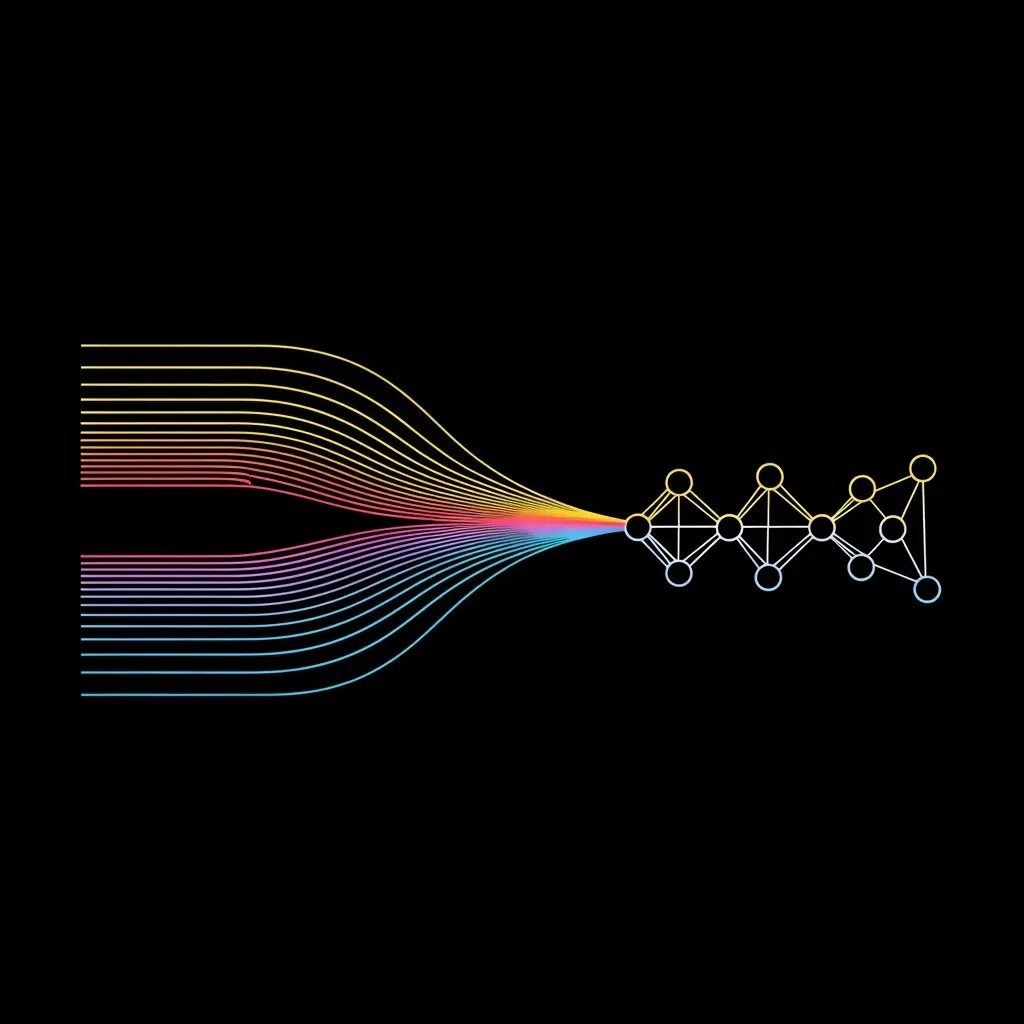

The researchers introduce a framework to test whether the internal representations learned by the Vision Transformer (ViT) encoder respect the principle of compositionality. Compositionality, in this context, refers to a model’s ability to understand and generalize to novel combinations of known concepts, a hallmark of robust and generalizable intelligence. The team’s approach centers on the Discrete Wavelet Transform (DWT), a signal processing tool used here to break images down into fundamental primitives. The DWT represents an image by its wavelet coefficients, decomposing it into sub-bands that capture localized spatial-frequency content. Unlike the coefficients of a global frequency transform such as the Fourier transform, these sub-bands stay tied to locations in the image, which makes them visually interpretable primitives.
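To make the single-level decomposition concrete, here is a minimal NumPy sketch of a one-level 2D DWT using the Haar wavelet. The choice of the Haar wavelet and the helper names `haar_dwt2` and `haar_idwt2` are assumptions for illustration; the summary does not say which wavelet the authors used, and libraries such as PyWavelets provide production implementations.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)

def haar_dwt2(img):
    """One-level orthonormal 2D Haar DWT of an array with even height and width.

    Returns (LL, (LH, HL, HH)). Sub-band naming conventions vary between libraries;
    here the first letter refers to filtering along the width, the second along the height.
    """
    x = np.asarray(img, dtype=float)
    # Filter along the width: pairwise sums (low-pass) and differences (high-pass)
    lo = (x[:, 0::2] + x[:, 1::2]) / SQRT2
    hi = (x[:, 0::2] - x[:, 1::2]) / SQRT2
    # Filter each result along the height in the same way
    LL = (lo[0::2] + lo[1::2]) / SQRT2  # coarse approximation
    LH = (lo[0::2] - lo[1::2]) / SQRT2  # changes along the height (horizontal edges)
    HL = (hi[0::2] + hi[1::2]) / SQRT2  # changes along the width (vertical edges)
    HH = (hi[0::2] - hi[1::2]) / SQRT2  # diagonal detail
    return LL, (LH, HL, HH)

def haar_idwt2(LL, details):
    """Exact inverse of haar_dwt2."""
    LH, HL, HH = details
    h, w = LL.shape
    lo, hi = np.empty((2 * h, w)), np.empty((2 * h, w))
    # Undo the height filtering
    lo[0::2], lo[1::2] = (LL + LH) / SQRT2, (LL - LH) / SQRT2
    hi[0::2], hi[1::2] = (HL + HH) / SQRT2, (HL - HH) / SQRT2
    # Undo the width filtering
    x = np.empty((2 * h, 2 * w))
    x[:, 0::2], x[:, 1::2] = (lo + hi) / SQRT2, (lo - hi) / SQRT2
    return x

rng = np.random.default_rng(0)
image = rng.random((8, 8))
LL, (LH, HL, HH) = haar_dwt2(image)
print(LL.shape)  # (4, 4)
print(np.allclose(haar_idwt2(LL, (LH, HL, HH)), image))  # True
```

Because this Haar transform is orthonormal, it is exactly invertible and preserves the image's energy, so the four half-resolution sub-bands are a lossless alternative description of the image.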

Experiments revealed that ViT patch representations at the final encoder layer are approximately compositional when using DWT primitives obtained from a single-level decomposition, shedding light on how these models structure visual information. The work provides a new method for analysing ViTs and could improve the explainability of their learned representations, opening avenues for more interpretable and robust computer vision systems. The DWT’s ability to provide both spatial and frequency localization is crucial for identifying visually meaningful image structures.
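Spatial localization can be checked numerically. The sketch below is an illustration, not an experiment from the paper: it edits a single pixel of a random 8×8 image and counts how many coefficients change under a one-level Haar DWT versus the 2D FFT. The Haar wavelet and the helper names `haar_dwt2` and `count_changed` are assumptions.

```python
import numpy as np

def haar_dwt2(img):
    """One-level orthonormal 2D Haar DWT; returns the four sub-bands stacked."""
    x = np.asarray(img, dtype=float)
    lo = (x[:, 0::2] + x[:, 1::2]) / np.sqrt(2)  # low-pass along the width
    hi = (x[:, 0::2] - x[:, 1::2]) / np.sqrt(2)  # high-pass along the width
    return np.stack([
        (lo[0::2] + lo[1::2]) / np.sqrt(2),  # LL: approximation
        (lo[0::2] - lo[1::2]) / np.sqrt(2),  # low along width, high along height
        (hi[0::2] + hi[1::2]) / np.sqrt(2),  # high along width, low along height
        (hi[0::2] - hi[1::2]) / np.sqrt(2),  # HH: diagonal detail
    ])

def count_changed(a, b, tol=1e-9):
    """Number of coefficients whose magnitude of change exceeds tol."""
    return int(np.sum(np.abs(a - b) > tol))

rng = np.random.default_rng(0)
image = rng.random((8, 8))
edited = image.copy()
edited[3, 5] += 1.0  # a single-pixel, spatially local edit

dwt_changes = count_changed(haar_dwt2(image), haar_dwt2(edited))
fft_changes = count_changed(np.fft.fft2(image), np.fft.fft2(edited))
print(dwt_changes, fft_changes)  # 4 64
```

The edit touches only the 2×2 block that contains the pixel, so exactly one coefficient per sub-band moves, whereas every Fourier coefficient changes. The trade-off is coarser frequency resolution: each Haar sub-band covers a broad frequency range rather than a single frequency.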

ViT Compositionality Assessed Via Wavelet Analysis

This research presents a new framework for evaluating compositional behaviour within the encoder layers of Vision Transformer (ViT) models, building on existing work in representation learning. The team applied a Discrete Wavelet Transform to obtain input-dependent primitives, then examined how well representations composed from these primitives could reproduce the representation of the original image. Results indicate that representations from the final encoder layer demonstrate a degree of compositionality, suggesting that ViTs may structure information in a way that allows simpler features to be combined into more complex ones. The study further establishes the robustness of this framework, showing that it remains effective even when images are subjected to distortions such as JPEG compression and noise, which suggests the learned compositional representations are not merely artefacts of pristine data. While the current analysis focused on the final encoder layer, the researchers plan to extend their investigation to all layers of the ViT architecture, with the goal of improving explainability in these complex models. By better understanding how ViTs process visual information, this work could contribute to the development of more interpretable and robust artificial intelligence systems.

By testing whether composed representations can reproduce the original image representations, the team empirically assesses the extent to which compositionality is respected. The encoder representations of primitives from a one-level DWT decomposition approximately compose within the ViT’s latent space, and this behaviour persists when images are distorted by compression or noise, indicating that it is a property of the model rather than of clean inputs alone.
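The composition test can be sketched in miniature as follows. Every modelling choice here is an assumption for illustration. `encode` is a hypothetical stand-in for the ViT encoder, consisting of a fixed random projection followed by `tanh`. Primitives are formed by inverting the DWT with all but one sub-band zeroed. The composition function is a least-squares weighted sum, scored by cosine similarity. The paper's actual composition function, metric and encoder are not reproduced here.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)

def haar_dwt2(img):
    """One-level orthonormal 2D Haar DWT; returns [LL, LH, HL, HH]."""
    x = np.asarray(img, dtype=float)
    lo = (x[:, 0::2] + x[:, 1::2]) / SQRT2
    hi = (x[:, 0::2] - x[:, 1::2]) / SQRT2
    return [(lo[0::2] + lo[1::2]) / SQRT2, (lo[0::2] - lo[1::2]) / SQRT2,
            (hi[0::2] + hi[1::2]) / SQRT2, (hi[0::2] - hi[1::2]) / SQRT2]

def haar_idwt2(bands):
    """Exact inverse of haar_dwt2."""
    LL, LH, HL, HH = bands
    h, w = LL.shape
    lo, hi = np.empty((2 * h, w)), np.empty((2 * h, w))
    lo[0::2], lo[1::2] = (LL + LH) / SQRT2, (LL - LH) / SQRT2
    hi[0::2], hi[1::2] = (HL + HH) / SQRT2, (HL - HH) / SQRT2
    x = np.empty((2 * h, 2 * w))
    x[:, 0::2], x[:, 1::2] = (lo + hi) / SQRT2, (lo - hi) / SQRT2
    return x

def primitives(img):
    """One primitive image per sub-band: invert the DWT with the other bands zeroed."""
    bands = haar_dwt2(img)
    return [haar_idwt2([b if j == k else np.zeros_like(b) for j, b in enumerate(bands)])
            for k in range(len(bands))]

# HYPOTHETICAL stand-in for a ViT encoder: fixed random projection followed by tanh.
rng = np.random.default_rng(0)
W = rng.normal(size=(32, 64)) / 8.0

def encode(img):
    return np.tanh(W @ img.ravel())

def composition_score(img):
    """Cosine similarity between encode(img) and the best weighted sum of primitive codes.

    ASSUMPTION: composition is a least-squares weighted sum; the paper's operator may differ.
    """
    P = np.stack([encode(p) for p in primitives(img)], axis=1)  # shape (32, 4)
    target = encode(img)
    w, *_ = np.linalg.lstsq(P, target, rcond=None)
    composed = P @ w
    return float(composed @ target / (np.linalg.norm(composed) * np.linalg.norm(target)))

image = rng.random((8, 8))
noisy = image + rng.normal(scale=0.05, size=image.shape)  # simple additive-noise distortion
print(np.allclose(sum(primitives(image)), image))  # True: exact composition in pixel space
print(composition_score(image), composition_score(noisy))
```

In pixel space the primitives sum back to the image exactly because the DWT is linear; the open question is whether the encoder preserves that structure. If the `tanh` is removed, the stand-in encoder becomes linear, the fitted weights are all ones and the score is 1 up to floating-point error, so any shortfall from 1 reflects the encoder's nonlinearity. The `noisy` variant mimics the distortion check on a toy scale.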

🗞 Exploring Compositionality in Vision Transformers using Wavelet Representations

🧠 ArXiv: https://arxiv.org/abs/2512.24438