We have spent the better part of three decades telling the brightest young minds on the planet to learn to code. Computer science departments swelled. Bootcamps proliferated. The message was clear and consistent: software is eating the world, and you had better be holding a fork. Now the machines are learning to hold their own forks, and the entire premise is being called into question. But rather than a cause for despair, this may be exactly the correction humanity needed.

I say this as someone who trained as a computational physicist and has spent decades working in AI and machine learning. I have watched from both sides as the technology industry absorbed an extraordinary proportion of the world’s technical talent into software roles, many of which were technically sophisticated but intellectually narrow. The threat of AI to jobs is well-trodden territory. Large language models can already draft legal documents, write marketing copy, and produce functional code. What has been far less explored is the possibility that AI, by solving the relatively tractable problem of software production, might finally redirect human talent toward the problems that actually matter.

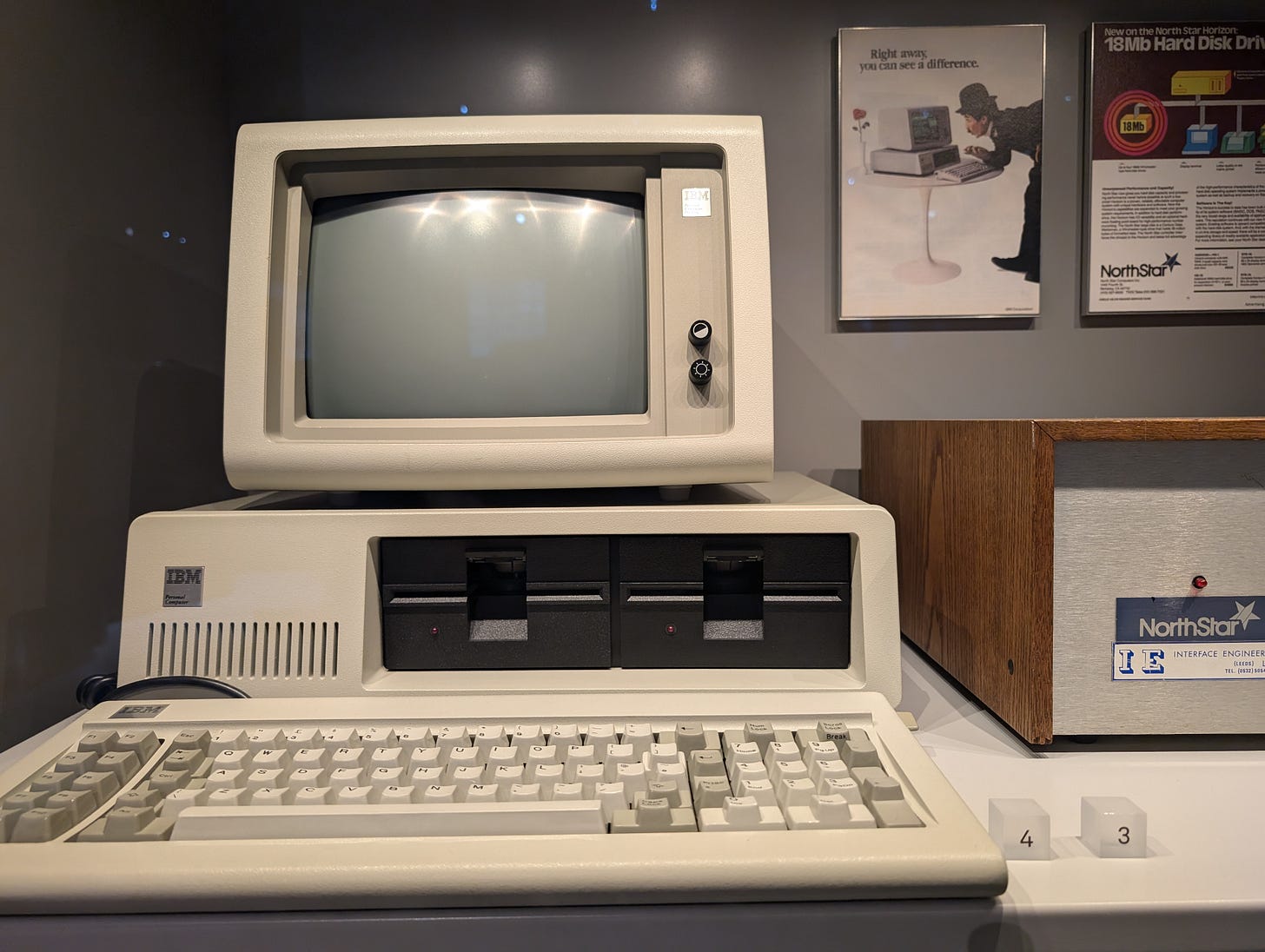

Here is an idea that will annoy a significant portion of the technology industry: software engineering, as a mass profession, may turn out to have been a bracket. A parenthetical era in the history of human intellectual labour, bookended by the invention of high-level programming languages on one side and the arrival of AI systems capable of writing software on the other.

The history of programming is a history of abstraction. We started with machine code, raw binary instructions fed directly to hardware. That gave way to assembly language, then to high-level languages like Fortran and C, then to increasingly expressive languages like Python and JavaScript that let programmers think in terms ever further removed from the machine. Each step up the abstraction ladder made the previous level something only specialists needed to touch. Nobody writes machine code by hand anymore unless they are working on a very specific embedded systems problem, and much the same is true of assembly. These languages did not disappear, but the number of people who need to work at that level shrank to a fraction of what it once was.

AI represents the next rung on that ladder, and possibly the last one that matters. When you can describe what you want in natural language and have an AI system produce functional code, traditional programming becomes the new assembly. It will still exist for specialist cases, but it will no longer be a mass profession. The entire middle layer of the abstraction stack is being compressed into a conversation.

This is true even in quantum computing, a field where programming is already arcane. Tools like Qiskit and Cirq currently require specialist knowledge to design quantum circuits, but AI-assisted circuit generation is advancing rapidly. Within a few years, a researcher may be able to describe the quantum algorithm they want in plain language and have an AI system generate, optimise, and error-correct the circuit. The programming of quantum computers may never become a mass skill. It may go straight from specialist to automated, skipping the bracket entirely.

All of which raises the obvious question of where that talent should go. The answer, increasingly, points downward toward the physical substrate and outward toward the hardest unsolved problems in science.

Every advance in AI capability ultimately depends on physical hardware: chips fabricated at scales measured in nanometres, cooling systems managing enormous thermal loads, and, increasingly, entirely new computing architectures that break with the von Neumann model altogether. TSMC, Intel, Samsung, and the growing ecosystem of quantum hardware companies like IBM, Google Quantum AI, IonQ, Quantinuum, and PsiQuantum all face the same constraint: they need people who understand physics, materials science, and engineering at a fundamental level.

And quantum computing is only one of several emerging paradigms demanding this expertise. Photonic computing, which uses light rather than electrons to process information, promises dramatically lower energy consumption and higher bandwidth for certain workloads. Companies like Lightmatter and Xanadu are building processors that exploit photons for both classical AI acceleration and quantum computation. Neuromorphic computing, which mimics the architecture of biological neural networks in silicon, represents yet another departure from conventional chip design. Intel’s Loihi and IBM’s NorthPole chips are early examples of hardware that processes information in ways fundamentally different from traditional processors, requiring engineers who understand neuroscience, analogue circuit design, and novel materials alongside conventional semiconductor physics. Each of these paradigms demands deep knowledge of the underlying physics, and none of them can be built by prompting a language model.

Quantum computing remains the most vivid illustration of this shift. Building a fault-tolerant quantum computer spans condensed matter physics, quantum error correction theory, cryogenic engineering, photonics, and materials science. You cannot train a model on Stack Overflow answers to solve the problem of qubit decoherence. I have spent years tracking this industry through Quantum Zeitgeist, and the single most consistent theme from hardware teams is that the bottleneck is not funding or ideas. It is people. There are simply not enough physicists and engineers with the right training to fill the roles these companies need.

The implications extend well beyond computing. Consider what becomes possible when AI handles the cognitive busywork that currently occupies millions of talented people.

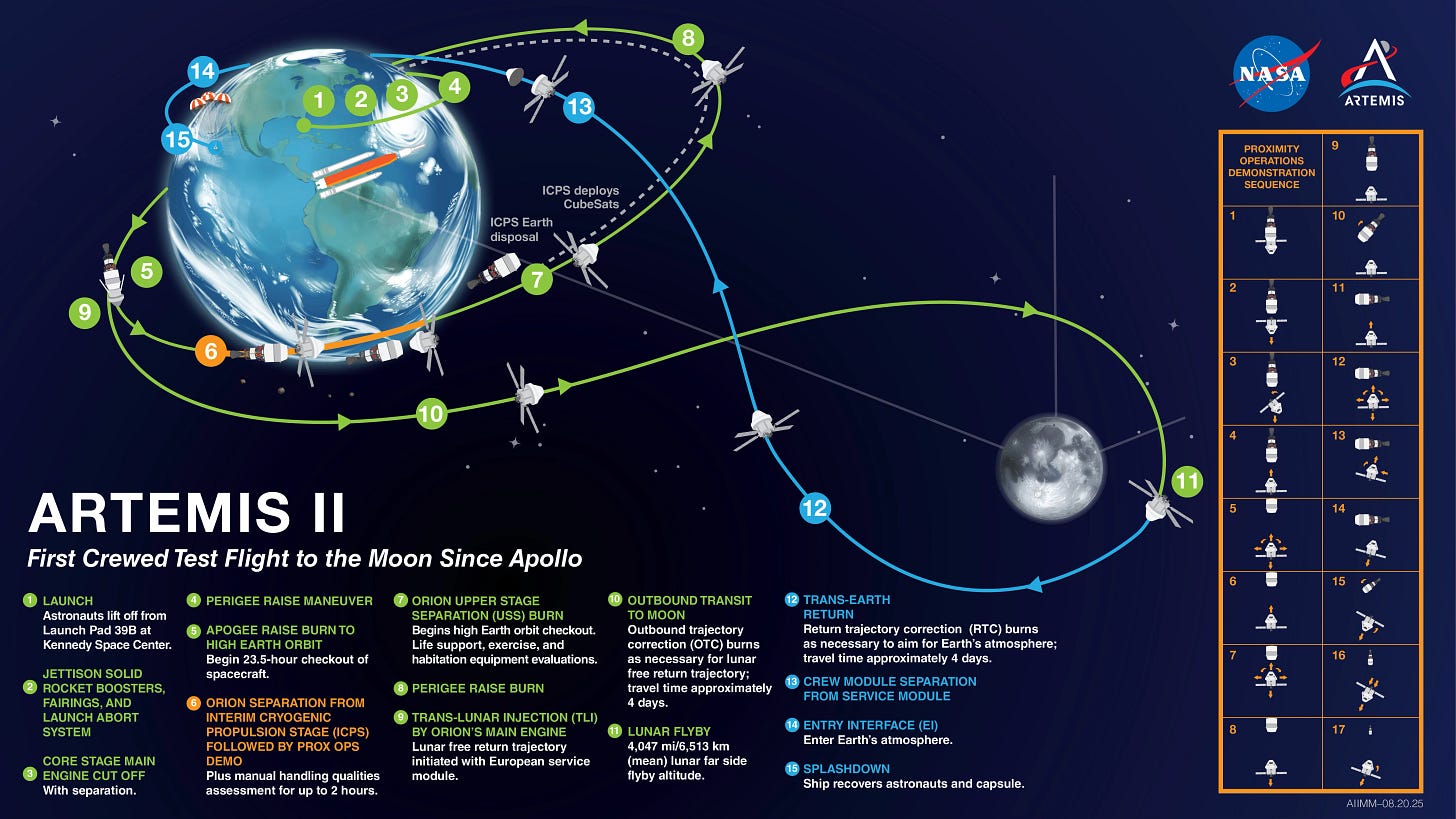

NASA’s Artemis programme is working to return humans to the Moon, something we have not accomplished since 1972. The fact that humanity went to the Moon with slide rules and then spent fifty years not going back while building increasingly sophisticated apps for food delivery is one of the more damning indictments of how we have allocated our collective intelligence. SpaceX is developing Starship with the explicit goal of making Mars missions feasible. Fusion energy, after decades as a punchline, has attracted over $9.8 billion in private investment, with companies like Commonwealth Fusion Systems, TAE Technologies, and Helion Energy pursuing reactor designs that demand expertise in plasma physics and superconducting magnet design. Even in biology, DeepMind’s AlphaFold showed that AI can predict protein structures, but translating those predictions into actual therapeutics still requires human researchers who understand cellular biology and clinical reality.

These are not problems that yield to better prompting strategies. They require scientists and engineers who can work at the boundary of known physics.

If this thesis is correct, the implications for education are profound. For two decades, universities have been under enormous pressure to produce job-ready software developers. Adjacent disciplines such as physics, mathematics, and electrical engineering saw enrolment stagnate as students pursued the economic gravity of Silicon Valley.

That calculus is now shifting. If AI can produce competent code, the premium on a computer science degree focused primarily on software development diminishes rapidly. But the premium on understanding quantum mechanics, thermodynamics, electromagnetism, and advanced mathematics only increases. These are the fields that underpin the physical systems AI cannot build on its own. They are also the fields that produce the kind of thinking that remains most resistant to automation: comfort with ambiguity, deep mathematical reasoning, and the ability to model systems from first principles.

My advice to anyone reading this, whether you are a student, a mid-career professional, or someone watching the AI wave with a growing sense of unease: start educating yourself about the new computing paradigms now. Quantum computing is not a distant curiosity. It is an emerging industry with a rapidly growing company landscape that desperately needs people who understand the science. Photonic and neuromorphic computing are on similar trajectories, creating demand for physicists and engineers who can think beyond the transistor. You do not need a PhD to begin. IBM offers free access to quantum hardware through the cloud, Intel has made neuromorphic development tools publicly available, and open-source photonic simulation frameworks are emerging from companies like Xanadu. Courses from MIT, Stanford, and others are available online. The skills gap is real, but the barrier to entry is not access. It is the willingness to engage with material that is genuinely difficult, physics and mathematics that cannot be shortcut by asking a chatbot. That difficulty is precisely what makes it valuable.

Physics departments that have spent years struggling to attract students may find themselves newly relevant. Materials science, long regarded as an unglamorous discipline, could see a surge in interest as the demand for novel substrates, better qubits, photonic interconnects, and neuromorphic architectures intensifies. The people who thrive in the next era will be those who went deep rather than broad, who invested in understanding the fundamental behaviour of nature rather than the latest JavaScript framework.

The conversation about AI and jobs has been dominated by anxiety, and understandably so. People will lose livelihoods. Industries will be disrupted. But alongside that necessary concern, there is a story that deserves more attention. For decades, we funnelled an enormous proportion of our best technical minds into the relatively narrow problem of making software. We built extraordinary digital infrastructure, but we also left some of the most important challenges in science and engineering understaffed and underfunded. We stopped going to the Moon. We delayed fusion by decades. We let quantum computing remain a curiosity while the people who could have advanced it were busy optimising ad-click algorithms.

AI is now solving the software problem. Not completely, not overnight, but with a trajectory that makes the direction unmistakable. The question is no longer whether machines can do this work. It is what humans will choose to do instead. The most hopeful answer is that we will finally, after a long and profitable detour, return to the hard stuff. The physics. The hardware. The grand engineering challenges. The fundamental questions about the nature of reality that we have been too busy writing code to properly pursue.

The software bracket is closing. What comes next could be extraordinary. But only if we have the courage to go deep.