The increasing autonomy of artificial intelligence agents makes it harder to ensure that their decisions are responsible and transparent, particularly once these systems begin to influence real-world outcomes. Eranga Bandara, Tharaka Hewa, and Ross Gore, from Old Dominion University and the University of Oulu, along with their colleagues, address this need with an agent architecture that prioritises explainability and accountability. In their system, multiple AI models independently process the same input and generate candidate solutions, exposing where the models are uncertain or disagree. A dedicated ‘reasoning agent’ then consolidates these outputs, enforcing predefined policies and mitigating biases to produce well-supported, auditable decisions, which improves robustness and trust in complex agentic workflows. The approach is a substantial step towards AI systems that are not only powerful and scalable but also demonstrably responsible and understandable.

Large Language Models, Prompting and Explainability

The work sits within a broad body of research on Large Language Models (LLMs): their applications, improvements, explainability, and integration with other technologies, including multi-agent systems and robotics. Healthcare applications feature prominently, spanning diagnostics for conditions such as Amyotrophic Lateral Sclerosis (ALS), PTSD, and dental diseases, as well as digital mental health. AI is also being explored to improve security and efficiency in 5G and emerging 6G networks, in some cases combined with blockchain and NFTs. LLM-powered multi-agent systems are a recurring theme, reflecting interest in building more complex and autonomous AI solutions. Work on Explainable AI (XAI) underlines the growing importance of understanding how models arrive at their decisions, particularly in sensitive areas such as text generation and healthcare. Related research also covers the H-reflex (Hoffmann reflex) and its use in neurological and musculoskeletal studies.

Agentic Consortium with Reasoning Consolidation

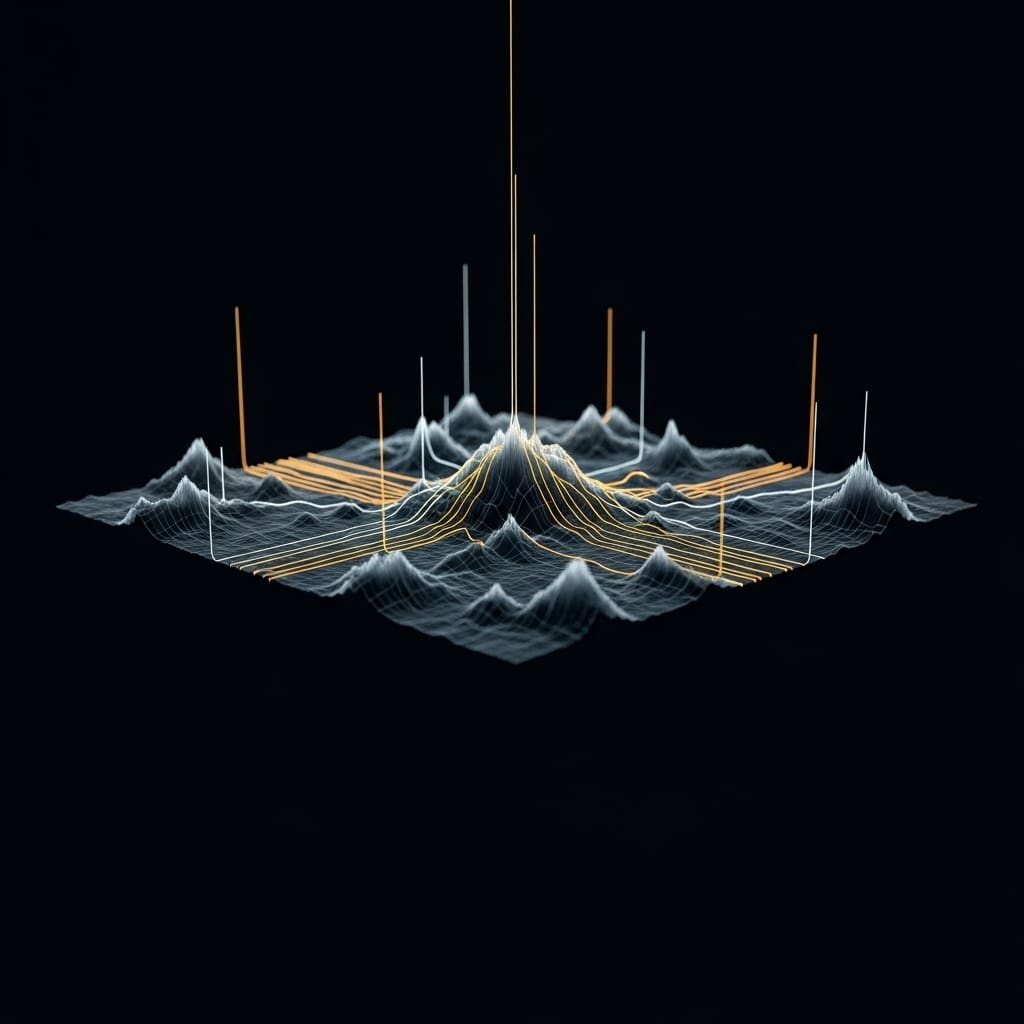

The research team engineered an agentic architecture that prioritizes responsible and explainable artificial intelligence, addressing the limited transparency and single-model fragility of existing autonomous systems. In this system, multiple Large Language Models (LLMs) and Vision Language Models (VLMs) act as a consortium: each independently generates a candidate output from the same input, which exposes uncertainty and disagreement among the models. Rather than relying on simple statistical aggregation, the team added a dedicated reasoning agent that performs structured consolidation. This agent compares the candidate outputs, enforces predefined policy constraints, and mitigates potential hallucinations or biases, producing auditable decisions backed by evidence from the model consortium. Explainability comes from preserving the intermediate outputs, so the reasoning process can be inspected in detail and the models can be compared against one another. Experiments deploying the architecture in real-world agentic workflows show gains in robustness and transparency: the consensus-driven reasoning process improves performance over single-model approaches while embedding responsibility and explainability.
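The fan-out step can be illustrated with a minimal Python sketch. It assumes each model is a plain function from an input string to an answer string; `run_consortium`, `ConsortiumRecord`, and the stub models are hypothetical names standing in for real LLM or VLM calls, which the article does not specify. The sketch only shows that every model sees the same input and that all candidates are kept for later inspection.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical model interface: any function mapping the shared input to an answer.
# Real LLM/VLM calls would sit behind this signature.
Model = Callable[[str], str]


@dataclass
class ConsortiumRecord:
    """Audit record preserving the shared input and every model's candidate output."""
    prompt: str
    candidates: Dict[str, str] = field(default_factory=dict)

    def distinct_answers(self) -> List[str]:
        # Unique candidates in first-seen order; more than one means the models disagree.
        seen: List[str] = []
        for answer in self.candidates.values():
            if answer not in seen:
                seen.append(answer)
        return seen

    def has_disagreement(self) -> bool:
        return len(self.distinct_answers()) > 1


def run_consortium(prompt: str, models: Dict[str, Model]) -> ConsortiumRecord:
    """Query every model independently on the same input and keep all outputs."""
    record = ConsortiumRecord(prompt=prompt)
    for name, model in models.items():
        # Each model receives only the shared input, never another model's answer.
        record.candidates[name] = model(prompt)
    return record


# Stub models standing in for an RF signal classification consortium (hypothetical labels).
models: Dict[str, Model] = {
    "model_a": lambda prompt: "QPSK",
    "model_b": lambda prompt: "QPSK",
    "model_c": lambda prompt: "16-QAM",
}
record = run_consortium("Classify the modulation of capture 17", models)
print(record.distinct_answers())   # ['QPSK', '16-QAM']
print(record.has_disagreement())   # True
```

Keeping `record.candidates` intact, rather than only a winning answer, is what makes cross-model comparison possible after the fact.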

LLM Coordination Reveals Reasoning Diversity and Uncertainty

The architecture changes how autonomous systems reason, plan, and execute complex tasks, aiming to combine scalability with responsible AI principles. At its core, it coordinates Large Language Models (LLMs), Vision Language Models (VLMs), tools, and external services within a framework designed for explainability and accountability. The system generates diverse candidate outputs from a shared input, explicitly exposing uncertainty and disagreement between models; comparing these alternative interpretations side by side is itself a source of explainability. A dedicated reasoning agent performs structured consolidation of the outputs, enforcing policy constraints and mitigating potential hallucinations or biases. This agent is the sole decision authority: it synthesizes a single consolidated result by discarding outlier, unsafe, or unverifiable content, grounds the final decision in the original input sources, and preserves traceability. Tests show that this coordination pattern reinforces areas of consensus and handles disagreement by lowering confidence or flagging uncertainty, making the system robust to the failure of any single model. The pattern has been implemented across diverse applications, including news podcast generation, neuromuscular reflex analysis, dental image interpretation, psychiatric diagnosis, clinical decision support, and RF signal classification.
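The consolidation bookkeeping described above (discarding unsafe or unverifiable candidates, lowering confidence on disagreement, flagging uncertainty) can be sketched in Python. This is a deliberately simplified, deterministic stand-in: the article describes the reasoning agent as going beyond simple statistical aggregation, whereas here a majority vote over policy-compliant answers replaces that reasoning. The `consolidate` function, the `is_allowed` policy check, and the 0.75 flagging threshold are all hypothetical choices for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Decision:
    answer: Optional[str]       # consolidated result, or None if nothing survived policy checks
    confidence: float           # share of policy-compliant candidates that agree with the answer
    flagged: bool               # True when confidence falls below the flagging threshold
    supporters: List[str]       # models whose candidate matches the answer
    outliers: List[str]         # compliant models that disagreed with the answer
    discarded: Dict[str, str]   # model name -> reason its candidate was dropped


def consolidate(
    candidates: Dict[str, str],
    is_allowed: Callable[[str], bool],
    flag_below: float = 0.75,
) -> Decision:
    """Simplified stand-in for the reasoning agent's consolidation step (majority vote)."""
    discarded = {n: "failed policy check" for n, a in candidates.items() if not is_allowed(a)}
    kept = {n: a for n, a in candidates.items() if n not in discarded}
    if not kept:
        # Nothing usable: return no answer and flag for human review.
        return Decision(None, 0.0, True, [], [], discarded)
    # most_common breaks ties by first-seen order, so the result is deterministic.
    answer, votes = Counter(kept.values()).most_common(1)[0]
    confidence = votes / len(kept)
    supporters = [n for n, a in kept.items() if a == answer]
    outliers = [n for n, a in kept.items() if a != answer]
    return Decision(answer, confidence, confidence < flag_below, supporters, outliers, discarded)


candidates = {
    "model_a": "QPSK",
    "model_b": "QPSK",
    "model_c": "16-QAM",
    "model_d": "UNVERIFIED: jammer",
}
decision = consolidate(candidates, is_allowed=lambda a: not a.startswith("UNVERIFIED"))
print(decision.answer, round(decision.confidence, 2), decision.flagged)  # QPSK 0.67 True
print(decision.discarded)  # {'model_d': 'failed policy check'}
```

A unanimous consortium yields confidence 1.0 and no flag, while the split above still produces an answer but flags it, mirroring the described behaviour of lowering confidence rather than hiding disagreement.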

Reasoning Agents Enhance Trustworthy Autonomy

This research presents a new architecture for building autonomous systems, termed the Responsible and Explainable Agent Architecture, which coordinates multiple large language models and vision-language models alongside dedicated tools and services. The team demonstrates that having these diverse models independently generate outputs, and then consolidating those outputs through a central reasoning agent, improves the reliability and trustworthiness of complex, multi-step tasks. Because the approach explicitly reveals where models are uncertain or disagree, its decision-making is more transparent than that of traditional autonomous systems. The system achieves explainability and responsibility by separating the generation of candidate outputs from their governance, which allows safety constraints and policy rules to be applied systematically. Evaluations across a range of applications, including news podcast creation, medical image analysis, and signal classification, demonstrate the architecture’s generalizability and its effectiveness in enhancing robustness and accountability.
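Separating generation from governance means policy rules can be written once and applied uniformly to every candidate, regardless of which model produced it. The Python sketch below assumes rules are named predicates over candidate text; the rule names (`cites_source`, `no_definitive_claims`, `max_length`), the `[source: ...]` citation marker, and the `govern` function are hypothetical illustrations, not rules taken from the paper.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A policy rule is a (name, predicate) pair; the predicate returns True when text complies.
Rule = Tuple[str, Callable[[str], bool]]


@dataclass
class GovernanceReport:
    approved: Dict[str, str]          # candidates that passed every rule
    violations: Dict[str, List[str]]  # model name -> names of the rules it failed


def govern(candidates: Dict[str, str], rules: List[Rule]) -> GovernanceReport:
    """Apply every rule to every candidate, independently of how it was generated."""
    approved: Dict[str, str] = {}
    violations: Dict[str, List[str]] = {}
    for name, text in candidates.items():
        failed = [rule_name for rule_name, check in rules if not check(text)]
        if failed:
            violations[name] = failed  # recorded so the rejection is auditable
        else:
            approved[name] = text
    return GovernanceReport(approved, violations)


# Hypothetical rules for a clinical decision-support setting.
rules: List[Rule] = [
    ("cites_source", lambda t: "[source:" in t),
    ("no_definitive_claims", lambda t: "definitely" not in t.lower()),
    ("max_length", lambda t: len(t) <= 200),
]
candidates = {
    "model_a": "Possible caries on the lower-left molar [source: image 2]",
    "model_b": "This is definitely caries.",
    "model_c": "Possible periapical lesion [source: image 2]",
}
report = govern(candidates, rules)
print(sorted(report.approved))  # ['model_a', 'model_c']
print(report.violations)        # {'model_b': ['cites_source', 'no_definitive_claims']}
```

Because the rules live outside the models, tightening a policy means editing the rule list rather than re-prompting or retraining any model.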

🗞 Towards Responsible and Explainable AI Agents with Consensus-Driven Reasoning

🧠 ArXiv: https://arxiv.org/abs/2512.21699