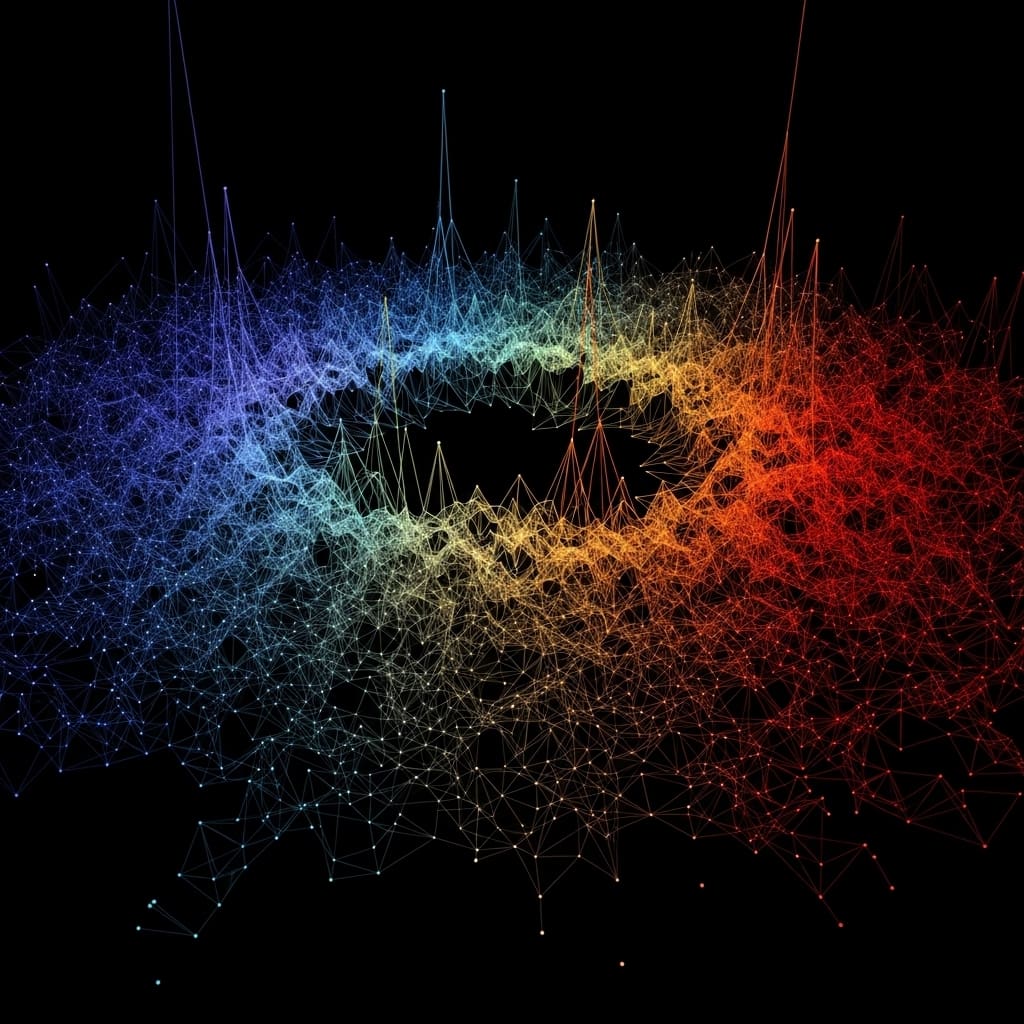

Researchers are increasingly focused on understanding the theoretical underpinnings of masked self-supervised learning (SSL), a dominant training paradigm in modern machine learning. Arie Wortsman (École Normale Supérieure, PSL & CNRS), Federica Gerace (University of Bologna), Bruno Loureiro (École Normale Supérieure, PSL & CNRS) and colleagues present an analysis of masked SSL objectives that moves beyond single vector-valued estimators to examine the joint, matrix-valued predictor obtained by aggregating predictions across many masking patterns. The work provides explicit mathematical expressions for the generalisation error and characterises the spectral structure of the learned predictor, revealing how masked SSL extracts structure from data. The authors also demonstrate a phase transition analogous to the Baik-Ben Arous-Péché (BBP) transition, and identify scenarios where masked SSL provably outperforms principal component analysis (PCA).

Generalisation error and spectral analysis of masked self-supervised learning in high dimensions reveal interesting trade-offs

Scientists have demonstrated a precise high-dimensional analysis of masked self-supervised learning (SSL) objectives, a training paradigm central to modern transformer models. The research team developed a method for analysing the generalization error and spectral structure of learned predictors, focusing on scenarios where the number of samples scales with the ambient dimension.

This work establishes explicit expressions for generalization error, revealing how masked SSL extracts structural information from data. The study employed a novel approach, examining a matrix-valued predictor generated by aggregating predictions across numerous masking patterns, rather than a conventional single vector-valued estimator.

Researchers analysed this predictor in the proportional regime, where sample size grows alongside data dimensionality, allowing for a detailed characterisation of its behaviour. Furthermore, the team identified specific structured regimes where masked SSL demonstrably outperforms Principal Component Analysis (PCA), highlighting the advantages of SSL objectives over classical unsupervised methods.

These findings elucidate the mechanisms by which masked SSL leverages data correlations and provide a principled comparison with spectral approaches. For first-order autoregressive models, the authors show that masked self-supervised regression can strictly dominate PCA when the number of directions retained by PCA is not close to the dimension.

The work opens new avenues for understanding and optimising self-supervised learning techniques, with potential applications in areas such as natural language processing and computer vision, particularly in data-scarce environments. This breakthrough establishes a foundation for improving the efficiency and effectiveness of training transformer models.

Asymptotic performance of ridge-regularised linear predictors in high-dimensional masked self-supervision is surprisingly robust

Scientists developed a precise, high-dimensional analysis of masked self-supervised learning (SSL) objectives, focusing on the proportional regime where the number of samples scales with the ambient dimension. The study considered real-valued sequence data and constructed a family of ridge-regularized linear predictors, aggregated into a single matrix A that acts on the data matrix X as X ↦ XA, under the constraint that no coordinate predicts itself.
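The construction described above can be illustrated with a minimal NumPy sketch. It assumes the simplest masking scheme, in which one coordinate is hidden at a time and predicted from all the others by ridge regression; the function name `masked_ridge_predictor`, the penalty `lam`, and the 1/n normalisation are illustrative choices, not taken from the paper, whose masking patterns may be more general.

```python
import numpy as np

def masked_ridge_predictor(X, lam=1.0):
    """Assemble a d x d matrix A whose column j holds the ridge weights
    that predict coordinate j from all other coordinates.

    Assumption (not from the paper): one coordinate is masked at a time.
    The diagonal of A stays zero, so no coordinate predicts itself.
    """
    n, d = X.shape
    A = np.zeros((d, d))
    for j in range(d):
        others = np.delete(np.arange(d), j)   # indices of the visible coordinates
        X_rest = X[:, others]                 # n x (d-1) design matrix
        gram = X_rest.T @ X_rest / n + lam * np.eye(d - 1)
        weights = np.linalg.solve(gram, X_rest.T @ X[:, j] / n)
        A[others, j] = weights                # row index = input coordinate
    return A
```

With this convention, column j of `X @ A` is the prediction of the masked coordinate j, which matches the map X ↦ XA in the text.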

Researchers derived a sharp asymptotic characterization of training and generalization performance for this aggregate predictor A, as n and d approach infinity with the ratio n/d fixed at a constant α > 0. Working with linear predictors trained on masked data, the team established a high-dimensional deterministic equivalent for the matrix-valued predictor A.

This approach characterises how self-supervised learning encodes and exploits the underlying data geometry, and it relies on the analysis of a random matrix formed by aggregating correlated predictors. For first-order autoregressive models, the authors proved that masked self-supervised regression can exceed PCA performance when the number of directions retained by PCA is not close to the dimension. The analysis reveals the mechanisms by which SSL leverages sequential structure and elucidates the inductive bias induced by strong temporal correlations, providing a principled comparison between SSL and classical spectral approaches.
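To make the role of sequential structure concrete, the sketch below computes the population-optimal masked linear predictor for a stationary, unit-variance first-order autoregressive process with covariance ρ^|i-j|. This is the idealised limit with no ridge penalty and infinitely many samples, not the finite-sample estimator analysed in the paper, and the function names are hypothetical. It uses the standard identity that the best linear predictor of one coordinate from the rest has weights given by the negated entries of the precision matrix, normalised by its diagonal.

```python
import numpy as np

def ar1_covariance(d, rho):
    """Covariance of a stationary, unit-variance AR(1) sequence: rho^|i-j|."""
    idx = np.arange(d)
    return rho ** np.abs(idx[:, None] - idx[None, :])

def population_masked_predictor(Sigma):
    """Best linear predictor of each coordinate from all the others.

    With Theta = inv(Sigma), the weight of coordinate i when predicting
    coordinate j is -Theta[i, j] / Theta[j, j]; the diagonal is set to zero.
    """
    Theta = np.linalg.inv(Sigma)
    A = -Theta / np.diag(Theta)[None, :]   # divide column j by Theta[j, j]
    np.fill_diagonal(A, 0.0)
    return A
```

Because the precision matrix of an AR(1) process is tridiagonal, the resulting predictor uses only the two neighbours of each masked coordinate, with weight ρ/(1+ρ²) for interior positions. This gives one way to see how a masked objective can exploit temporal correlations directly.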

Generalization error characterization reveals a BBP phase transition in masked self-supervised learning, suggesting a critical regime for representation learning

Scientists developed a precise high-dimensional analysis of masked self-supervised learning (SSL) objectives, focusing on scenarios where the number of samples scales with the ambient dimension. The team measured generalization performance in a proportional regime, where the ratio of samples to dimension remained constant, denoted as α > 0.

Results show that the asymptotic limit of training and generalization errors depends solely on the population covariance of the data, providing an interpretable link between data structure and successful generalization. A high-dimensional deterministic equivalent was established for the matrix-valued aggregate predictor, allowing characterization of how self-supervised learning encodes data geometry.

Further analysis involved spiked covariance models, where principal component analysis (PCA) was found to strictly outperform masked SSL, with a BBP-type transition observed in the asymptotic spectrum of the predictor at a threshold consistent with that of the sample covariance matrix. Conversely, for first-order autoregressive models, masked self-supervised regression provably outperformed PCA when the number of directions retained by PCA was not close to the dimension.
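For readers unfamiliar with the BBP transition, the following sketch illustrates the classical result for the sample covariance matrix, which is the reference point the article mentions. It does not reproduce the paper's result for the masked predictor. It assumes a rank-one spiked covariance Σ = I + θvvᵀ with aspect ratio γ = d/n; the symbols θ and γ and both function names are illustrative. In this classical setting the top sample eigenvalue separates from the bulk only when θ exceeds √γ.

```python
import numpy as np

def bbp_top_eigenvalue(theta, gamma):
    """Limiting top eigenvalue of the sample covariance when
    Sigma = I + theta * v v^T and d/n -> gamma (classical BBP result)."""
    if theta > np.sqrt(gamma):
        return (1 + theta) * (1 + gamma / theta)   # outlier detached from the bulk
    return (1 + np.sqrt(gamma)) ** 2               # stuck at the bulk edge

def empirical_top_eigenvalue(theta, n, d, seed=0):
    """Top eigenvalue of X^T X / n for Gaussian data with the spike
    placed along the first coordinate axis."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((n, d))
    X[:, 0] *= np.sqrt(1 + theta)                  # variance 1 + theta along v = e_1
    S = X.T @ X / n
    return np.linalg.eigvalsh(S)[-1]               # eigvalsh returns ascending order
```

Comparing `empirical_top_eigenvalue(theta, n, d)` with `bbp_top_eigenvalue(theta, d / n)` for values of `theta` on either side of `sqrt(d / n)` shows the transition numerically: below the threshold the top eigenvalue carries no trace of the spike, above it the eigenvalue moves away from the bulk edge.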

Measurements confirm that masked SSL can achieve superior performance in regimes with strong temporal correlations. The study identified structured regimes where masked SSL provably outperforms PCA, highlighting the potential advantages of SSL objectives over classical unsupervised methods. This breakthrough delivers a principled comparison between self-supervised regression and spectral approaches, revealing their respective strengths and limitations in high-dimensional settings and elucidating the mechanisms by which masked SSL exploits correlations within training data.

Masked self-supervision exhibits a BBP phase transition for improved signal recovery, similar to compressed sensing

Scientists have developed a high-dimensional analysis of masked self-supervised learning (SSL) objectives, focusing on the proportional regime where sample numbers scale with dimensionality. The findings highlight a potential advantage of SSL objectives over classical unsupervised methods like principal component analysis (PCA), demonstrating scenarios where masked SSL provably outperforms PCA.

This work was conducted using a simplified model of self-supervised ridge regression, allowing for asymptotic limits of generalization error and spectral distribution to be derived. The authors acknowledge limitations related to the simplified model and the specific statistical models, spiked covariance and an autoregressive process, used for testing. Future research could explore these findings in more complex scenarios and with different data types.

👉 More information

🗞 A Random Matrix Theory of Masked Self-Supervised Regression

🧠 ArXiv: https://arxiv.org/abs/2601.23208