Scientists are tackling the persistent problem of 3D inconsistency in video generation, where visually appealing outputs often suffer from object deformation and spatial drift. Hongyang Du, Junjie Ye, and Xiaoyan Cong from Brown University, together with Runhao Li, Jingcheng Ni, Aman Agarwal, and colleagues, present VideoGPA (Video Geometric Preference Alignment), a framework that addresses this challenge by explicitly incentivising geometric coherence. Their data-efficient, self-supervised method uses a geometry foundation model to guide video diffusion models via Direct Preference Optimization (DPO). It improves temporal stability, physical plausibility, and motion coherence without any human-labelled data. This work is a significant step towards generating realistic, geometrically consistent videos.

The research addresses a fundamental limitation of current video diffusion models (VDMs), which, despite producing visually impressive results, often struggle to maintain accurate 3D structure, leading to object deformation and spatial drift.

The researchers hypothesised that standard denoising objectives provide no explicit incentive for geometric coherence, and developed a data-efficient, self-supervised approach to correct this. VideoGPA relies on the principle of re-projection consistency: a geometry foundation model reconstructs the scene and renders a 3D-consistent version of the generated video as a reference. The discrepancy between the generated video and this rendered reference then serves as a metric for 3D consistency. The larger the gap, the less consistent the underlying geometry.
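A minimal sketch of the discrepancy idea, assuming the rendered reference is already available as an array aligned frame by frame with the generated video. The function name `reprojection_consistency_score` is hypothetical, and only a mean-squared-error term is shown; the LPIPS term the authors also use requires a pretrained perceptual network and is omitted here.

```python
import numpy as np


def reprojection_consistency_score(generated: np.ndarray, rendered: np.ndarray) -> float:
    """Hypothetical 3D-consistency error: MSE between generated frames and their re-renderings.

    Both videos are arrays of shape (T, H, W, C) with values in [0, 1].
    Lower is better: a perfectly 3D-consistent video is reproduced exactly.
    """
    if generated.shape != rendered.shape:
        raise ValueError("generated and rendered videos must have the same shape")
    # Per-frame MSE, then averaged over time so every frame counts equally.
    per_frame_mse = np.mean((generated - rendered) ** 2, axis=(1, 2, 3))
    return float(np.mean(per_frame_mse))


rng = np.random.default_rng(0)
video = rng.random((8, 32, 32, 3))
drifted = np.clip(video + 0.1 * rng.standard_normal(video.shape), 0.0, 1.0)
print(reprojection_consistency_score(video, video))    # 0.0
print(reprojection_consistency_score(video, drifted))  # small positive error
```

Averaging per frame first keeps the score comparable across videos of different resolutions, though any such normalisation choice here is illustrative rather than the authors' own.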

This work introduces a novel 3D consistency metric and demonstrates that, with approximately 2,500 preference pairs and lightweight post-training (LoRA fine-tuning of only 1% of the model parameters), VideoGPA substantially improves geometric coherence and temporal stability. Experiments consistently show that VideoGPA outperforms state-of-the-art baselines in both image-to-video and text-to-video settings, improving performance on multiple geometric consistency and perceptual metrics.
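LoRA keeps each pretrained weight frozen and trains only a low-rank update, which is why so few parameters change during post-training. The sketch below is a generic NumPy illustration of that technique, not the authors' configuration: the rank, the scaling `alpha`, the layer size, and the class name `LoRALinear` are all assumptions, and the paper's 1% figure refers to the whole model rather than to a single layer.

```python
import numpy as np


class LoRALinear:
    """Hypothetical LoRA-wrapped linear layer: y = W x + (alpha / r) * B A x.

    W is frozen; only the low-rank factors A (r x d_in) and B (d_out x r) are trained.
    """

    def __init__(self, weight: np.ndarray, rank: int, alpha: float = 1.0, seed: int = 0):
        d_out, d_in = weight.shape
        rng = np.random.default_rng(seed)
        self.weight = weight                               # frozen base weight
        self.A = 0.01 * rng.standard_normal((rank, d_in))  # trainable down-projection
        self.B = np.zeros((d_out, rank))                   # trainable up-projection, zero-init
        self.scale = alpha / rank

    def __call__(self, x: np.ndarray) -> np.ndarray:
        # x: (..., d_in) -> (..., d_out); the low-rank path contributes nothing while B = 0.
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def trainable_fraction(self) -> float:
        # Share of this layer's parameters (base + adapter) that LoRA actually trains.
        trainable = self.A.size + self.B.size
        return trainable / (trainable + self.weight.size)


layer = LoRALinear(np.random.default_rng(1).standard_normal((1024, 1024)), rank=4)
x = np.ones((2, 1024))
print(np.allclose(layer(x), x @ layer.weight.T))  # True: the adapter is a no-op at init
print(f"{layer.trainable_fraction():.2%}")         # under 1% for this layer
```

Zero-initialising `B` means fine-tuning starts exactly from the base model, a common LoRA design choice that helps preserve the original visual quality early in training.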

The study unveils a pathway towards more realistic and physically plausible video generation, with potential applications in areas such as Embodied AI, Novel View Synthesis, and physics simulation. By distilling 3D knowledge into video diffusion models, the research establishes a critical milestone in modelling the 3D world and opens new avenues for creating geometrically sound and temporally stable video content.

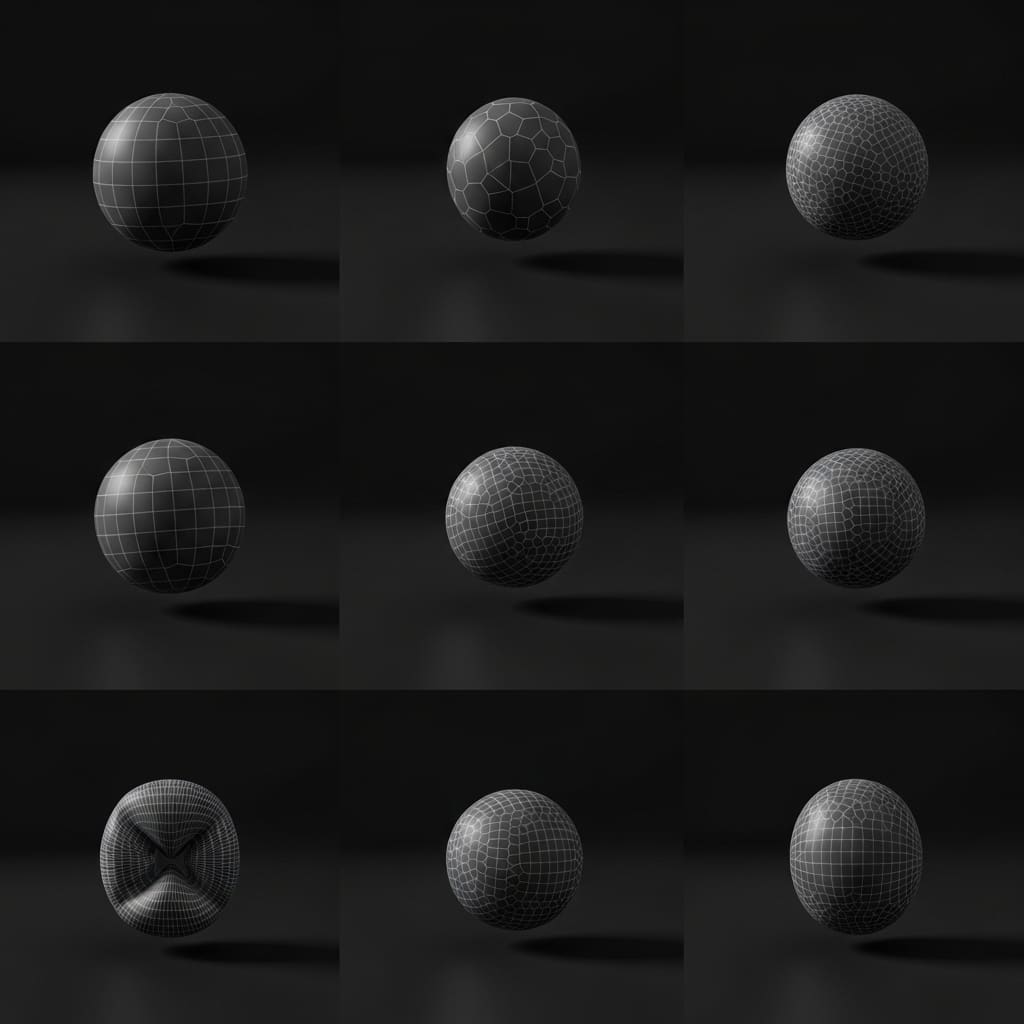

Extracting 3D structure and preference signals for geometrically consistent video generation is a challenging problem

Scientists introduced VideoGPA, a data-efficient self-supervised framework designed to enhance 3D structural consistency in video diffusion models. The work began by employing a geometric foundation model to extract 3D structure and camera motion from generated videos.
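Re-projection rests on a simple geometric step: once 3D points and per-frame camera poses have been recovered, each frame can be re-rendered by projecting those points through the camera. The pinhole projection below is a minimal sketch of that step only. The function name and the world-to-camera pose convention are assumptions, and a real renderer would also need occlusion handling and rasterisation (for example, a z-buffer), which are omitted.

```python
import numpy as np


def project_points(points_world: np.ndarray, K: np.ndarray, R: np.ndarray, t: np.ndarray):
    """Project 3D world points into pixel coordinates with a pinhole camera.

    points_world: (N, 3); K: (3, 3) intrinsics; R: (3, 3) and t: (3,) map world to camera coordinates.
    Returns (N, 2) pixel coordinates and (N,) depths along the camera's viewing axis.
    """
    points_cam = points_world @ R.T + t          # rigid transform into the camera frame
    depth = points_cam[:, 2]
    pixels_h = points_cam @ K.T                  # homogeneous image coordinates
    pixels = pixels_h[:, :2] / pixels_h[:, 2:3]  # perspective divide
    return pixels, depth


K = np.array([[100.0, 0.0, 32.0],
              [0.0, 100.0, 32.0],
              [0.0, 0.0, 1.0]])
points = np.array([[0.0, 0.0, 2.0],
                   [1.0, 0.0, 2.0]])
pixels, depth = project_points(points, K, np.eye(3), np.zeros(3))
print(pixels)  # pixel coordinates (32, 32) and (82, 32)
```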

Next, a 3D consistency score was computed from how well the original frames could be reconstructed from the extracted 3D structure, using both Mean Squared Error (MSE) and LPIPS. Preferences derived from this score were then optimised with the Direct Preference Optimization (DPO) framework, which builds on the Bradley-Terry preference model. Given a context $c$, the probability that a preferred completion $x_w$ is ranked above a dispreferred completion $x_l$ is modelled as

$$P(x_w \succ x_l \mid c) = \sigma\big(r(x_w, c) - r(x_l, c)\big),$$

where $r$ is a reward function and $\sigma$ is the logistic sigmoid.
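The Bradley-Terry probability used by DPO can be evaluated directly from two scalar rewards. This is a minimal sketch; the function names are illustrative, and the rewards are plain numbers rather than the outputs of any particular model.

```python
import math


def sigmoid(z: float) -> float:
    # Numerically stable logistic function (avoids overflow in math.exp for large |z|).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def bradley_terry_prob(reward_w: float, reward_l: float) -> float:
    """P(x_w preferred over x_l | c) = sigmoid(r(x_w, c) - r(x_l, c))."""
    return sigmoid(reward_w - reward_l)


print(bradley_terry_prob(1.0, 1.0))  # 0.5: equal rewards express no preference
print(bradley_terry_prob(3.0, 1.0))  # about 0.88
```

Only the reward difference matters, so adding the same constant to both rewards leaves the probability unchanged.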

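The researchers reparameterised the reward using the log-likelihood ratio between the policy $\pi_\theta$ and a reference policy $\pi_{\text{ref}}$:

$$r(x, c) = \beta \log \frac{\pi_\theta(x \mid c)}{\pi_{\text{ref}}(x \mid c)} + \beta \log Z(c).$$

Substituting this into the Bradley-Terry model cancels the partition term $\beta \log Z(c)$, which depends only on $c$, and yields the DPO objective

$$\mathcal{L}_{\text{DPO}} = -\mathbb{E}_{(c, x_w, x_l)}\left[\log \sigma\left(\beta \log \frac{\pi_\theta(x_w \mid c)}{\pi_{\text{ref}}(x_w \mid c)} - \beta \log \frac{\pi_\theta(x_l \mid c)}{\pi_{\text{ref}}(x_l \mid c)}\right)\right].$$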
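The DPO objective can be computed from four log-probabilities per preference triple. The sketch below assumes those log-probabilities are already available as arrays; the function name and the default `beta=0.1` are illustrative assumptions, not values from the paper.

```python
import numpy as np


def dpo_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta: float = 0.1) -> float:
    """Batch-averaged DPO loss from log-probabilities.

    logp_*: log pi_theta(x|c) for preferred (w) and dispreferred (l) samples;
    ref_logp_*: the same quantities under the frozen reference policy pi_ref.
    """
    logp_w, logp_l = np.asarray(logp_w, float), np.asarray(logp_l, float)
    ref_logp_w, ref_logp_l = np.asarray(ref_logp_w, float), np.asarray(ref_logp_l, float)
    # Implicit reward margin; beta * log Z(c) appears in both rewards and cancels.
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    # -log(sigmoid(m)) == log(1 + exp(-m)), computed stably via logaddexp.
    return float(np.mean(np.logaddexp(0.0, -margin)))


zeros = np.zeros(4)
print(dpo_loss(zeros, zeros, zeros, zeros))                          # log(2) ≈ 0.693 when policy == reference
print(dpo_loss(zeros + 2.0, zeros - 2.0, zeros, zeros) < np.log(2))  # True
```

The loss falls below $\log 2$ only when the policy raises the likelihood of $x_w$ relative to $x_l$ more than the reference does.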

Building on diffusion transformers that use the v-prediction parameterisation, the study adapted the Diffusion-DPO framework to explicitly steer the model towards geometrically consistent manifolds. Because exact likelihoods are intractable for diffusion models, the log-probability ratio is expressed through the difference in score-matching losses:

$$\log \frac{\pi_\theta(x \mid c)}{\pi_{\text{ref}}(x \mid c)} \propto -\mathbb{E}_{t, \epsilon}\left[\lVert \epsilon - \epsilon_\theta(x_t, t, c) \rVert_2^2 - \lVert \epsilon - \epsilon_{\text{ref}}(x_t, t, c) \rVert_2^2\right],$$

where $x_t$ is the noisy latent at timestep $t$ and $\epsilon$ is the sampled Gaussian noise. With this alignment, the method improves temporal stability, physical plausibility, and motion coherence, outperforming state-of-the-art baselines with only a small number of preference pairs.
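Plugging the score-matching ratio into the DPO objective gives a pairwise loss over denoising errors. This is a minimal NumPy sketch under several assumptions: it follows the $\epsilon$-form written above (with v-prediction the targets in practice may be velocities instead), folds any timestep weighting into `beta`, and takes model predictions as precomputed arrays. The function name and `beta=1.0` are hypothetical.

```python
import numpy as np


def diffusion_dpo_loss(eps_w, eps_l, pred_w, pred_l, ref_pred_w, ref_pred_l, beta: float = 1.0) -> float:
    """Diffusion-DPO-style loss for a batch of preference pairs at one sampled timestep.

    eps_*: Gaussian noise added to the preferred (w) / dispreferred (l) latents, shape (B, ...).
    pred_*: noise predicted by the trained model; ref_pred_*: by the frozen reference model.
    """
    def per_sample_mse(a, b):
        # Mean squared error over all non-batch axes -> shape (B,)
        return np.mean((a - b) ** 2, axis=tuple(range(1, a.ndim)))

    # Positive delta: the trained model denoises this sample worse than the reference.
    delta_w = per_sample_mse(eps_w, pred_w) - per_sample_mse(eps_w, ref_pred_w)
    delta_l = per_sample_mse(eps_l, pred_l) - per_sample_mse(eps_l, ref_pred_l)
    # The log-ratio is proportional to -delta, so the DPO margin is -beta * (delta_w - delta_l).
    margin = -beta * (delta_w - delta_l)
    return float(np.mean(np.logaddexp(0.0, -margin)))


rng = np.random.default_rng(0)
shape = (2, 4, 8, 8)  # hypothetical (batch, channels, height, width) latents
eps_w, eps_l = rng.standard_normal(shape), rng.standard_normal(shape)
ref_w = eps_w + 0.5 * rng.standard_normal(shape)
ref_l = eps_l + 0.5 * rng.standard_normal(shape)
print(diffusion_dpo_loss(eps_w, eps_l, ref_w, ref_l, ref_w, ref_l))              # log(2) when policy == reference
print(diffusion_dpo_loss(eps_w, eps_l, eps_w, ref_l, ref_w, ref_l) < np.log(2))  # True: better on x_w
```

Minimising this loss pushes the model to denoise geometrically consistent samples better than the reference does, and inconsistent ones relatively worse.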

Geometry-guided preference optimisation significantly improves 3D consistency in generated videos

Scientists have developed VideoGPA, a framework for enhancing 3D consistency in video generation models. It targets a fundamental limitation of recent video diffusion models, which, despite producing visually impressive results, often struggle to maintain accurate 3D structure, leading to object deformation and spatial drift.

The team hypothesised that standard denoising objectives lack explicit incentives for geometric coherence and proposed a solution that leverages geometry foundation models. They measured 3D consistency with a new metric based on re-projection consistency, in which a geometry foundation model renders a 3D-consistent version of the video as a reference.

Discrepancies between the generated video and the rendered reference then serve as a proxy for geometric validity. Results show substantial improvements in temporal stability, physical plausibility, and motion coherence, obtained with only about 2,500 preference pairs and LoRA fine-tuning of 1% of the model parameters.

Experiments show that VideoGPA consistently outperforms state-of-the-art baselines in both image-to-video and text-to-video settings. It improves geometric coherence and temporal stability while preserving the base model's visual quality and motion realism. These results suggest that the framework effectively distills 3D knowledge into video diffusion models, aligning generation towards physically consistent geometry.

The reconstruction-based 3D consistency metric distinguishes samples with high structural integrity from those with low structural integrity. Because these judgements come from geometry rather than from people, the method aligns the generative distribution towards inherent 3D consistency without requiring any human annotation.
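The article does not describe exactly how candidate videos are paired, so the following is an assumption: generate several videos per prompt, score each with a reconstruction-based consistency error, and take the most and least consistent as $(x_w, x_l)$. The function name `build_preference_pair` and the `min_gap` filter are hypothetical.

```python
from typing import Optional, Sequence, Tuple, TypeVar

T = TypeVar("T")


def build_preference_pair(
    samples: Sequence[T], errors: Sequence[float], min_gap: float = 0.0
) -> Optional[Tuple[T, T]]:
    """Return (preferred, dispreferred) among videos generated for the same prompt.

    errors are 3D-consistency errors (lower = more consistent). Pairs whose error gap
    is not larger than min_gap are rejected (None), since their ranking is unreliable.
    """
    if len(samples) < 2 or len(samples) != len(errors):
        raise ValueError("need at least two samples and one error per sample")
    best = min(range(len(samples)), key=lambda i: errors[i])
    worst = max(range(len(samples)), key=lambda i: errors[i])
    if errors[worst] - errors[best] <= min_gap:
        return None
    return samples[best], samples[worst]


print(build_preference_pair(["a", "b", "c"], [0.3, 0.1, 0.9]))              # ('b', 'c')
print(build_preference_pair(["a", "b", "c"], [0.3, 0.1, 0.9], min_gap=1.0))  # None
```

Rejecting near-ties is one plausible way to keep a small preference set (on the order of the roughly 2,500 pairs reported) informative, but it is a design choice of this sketch, not something the source states.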

Results indicate that geometric failures in current video generators stem largely from objective misalignment rather than architectural limitations, and that they can be mitigated through lightweight post-training alignment. The authors acknowledge one limitation: feed-forward geometric reconstruction is computationally demanding, particularly for longer video sequences. Progress in lightweight geometric foundation models could ease this cost and extend the framework to longer videos.

👉 More information

🗞 VideoGPA: Distilling Geometry Priors for 3D-Consistent Video Generation

🧠 ArXiv: https://arxiv.org/abs/2601.23286