Researchers are tackling the challenge of translating multimodal understanding into effective robotic action. Jinhui Ye and Fangjing Wang (Southern University of Science and Technology) and Ning Gao (Shanghai AI Laboratory), together with Yu et al., present ST4VLA, a dual-system Vision-Language-Action (VLA) framework. The work introduces Spatially Guided Training, which aligns action learning more closely with the spatial reasoning of vision-language models (VLMs). ST4VLA combines spatial grounding pre-training with spatially guided action post-training. It improves robot performance on the Google Robot and WidowX Robot benchmarks, sets new state-of-the-art results, and shows better generalisation and robustness in real-world scenarios.

Spatial Grounding Pre-training for Enhanced Robot Manipulation

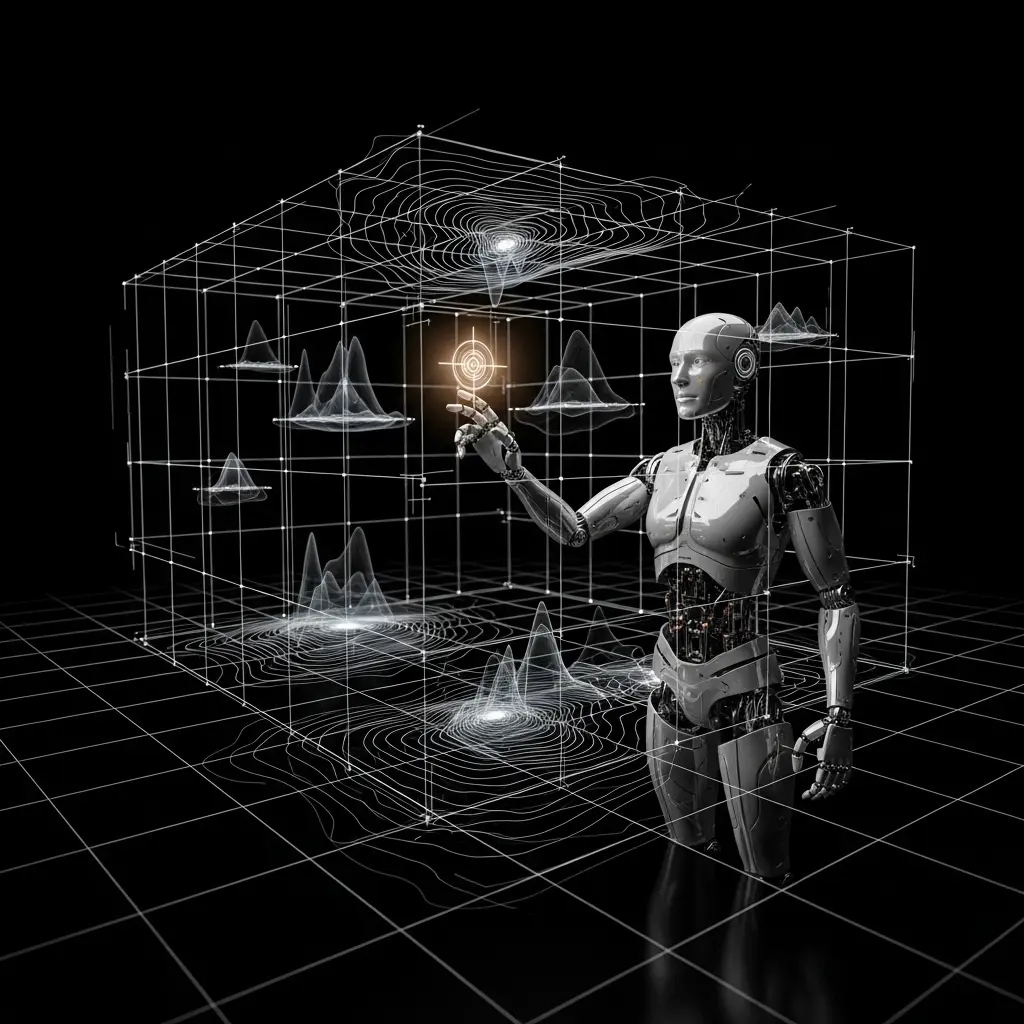

Scientists have developed a new framework, ST4VLA, to improve how vision-language models control robots performing physical tasks. The approach targets the gap between abstract linguistic instructions and grounded physical execution. It helps robots work out not only what to do, but also where and how to act in three-dimensional space.

ST4VLA trains in two stages. The first, spatial grounding pre-training, gives the model transferable spatial knowledge by teaching it to predict points, bounding boxes, and trajectories from both large-scale web data and robot-specific datasets. The second, spatially guided action post-training, encourages the model to generate richer spatial information and uses spatial prompting to guide action generation.
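
The article does not say how point, box, and trajectory targets are presented to the model. One common pattern for VLMs is to write the coordinates out as text, so that grounding can be trained with ordinary next-token prediction. That pattern is assumed here, not taken from the paper. The sketch below illustrates it. `GroundingSample`, `serialize_target`, `to_chat_pair`, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[int, int]  # (x, y) in pixels


@dataclass
class GroundingSample:
    """Hypothetical grounding example: an image id, a text query and a spatial target."""
    image_id: str
    query: str
    kind: str            # "point", "box" or "trajectory"
    coords: List[Point]  # one point, two box corners, or several waypoints


def serialize_target(sample: GroundingSample) -> str:
    """Render the spatial target as plain text so it can be learned as tokens."""
    if sample.kind == "point":
        if len(sample.coords) != 1:
            raise ValueError("a point target needs exactly one coordinate")
        x, y = sample.coords[0]
        return f"[{x}, {y}]"
    if sample.kind == "box":
        if len(sample.coords) != 2:
            raise ValueError("a box target needs two corners")
        (x1, y1), (x2, y2) = sample.coords
        return f"[{x1}, {y1}, {x2}, {y2}]"
    if sample.kind == "trajectory":
        if len(sample.coords) < 2:
            raise ValueError("a trajectory needs at least two waypoints")
        return "[" + ", ".join(f"[{x}, {y}]" for x, y in sample.coords) + "]"
    raise ValueError(f"unknown target kind: {sample.kind}")


def to_chat_pair(sample: GroundingSample) -> dict:
    """Build a (prompt, answer) pair; the prompt template is illustrative only."""
    prompt = f"<image:{sample.image_id}> Give the {sample.kind} for: {sample.query}"
    return {"prompt": prompt, "answer": serialize_target(sample)}


box = GroundingSample("img_0", "the red mug", "box", [(120, 80), (200, 160)])
print(to_chat_pair(box))
```

With this representation, web grounding data and robot data share one output format. The same model can then learn from both sources.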

This design preserves spatial understanding while the policy is being learned and keeps the spatial and action objectives optimised consistently. In empirical evaluations, ST4VLA delivers substantial gains over standard VLA models. These findings suggest that scalable, spatially guided training is a promising path towards robust and generalisable robot learning.
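
The article says ST4VLA optimises its spatial and action objectives consistently, but it gives no loss formula. As a generic illustration only, the sketch below combines an action loss with a weighted spatial loss in a single update. The mean-squared-error terms and the `spatial_weight` value are assumptions. The article also notes that naive co-training can collapse, which is why ST4VLA additionally conditions the action expert through spatial prompting. That part is not modelled here.

```python
from typing import Dict, Sequence


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error between two non-empty, equal-length vectors."""
    if len(pred) != len(target) or not pred:
        raise ValueError("pred and target must be non-empty and equal length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def joint_loss(
    spatial_pred: Sequence[float],
    spatial_target: Sequence[float],
    action_pred: Sequence[float],
    action_target: Sequence[float],
    spatial_weight: float = 0.5,  # hypothetical weighting, not from the paper
) -> Dict[str, float]:
    """Sum the action loss and a weighted spatial loss so one step optimises both."""
    l_spatial = mse(spatial_pred, spatial_target)
    l_action = mse(action_pred, action_target)
    return {
        "spatial": l_spatial,
        "action": l_action,
        "total": l_action + spatial_weight * l_spatial,
    }


# Toy numbers: a predicted 2D point and a 3D action, each compared with its target.
print(joint_loss([0.5, 0.5], [0.4, 0.6], [0.1, 0.0, -0.2], [0.1, 0.1, -0.1]))
```

Keeping a spatial term in the objective is one way to stop the grounding skill from being overwritten while the action head is trained.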

Spatial Prior Acquisition and Spatially Guided Action Refinement

ST4VLA is a two-stage training pipeline that integrates spatial priors into vision-language-action learning for robotic control. In the first stage, spatial grounding pre-training, the VLM planner acquires transferable spatial priors in the form of point, box, and trajectory predictions.

This stage combines web-scale multimodal grounding data with robot-specific datasets. It gives the model affordance grounding, localisation, and trajectory reasoning capabilities. In the second stage, spatially guided action post-training, the action expert is conditioned on these pre-trained spatial priors through lightweight spatial prompting.
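
One plausible reading of "lightweight spatial prompting" is that it changes what the planner is asked, not the model architecture. A minimal sketch under that assumption appends a spatial cue to the task instruction before it reaches the VLM planner. The cue text (`SPATIAL_HINT`) and `build_spatial_prompt` are hypothetical, because the article does not give the actual prompt. The step where the action expert is conditioned on the spatial priors the planner produces is outside this sketch.

```python
# Hypothetical cue wording; the real prompt used by ST4VLA is not given in the article.
SPATIAL_HINT = (
    "First identify the objects involved and where they are, "
    "then decide how to carry out the task."
)


def build_spatial_prompt(instruction: str, hint: str = SPATIAL_HINT) -> str:
    """Append a spatial cue to a task instruction before it is sent to the planner."""
    instruction = instruction.strip()
    if not instruction:
        raise ValueError("instruction must not be empty")
    # Close the instruction as a sentence so the appended cue reads naturally.
    if not instruction.endswith((".", "!", "?")):
        instruction += "."
    return f"{instruction} {hint}"


print(build_spatial_prompt("put the carrot on the plate"))
```

The cue adds no new parameters, which fits the article's description of the prompting as lightweight.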

This conditioning aligns the optimisation of perception and control. It preserves the VLM’s grounding capacity and avoids the collapse observed with naive co-training. The framework deliberately separates the ‘where and what’ of an action from ‘how’ to perform it, which supports reliable and generalisable manipulation.
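
Here is a deliberately toy sketch of the split between ‘where and what’ and ‘how’. A stand-in planner returns a `SpatialPlan`, which names the object and gives its position. A separate stand-in action expert turns that plan into a bounded end-effector step. Both functions, the 3D-position interface, and the 0.05 step limit are hypothetical. In ST4VLA the planner is a VLM and the action expert is a learned policy, and neither is reproduced here.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class SpatialPlan:
    """Planner output: *what* to act on and *where* it is."""
    target_name: str
    target_xyz: Vec3


def plan(instruction: str, scene: Dict[str, Vec3]) -> SpatialPlan:
    """Toy planner: pick the first scene object whose name appears in the instruction."""
    for name, xyz in scene.items():
        if name in instruction:
            return SpatialPlan(name, xyz)
    raise LookupError("no object in the scene matches the instruction")


def act(ee_xyz: Vec3, spatial_plan: SpatialPlan, max_step: float = 0.05) -> Vec3:
    """Toy action expert: step toward the target, clipping each axis to +/- max_step."""
    return tuple(
        max(-max_step, min(max_step, t - e))
        for e, t in zip(ee_xyz, spatial_plan.target_xyz)
    )


scene = {"carrot": (0.40, 0.10, 0.02), "plate": (0.55, -0.05, 0.01)}
p = plan("pick up the carrot", scene)
print(p.target_name, act((0.40, 0.00, 0.20), p))
```

Because the action module sees only the plan, the same `act` works for any object the planner can localise. That is the intuition behind separating the two roles.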

On the benchmarks, ST4VLA improved performance from 66.1 to 84.6 on the Google Robot and from 54.7 to 73.2 on the WidowX Robot. It also set new state-of-the-art results on SimplerEnv.
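
A short calculation puts the reported numbers side by side. The article does not state their units, so the sketch treats them simply as scores. Both setups gain 18.5 points in absolute terms. The relative gain is larger on the WidowX Robot because it starts from a lower score. `relative_gain` is a helper introduced here for illustration.

```python
def relative_gain(before: float, after: float) -> tuple[float, float]:
    """Return (absolute gain, relative gain in percent) between two scores."""
    if before <= 0:
        raise ValueError("starting score must be positive")
    gain = after - before
    return gain, 100.0 * gain / before


# Scores as reported in the article (before -> after).
reported = {"Google Robot": (66.1, 84.6), "WidowX Robot": (54.7, 73.2)}

for setup, (before, after) in reported.items():
    absolute, relative = relative_gain(before, after)
    print(f"{setup}: +{absolute:.1f} points, {relative:.1f}% relative")
# Google Robot: +18.5 points, 28.0% relative
# WidowX Robot: +18.5 points, 33.8% relative
```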

Generalisation was also assessed on 61 unseen objects and with paraphrased instructions, and ST4VLA outperformed previous methods in both settings. Robustness was further validated in real-world settings through long-horizon tests with perturbations. The training data included diverse synthetic data spanning more than 14,000 objects, rendered with randomised dome lighting and textures to increase visual diversity. The research highlights scalable spatially guided training as a promising direction for robust and generalisable robot learning systems.
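
Randomising lighting and textures per scene is a standard domain-randomisation technique for synthetic data. The sketch below draws one scene configuration at a time. Only two details come from the article: the object count (over 14,000) and the use of randomised dome lighting and textures. The `SceneConfig` fields, the texture names, and the intensity range are hypothetical.

```python
import random
from dataclasses import dataclass

# Hypothetical texture library; the article does not list the textures used.
TEXTURES = ["wood_oak", "marble_white", "fabric_grey", "metal_brushed"]


@dataclass
class SceneConfig:
    """One randomised synthetic scene (field names are illustrative)."""
    object_id: int
    dome_light_intensity: float
    dome_light_rotation_deg: float
    table_texture: str


def sample_scene(rng: random.Random, num_objects: int = 14_000) -> SceneConfig:
    """Draw an object, dome-light settings and a surface texture uniformly at random."""
    return SceneConfig(
        object_id=rng.randrange(num_objects),
        dome_light_intensity=rng.uniform(500.0, 3000.0),  # assumed range, arbitrary units
        dome_light_rotation_deg=rng.uniform(0.0, 360.0),
        table_texture=rng.choice(TEXTURES),
    )


rng = random.Random(0)  # fixed seed so the draws are reproducible
for scene in (sample_scene(rng) for _ in range(3)):
    print(scene)
```

Passing an explicit `random.Random` instance keeps data generation reproducible without touching the global random state.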

Spatial Grounding Enhances Robot Instruction Following and Manipulation

Scientists have developed ST4VLA, a dual-system Vision-Language-Action framework that substantially improves robotic performance on embodied tasks. The framework addresses a key limitation of existing vision-language models: turning instructions into precise motor actions. It first applies spatial grounding pre-training. This is followed by spatially guided action post-training, which refines the model’s ability to generate richer spatial cues for action generation.

Evaluations in simulated and real-world environments show that ST4VLA outperforms current VLA models and specialised systems in instruction following, long-horizon manipulation, and multimodal grounding. Beyond the benefits of spatial pre-training, the approach differs from others by explicitly using spatial prompting to guide action generation. Future work could improve computational efficiency and extend the framework to more complex robotic tasks and environments, contributing to more robust and generalisable robots.

👉 More information

🗞 ST4VLA: Spatially Guided Training for Vision-Language-Action Models

🧠 arXiv: https://arxiv.org/abs/2602.10109