Scientists are increasingly focused on developing robust perception systems for robots operating in complex real-world scenarios. Richeek Das and Pratik Chaudhari, both from the University of Pennsylvania, present Neurosim, a high-performance library designed to simulate a range of sensors, including dynamic vision, RGB, and depth sensors, alongside the agile dynamics of multi-rotor vehicles. This work details the design of Neurosim and its integration with Cortex, a ZeroMQ-based communication library, enabling frame rates of up to 2700 FPS on standard desktop GPUs. The significance of this research lies in its potential to accelerate the training and closed-loop testing of neuromorphic perception and control algorithms using time-synchronised, multi-modal data, ultimately advancing the field of robotics and autonomous systems.

Scientists have created a new tool for rapidly prototyping and testing robotic vision systems. The simulator lets engineers train and validate algorithms at thousands of frames per second, narrowing the gap between laboratory research and real-world deployment. This advance promises to accelerate the development of more agile and perceptive robots for a range of applications.

Scientists have unveiled Neurosim, a new simulation library capable of rendering complex robotic environments and sensor data at up to roughly 2700 frames per second on a standard desktop GPU. This achievement addresses a critical bottleneck in the development and testing of neuromorphic computing and robotics algorithms, offering a pathway to more robust and efficient systems.

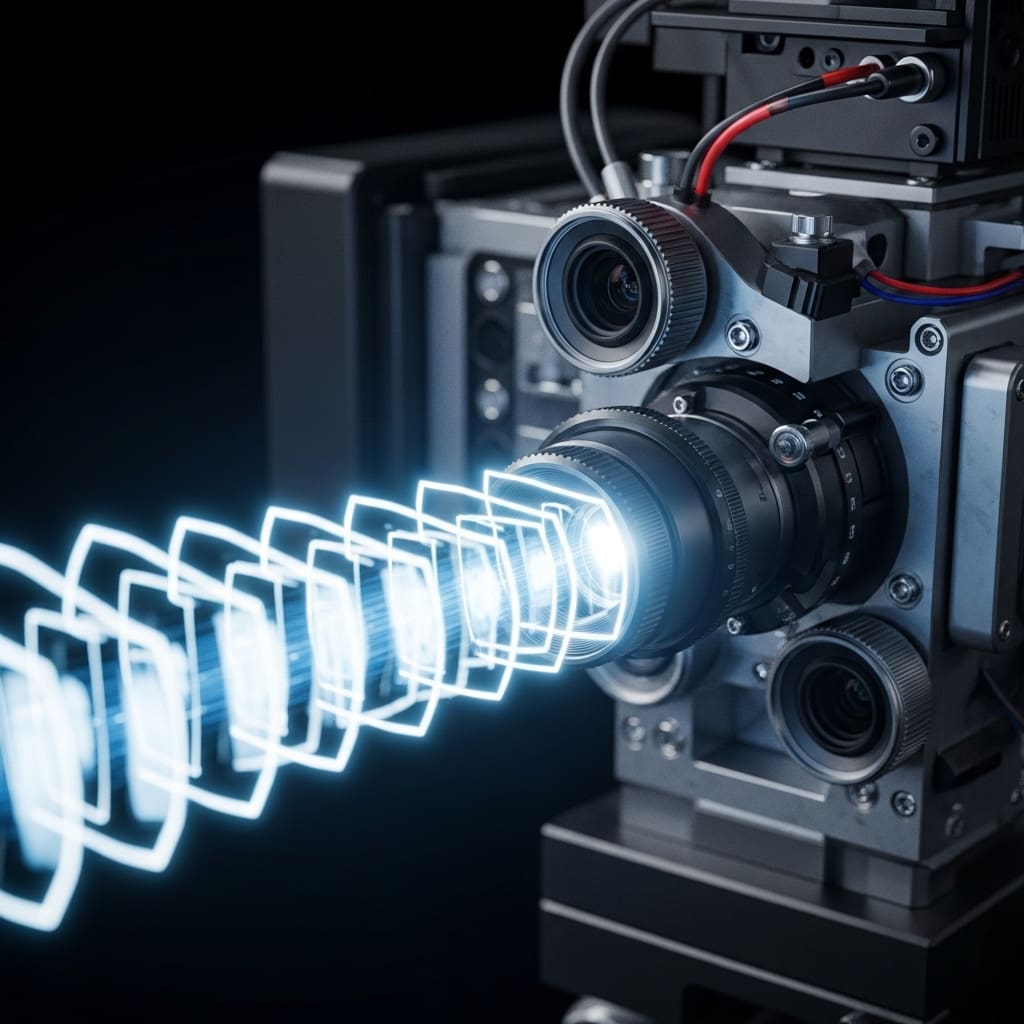

Neurosim does more than replicate sensor input: it simulates dynamic vision sensors, RGB cameras, depth sensors, and inertial measurement units alongside the agile movements of multi-rotor vehicles within intricate, changing environments. The core innovation lies in the library’s ability to generate high-fidelity event data (the output of event-based cameras, which mimic the human retina) without the prohibitive storage demands of traditional simulation methods.

Existing simulators often rely on generating high-frame-rate RGB data and then converting it to event data, consuming terabytes of storage for even short simulation runs. Neurosim bypasses this step, creating event data on the fly and streamlining the development process for algorithms designed to process these unique data streams. Central to Neurosim’s functionality is its integration with Cortex, a communication library built on ZeroMQ, which facilitates data transfer between the simulation and machine learning code written in Python and C++.

Cortex handles high-throughput, low-latency messaging, natively supporting NumPy arrays and PyTorch tensors, essential components in modern deep learning pipelines. This combination enables researchers to not only train algorithms using synthetic, time-synchronized multi-modal data but also to rigorously test real-time implementations in closed-loop scenarios.
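To make the idea of array-native messaging concrete, here is a minimal sketch of one common way to frame a NumPy array for transport: a small JSON header followed by the raw buffer. This is not Cortex's actual wire format or API, which the article does not describe; `pack_array`, `unpack_array`, and the header fields are hypothetical names chosen for illustration. A list of byte frames like this is the shape that a ZeroMQ multipart send accepts.

```python
import json
import numpy as np

def pack_array(name, stamp_ns, array):
    """Split an array into two byte frames: a JSON header and the raw data.

    Hypothetical framing for illustration; not the Cortex wire format.
    """
    array = np.ascontiguousarray(array)
    header = {
        "name": name,
        "stamp_ns": stamp_ns,          # simulation timestamp in nanoseconds
        "dtype": str(array.dtype),
        "shape": list(array.shape),
    }
    return [json.dumps(header).encode("utf-8"), array.tobytes()]

def unpack_array(frames):
    """Rebuild the header and a read-only array view from the two frames."""
    header = json.loads(frames[0].decode("utf-8"))
    array = np.frombuffer(frames[1], dtype=np.dtype(header["dtype"]))
    return header, array.reshape(header["shape"])

# Round trip of a small stand-in depth image.
depth = np.arange(12, dtype=np.float32).reshape(3, 4)
frames = pack_array("depth", 1000, depth)
header, restored = unpack_array(frames)
```

Keeping the metadata separate from the payload means the receiver can rebuild the array without copying or parsing the pixel data itself.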

This work provides a comprehensive solution for simulating the complex sensor inputs encountered by robots operating in dynamic environments. By accurately modelling data from multiple sensors, including LiDAR, RGB cameras, and inertial measurement units, at rates comparable to real-world devices, Neurosim allows for the creation of realistic training datasets and the validation of perception and control algorithms.

The simulator’s design prioritizes efficiency, enabling researchers to push the boundaries of robotic agility and explore challenging scenarios, such as high-speed maneuvers and obstacle avoidance, without risking damage to physical hardware. Neurosim and Cortex are freely available, fostering wider adoption and accelerating progress in the field of embodied artificial intelligence.

Real-time performance benchmarks for high-throughput sensor data processing

Neurosim achieves a sustained frame rate of approximately 2700 FPS on a desktop Nvidia 4090 GPU, demonstrating its capability for faster-than-real-time simulation. This rendering speed is made possible by the integration of Habitat-Sim, which can render a typical indoor scene with multiple RGB-D sensors at VGA (640 × 480) resolution at roughly 3000 FPS on a single GPU.

The system simulates event cameras at multi-kilohertz rates by tracking, for each pixel, the intensity state at its last triggered event, which minimises the chance of missing events during rapid intensity changes. The research demonstrates a high-throughput data pipeline capable of handling approximately 50 M events per second from a monocular high-definition event camera, alongside frames of roughly 1 M pixels each from an HD RGB camera operating at 100 Hz (on the order of 100 M pixels per second).
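The per-pixel state tracking described above can be sketched with the standard contrast-threshold model of an event camera. This is an illustrative simplification, not Neurosim's implementation: the function name `generate_events`, the threshold of 0.2, and the choice to stamp all events in a frame with the same time are assumptions made for the example.

```python
import numpy as np

def generate_events(log_ref, frame, t, threshold=0.2, eps=1e-6):
    """Emit events for one rendered frame and update the per-pixel state.

    log_ref : (H, W) log intensity at each pixel's last event (updated in place)
    frame   : (H, W) non-negative intensities for the current render
    Returns an (N, 4) array with columns (t, x, y, polarity).
    """
    diff = np.log(frame + eps) - log_ref
    # Whole threshold crossings since each pixel's last event.
    n_cross = np.floor(np.abs(diff) / threshold).astype(np.int64)
    ys, xs = np.nonzero(n_cross)
    polarity = np.sign(diff[ys, xs]).astype(np.int64)
    counts = n_cross[ys, xs]
    # Advance the reference only by the crossed amount, so any residual
    # change carries over to the next frame instead of being lost.
    log_ref[ys, xs] += polarity * counts * threshold
    # One event per crossing; all share the frame timestamp (simplification).
    return np.column_stack([
        np.full(counts.sum(), t, dtype=np.float64),
        np.repeat(xs, counts),
        np.repeat(ys, counts),
        np.repeat(polarity, counts),
    ])

# A 2 x 3 scene where one pixel doubles in brightness and one halves.
frame0 = np.full((2, 3), 1.0)
log_ref = np.log(frame0 + 1e-6)
frame1 = frame0.copy()
frame1[0, 1] = 2.0
frame1[1, 2] = 0.5
events = generate_events(log_ref, frame1, t=0.001)
```

Because the reference state lives in memory and is updated as each frame is rendered, no intermediate high-frame-rate video needs to be written to disk before events are produced.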

Furthermore, the system processes LiDAR data at around 10 Hz, generating approximately half a billion points per second, and registers inertial data (angular velocities, linear accelerations, and magnetometer orientations) at upwards of 500 Hz. This multi-modal data stream, exceeding 1 GB/s, is designed to be fed directly into deep learning training pipelines without extensive disk storage or buffering.
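A rough back-of-the-envelope estimate shows how such rates add up to more than 1 GB/s. The sample rates below are the figures quoted in the text; the bytes-per-sample values and the 1280 × 720 HD resolution are assumptions made only for this estimate, since the article does not state the actual data layouts.

```python
# Sample rates quoted in the text.
EVENT_RATE = 50_000_000        # events per second
RGB_RATE_HZ = 100              # frames per second
LIDAR_POINT_RATE = 500_000_000 # points per second
IMU_RATE_HZ = 500              # samples per second

# Assumed sizes (hypothetical, for illustration only).
HD_PIXELS = 1280 * 720         # assumed HD resolution
BYTES_PER_EVENT = 8            # assumed packed (t, x, y, polarity)
BYTES_PER_PIXEL = 3            # assumed 8-bit RGB
BYTES_PER_POINT = 16           # assumed four float32 values per point
BYTES_PER_IMU_SAMPLE = 36      # assumed nine float32 values per sample

event_bytes = EVENT_RATE * BYTES_PER_EVENT
rgb_bytes = HD_PIXELS * RGB_RATE_HZ * BYTES_PER_PIXEL
lidar_bytes = LIDAR_POINT_RATE * BYTES_PER_POINT
imu_bytes = IMU_RATE_HZ * BYTES_PER_IMU_SAMPLE

total_bytes_per_s = event_bytes + rgb_bytes + lidar_bytes + imu_bytes
total_gb_per_s = total_bytes_per_s / 1e9
```

Under these assumptions the inertial stream is negligible and the event and RGB streams alone contribute several hundred megabytes per second, which is why writing everything to disk quickly becomes impractical.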

Neurosim’s design prioritizes low-latency communication, facilitated by the Cortex library, enabling integration with robotics workflows. The system can reproduce practical details of real-world robots, including sensor synchronization and sensors running on their own individual clocks, which are critical for downstream algorithms. Because the simulator runs significantly faster than real time, it offers a viable alternative to extensive data curation and supports closed-loop perception and control experiments, even at the extremes of hardware agility, such as quadrotor flips with angular velocities of approximately 700 °/s.
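One simple way to picture sensors running at their own rates on a shared simulation timeline is a priority queue of next-sample times. The sketch below is hypothetical and not taken from Neurosim: `sensor_schedule` is an invented name, the rates are the ones mentioned in the text, and effects such as clock drift or offsets are deliberately left out.

```python
import heapq

def sensor_schedule(rates_hz, duration_ns):
    """Yield (timestamp_ns, sensor_name) in time order.

    Each sensor fires on its own fixed period; integer nanoseconds
    avoid floating-point drift over long runs.
    """
    heap = []
    for name, rate in sorted(rates_hz.items()):
        period_ns = round(1_000_000_000 / rate)
        heapq.heappush(heap, (0, name, period_ns))
    while heap:
        t, name, period_ns = heapq.heappop(heap)
        if t >= duration_ns:
            continue  # this sensor has no more samples in the window
        yield t, name
        heapq.heappush(heap, (t + period_ns, name, period_ns))

# Rates mentioned in the text: IMU 500 Hz, RGB 100 Hz, LiDAR 10 Hz.
rates = {"imu": 500, "rgb": 100, "lidar": 10}
ticks = list(sensor_schedule(rates, duration_ns=20_000_000))  # 20 ms window
```

Every sample carries a timestamp from the same simulation clock, so a downstream algorithm can align measurements across modalities even though they arrive at different rates.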

High-speed simulation unlocks advanced training for event-based sensors and neuromorphic computing

The relentless pursuit of realistic simulation environments has long been a bottleneck in robotics and embodied artificial intelligence. While visually impressive simulators like Unreal Engine and CARLA capture photorealism, they often fall short in providing the high-fidelity, high-speed data streams needed to train and validate the next generation of event-based sensors and neuromorphic algorithms.

Neurosim, alongside the Cortex communication library, represents a significant step towards bridging that gap, prioritising speed and data synchronisation over purely aesthetic fidelity. This isn’t simply about faster rendering. The ability to generate thousands of frames per second of multi-modal sensor data (dynamic vision, RGB, depth, and inertial measurements) opens up new avenues for self-supervised learning.

Algorithms can now be trained on realistically complex, temporally-rich data without the delays and limitations of real-world data collection. Crucially, the integration with Cortex facilitates seamless data transfer to machine learning frameworks, streamlining the entire development pipeline. However, the focus on speed necessarily involves trade-offs.

The simulated environments, while complex, may not fully capture the nuances of real-world physics or lighting conditions. Furthermore, validating the transfer of algorithms trained in Neurosim to real robots remains a critical challenge. The emergence of tools like Rerun, designed for visualising multimodal data, will be essential for debugging and understanding the discrepancies between simulation and reality. Looking ahead, we can anticipate a proliferation of specialised simulators, each optimised for particular sensor modalities or robotic platforms, and a growing emphasis on techniques for domain adaptation and sim-to-real transfer.

👉 More information

🗞 Neurosim: A Fast Simulator for Neuromorphic Robot Perception

🧠 ArXiv: https://arxiv.org/abs/2602.15018