Researchers are increasingly focused on how the geometry of internal representations affects a model’s ability to generalise and to withstand perturbations. Vasileios Sevetlidis (Athena RC; Democritus University of Thrace, Greece), George Pavlidis (Athena RC, University Campus, Greece) and their colleagues address a key limitation of current similarity metrics: these metrics do not capture how representations change as inputs vary. Their work introduces ‘representation holonomy’, a gauge-invariant statistic that quantifies path dependence in feature spaces, reveals hidden curvature and gives a more nuanced picture of representation quality. The measure separates models that look similar under conventional metrics, and it correlates with robustness to adversarial attacks and data corruption, which makes it a useful diagnostic for probing learned representations.

Existing techniques such as Centered Kernel Alignment (CKA) and Singular Vector Canonical Correlation Analysis (SVCCA) mainly measure the overlap between two sets of activations, so they miss the path dependence of feature transformations. This gap matters in practice: models whose activations look alike under CKA can still behave very differently under perturbations or adversarial attacks, because their features rotate differently along input trajectories. The team’s key step is to treat a layer’s representation as a field over input space and to estimate shared principal subspaces between nearby inputs.
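For contrast, here is what a pointwise similarity computes. This is a minimal NumPy sketch of the standard linear-CKA formula, written for illustration rather than taken from the authors’ code; the function name `linear_cka` is our own. It reduces two paired activation matrices to one scalar and, as the example shows, is unchanged by an orthogonal rotation of the feature basis.

```python
import numpy as np

def linear_cka(X: np.ndarray, Y: np.ndarray) -> float:
    """Linear CKA between activation matrices X (n x p) and Y (n x q); row i of each is input i."""
    Xc = X - X.mean(axis=0, keepdims=True)     # centre every feature
    Yc = Y - Y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(Yc.T @ Xc, "fro") ** 2
    norm_x = np.linalg.norm(Xc.T @ Xc, "fro")
    norm_y = np.linalg.norm(Yc.T @ Yc, "fro")
    return float(cross / (norm_x * norm_y))

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 16))
Q, _ = np.linalg.qr(rng.normal(size=(16, 16)))   # random orthogonal change of feature basis
print(round(linear_cka(X, X @ Q), 6))             # 1.0: CKA ignores orthogonal rotations of features
```

Because CKA compares one pair of activation sets at a time, it cannot register whether features rotate consistently as the input moves along a path; that path-dependent information is what holonomy is meant to capture.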

They then compute the optimal rotation-only alignment of these local feature clouds and compose the rotations around a closed loop, which yields a single orthogonal matrix. The deviation of this matrix from the identity defines the representation holonomy: a nonzero value indicates path-dependent, non-integrable transport, analogous to curvature in classical differential geometry. Crucially, the estimator applies global whitening to fix a consistent gauge, which makes it invariant to orthogonal and affine transformations and improves its stability. The researchers also tracked training dynamics and found that holonomy rises as features form and stabilises at convergence, offering insight into the learning process itself.
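The loop composition and the deviation-from-identity statistic can be illustrated with hand-made 2 × 2 rotations. This is a minimal NumPy sketch, not the authors’ released code. Measuring the deviation with the Frobenius norm ‖H − I‖_F is our assumption (the paper may normalise differently), and the helpers `rot2`, `compose_loop` and `holonomy_deviation` are hypothetical names.

```python
import numpy as np

def rot2(theta: float) -> np.ndarray:
    """2 x 2 rotation matrix for angle theta (radians)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])

def compose_loop(transports: list) -> np.ndarray:
    """Compose step transports R_1, ..., R_K around a closed loop: H = R_K @ ... @ R_1."""
    H = np.eye(transports[0].shape[0])
    for R in transports:
        H = R @ H          # apply each step after the previous ones
    return H

def holonomy_deviation(H: np.ndarray) -> float:
    """Frobenius distance of the loop matrix from the identity (assumed normalisation)."""
    return float(np.linalg.norm(H - np.eye(H.shape[0]), "fro"))

# "Flat" loop: the step rotations cancel, so transport is path-independent.
flat = compose_loop([rot2(0.3), rot2(-0.1), rot2(-0.2)])
# "Curved" loop: the steps accumulate a net rotation of 0.4 rad.
curved = compose_loop([rot2(0.1)] * 4)
print(holonomy_deviation(flat), holonomy_deviation(curved))
```

For a net rotation angle θ, ‖R(θ) − I‖_F = 2√2 sin(θ/2), so the curved loop gives about 0.562 while the flat loop gives zero up to rounding.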

Experiments on datasets including MNIST and CIFAR-10/100, using ResNet-18 architectures, validated the method. The study shows that holonomy is inexpensive to compute, scales to common backbones and provides information complementary to pointwise similarity. The researchers have released code and configurations so that the findings can be fully reproduced, encouraging further work in this area.

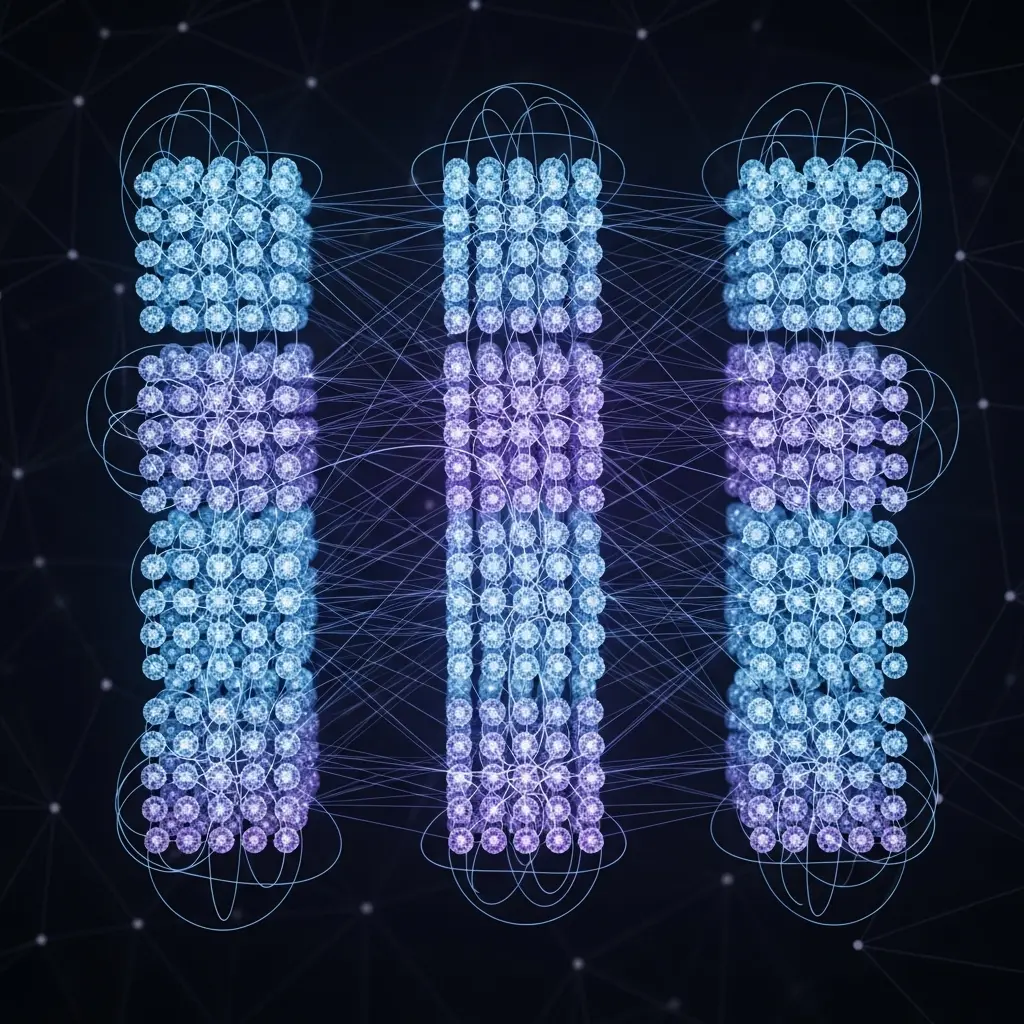

Representation holonomy estimation via parallel transport

The researchers engineered an estimator that first fixes the gauge through global whitening, then aligns neighbourhoods using shared subspaces and rotation-only Procrustes alignment, and finally embeds the result back into the full feature space. It draws on established tools: rotation-only Procrustes to estimate orthogonal maps between paired activation matrices, principal angles to quantify shared subspaces, and classical perturbation theory to control finite-sample error in the estimated subspaces. Preprocessing uses statistically principled whitening schemes such as ZCA-corr, which justify a fixed global gauge by removing second-order anisotropy before local alignment. Complementary empirical work on approximate equivariance and model equivalence probes how features change under controlled input transformations, using Lie derivatives to define a layer-wise equivariance error.
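A minimal NumPy sketch of the whitening and alignment steps named above, under our own assumptions: ZCA-corr whitening (standardise each feature, then multiply by the inverse square root of the correlation matrix) and rotation-only Procrustes via the SVD, with a sign flip on the last singular direction so that the result lies in SO(d) rather than merely O(d). The function names and the `eps` regulariser are hypothetical, not taken from the authors’ code.

```python
import numpy as np

def zca_corr_whiten(X: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """ZCA-corr whitening of an (n x d) activation matrix."""
    Xc = X - X.mean(axis=0, keepdims=True)
    Z = Xc / (Xc.std(axis=0, ddof=1, keepdims=True) + eps)   # standardise each feature
    P = (Z.T @ Z) / (X.shape[0] - 1)                           # (near-)correlation matrix
    evals, evecs = np.linalg.eigh(P)
    P_inv_sqrt = evecs @ np.diag(1.0 / np.sqrt(evals + eps)) @ evecs.T
    return Z @ P_inv_sqrt                                      # symmetric (ZCA) whitening

def procrustes_so(A: np.ndarray, B: np.ndarray) -> np.ndarray:
    """Rotation-only Procrustes: R in SO(d) minimising ||A @ R - B||_F over paired rows."""
    U, _, Vt = np.linalg.svd(A.T @ B)
    D = np.eye(U.shape[0])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))   # flip the weakest axis if U @ Vt is a reflection
    return U @ D @ Vt

rng = np.random.default_rng(1)
Q0, _ = np.linalg.qr(rng.normal(size=(8, 8)))
X = (rng.normal(size=(500, 8)) * np.arange(1.0, 9.0)) @ Q0   # correlated, anisotropic toy activations
W = zca_corr_whiten(X)
Q, _ = np.linalg.qr(rng.normal(size=(8, 8)))
if np.linalg.det(Q) < 0:
    Q[:, 0] *= -1                                            # make Q a proper rotation (det +1)
R = procrustes_so(W, W @ Q)
print(np.allclose(np.cov(W, rowvar=False), np.eye(8), atol=1e-5), np.allclose(R, Q))
```

Forcing det R = +1 is what makes the alignment rotation-only: without the sign correction, the SVD solution would be a reflection whenever the best orthogonal fit reverses orientation.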

The work models layer-wise representations as sections of a vector bundle, with the alignment rule playing the role of a connection; locally, it estimates a shared subspace, with principled whitening serving as the fixed gauge. Transport between nearby inputs is defined as the optimal special-orthogonal map within that subspace, and these local transports are composed around closed loops to quantify the resulting holonomy. The approach is invariant to per-layer orthogonal reparameterisations and robust to admissible whitening choices, which distinguishes it from scalar, path-agnostic similarities and from single-step Procrustes alignment. A small-loop analysis links holonomy to curvature and yields predictions that the experiments test; the experiments also show that networks can be locally near-equivariant yet exhibit non-trivial global holonomy, which correlates with stability under sequences of perturbations.
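To make the subspace step and the gauge-invariance claim concrete, the sketch below relies on our assumptions rather than the paper’s estimator. It takes the shared subspace to be the top-k principal directions of two pooled, centred neighbourhood clouds, measures subspace agreement with principal angles, and lifts a k × k rotation to the full space by acting as the identity on the orthogonal complement. It uses a row-vector convention, so a reparameterisation x ↦ xQ with orthogonal Q turns a loop matrix H into QᵀHQ, which leaves ‖H − I‖_F unchanged. All function names are hypothetical.

```python
import numpy as np

def shared_subspace(A: np.ndarray, B: np.ndarray, k: int) -> np.ndarray:
    """Orthonormal d x k basis spanning the top-k principal directions of two pooled, centred clouds."""
    pooled = np.vstack([A - A.mean(axis=0), B - B.mean(axis=0)])
    _, _, Vt = np.linalg.svd(pooled, full_matrices=False)
    return Vt[:k].T

def principal_angles(U1: np.ndarray, U2: np.ndarray) -> np.ndarray:
    """Principal angles (radians) between the column spans of orthonormal bases U1 and U2."""
    cosines = np.linalg.svd(U1.T @ U2, compute_uv=False)
    return np.arccos(np.clip(cosines, -1.0, 1.0))

def embed_rotation(U: np.ndarray, R_sub: np.ndarray) -> np.ndarray:
    """Lift a k x k rotation acting in span(U) to a d x d orthogonal map (identity on the complement)."""
    return U @ R_sub @ U.T + (np.eye(U.shape[0]) - U @ U.T)

def deviation(H: np.ndarray) -> float:
    """Frobenius distance of H from the identity."""
    return float(np.linalg.norm(H - np.eye(H.shape[0]), "fro"))

rng = np.random.default_rng(2)
stds = np.array([10.0, 9.0, 8.0, 7.0] + [1.0] * 6)
A = rng.normal(size=(64, 10)) * stds            # variance concentrated in the first 4 axes
B = A + 0.05 * rng.normal(size=(64, 10))        # a nearby, slightly perturbed cloud
U = shared_subspace(A, B, k=4)
print(principal_angles(U, np.eye(10)[:, :4]).max())   # small angle: span(U) is close to axes 0-3

R_sub, _ = np.linalg.qr(rng.normal(size=(4, 4)))
if np.linalg.det(R_sub) < 0:
    R_sub[:, 0] *= -1                           # proper rotation in the subspace
H = embed_rotation(U, R_sub)                    # stand-in for a composed loop transport
Q, _ = np.linalg.qr(rng.normal(size=(10, 10)))  # orthogonal reparameterisation x -> x @ Q
print(np.isclose(deviation(Q.T @ H @ Q), deviation(H)))   # True
```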

Holonomy quantifies path-dependence in deep network representations

The team measured holonomy by estimating shared principal subspaces, computing the optimal special-orthogonal alignment of local feature clouds, and composing these rotations around closed loops into a single orthogonal matrix. The results show that, after whitening, the estimator is invariant to orthogonal and affine transformations, and that holonomy vanishes for affine layers, which provides a linear null baseline. Tracking training dynamics confirmed that holonomy rises as features form and stabilises at convergence. The practical estimator, which combines global whitening, shared-neighbour subspaces and rotation-only Procrustes alignment, is both gauge-invariant and stable, giving a scalable way to study how features orient, align and evolve within deep neural networks.
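Putting these steps together, a self-contained toy version of the pipeline can check the linear null numerically. For an affine feature map, every centred neighbourhood cloud is the same linear image of the same offsets, so each step transport is the identity and the loop holonomy vanishes. Several assumptions here are ours, not the paper’s: plain ZCA whitening fitted once on all pooled features, the same random offsets reused at every loop point to pair samples, a circular loop in the first two input coordinates, and `tanh` as the stand-in nonlinear layer. Names such as `toy_loop_holonomy` are hypothetical.

```python
import numpy as np

def whiten_global(F: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Plain ZCA whitening fitted once on all pooled features, i.e. one fixed global gauge."""
    Fc = F - F.mean(axis=0)
    evals, evecs = np.linalg.eigh(np.cov(Fc, rowvar=False))
    return Fc @ evecs @ np.diag(1.0 / np.sqrt(evals + eps)) @ evecs.T

def procrustes_so(A: np.ndarray, B: np.ndarray) -> np.ndarray:
    """R in SO(k) minimising ||A @ R - B||_F for paired rows of A and B."""
    U, _, Vt = np.linalg.svd(A.T @ B)
    D = np.eye(U.shape[0])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))      # exclude reflections
    return U @ D @ Vt

def step_transport(A: np.ndarray, B: np.ndarray, k: int) -> np.ndarray:
    """Rotation-only transport from cloud A to paired cloud B inside their shared top-k subspace."""
    A = A - A.mean(axis=0)
    B = B - B.mean(axis=0)
    _, _, Vt = np.linalg.svd(np.vstack([A, B]), full_matrices=False)
    U = Vt[:k].T                                     # d x k shared principal basis
    R_sub = procrustes_so(A @ U, B @ U)
    return U @ R_sub @ U.T + (np.eye(A.shape[1]) - U @ U.T)   # identity on the complement

def toy_loop_holonomy(feature_fn, center, radius, n_steps=16, n_nbrs=40, scale=0.1, k=3, seed=0):
    """Compose step transports around a circle in the (x0, x1) input plane; return (H, ||H - I||_F)."""
    rng = np.random.default_rng(seed)
    offsets = scale * rng.normal(size=(n_nbrs, center.shape[0]))  # reused at every loop point
    clouds = []
    for t in np.linspace(0.0, 2.0 * np.pi, n_steps, endpoint=False):
        x = np.array(center, dtype=float)
        x[:2] += radius * np.array([np.cos(t), np.sin(t)])
        clouds.append(feature_fn(x + offsets))
    W = np.split(whiten_global(np.vstack(clouds)), n_steps)   # same gauge for every point
    H = np.eye(W[0].shape[1])
    for i in range(n_steps):
        # Row-vector convention: features transform as x @ R_1 @ R_2 @ ...
        H = H @ step_transport(W[i], W[(i + 1) % n_steps], k)
    return H, float(np.linalg.norm(H - np.eye(H.shape[0]), "fro"))

rng = np.random.default_rng(42)
M = rng.normal(size=(6, 6))
b = rng.normal(size=6)
affine_layer = lambda X: X @ M + b               # holonomy should vanish (linear null)
tanh_layer = lambda X: np.tanh(X @ M + b)        # toy nonlinear layer
c = np.zeros(6)
_, h_affine = toy_loop_holonomy(affine_layer, c, radius=1.0)
H_tanh, h_tanh = toy_loop_holonomy(tanh_layer, c, radius=1.0)
print(f"affine: {h_affine:.1e}   tanh: {h_tanh:.3f}")
```

The affine value should sit at the level of floating-point rounding. The `tanh` value is generally nonzero, though its size depends on the loop radius, the neighbourhood scale and the subspace dimension `k`.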

Representation holonomy reveals hidden curvature in high-dimensional representations

The researchers developed a practical estimator for representation holonomy based on shared-neighbour Procrustes transport within low-dimensional subspaces. Theoretical analysis demonstrates its invariance to affine reparameterisations after whitening, its vanishing behaviour on affine maps, and a linear scaling with loop radius under certain assumptions. Empirical results show that holonomy can differentiate between models with similar CKA scores and correlates with robustness against adversarial attacks and data corruption. Furthermore, it effectively tracks the evolution of features during training. The authors acknowledge limitations related to finite-sample effects, subspace truncation, and index mismatches, which contribute to error in the holonomy estimation. Future research directions include exploring richer gauges and architectures, applying the method to diffusion and score networks to assess the curl-free nature of learned score fields, and probing specific hypotheses about representation geometry along various input trajectories.

👉 More information

🗞 Gauge-invariant representation holonomy

🧠 arXiv: https://arxiv.org/abs/2601.21653