Recent advances in robotic manipulation increasingly rely on Vision-Language-Action (VLA) models, but scaling these systems demands vast amounts of expensive human demonstration data, and the resulting models struggle to adapt to new situations. Haozhan Li, Yuxin Zuo, and Jiale Yu, along with colleagues, address these challenges with SimpleVLA-RL, a framework that uses reinforcement learning to train VLA models more efficiently. The approach significantly reduces the need for large datasets, maintains robust performance on unfamiliar tasks, and surpasses existing supervised fine-tuning methods on benchmark platforms such as LIBERO and RoboTwin. The work also reveals surprising behaviour during training: the policy discovers manipulation patterns that never appeared in its training data, suggesting a pathway towards more adaptable and intelligent robotic systems.

Robotic Learning via Language and Reinforcement

Vision-Language-Action (VLA) models are emerging as a powerful approach to robotic manipulation. Despite recent progress through large-scale pretraining and supervised fine-tuning, these models face challenges related to the scarcity of extensive human-operated robotic data and limited ability to generalise to new tasks. Inspired by successes in applying reinforcement learning to large language models, researchers are investigating whether this technique can enhance robotic learning, improving sample efficiency and adaptability. This work explores using reinforcement learning to train VLA models, reducing reliance on extensive datasets and enabling robots to learn from limited demonstrations and explore novel task variations, ultimately creating more robust and reliable robotic systems.


A Reinforcement Learning Solution for VLA Models

SimpleVLA-RL presents a new framework for training robots using reinforcement learning, addressing limitations in current vision-language-action (VLA) models. Researchers recognised that scaling VLA models requires substantial amounts of human-operated robotic data, which is expensive and scarce, and that these models often struggle to generalise. Inspired by recent successes using reinforcement learning to improve reasoning in large language models, the team investigated whether a similar approach could enhance long-horizon action planning in VLA systems. The work introduces SimpleVLA-RL, built upon an existing reinforcement learning framework for language models and adapted for the unique challenges of robotic control.
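To make the language-model recipe concrete, the sketch below shows one common way outcome-based reinforcement learning is set up for LLMs: GRPO-style group-relative advantages combined with a PPO-style clipped policy-gradient loss, applied here to a hypothetical binary task-success reward. This is an illustrative assumption, not SimpleVLA-RL's actual code. Each task is rolled out several times, every rollout scores 1.0 for success and 0.0 for failure, rewards are normalized within each task's group, and the resulting advantage is shared by every action token in that rollout.

```python
import numpy as np


def group_relative_advantages(successes: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Normalize binary outcome rewards within each task's group of rollouts.

    successes: shape (num_tasks, rollouts_per_task); 1.0 = task solved, 0.0 = failed.
    Returns an array of the same shape with one scalar advantage per rollout.
    """
    rewards = successes.astype(np.float64)
    mean = rewards.mean(axis=1, keepdims=True)
    std = rewards.std(axis=1, keepdims=True)
    return (rewards - mean) / (std + eps)


def clipped_policy_loss(
    logp_new: np.ndarray,
    logp_old: np.ndarray,
    advantages: np.ndarray,
    clip_eps: float = 0.2,
) -> float:
    """PPO-style clipped surrogate loss averaged over all action tokens.

    logp_new, logp_old: shape (num_rollouts, num_action_tokens), log-probabilities
    of the sampled action tokens under the current and the rollout-time policy.
    advantages: shape (num_rollouts,), broadcast to every token of its rollout.
    """
    ratio = np.exp(logp_new - logp_old)
    adv = advantages[:, None]
    unclipped = ratio * adv
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * adv
    # Negate because optimizers minimize; the surrogate itself is maximized.
    return float(-np.mean(np.minimum(unclipped, clipped)))
```

Because advantages are normalized within a group, a task where every rollout succeeds (or every rollout fails) receives zero advantage in this formulation, so only tasks with mixed outcomes provide a learning signal.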

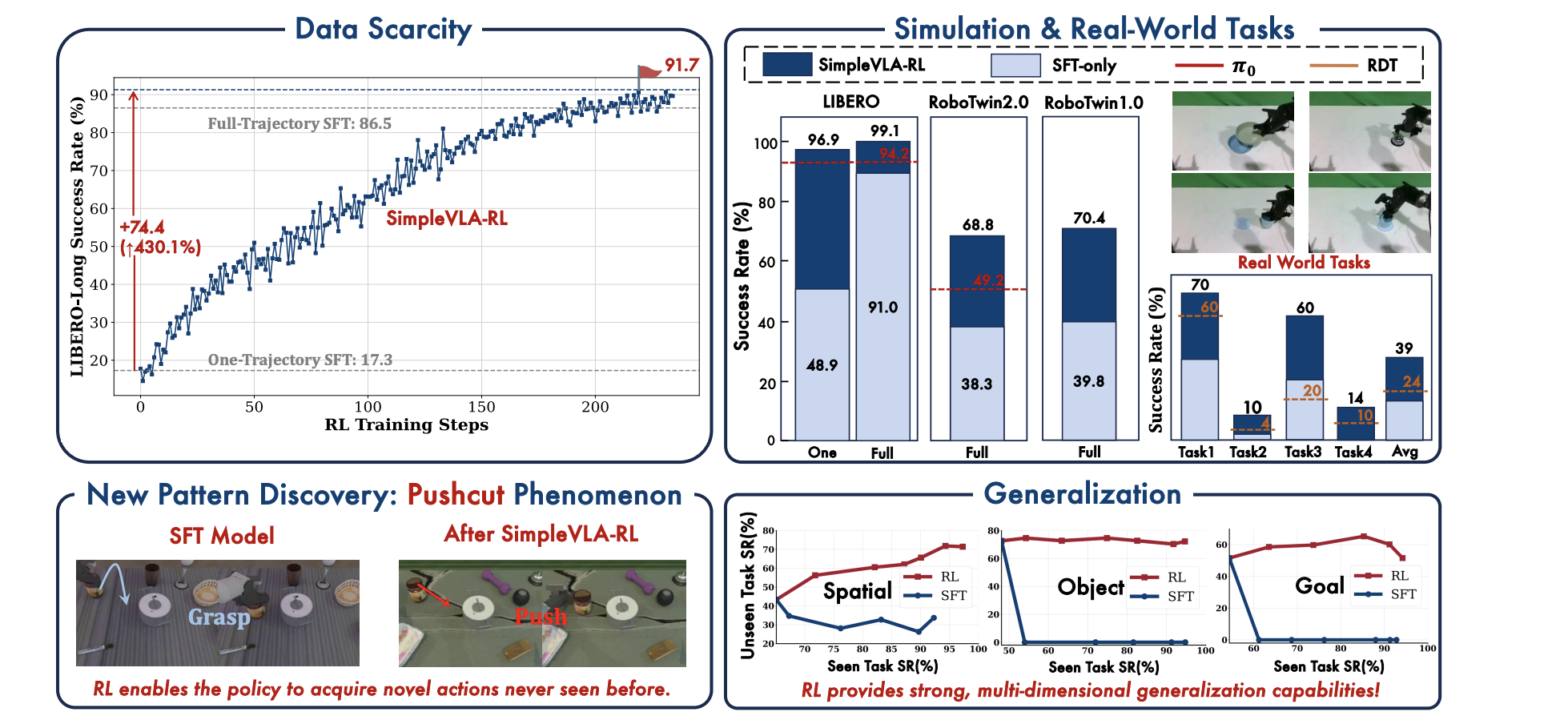

Key innovations include VLA-specific trajectory sampling, optimised loss computation, and parallel multi-environment rendering to accelerate training. Experiments demonstrate that SimpleVLA-RL achieves state-of-the-art performance on the benchmark robotic platforms LIBERO and RoboTwin 1.0 and 2.0, consistently improving success rates by 10 to 15 percent. Notably, the team observed a significant improvement in data efficiency: with only a single demonstration per task, reinforcement learning increased LIBERO-Long success rates from 17.1 percent to 91.7 percent.

Demonstrating Generalisation Across Tasks and Spaces

Furthermore, the system demonstrated strong generalisation across spatial arrangements, objects, and tasks. A surprising outcome was the discovery of “pushcut”, a novel pattern of behaviour exhibited by the policy during training, indicating that the system learned strategies not present in the initial supervised data. Policies trained in simulation successfully transferred to a real-world robot, demonstrating the potential for practical deployment without extensive real-world training data.
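The trajectory sampling and parallel multi-environment rendering mentioned above can be pictured with a toy example. The sketch below assumes a hypothetical batch of 1-D "reach the goal" environments stepped in lockstep, so a single batched policy call produces actions for every environment at once. Actions are sampled from a softmax whose temperature controls how much the policy explores, and each rollout ends with a binary success signal that could serve as an outcome reward. `ToyReachEnvBatch`, `sample_actions`, and `collect_rollouts` are illustrative names, not part of SimpleVLA-RL.

```python
from typing import Callable

import numpy as np


class ToyReachEnvBatch:
    """Hypothetical batch of 1-D reaching tasks, all stepped in lockstep.

    Each environment starts at position 0 and succeeds once it reaches `goal`.
    An episode ends on success or after `max_steps` steps.
    """

    def __init__(self, num_envs: int, goal: int = 5, max_steps: int = 20):
        self.num_envs = num_envs
        self.goal = goal
        self.max_steps = max_steps
        self.reset()

    def reset(self) -> np.ndarray:
        self.pos = np.zeros(self.num_envs, dtype=np.int64)
        self.t = 0
        self.done = np.zeros(self.num_envs, dtype=bool)
        self.success = np.zeros(self.num_envs, dtype=bool)
        return self.pos.copy()

    def step(self, actions: np.ndarray) -> np.ndarray:
        active = ~self.done  # finished environments ignore further actions
        self.pos[active] += actions[active]
        self.t += 1
        reached = active & (self.pos >= self.goal)
        self.success |= reached
        self.done |= reached | (self.t >= self.max_steps)
        return self.pos.copy()


def sample_actions(logits: np.ndarray, temperature: float, rng: np.random.Generator) -> np.ndarray:
    """Sample one move in {-1, 0, +1} per environment from temperature-scaled logits."""
    z = logits / temperature
    z = z - z.max(axis=1, keepdims=True)  # numerical stability
    probs = np.exp(z)
    probs /= probs.sum(axis=1, keepdims=True)
    choices = np.array([rng.choice(3, p=p) for p in probs])
    return choices - 1


def collect_rollouts(
    env: ToyReachEnvBatch,
    policy: Callable[[np.ndarray], np.ndarray],
    temperature: float = 1.0,
    seed: int = 0,
) -> np.ndarray:
    """Roll out all environments until every episode ends; return 0/1 success rewards."""
    rng = np.random.default_rng(seed)
    obs = env.reset()
    while not env.done.all():
        actions = sample_actions(policy(obs), temperature, rng)  # one batched policy call
        obs = env.step(actions)
    return env.success.astype(np.float64)
```

Raising `temperature` flattens the action distribution, so more rollouts try moves the current policy considers unlikely; batching keeps the cost of that extra exploration to one policy call per step.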


Key Takeaways and Future Directions for Robotics

The research introduces SimpleVLA-RL, a new framework that uses reinforcement learning to train vision-language-action models for robotic manipulation. This approach addresses two key limitations of current methods: they rely on large datasets of human-operated robot movements that are expensive and difficult to obtain, and they often struggle to generalise to new situations. SimpleVLA-RL surpasses existing supervised fine-tuning methods on benchmark tasks, achieving state-of-the-art results in simulation and transferring successfully to a real-world robot. Notably, the framework not only reduces the need for extensive pre-recorded data but also enables more robust performance when faced with unfamiliar tasks or environments. During training, the policy also discovered a novel strategy, termed “pushcut”, indicating an ability to learn beyond the patterns present in the initial training data.

🗞 SimpleVLA-RL: Scaling VLA Training via Reinforcement Learning

🧠 ArXiv: https://arxiv.org/abs/2509.09674

A key technical challenge when translating large language model techniques to physical robotics is handling continuous action spaces. Unlike text generation, where outputs are discrete tokens, robotic control requires precise, continuous vectors representing quantities such as joint torques or end-effector velocities; many VLA models approximate these vectors by discretizing each action dimension into bins. Reinforcement learning techniques such as policy gradient methods suit this setting because they learn a mapping from observed states directly to a parameterized action distribution whose parameters are differentiable with respect to the policy weights, which is fundamental for fine-grained manipulation tasks.
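As a worked illustration of a policy gradient over continuous actions, the sketch below assumes a diagonal Gaussian policy whose mean is a linear function of the state, `mean = states @ W`, with a learned per-dimension `log_std`. This parameterization is chosen for illustration only and is not necessarily how SimpleVLA-RL represents actions. The REINFORCE estimator uses the fact that the gradient of log pi(a|s) with respect to the mean is (a - mean) / std**2, chained through the linear mean and weighted by each sample's return.

```python
import numpy as np


def gaussian_log_prob(action: np.ndarray, mean: np.ndarray, log_std: np.ndarray) -> np.ndarray:
    """Log-density of a diagonal Gaussian policy pi(a | s), summed over action dimensions."""
    std = np.exp(log_std)
    z = (action - mean) / std
    return np.sum(-0.5 * z**2 - log_std - 0.5 * np.log(2.0 * np.pi), axis=-1)


def reinforce_grad_linear_mean(
    states: np.ndarray,   # (batch, state_dim)
    actions: np.ndarray,  # (batch, action_dim), continuous actions that were executed
    returns: np.ndarray,  # (batch,), return or advantage for each sample
    W: np.ndarray,        # (state_dim, action_dim), policy mean = states @ W
    log_std: np.ndarray,  # (action_dim,), state-independent log standard deviation
) -> np.ndarray:
    """REINFORCE estimate of the gradient of mean(return * log pi(a|s)) w.r.t. W."""
    mean = states @ W
    # d log pi / d mean = (a - mean) / std**2 for a Gaussian
    score = (actions - mean) / np.exp(2.0 * log_std)
    # Chain rule through mean = states @ W, averaged over the batch
    return states.T @ (score * returns[:, None]) / len(states)
```

Gradient ascent on `W` with this estimate shifts the mean toward actions that earned above-average returns; because the mean is a smooth function of `W`, small parameter updates produce small, fine-grained changes in the commanded action.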

Beyond high scores on established benchmarks, the true measure of success for VLA systems is their ability to close the sim-to-real gap: the discrepancy between controlled, simulated environments and the unpredictable, noisy reality of the physical world. Research is increasingly focused on robust domain randomization and on incorporating uncertainty quantification into the loss function. Both aim to make the policy resilient to variations it never saw during training, so that improvements observed in simulation carry over to deployment hardware.
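Domain randomization can be sketched in a few lines: at the start of every training episode, physical and visual parameters are drawn from ranges instead of being fixed, and observations are perturbed with noise. The parameter names and ranges below (`friction`, `object_mass_scale`, `camera_shift_px`, `light_intensity`) are hypothetical placeholders; a real simulator would expose its own settings, and this is not a description of SimpleVLA-RL's training setup.

```python
import dataclasses

import numpy as np


@dataclasses.dataclass(frozen=True)
class RandomizationRanges:
    """Hypothetical (low, high) ranges, sampled uniformly once per episode."""
    friction: tuple = (0.5, 1.5)
    object_mass_scale: tuple = (0.8, 1.2)
    camera_shift_px: tuple = (-4.0, 4.0)  # would be consumed by the simulator's camera
    light_intensity: tuple = (0.6, 1.4)


def sample_episode_params(ranges: RandomizationRanges, rng: np.random.Generator) -> dict:
    """Draw one value per parameter; a fresh draw is made for every episode."""
    return {
        name: float(rng.uniform(low, high))
        for name, (low, high) in dataclasses.asdict(ranges).items()
    }


def perturb_image(image: np.ndarray, light_intensity: float, noise_std: float,
                  rng: np.random.Generator) -> np.ndarray:
    """Scale brightness and add Gaussian pixel noise to an image with values in [0, 1]."""
    noisy = image * light_intensity + rng.normal(0.0, noise_std, size=image.shape)
    return np.clip(noisy, 0.0, 1.0)
```

Each sampled dictionary would be passed to the simulator's reset routine (not shown), so the policy rarely trains under exactly the same conditions twice.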

Furthermore, future robotic intelligence will likely require hierarchical planning, moving beyond monolithic, end-to-end VLA structures. This involves decomposing complex tasks, such as “make coffee”, into sequential, manageable sub-goals, allowing the system to reason at multiple levels of abstraction. By treating task planning and low-level motor control as distinct modules, researchers aim to create systems that are not only data-efficient but also provably safe and interpretable when operating in complex, real-world industrial settings.
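The hierarchical structure described above can be sketched as a high-level planner that maps a task to an ordered list of sub-goals and a controller that runs one low-level skill per sub-goal, stopping at the first failure. Everything here is hypothetical: the fixed `PLANS` table stands in for a learned or language-model planner, and each skill is a stand-in callable that reports whether its sub-goal succeeded. Keeping the two levels separate makes each sub-goal's outcome individually observable, which is part of what makes such systems easier to inspect.

```python
from typing import Callable, Dict, List

# Hypothetical plan library: a learned or language-model planner would
# normally propose these sub-goals instead of a fixed lookup table.
PLANS: Dict[str, List[str]] = {
    "make coffee": ["grasp mug", "place mug under spout", "press brew button"],
}


def plan(task: str) -> List[str]:
    """High level: decompose a task into an ordered list of sub-goals."""
    if task not in PLANS:
        raise KeyError(f"no decomposition for task: {task!r}")
    return PLANS[task]


def execute(task: str, skills: Dict[str, Callable[[], bool]]) -> List[str]:
    """Low level: run one skill per sub-goal in order; stop at the first failure.

    Each skill is a stand-in for a motor-control policy and returns True on success.
    Returns the sub-goals that were completed, so partial progress is inspectable.
    """
    completed: List[str] = []
    for subgoal in plan(task):
        skill = skills.get(subgoal)
        if skill is None or not skill():
            break
        completed.append(subgoal)
    return completed
```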