Quantum Neural Networks (QNNs) are a key tool in quantum machine learning, but scaling up their implementation is challenging. Yuxuan Yan and colleagues at Tsinghua University have compressed these networks using knowledge distillation. The technique transfers learned information from larger QNNs into smaller architectures with minimal performance loss. This reduces both the number of qubits and the circuit depth needed for training, and a self-knowledge-distillation method further accelerates learning. The approach enables the efficient deployment of QNNs on near-term quantum hardware.

Knowledge distillation unlocks scalable Quantum Neural Networks through resource reduction

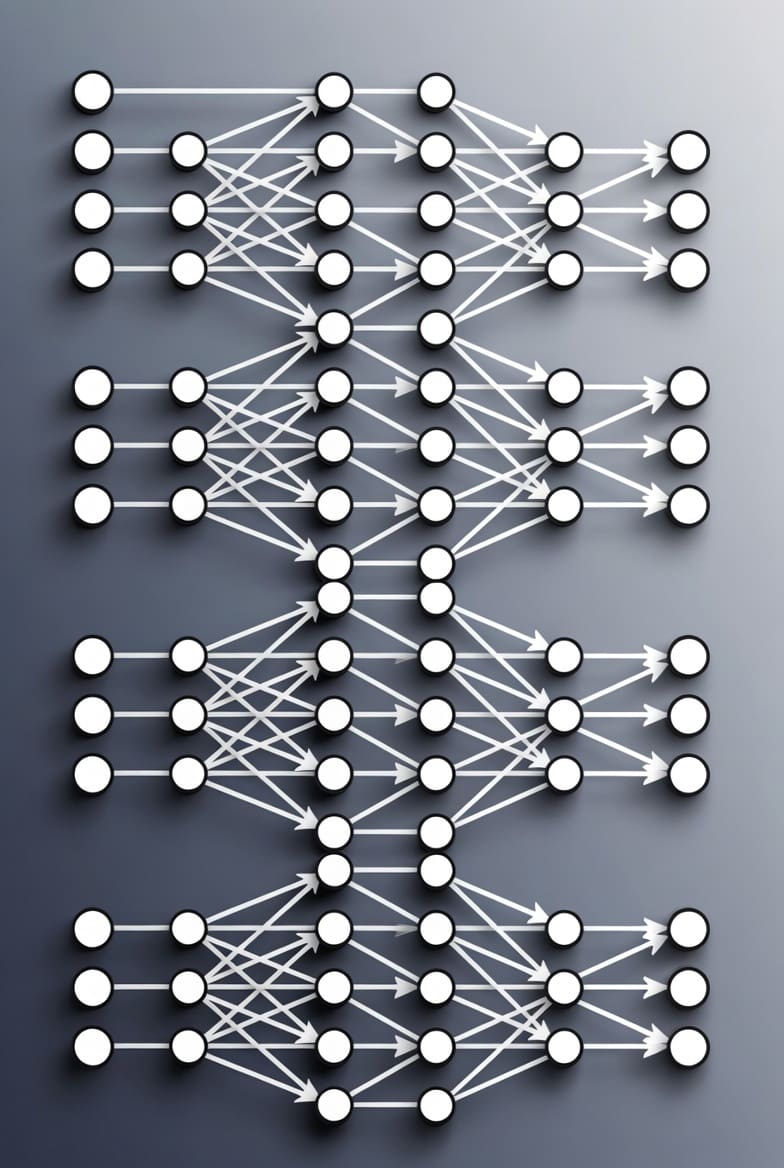

Knowledge distillation has been shown to reduce the training costs of Quantum Neural Networks (QNNs), compressing them so that they can be deployed on systems with fewer resources. Until now, the substantial quantum resources that large-scale QNNs demand have limited their practical application and hindered progress in the field. QNNs, inspired by classical neural networks, leverage quantum phenomena like superposition and entanglement to potentially achieve computational advantages in machine learning tasks. However, these advantages are contingent upon the availability of quantum computers with a sufficient number of qubits and low error rates, conditions not yet fully realised. The number of qubits required for a meaningful QNN scales with the complexity of the problem being addressed, and the depth of the quantum circuit (the number of sequential quantum operations) directly affects how errors accumulate. These resource demands have been a significant bottleneck for practical implementation. The technique now enables the transfer of learning from large ‘teacher’ QNNs to smaller ‘student’ networks, putting models that were previously too resource-intensive within reach.

This compression reduces both the number of qubits and the circuit depth needed for QNN operation without substantially degrading performance, and a self-knowledge distillation method further accelerates learning. Knowledge distillation, a concept borrowed from classical machine learning, trains a smaller ‘student’ model to mimic the behaviour of a larger, pre-trained ‘teacher’ model. In the quantum setting, this is achieved by transferring the probability distributions generated by the teacher QNN to the student QNN, distilling the learned knowledge into a more compact form. Self-knowledge distillation goes a step further: the student QNN learns from its own previous iterations, which speeds up training and improves performance. The result is a viable pathway towards running QNNs on currently available near-term quantum hardware, broadening accessibility for researchers and developers. Numerical analysis showed that distillation reduces both the qubit count and the circuit depth, a measure of computational complexity, without substantial performance loss, and initial tests showed minimal impact on classification accuracy across benchmark datasets. The researchers also demonstrated a reduction in both parameter count and computational cost, paving the way for more efficient quantum machine learning algorithms. However, the current results come from simplified, noise-free simulations and do not yet reflect the challenges of maintaining performance on real, error-prone quantum hardware.
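To make the idea of matching probability distributions concrete, here is a minimal sketch of a distillation loss in plain Python. It assumes the classical formulation (a temperature-softened KL divergence between teacher and student outputs, blended with a cross-entropy term on the true label); the article does not state the exact loss used by the researchers, so the function names and the `alpha` and `temperature` parameters are illustrative assumptions. The input lists stand in for class probabilities estimated from QNN measurements.

```python
import math


def soften(probs, temperature):
    """Soften a distribution: raise each probability to 1/T and renormalise.

    For probabilities that come from a softmax, this equals softmax(logits / T).
    """
    powered = [p ** (1.0 / temperature) for p in probs]
    total = sum(powered)
    return [p / total for p in powered]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )


def cross_entropy(label, q, eps=1e-12):
    """Cross-entropy between a one-hot label and the distribution q."""
    return -math.log(q[label] + eps)


def distillation_loss(teacher_probs, student_probs, label,
                      alpha=0.5, temperature=2.0):
    """Blend a soft teacher-matching term with a hard-label term.

    alpha and temperature are hypothetical hyperparameters for illustration,
    not values taken from the research described in the article.
    """
    soft_teacher = soften(teacher_probs, temperature)
    soft_student = soften(student_probs, temperature)
    soft_term = kl_divergence(soft_teacher, soft_student)
    hard_term = cross_entropy(label, student_probs)
    return alpha * soft_term + (1.0 - alpha) * hard_term


if __name__ == "__main__":
    teacher = [0.7, 0.2, 0.1]   # made-up teacher output for one input
    student = [0.6, 0.3, 0.1]   # made-up student output for the same input
    print(distillation_loss(teacher, student, label=0))
```

Under this reading, self-knowledge distillation would pass an earlier snapshot of the student's own outputs as `teacher_probs`; that interpretation follows the article's description and has not been checked against the paper.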

The significance of this work lies in its potential to bridge the gap between theoretical promise and practical realisation of QNNs. Current quantum computers are limited by qubit coherence times and gate fidelities, meaning that quantum computations are susceptible to errors. Reducing the circuit depth is crucial for mitigating these errors, as each quantum operation introduces a chance for decoherence. By compressing QNNs, researchers can reduce the computational burden and increase the likelihood of obtaining reliable results on noisy intermediate-scale quantum (NISQ) devices. Furthermore, the reduction in qubit count lowers the hardware requirements, making QNNs accessible to a wider range of quantum computing platforms. The team’s approach could be particularly valuable for applications such as image recognition, natural language processing, and materials discovery, where QNNs have shown promise but have been hampered by resource constraints.
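A rough calculation shows why fewer qubits and shallower circuits matter on noisy hardware. The sketch below assumes a deliberately crude error model: every qubit undergoes one noisy operation per circuit layer, and errors are independent with a fixed probability. The model, the function name, and the example sizes are all illustrative assumptions and are not taken from the research.

```python
def estimated_success_probability(n_qubits, depth, gate_error):
    """Estimate the chance that a circuit runs with no error at all.

    Assumes n_qubits * depth independent operations, each failing with
    probability gate_error. Real devices have correlated, gate-dependent
    noise, so this is only an order-of-magnitude guide.
    """
    n_operations = n_qubits * depth
    return (1.0 - gate_error) ** n_operations


if __name__ == "__main__":
    # Hypothetical sizes chosen only to illustrate the trend.
    teacher = estimated_success_probability(n_qubits=8, depth=20, gate_error=0.005)
    student = estimated_success_probability(n_qubits=4, depth=6, gate_error=0.005)
    print(f"teacher-sized circuit: {teacher:.3f}")
    print(f"student-sized circuit: {student:.3f}")
```

Because the estimate decays exponentially with the number of operations, even a modest cut in depth or qubit count can noticeably raise the chance of an error-free run under this simple model.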

Architectural flexibility unlocks potential for wider quantum neural network deployment

The current knowledge distillation methodology demonstrates compression into networks with similar configurations, for example transferring information from a deep QNN to a shallower version of the same architecture. Whether QNNs can be compressed into radically different architectures remains an open question. Exploring this architectural flexibility could unlock even greater resource savings and enable deployment on even more constrained devices. For example, it might be possible to distill the knowledge from a complex, fully connected QNN into a sparser or convolutional QNN, which could be more efficient on certain types of quantum hardware. This would require new distillation techniques that can effectively map the learned representations from one architecture to another. By transferring learned behaviours from a larger, more complex QNN to a smaller one, much as a skilled teacher imparts knowledge to a student, the work establishes a key foundation for resource-efficient quantum machine learning. The analogy to human learning is apt: a student doesn’t necessarily need to replicate the teacher’s exact thought process, but rather to grasp the underlying principles and apply them in a simplified manner.

Further investigation is needed to determine the limits of this approach and its ability to maintain performance across diverse QNN structures, but the initial reduction in qubit count and circuit complexity is vital for bringing practical quantum computation closer to reality. Future research directions include exploring different distillation strategies, investigating the impact of noise on the distillation process, and applying the technique to more complex machine learning tasks. The development of robust and efficient knowledge distillation methods will be crucial for unlocking the full potential of QNNs and realising their transformative impact on various fields. The team plans to investigate the scalability of their approach to even larger QNNs and to explore the use of more advanced distillation techniques, such as adversarial training, to further improve performance. Ultimately, this work represents a significant step towards making quantum machine learning a practical reality, paving the way for a new era of intelligent computation.

This research demonstrated that quantum neural networks can be compressed using knowledge distillation, reducing the resources needed for training. By transferring learning from larger QNNs to smaller ones with similar configurations, the researchers observed a reduction in both the number of qubits and the circuit depth required. This matters because it addresses a key challenge in quantum computing: deploying complex models on hardware with limited capacity. Future work will focus on distilling knowledge between QNNs with different architectures and exploring how the approach scales to even larger networks, potentially accelerating the development of practical quantum machine learning applications.

👉 More information

🗞 Distilling the knowledge with quantum neural networks

🧠 ArXiv: https://arxiv.org/abs/2603.21586