Quantum error correction remains a significant challenge in realising practical quantum computation, because the delicate superposition and entanglement states that carry quantum information are highly susceptible to decoherence, the loss of quantum coherence caused by interaction with the environment. Researchers are actively pursuing ways to mitigate these errors without the large qubit overhead demanded by established codes such as the surface code. A team comprising Nico Meyer, Christopher Mutschler, and Daniel Scherer from the Fraunhofer Institute for Integrated Circuits IIS in Nürnberg, Germany, alongside Andreas Maier from the Pattern Recognition Lab at Friedrich-Alexander-Universität Erlangen-Nürnberg, presents a new approach in the article “Learning Encodings by Maximizing State Distinguishability: Variational Quantum Error Correction”. The work introduces a machine learning objective, termed the ‘distinguishability loss function’, that optimises encoding circuits for specific noise characteristics. The method yields resource-efficient codes and shows promising results both in simulation and on real quantum hardware from IBM and IQM.

Variational Quantum Error Correction (VarQEC) mitigates errors on current quantum hardware, confirming that it works in practice and can preserve quantum information. In the experiments, error correction codes are trained and adapted to the specific noise characteristics of both IBM Quantum and IQM superconducting qubit systems, and they achieve measurable performance gains over uncorrected quantum states. This adaptability is a notable step towards viable error mitigation strategies for near-term quantum devices.

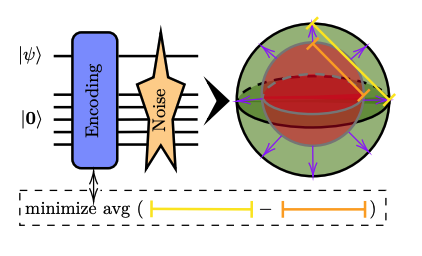

The study emphasises the importance of aligning error correction code design with the underlying hardware architecture and noise profile. VarQEC uses a distinguishability loss function as its training objective, optimising encoding circuits for resource efficiency and for resilience against device-specific errors. The loss measures how well the encoded quantum states remain distinguishable from one another after noise has acted on them. Results from both platforms consistently show performance improvements in patches II and III, indicating that the codes learn to counteract the prevalent noise. These ‘patches’ refer to specific regions within the error landscape where the codes perform better.
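The article does not give the loss in formula form, so the following is only a toy stand-in, not the paper's definition. It assumes independent bit-flip noise and uses the trace distance as the measure of distinguishability: the loss is one minus the trace distance between the noisy versions of two encoded logical states, so a value of 0 means the states stay perfectly distinguishable. The helper names (`basis_density`, `bit_flip_channel`, `toy_distinguishability_loss`) and the choice of a 3-qubit repetition code as the "encoding" are illustrative assumptions.

```python
from itertools import product

import numpy as np

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])


def basis_density(bits):
    """Density matrix |bits><bits| for a computational-basis state."""
    vec = np.zeros(2 ** len(bits))
    vec[int("".join(str(b) for b in bits), 2)] = 1.0
    return np.outer(vec, vec)


def bit_flip_channel(rho, p):
    """Apply an independent bit flip with probability p to every qubit of rho (assumed noise model)."""
    n = rho.shape[0].bit_length() - 1  # number of qubits, since dim = 2**n
    out = np.zeros_like(rho)
    for pattern in product([0, 1], repeat=n):
        op = np.array([[1.0]])
        weight = 1.0
        for flip in pattern:
            op = np.kron(op, X if flip else I2)  # build the Kraus operator qubit by qubit
            weight *= p if flip else 1 - p
        out += weight * op @ rho @ op.conj().T
    return out


def trace_distance(rho, sigma):
    """Half the sum of absolute eigenvalues of the Hermitian matrix rho - sigma."""
    return 0.5 * np.sum(np.abs(np.linalg.eigvalsh(rho - sigma)))


def toy_distinguishability_loss(code_0, code_1, p):
    """Hypothetical loss: 1 - trace distance between the two encoded states after noise."""
    return 1.0 - trace_distance(bit_flip_channel(code_0, p), bit_flip_channel(code_1, p))


p = 0.1
unencoded = toy_distinguishability_loss(basis_density([0]), basis_density([1]), p)
repetition = toy_distinguishability_loss(basis_density([0, 0, 0]), basis_density([1, 1, 1]), p)
print(round(unencoded, 3), round(repetition, 3))  # 0.2 0.056
```

Spreading the logical states over three qubits lowers the toy loss from 0.2 to 0.056, i.e. the noisy encoded states stay easier to tell apart. A variational scheme would replace the fixed code states with the output of a parameterised encoding circuit and tune its parameters to minimise a loss of this kind for the noise at hand.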

Interestingly, experiments on IQM reveal no significant difference in performance between the star and square ansatz topologies (an ansatz is a trial wavefunction or circuit representing a proposed solution to a quantum problem). This suggests that, within the constraints of the connectivity tested, topology does not critically affect the effectiveness of the codes. However, the discovery of a defective qubit on the IQM device underscores how sensitive the codes are to the performance of individual qubits, and highlights the need for robust calibration procedures.
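As a rough illustration of what "within the constraints of the tested connectivity" can mean, the sketch below checks whether every entangling pair of a star-shaped and a square-shaped four-qubit layout is a native coupling on a made-up 3x3 square-lattice device, and how marking one qubit as defective changes the answer. The device, the qubit labels, the two layouts and the helper names (`grid_coupling`, `fits`) are all hypothetical and are not the circuits or hardware layouts used in the paper.

```python
def grid_coupling(rows, cols):
    """Nearest-neighbour coupling map of a hypothetical rows x cols square-lattice device."""
    edges = set()
    for r in range(rows):
        for c in range(cols):
            q = r * cols + c
            if c + 1 < cols:
                edges.add(frozenset((q, q + 1)))  # horizontal neighbour
            if r + 1 < rows:
                edges.add(frozenset((q, q + cols)))  # vertical neighbour
    return edges


# Hypothetical four-qubit layouts on the lattice
#   0 1 2
#   3 4 5
#   6 7 8
STAR = [(4, 1), (4, 3), (4, 5)]            # centre qubit 4 entangles with three neighbours
SQUARE = [(0, 1), (1, 4), (4, 3), (3, 0)]  # four qubits entangled around one plaquette


def fits(ansatz_pairs, coupling, defective=()):
    """True if every entangling pair is a native coupling that avoids defective qubits."""
    bad = set(defective)
    return all(
        frozenset(pair) in coupling and not bad.intersection(pair)
        for pair in ansatz_pairs
    )


device = grid_coupling(3, 3)
print(fits(STAR, device), fits(SQUARE, device))  # True True
print(fits(STAR, device, defective=[0]), fits(SQUARE, device, defective=[0]))  # True False
```

On the healthy lattice both layouts map onto native couplings, so neither pays an extra routing cost; once qubit 0 is flagged as defective, only the star layout still avoids it, which shows how a single bad qubit can force a different placement or a recalibration.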

Future work should incorporate more sophisticated, device-specific noise models into the training process. The current experiments assume uniform noise levels across qubits, a simplification that limits further performance gains. Modelling correlated noise (where an error on one qubit makes errors on others more likely) and qubit-specific variations could yield more robust and effective error correction strategies.
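To make the gap between these noise assumptions concrete, here is a toy sketch that is not taken from the paper. It computes, exactly, the probability that a 3-qubit repetition code with majority-vote decoding fails under three hypothetical bit-flip models: uniform per-qubit rates, qubit-specific rates, and a simple correlated model in which each adjacent pair of qubits occasionally flips together. The function name `majority_failure`, the error rates and the pairwise-correlation model are illustrative assumptions.

```python
from itertools import product


def majority_failure(single_p, pair_p=0.0):
    """Exact logical-failure probability of a repetition code with majority-vote decoding.

    single_p: per-qubit independent bit-flip probabilities (uniform or qubit-specific).
    pair_p:   probability that each adjacent pair (i, i+1) flips together (toy correlated noise).
    """
    n = len(single_p)
    pairs = [(i, i + 1) for i in range(n - 1)]
    probs = list(single_p) + [pair_p] * len(pairs)
    fail = 0.0
    # Enumerate every combination of independent error events
    for events in product([0, 1], repeat=len(probs)):
        weight = 1.0
        for happened, prob in zip(events, probs):
            weight *= prob if happened else 1 - prob
        flips = list(events[:n])  # single-qubit flips
        for k, (a, b) in enumerate(pairs):
            if events[n + k]:  # a correlated event flips both qubits of the pair
                flips[a] ^= 1
                flips[b] ^= 1
        if sum(flips) > n // 2:  # majority vote decodes to the wrong logical value
            fail += weight
    return fail


uniform = majority_failure([0.05, 0.05, 0.05])
specific = majority_failure([0.01, 0.04, 0.10])  # same 5% mean, uneven qubits
correlated = majority_failure([0.05, 0.05, 0.05], pair_p=0.02)
print(round(uniform, 5), round(specific, 5), round(correlated, 5))
```

With these made-up numbers the uniform model gives a failure probability of 0.00725 and the uneven model 0.00532, even though both average 5% per qubit, while adding correlated pair flips pushes it to roughly 0.043. A code trained only against the uniform model would therefore be optimised for the wrong error statistics, which is the limitation the paragraph above points to.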

Extending these experiments to larger qubit systems and more complex quantum circuits is a crucial next step. Investigating how VarQEC scales, and how it performs as the number of qubits and the circuit depth grow, will determine whether it is suitable for more demanding algorithms. Exploring alternative ansatz designs and optimisation techniques could also unlock further performance gains.

The successful implementation of VarQEC on real quantum hardware validates the potential of machine learning-driven approaches to quantum error correction. This research provides a foundation for developing practical error mitigation strategies that can accelerate the progress towards fault-tolerant computation. The demonstrated adaptability of these codes to different hardware platforms suggests a pathway towards customisable solutions tailored to the unique characteristics of each device.

👉 More information

🗞 Learning Encodings by Maximizing State Distinguishability: Variational Quantum Error Correction

🧠 DOI: https://doi.org/10.48550/arXiv.2506.11552