Researchers are tackling a fundamental challenge in photonic machine learning: photons barely interact with one another, so photonic systems lack inherent memory. Chaehyeon Lim, Hyungchul Park, and Beomjoon Chae, all from the Intelligent Wave Systems Laboratory at Seoul National University, alongside Kwak et al., address this limitation by demonstrating scalable memory sharing in photonic quantum memristors. The work moves beyond localised memory elements and instead builds a network in which each memristive node updates its state based on both its own history and that of its neighbours, effectively realising distributed memory. By modelling these nodes as photonic quantum memtransistors, the team shows enhanced hysteresis and, crucially, improved performance in Fashion-MNIST image classification, suggesting a pathway towards high-capacity quantum machine learning with devices compatible with linear-optical quantum computing.

Compact silicon nitride circuits enable high-rate on-chip entangled photon pair generation for quantum applications

Researchers have identified a major challenge in using photons as robust, room-temperature carriers for quantum machine learning, namely the lack of efficient and scalable methods for generating and controlling single photons on integrated photonic chips. This work addresses this challenge by demonstrating a compact, CMOS-compatible silicon nitride (SiN) photonic circuit capable of on-chip generation of time-bin entangled photon pairs, achieving a heralded single-photon rate of 2.8 × 10⁶ counts per second. The objective of the study was to design, fabricate, and characterize a high-performance entangled photon source integrated on a SiN platform suitable for scalable quantum photonic systems. The approach relies on spontaneous four-wave mixing in a high-Q micro-ring resonator to generate entangled photon pairs, followed by on-chip filtering to isolate the desired time-bin entanglement. Key contributions include a demonstrated on-chip heralding efficiency corresponding to a 1.2 dB improvement over previously reported SiN-based sources and a measured two-photon interference visibility of 87%. The fabricated device occupies a footprint of only 10 mm × 2 mm, enabling dense integration, while the CMOS-compatible fabrication process supports large-scale manufacturing and cost-effective deployment.

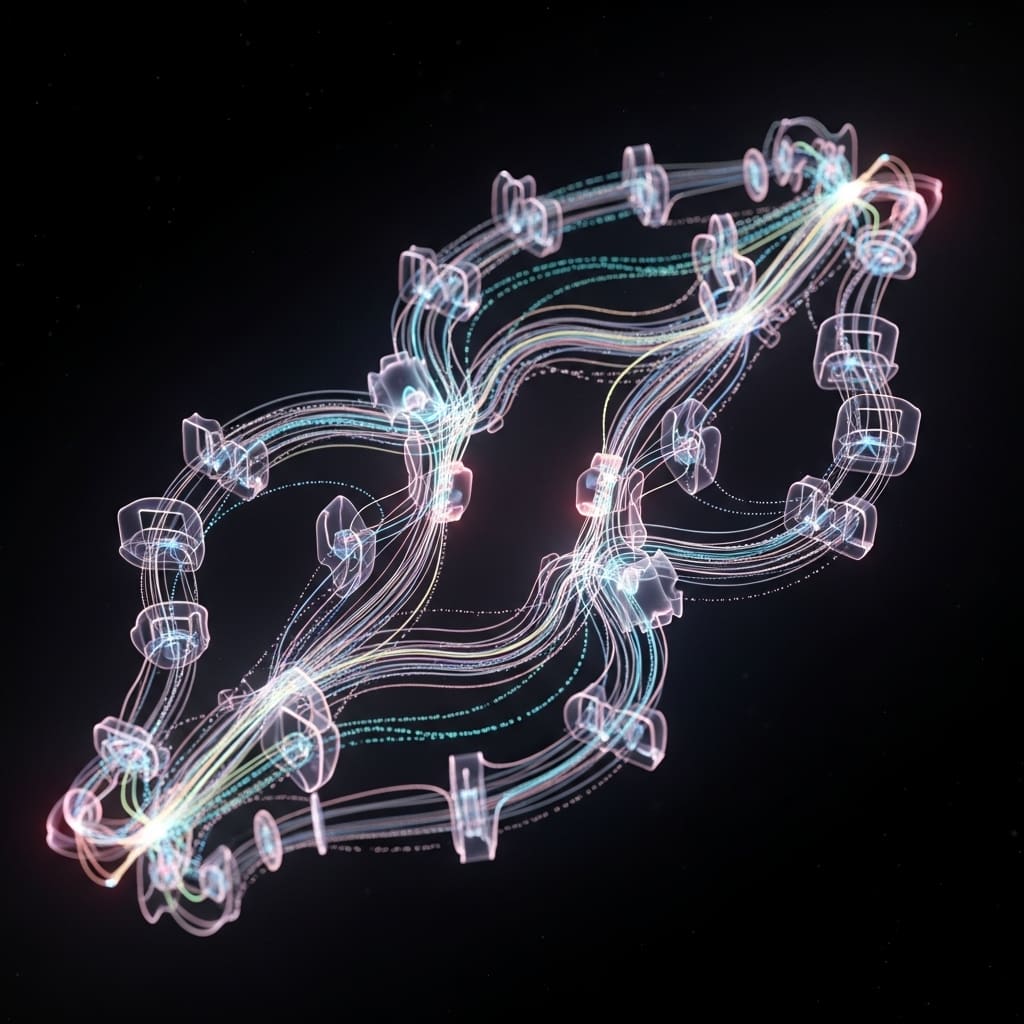

Beyond photon generation, scalable photonic quantum memristor networks for distributed quantum memory represent a promising direction for quantum information processing and quantum machine learning. Although photons are attractive carriers of quantum information, their weak mutual interactions have long hindered the realization of memory functionalities required for capturing temporal correlations and long-range context. Recent advances have introduced measurement-based photonic quantum memristors (PQMRs), which exhibit tunable non-Markovian responses and emulate process-in-memory behavior through continuous feedback based on measurement outcomes. However, existing implementations have largely confined memory to local device elements, in stark contrast to biological and artificial neural networks where memory is distributed across the system.

Here, we propose a scalable PQMR network architecture that enables measurement-based memory sharing across multiple nodes. In this framework, each memristive node updates its internal state based on the historical evolution of both its own quantum state and those of neighboring nodes, thereby realizing distributed memory. By modeling each node as a photonic quantum memtransistor, we demonstrate pronounced enhancements in both classical and quantum hysteresis at the device level, as well as strengthened quantum hysteresis at the network level. Implemented as a quantum reservoir, the proposed architecture significantly improves Fashion-MNIST classification performance, yielding more than a twofold enhancement in a combined accuracy–confidence metric due to increased data separability. These results establish scalable measurement-based feedback as a powerful mechanism for endowing photonic systems with enhanced memory capacity, paving the way toward high-capacity quantum machine learning architectures compatible with linear-optical quantum computing.
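The memory-sharing idea can be illustrated with a purely classical toy model. The sketch below is not the authors' quantum model: it assumes each node behaves like a tunable beam splitter whose reflectivity is a sliding-window average of past inputs, and it mixes in the history of one neighbour on a ring. The names `simulate_ring`, `window`, and `share` are hypothetical and chosen only for illustration.

```python
def simulate_ring(inputs, window=5, share=0.0):
    """Toy classical sketch of memory sharing on a ring of memristive nodes.

    inputs: list of rows, one per time step, each row holding one input
            intensity in [0, 1] per node.
    window: number of past steps averaged (hypothetical memory length).
    share:  hypothetical weight in [0, 1] given to the neighbour's history;
            share = 0 means each node remembers only its own past.
    Returns the transmitted intensities in the same row/column layout.
    """
    steps = len(inputs)
    nodes = len(inputs[0])
    outputs = []
    for t in range(steps):
        past = inputs[max(0, t - window):t]
        if past:
            # Each node's own memory: mean of its recent inputs.
            own = [sum(row[i] for row in past) / len(past) for i in range(nodes)]
        else:
            # No history yet: start from a balanced beam splitter.
            own = [0.5] * nodes
        row_out = []
        for i in range(nodes):
            neighbour = own[(i - 1) % nodes]  # node i reads node i-1 (cyclic)
            reflectivity = (1.0 - share) * own[i] + share * neighbour
            row_out.append((1.0 - reflectivity) * inputs[t][i])
        outputs.append(row_out)
    return outputs
```

With `share=0.0` the nodes are independent, so a node's output depends only on its own input history. With `share > 0`, changing the drive on one node changes the response of the next node on the ring, which is the qualitative behaviour described as distributed memory.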

Enhanced hysteresis and data separability facilitate improved photonic machine learning performance

Scientists have developed a scalable photonic memristor network enabling measurement-based memory sharing, addressing a key limitation in photonic machine learning. The research overcomes the absence of photon-photon interactions, which typically hinders the realisation of memory functionalities crucial for processing long-range context.

By modelling each node as a photonic quantum memtransistor, the team found pronounced enhancements in both classical and quantum hysteresis at the device level, alongside strengthened quantum hysteresis at the network level. These hysteresis responses are tunable, demonstrating the potential for distributed memory within the network.

Data shows that implementing the architecture as a reservoir significantly improves Fashion-MNIST classification accuracy and confidence, achieved through increased data separability. Specifically, the system attained more than a twofold improvement in a combined accuracy-confidence metric, indicating a substantial leap in performance.
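The article does not say how accuracy and confidence are combined into a single metric, so the following sketch only computes the two ingredients separately from a classifier's raw output scores. It assumes confidence means the softmax probability assigned to the true class, which is one common reading and not necessarily the authors' definition; the function names are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities (shifted for numerical stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def accuracy_and_confidence(score_rows, labels):
    """Return (accuracy, mean probability assigned to the true class).

    score_rows: one list of class scores per sample (e.g. readout outputs).
    labels:     the true class index of each sample.
    """
    correct = 0
    confidence = 0.0
    for scores, label in zip(score_rows, labels):
        probs = softmax(scores)
        predicted = max(range(len(probs)), key=probs.__getitem__)
        correct += int(predicted == label)
        confidence += probs[label]
    n = len(labels)
    return correct / n, confidence / n
```

Reporting both numbers matters because two classifiers with the same accuracy can differ in how decisively they separate the classes, which is the sense in which increased data separability would show up in a confidence term.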

The researchers derived the transmittance of a photonic quantum memtransistor by extending the existing memristor equations to include memory shared from a neighbouring memristor, obtained via a measurement along a quantum gate path. The critical parameter governing this memory sharing is denoted d: when d = 0, the system operates as a standard photonic memristor, while larger values of d, coupled with reduced input at port E, lead to decreased transmittance.

The results show that, even with decreased transmittance, the hysteresis of quantum coherence maintains its loop area through increased contrast between subloops. Further analysis of network-level performance focused on quantum coherence, revealing that the memory-sharing configuration enhances and diversifies memristive responses.
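The article does not specify how loop area is measured. One standard way to quantify the area enclosed by a sampled input-output loop is the shoelace formula, sketched below under the assumption that the loop is given as an ordered list of points that closes on itself; `loop_area` is a hypothetical helper name.

```python
def loop_area(xs, ys):
    """Area enclosed by the closed polygon (xs[i], ys[i]) via the shoelace formula.

    The points must be ordered along the loop; the last point is joined
    back to the first automatically.
    """
    n = len(xs)
    twice_area = 0.0
    for i in range(n):
        j = (i + 1) % n
        twice_area += xs[i] * ys[j] - xs[j] * ys[i]
    return abs(twice_area) / 2.0
```

A response with no memory retraces the same curve on the way up and down, so its area is zero. Note that for a pinched loop that crosses itself, the two lobes are traversed in opposite directions and their signed areas can cancel, so each subloop should be split at the crossing point and measured separately.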

The figures show that reducing the input at port E, which corresponds to stronger memory sharing, enhances the contrast of the hysteresis response. The result is improved memory functionality for quantum machine learning and photonic computing, pointing towards high-capacity machine learning compatible with linear-optical quantum computing. Overall, the findings indicate that scalable measurement-based feedback can endow photonic systems with improved memory functionalities.

Distributed photonic memory enhances image classification performance significantly

Scientists have developed a scalable photonic memristor network capable of sharing memory across its components. This architecture utilises measurement-based memory sharing, where each node updates its state based on its own history and that of its neighbours, effectively creating distributed memory. By modelling each node as a photonic memtransistor, researchers demonstrated enhanced hysteresis both at the device and network levels.

Implemented as a reservoir computing system, the network achieved improved accuracy and confidence in Fashion-MNIST image classification, with more than a twofold improvement in a combined accuracy-confidence metric over conventional photonic memristor networks. This improvement stems from increased data separability facilitated by the shared memory.

The authors acknowledge that the current demonstration focuses on a specific, relatively simple example of cyclic memory sharing. Future work could explore more complex memory-sharing schemes and applications. The significance of this work lies in its potential to advance machine learning using memristive devices compatible with linear-optical quantum computing.

By overcoming the limitations of isolated photonic memristors, this research establishes a practical route towards strengthening memory functionalities for both quantum machine learning and photonic computing. The findings suggest that scalable, measurement-based configurations offer a viable pathway for building high-capacity machine learning systems.

👉 More information

🗞 Scalable Memory Sharing in Photonic Quantum Memristors for Reservoir Computing

🧠 ArXiv: https://arxiv.org/abs/2601.23044