On April 4, 2025, researchers presented a framework in their study titled "Optimizing Quantum Circuits via ZX Diagrams using Reinforcement Learning and Graph Neural Networks." The framework uses machine learning to minimize the number of two-qubit gates in a quantum circuit. Because these gates are a major source of noise-induced errors, using fewer of them improves reliability on current quantum hardware.

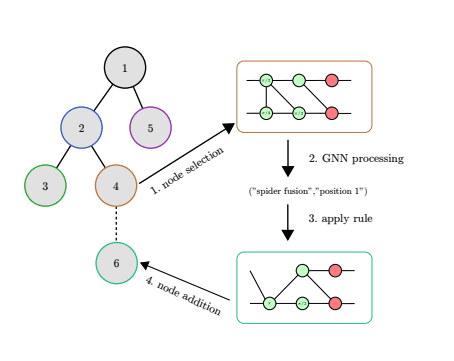

Quantum computing is limited by noise, much of it introduced by two-qubit gates. The researchers developed a circuit optimization framework based on ZX calculus, graph neural networks, and reinforcement learning. Their method combines reinforcement learning with tree search to select sequences of rewrite rules that reduce the number of CNOT gates. Tested on diverse random circuits, the approach was competitive with state-of-the-art optimizers.
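The idea of searching over rewrite sequences can be illustrated with a small sketch. It is not the paper's method: it works on a plain gate list rather than a ZX diagram, uses only two hand-written rewrite rules, and replaces the learned policy with a simple CNOT-count score inside a beam search. The names `cnot_count`, `rewrites`, and `beam_search` are hypothetical and chosen for this illustration only.

```python
# Toy search over rewrite sequences (illustration only, not the paper's method).
# A circuit is a tuple of gates; a gate is a tuple like ("CNOT", 0, 1) or ("H", 2).

def cnot_count(circuit):
    """Number of CNOT gates, used here as the cost to minimize."""
    return sum(1 for gate in circuit if gate[0] == "CNOT")

def rewrites(circuit):
    """Yield every circuit reachable by applying one local rewrite rule."""
    for i in range(len(circuit) - 1):
        a, b = circuit[i], circuit[i + 1]
        if a == b and a[0] in ("CNOT", "H"):
            # Rule 1: two identical adjacent self-inverse gates cancel.
            yield circuit[:i] + circuit[i + 2:]
        elif set(a[1:]).isdisjoint(b[1:]):
            # Rule 2: adjacent gates acting on disjoint qubits commute.
            yield circuit[:i] + (b, a) + circuit[i + 2:]

def beam_search(circuit, width=8, depth=6):
    """Explore rewrite sequences, keeping the `width` cheapest circuits per level.

    The sort key stands in for a learned policy or value function (assumption).
    """
    best, frontier, seen = circuit, [circuit], {circuit}
    for _ in range(depth):
        candidates = []
        for current in frontier:
            for nxt in rewrites(current):
                if nxt not in seen:
                    seen.add(nxt)
                    candidates.append(nxt)
        if not candidates:
            break
        candidates.sort(key=lambda c: (cnot_count(c), len(c)))
        frontier = candidates[:width]
        if cnot_count(frontier[0]) < cnot_count(best):
            best = frontier[0]
    return best

example = (("CNOT", 0, 1), ("H", 2), ("CNOT", 0, 1))
optimized = beam_search(example)
print(optimized)  # (('H', 2),)
```

In this example no single rewrite lowers the CNOT count at first. The Hadamard has to be commuted out of the way before the two CNOTs can cancel, which is why searching over sequences of rewrites can find reductions that a greedy one-step rule would miss.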

ZX calculus is a graphical language for representing and manipulating quantum circuits. Developed as an alternative to traditional algebraic methods, it represents quantum operations as diagrams, which can make intricate concepts easier to grasp for both experts and newcomers.
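To make the diagram idea concrete, here is a minimal sketch of a ZX diagram as a graph. In ZX calculus the nodes are called spiders; each has a colour (Z or X) and a phase, and one basic rewrite rule, spider fusion, merges two connected spiders of the same colour and adds their phases. The `ZXDiagram` class and its methods are hypothetical names for this illustration. The toy does not model parallel edges, so it refuses to fuse spiders that share a neighbour.

```python
from fractions import Fraction

class ZXDiagram:
    """Toy ZX diagram: spiders with a colour ('Z' or 'X') and a phase in units of pi."""

    def __init__(self):
        self.colour = {}      # spider id -> 'Z' or 'X'
        self.phase = {}       # spider id -> Fraction, multiple of pi, taken mod 2
        self.neighbours = {}  # spider id -> set of adjacent spider ids
        self._next_id = 0

    def add_spider(self, colour, phase=Fraction(0)):
        v = self._next_id
        self._next_id += 1
        self.colour[v] = colour
        self.phase[v] = Fraction(phase) % 2
        self.neighbours[v] = set()
        return v

    def add_edge(self, u, v):
        self.neighbours[u].add(v)
        self.neighbours[v].add(u)

    def fuse(self, u, v):
        """Spider fusion: merge v into u. Returns False if the rule does not apply."""
        if v not in self.neighbours[u] or self.colour[u] != self.colour[v]:
            return False
        if self.neighbours[u] & self.neighbours[v]:
            return False  # shared neighbour would create parallel edges (not modelled)
        self.phase[u] = (self.phase[u] + self.phase[v]) % 2
        for w in self.neighbours.pop(v):
            self.neighbours[w].discard(v)
            if w != u:
                self.add_edge(u, w)
        del self.colour[v], self.phase[v]
        return True

diagram = ZXDiagram()
a = diagram.add_spider("Z", Fraction(1, 4))  # Z spider with phase pi/4
b = diagram.add_spider("Z", Fraction(1, 2))  # Z spider with phase pi/2
x = diagram.add_spider("X")                  # X spider with phase 0
diagram.add_edge(a, b)
diagram.add_edge(b, x)
diagram.fuse(a, b)
print(diagram.phase[a])  # 3/4
```

After the fusion, the two Z spiders have become a single spider with phase 3π/4 that is connected directly to the X spider.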

ZX calculus has been shown to be complete for several fragments of quantum mechanics, meaning that any equality between diagrams in the fragment can be derived using the rewrite rules alone. It is complete for stabilizer quantum mechanics, a foundational area in quantum computing, and also for the Clifford+T gate set, which is crucial for universal quantum computation. This makes ZX calculus suitable for both theoretical exploration and practical applications.

Despite its strengths, ZX calculus presents challenges, particularly in circuit extraction, the process of converting a diagram back into an executable quantum circuit. Recent studies have shown that this task is #P-hard in general. This complexity highlights the need for better algorithms and optimizations to make circuit extraction more efficient and scalable.

Recent work has also addressed multi-control gates, a critical component of many quantum algorithms. Researchers have developed techniques within ZX calculus that simplify the decomposition of these gates, making them easier to handle in quantum circuit design.

Combining machine learning with ZX calculus is another active direction. Learned models can guide the optimization of ZX diagrams, for example by choosing which rewrite rule to apply next. This can reduce the cost of the resulting circuits and helps automate optimization tasks in quantum computing.

Tools such as PyZX have emerged to facilitate these advancements. As an open-source library, PyZX provides a practical platform for working with ZX calculus, enabling researchers and developers to experiment and build upon existing work. This accessibility is crucial for fostering collaboration and accelerating innovation in the field.

In conclusion, ZX calculus offers both theoretical depth and practical utility for quantum computing. Unlocking its full potential will depend on addressing challenges such as the complexity of circuit extraction and on making effective use of machine learning. With ongoing development and contributions from the community, ZX calculus is likely to play a growing role in quantum circuit design and optimization, and it may also offer new insights into the fundamental principles of quantum mechanics.

🗞 Optimizing Quantum Circuits via ZX Diagrams using Reinforcement Learning and Graph Neural Networks

🧠 DOI: https://doi.org/10.48550/arXiv.2504.03429