Researchers are increasingly focused on understanding why usage of models within public repositories remains skewed, despite the vast number available. Jonathan Kahana, Eliahu Horwitz, and Yedid Hoshen, all from The Hebrew University of Jerusalem, investigated whether this reflects genuine superiority of popular models or a systematic overlooking of potentially better alternatives. Their extensive evaluation of over 2,000 fine-tuned models revealed a significant number of “hidden gems”, unpopular checkpoints that demonstrably outperform their more widely used counterparts, even achieving a substantial performance boost in areas like mathematics (from 83.2% to 96.0% for Llama-3.1-8B) without increased computational cost. Recognising the impracticality of exhaustively testing every model, the team formulated the discovery process as a Multi-Armed Bandit problem, developing a method that accelerates identification of top models by over 50x using just 50 queries per candidate.

Overlooked models outperform popular counterparts consistently

Scientists have demonstrated the widespread existence of “hidden gems” within public model repositories, revealing that high-performing, yet unpopular, fine-tuned models are consistently overlooked by the community. This work challenges the assumption that concentrated usage patterns reflect efficient market selection, suggesting instead that superior models are systematically being missed. Notably, within the Llama-3.1-8B family, the team discovered checkpoints that improve math performance from 83.2% to 96.0% on the GSM8K benchmark without increasing inference costs, highlighting the potential for substantial gains through more effective model discovery.

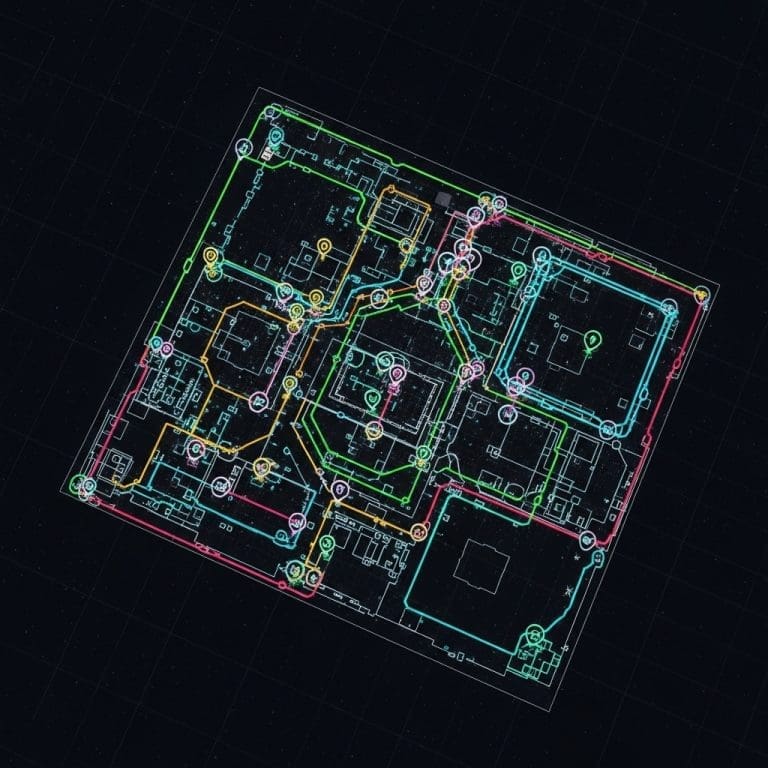

The study addresses the bottleneck of selecting the best model from the millions available in repositories like Hugging Face, where documentation is an unreliable guide because model cards are often incomplete or missing. Rather than ranking new models against existing leaderboards, the researchers formulated the problem as a Multi-Armed Bandit (MAB) challenge, aiming to identify top-performing models from scratch without prior ranking knowledge. They adapted the Sequential Halving algorithm, accelerating it with shared query sets and aggressive elimination schedules to achieve a speedup of more than 50x in the discovery process. This approach retrieves top models with as few as 50 queries per candidate, making large-scale model evaluation computationally feasible.
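The search described above can be illustrated with a minimal sketch of classic Sequential Halving in which every surviving model is scored on the same randomly drawn batch of queries in each round. This is not the authors' implementation: the function names, the `keep_one_in` parameter, the even split of the budget across rounds, and the toy models (represented only by a made-up accuracy in percent) are all assumptions made for illustration.

```python
import random


def count_rounds(n_models, keep_one_in):
    """Number of elimination rounds needed to shrink n_models down to one."""
    rounds = 0
    while n_models > 1:
        n_models = -(-n_models // keep_one_in)  # ceiling division
        rounds += 1
    return rounds


def sequential_halving(models, queries, score, total_budget, keep_one_in=2, seed=0):
    """Return the name of the last surviving model.

    models:       dict mapping a name to a model object
    queries:      list of benchmark items
    score:        score(model, query) -> 1 if correct, else 0
    total_budget: total number of (model, query) evaluations to spend
    keep_one_in:  keep one in every `keep_one_in` models per round
                  (2 is classic halving; larger values eliminate faster)
    """
    if not models:
        raise ValueError("models must not be empty")
    if keep_one_in < 2:
        raise ValueError("keep_one_in must be at least 2")
    rng = random.Random(seed)
    survivors = list(models)
    rounds = count_rounds(len(survivors), keep_one_in)
    for _ in range(rounds):
        # Split the budget evenly over rounds, then over current survivors.
        per_model = total_budget // (rounds * len(survivors))
        per_model = max(1, min(per_model, len(queries)))
        # One batch per round, shared by every survivor (paired comparison).
        batch = rng.sample(queries, per_model)
        accuracy = {
            name: sum(score(models[name], q) for q in batch) / per_model
            for name in survivors
        }
        survivors.sort(key=accuracy.get, reverse=True)
        keep = -(-len(survivors) // keep_one_in)
        survivors = survivors[:keep]
    return survivors[0]


# Toy usage: each "model" is just a hypothetical accuracy in percent.
toy_models = {"popular": 80, "average_a": 60, "average_b": 70, "gem": 95}
toy_queries = list(range(1000))


def toy_score(accuracy_pct, query_id):
    """Deterministic stand-in for running a model on one benchmark item."""
    return 1 if query_id % 100 < accuracy_pct else 0


# A budget of 50 evaluations per candidate, averaged over the whole search.
winner = sequential_halving(toy_models, toy_queries, toy_score, total_budget=4 * 50)
```

Because all survivors in a round answer the same questions, differences in their scores reflect the models rather than the luck of the draw, which is the intuition behind the shared query sets. With small batches the sketch can still eliminate the best model by chance, so the budget trades cost against reliability.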

Experiments consistently revealed that unpopular models, termed “hidden gems”, frequently outperform widely used baselines across diverse tasks. For example, within the Qwen 7B model tree, a discovered gem improved performance on the ARC-C benchmark by 1.1%, on MMLU by 2.6%, and on the challenging GSM8K benchmark by a substantial 40.1%. Similarly, in the Mistral 7B family, a hidden gem demonstrated improvements ranging from 7.2% on ARC-C to 9.6% on MMLU. The research establishes a clear need for more efficient model discovery methods that move beyond simple download counts as a proxy for performance.

This work opens avenues for developing intelligent model selection tools that can actively identify and promote these hidden gems, democratising access to high-performing models and maximising the potential of fine-tuned checkpoints. By framing the discovery process as an MAB problem and optimising the Sequential Halving algorithm, the team has not only accelerated the search for superior models but also provided a scalable framework for continuously evaluating and updating model rankings within rapidly expanding repositories. The implications extend beyond academic research, offering practical benefits for developers and users who rely on large language models for a wide range of applications.

Language Model Evaluation via Multi-Armed Bandit approaches

The researchers investigated the prevalence of “hidden gems” within a vast landscape of over 2,000 fine-tuned language models hosted on public repositories. The study evaluated models from families including Qwen, Mistral, and Llama-3.1-8B, employing a diverse benchmark suite to assess performance across multiple tasks. Researchers measured performance on ARC-C, WinoGrande, MMLU, MBPP, GSM8K, and RouterBench, establishing a comprehensive performance profile for each model. To quantify improvements, the team calculated gains achieved by “gem” models compared to their popular counterparts, reporting increases ranging from +1.1% to +40.1% depending on the task and model family.

The core methodological innovation lay in framing model discovery as a Multi-Armed Bandit (MAB) problem, specifically adapting the Sequential Halving algorithm. This approach enabled researchers to navigate the expansive model space efficiently without exhaustive evaluation. The search evaluated all candidate models on shared query sets, reducing variance and accelerating the identification of high-performing checkpoints. The team also implemented an aggressive elimination schedule, discarding underperforming models early in the process to further optimise search efficiency. The resulting method retrieved top-performing models with as few as 50 queries per candidate, a greater than 50x acceleration compared to exhaustive search.
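To make the budget arithmetic concrete, the sketch below counts how many evaluations a staged elimination schedule spends and compares that with scoring every model on a full benchmark. The schedule, the helper names, and the benchmark size of 2,500 queries are hypothetical values chosen for illustration; the paper's actual schedule is not given here.

```python
def schedule_cost(n_models, schedule):
    """Count evaluations for a staged elimination schedule.

    schedule: list of (queries_per_survivor, keep_one_in) tuples, one per round.
    Returns (total_evaluations, average_per_initial_candidate, survivors_left).
    """
    survivors = n_models
    total = 0
    for queries_per_survivor, keep_one_in in schedule:
        total += survivors * queries_per_survivor
        # Ceiling division: keep one in every `keep_one_in` survivors.
        survivors = max(1, -(-survivors // keep_one_in))
    return total, total / n_models, survivors


def speedup(benchmark_size, n_models, total_evaluations):
    """Ratio of exhaustive cost (every model on every query) to the search cost."""
    return (benchmark_size * n_models) / total_evaluations


# Hypothetical schedule: few queries for many models, many queries for few models.
total, average, left = schedule_cost(2000, [(10, 10), (100, 10), (1000, 10)])
```

Under these assumed numbers, most of the 2,000 candidates are discarded after only 10 queries each, so the average cost per candidate stays low even though the finalists are examined much more closely. An average of 50 queries per candidate corresponds to a 50x saving only if exhaustive evaluation would need about 2,500 queries per model, which is an inference from the reported figures rather than a number stated in the article.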

To establish a baseline for comparison, the study assessed the performance of base models within each family against the discovered “hidden gems”. The team rigorously tracked download counts for each model, revealing a highly skewed distribution where a small fraction of models dominated usage. This data informed the hypothesis that superior models were often overlooked, prompting the development of the accelerated Sequential Halving algorithm. The method’s ability to rapidly identify models improving math performance from 83.2% to 96.0% demonstrates its potential to unlock significant gains from the long tail of available models.

Furthermore, the research detailed performance gains on specific benchmarks, such as a gain of 12.8 percentage points (from 83.2% to 96.0%) on the GSM8K math dataset using a rarely downloaded Llama-3.1-8B checkpoint. The team’s approach not only accelerates discovery but also enhances average performance by over 4.5%, highlighting the practical benefits of the methodology. By formulating the problem as an MAB, the researchers bypassed the computational limitations of exhaustive evaluation, paving the way for more efficient exploration of the rapidly expanding landscape of fine-tuned language models.
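Reported gains of this kind can be read either as percentage points or as relative improvement, and the two differ noticeably. The short sketch below works through both for the accuracy figures quoted in this article; the helper names are illustrative only.

```python
def absolute_gain(base_pct, new_pct):
    """Gain in percentage points, e.g. 83.2 -> 96.0 is 12.8 points."""
    return new_pct - base_pct


def relative_gain(base_pct, new_pct):
    """Gain relative to the baseline accuracy, expressed in percent."""
    return 100.0 * (new_pct - base_pct) / base_pct


# Llama-3.1-8B on GSM8K, using the accuracies quoted in the article.
llama_points = round(absolute_gain(83.2, 96.0), 1)
llama_relative = round(relative_gain(83.2, 96.0), 1)

# Qwen-3B on GSM8K, using the accuracies quoted in the article.
qwen_points = round(absolute_gain(83.5, 89.0), 1)
```

For the Llama-3.1-8B case, the move from 83.2% to 96.0% is 12.8 percentage points, or roughly 15.4% relative to the baseline.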

Unpopular models yield substantial performance gains

Scientists have uncovered a significant number of “hidden gem” language models within public repositories, challenging the assumption that high download counts accurately reflect model quality. The research team evaluated over 2,000 fine-tuned models, revealing that unpopular models frequently outperform their more popular counterparts across a range of benchmarks. Notably, within the Llama-3.1-8B family, rarely downloaded checkpoints improved math performance on the GSM8K benchmark from 83.2% to an impressive 96.0%, all without increasing inference costs. This substantial gain demonstrates the potential for significant performance improvements hidden within the vast landscape of available models.

Experiments consistently identified these hidden gems across several model families, including Qwen 3B, Qwen 7B, Mistral 7B, and Llama 8B. The data show that a math-oriented fine-tune within the Qwen-3B tree boosted accuracy on the GSM8K benchmark from 83.5% to 89.0%, approaching the performance of the larger Qwen-7B base version while using fewer parameters. The Mistral 7B tree exhibited even more dramatic improvements, with a hidden gem achieving a 40.1% increase in performance on RouterBench, a composite benchmark evaluating diverse capabilities. These gains were not limited to specific tasks, with improvements observed across multiple benchmarks including ARC-C, WinoGrande, MMLU, MBPP, and RouterBench.

To address the computational infeasibility of exhaustively evaluating all uploaded models, the researchers formulated the model discovery process as a Multi-Armed Bandit problem. Adapting the Sequential Halving search algorithm, they incorporated shared query sets and aggressive elimination schedules to accelerate the identification of top-performing models. Tests show that their method can retrieve top models with as few as 50 queries per candidate, an acceleration of more than 50x compared to exhaustive baseline approaches. The team measured performance gains of over 4.5% on average, demonstrating the effectiveness of their accelerated discovery method.

Table 1 summarises the results, showcasing performance gains achieved by the discovered gems compared to the base models within each model tree. For instance, in the Qwen 7B tree, the gem model achieved a 5.4% improvement on the WinoGrande benchmark and a 2.6% improvement on the MMLU benchmark. These results highlight the potential to unlock substantial performance improvements by efficiently identifying and deploying these overlooked models, offering a pathway to more effective and accessible AI solutions.

Overlooked models boost performance via efficient search

Scientists have discovered that public repositories of fine-tuned language models contain numerous “hidden gems”, models that, despite being unpopular, significantly outperform their more widely used counterparts. An extensive evaluation of over 2,000 models revealed that these overlooked fine-tunes can deliver substantial improvements in performance, such as increasing math accuracy from 83.2% to 96.0% without raising inference costs. This suggests that current model selection processes may not be efficiently identifying the best available options. To address the computational challenge of evaluating every uploaded model, researchers formulated the discovery process as a Multi-Armed Bandit problem and developed an accelerated Sequential Halving search algorithm.

This method, utilising shared query sets and aggressive elimination schedules, can retrieve top-performing models with as few as 50 queries per candidate, representing a more than 50-fold acceleration in discovery speed. The approach consistently identified elite models across different model sizes, demonstrating its robustness and effectiveness. The findings highlight a significant opportunity to improve the performance of language models by more effectively leveraging the wealth of resources available in public repositories. However, the authors acknowledge that the evaluation was limited to a specific set of models and tasks, and that the optimal search strategy may vary depending on the application. Future research could explore adaptive query strategies and investigate the transferability of these “hidden gems” to other domains and model architectures, potentially leading to more efficient and effective model selection practices.

🗞 Discovering Hidden Gems in Model Repositories

🧠 ArXiv: https://arxiv.org/abs/2601.22157