Penn State researchers have unveiled a new optical computing prototype poised to dramatically reduce the energy demands of artificial intelligence. Led by Xingjie Ni, associate professor of electrical engineering, the team detailed their breakthrough on February 11 in Science Advances. The system utilizes a looping “infinity mirror” design to encode data directly into light beams, accelerating AI computation with significantly lower power consumption. “Traditional computers…consume significant energy and generate a lot of heat,” explains Ni, highlighting the potential of this technology to overcome a critical limitation of current AI systems. By leveraging readily available components, this innovation promises a future where AI’s “heavy math” can be processed with unprecedented efficiency.

Light-Based Computation: Shifting from Electronics to Photons

Photons offer a compelling alternative to electrons in the relentless pursuit of more efficient artificial intelligence. A new prototype developed at Penn State demonstrates a pathway toward drastically reducing the energy demands of AI computation, a challenge that currently strains data centers globally. The system, detailed in a paper published February 11 in Science Advances, moves beyond simply accelerating calculations and tackles the fundamental issue of power consumption. Instead of relying on traditional electronic circuits, the device encodes data directly into beams of light, leveraging the unique properties of photons to perform complex operations.

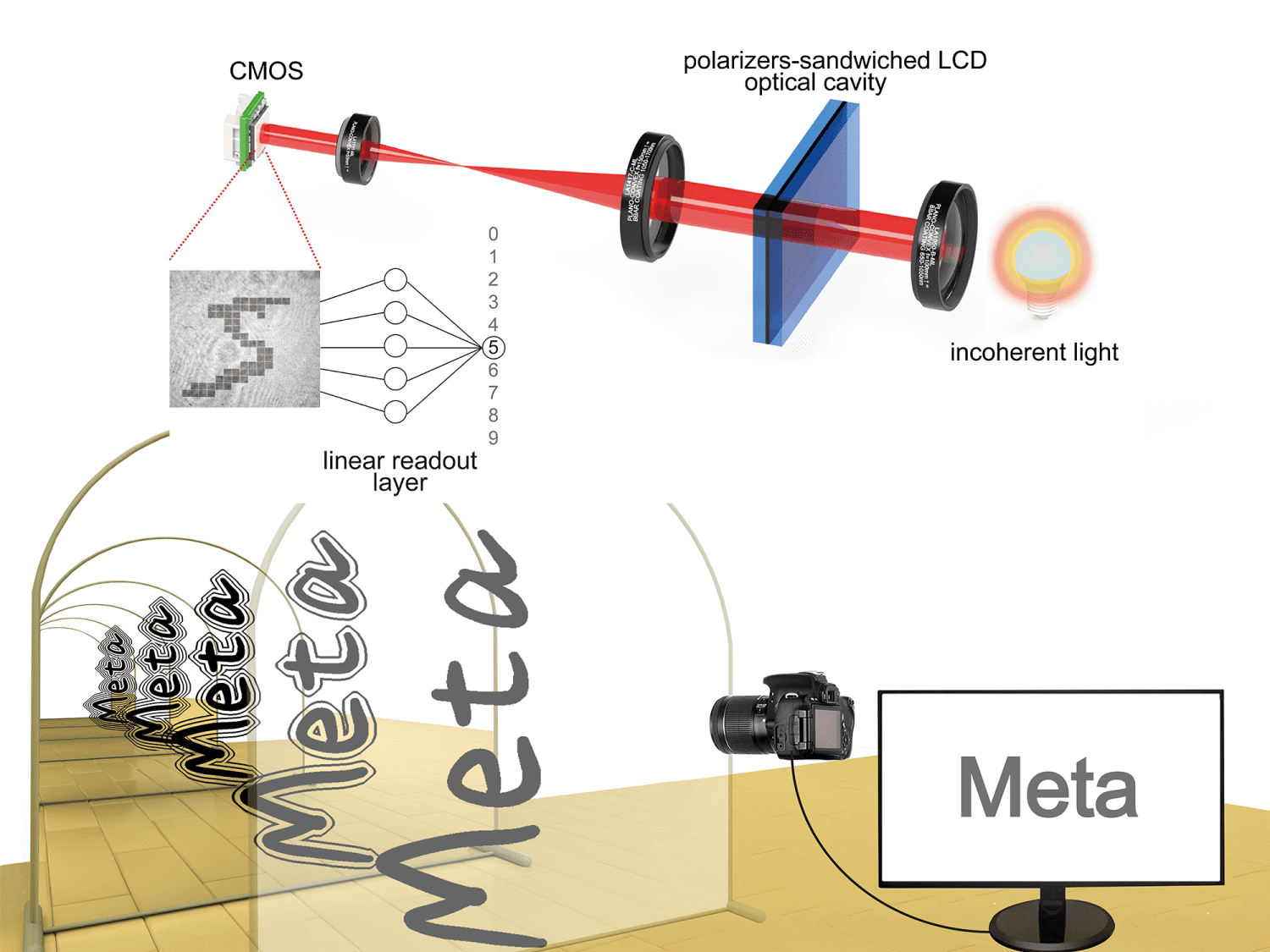

The Penn State team’s innovation utilizes a looping design, reminiscent of an “infinity mirror,” to repeatedly pass light through tiny optical elements. This allows for the creation of nonlinear relationships crucial for advanced AI tasks, something previously difficult to achieve efficiently with light alone. “In most prior demonstrations, however, light handles only the linear, or straightforward, part of computation,” Ni stated, describing the limitation this approach is designed to overcome. Crucially, the prototype is constructed from readily available components, such as those found in LCD displays and LED lights, avoiding the need for exotic materials or high-power lasers.

This focus on accessibility promises a scalable solution to the growing energy crisis surrounding AI. The potential impact on industry is substantial; data centers currently struggle with energy use and heat generation from GPUs, and a more efficient optical module could alleviate this bottleneck. Ni believes this could lead to “cheaper, more sustainable AI services for consumers,” and enable more widespread deployment of AI in devices ranging from cameras to autonomous vehicles, allowing them to operate “in real time, keep sensitive data local and rely less on constant connectivity.”

Nonlinear Optical Loop Enables AI’s Complex Functions

Existing approaches to accelerating artificial intelligence often hit a power wall, demanding ever-increasing energy and cooling infrastructure. Optical computing offers an alternative, processing information with light instead of electricity, and promising speed advantages due to the nature of photons. However, previous optical systems largely addressed only the linear aspects of computation – predictable, straightforward calculations – leaving the more complex, decision-making functions of AI to electronic processors. The Penn State team’s innovation directly tackles this limitation.

Their system doesn’t simply use light for basic calculations, but instead harnesses a looping design to generate the nonlinear behavior crucial for advanced AI functions. “The decision-making that makes AI powerful is nonlinear in nature,” Ni explains, meaning the output isn’t proportional to the input.
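
To make the linear/nonlinear distinction concrete, here is a short worked statement. It is standard linear algebra rather than anything taken from the paper, and the symbols $x$ (input), $W_1$, $W_2$ (linear stages) and $\sigma$ (a nonlinear function) are introduced here purely for illustration. Stacking linear stages collapses into a single linear stage:

$$
W_2\,(W_1 x) = (W_2 W_1)\,x = W x
$$

so adding more purely linear steps adds no expressive power. Only when a nonlinear function sits between the stages,

$$
y = W_2\,\sigma(W_1 x),
$$

does the overall mapping generally stop being expressible as one matrix applied to $x$. That intermediate nonlinear step is the part that optical systems have typically left to electronics.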

Rather than relying on specialized materials or high-power lasers to achieve this nonlinearity, the team implemented a “compact multi-pass optical loop, like an ‘infinity mirror,’ in which the light pattern effectively ‘builds up’ a nonlinear relationship between the input data and the output over repeated passes between the mirrors.” This approach utilizes readily available components, “like what’s used in everyday LCD displays and LED lights,” minimizing both cost and complexity. “Companies are spending enormous amounts on electricity and cooling,” Ni states, noting that energy and heat generation often pose a greater operational challenge than simply acquiring enough GPUs. The team is now focused on creating a programmable and robust module for wider deployment.
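
The idea that repeated passes can "build up" a nonlinear input-output relationship can be illustrated with a toy numerical sketch. This is not the team's optical model: the mixing weights, the uniform starting field, and the function names `mix` and `multi_pass` are all assumptions made for illustration. Each pass is linear in the light field, yet because the same data modulates the field on every pass, the final output depends on a higher power of the data.

```python
# Toy model only: the weights, sizes, and function names are illustrative
# assumptions, not the optics described in the paper.

def mix(field):
    """Fixed linear mixing step (stands in for propagation between the mirrors)."""
    weights = [
        [0.5, 0.3, 0.2],
        [0.1, 0.6, 0.3],
        [0.4, 0.1, 0.5],
    ]
    return [sum(w * f for w, f in zip(row, field)) for row in weights]


def multi_pass(data, passes):
    """Send a uniform starting field through the same data-encoded mask `passes` times."""
    field = [1.0] * len(data)
    for _ in range(passes):
        # Each individual pass is linear in the field: modulate by the data, then mix.
        modulated = [d * f for d, f in zip(data, field)]
        field = mix(modulated)
    return field


data = [0.2, 0.5, 0.9]
doubled = [2 * d for d in data]

# With one pass, doubling the data roughly doubles the output (linear in the data).
one_pass_ratio = multi_pass(doubled, 1)[0] / multi_pass(data, 1)[0]

# With three passes, doubling the data scales the output by about 2**3 (cubic in the data).
three_pass_ratio = multi_pass(doubled, 3)[0] / multi_pass(data, 3)[0]

print(one_pass_ratio, three_pass_ratio)
```

In this sketch the degree of the input-output relationship grows with the number of passes, even though no individual step is nonlinear. The real device will differ in its physics and details; the sketch only shows why reusing the same encoded data across a loop can yield nonlinearity without specialized materials.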

Prototype Reduces AI Energy Use & System Size

The escalating energy demands of artificial intelligence are prompting innovative solutions, and a team at Penn State is tackling the problem with a fundamentally different approach to computation. This isn’t simply about speed, but a dramatic reduction in power consumption and physical footprint. Ni explains that traditional computers “encode data into binary 1s and 0s and perform operations with electronic circuits,” an approach that “consumes significant energy and generates a lot of heat.” The Penn State device, described in a Science Advances paper published February 11, bypasses this limitation by encoding data directly into light beams.

A key innovation lies in the system’s architecture, which uses a looping design, described as an “infinity mirror,” to build up nonlinear computational effects. Previous optical computing attempts often faltered because they struggled with the complex, nonlinear calculations essential for advanced AI. The team’s solution avoids costly and power-hungry specialized materials, instead leveraging readily available components found in LCD displays and LED lights. By arranging these elements in a multi-pass loop, the system effectively “builds up” the necessary nonlinear behavior. This compact design addresses a critical bottleneck in current AI infrastructure, where data centers are increasingly burdened by energy costs and heat generation.

Future Goals: Programmable, Scalable Optical AI Modules

The immediate future of artificial intelligence hinges on overcoming a critical limitation: energy consumption. Penn State’s recent advances in optical computing aren’t simply about faster processing, but about fundamentally reshaping the energy demands of AI systems, potentially unlocking widespread deployment in previously constrained environments. Xingjie Ni and his team are now focused on transforming their prototype into a practical, deployable module, aiming for a system that is both programmable and scalable. “Going forward, our goal is to turn this proof of concept into an optical computing module that is programmable, robust and ready to deploy,” Ni explained.

This next phase prioritizes developer control, moving beyond a fixed nonlinear response to allow for task-specific tuning. The team envisions a compact unit capable of integrating directly into existing computing platforms, offloading intensive calculations to the optical core while conventional electronics manage control, memory, and overall system flexibility. “Conventional electronics would handle general control, memory and flexibility, while the compact optical module takes on specific, high-volume computations that drive much of AI’s cost and energy use,” Ni stated, describing an approach that complements conventional electronics rather than replacing them.

Scaling up to handle larger, more realistic workloads is also a key objective, paving the way for broader application. The potential impact extends beyond mere efficiency gains. By reducing the size and power requirements, this technology could decentralize AI processing, embedding intelligence directly into devices like cameras, sensors, and robotics. This shift would not only enable real-time responsiveness but also enhance data privacy by minimizing reliance on cloud connectivity. Ultimately, the team hopes to “power AI models with smaller, faster and more sustainable hardware,” addressing a growing concern within the industry and beyond.
